One GPU, Zero Labels: Forge a Domain-Specific Embedding Model Overnight

A lone H100 GPU whirs in the corner, churning your docs into a razor-sharp search brain. No data labeling drudgery, no massive clusters, just a domain-tuned embedding model in under a day.



Your next AI won't just chat—it'll hijack your desktop, editing docs, surfing sites, all while unknown startups erect datacenters the size of power plants. Buckle up.

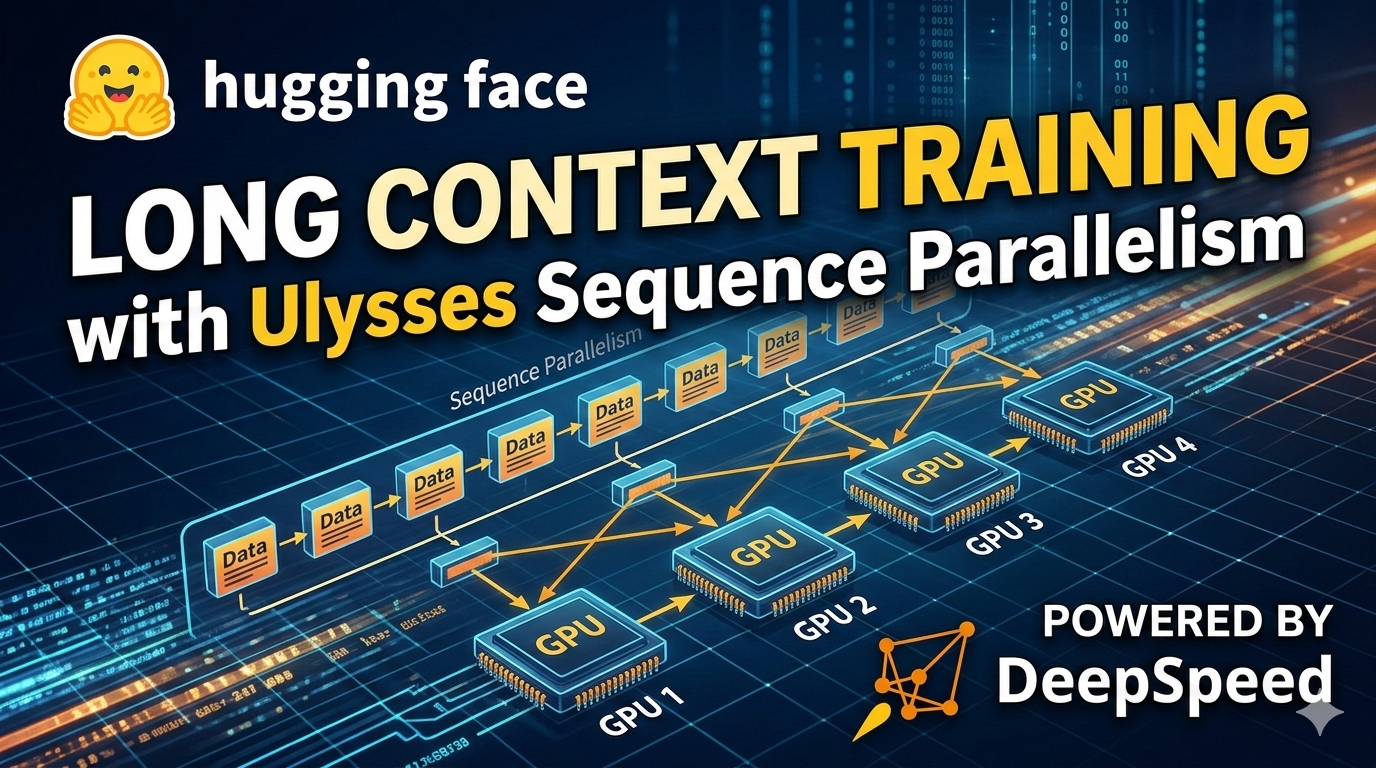

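FlashAttention tamed the memory beast for long sequences, cutting attention's memory from quadratic to linear in sequence length, but compute still grows quadratically. Enter Ulysses Sequence Parallelism: split the sequence across GPUs, use all-to-all exchanges so each GPU computes full-sequence attention for a subset of heads, and train on million-token sequences without melting your cluster.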
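To make the head-sharding idea concrete, here is a minimal sketch, assuming a DeepSpeed-Ulysses-style design: activations start split along the sequence, and an all-to-all exchange regroups them so each GPU holds the whole sequence for a subset of attention heads. Plain Python sets of `(token, head)` pairs stand in for per-GPU tensors, and the function names are hypothetical; no real communication library is involved.

```python
# Simulation of the Ulysses-style sequence-to-head layout swap.
# Assumption: each "rank" is a set of (token, head) pairs, not a real tensor.

def shard_by_sequence(num_tokens, num_heads, world_size):
    """Before attention: rank r holds a contiguous token slice, all heads."""
    if num_tokens % world_size or num_heads % world_size:
        raise ValueError("tokens and heads must divide evenly across ranks")
    chunk = num_tokens // world_size
    return [
        {(t, h) for t in range(r * chunk, (r + 1) * chunk) for h in range(num_heads)}
        for r in range(world_size)
    ]


def all_to_all_seq_to_heads(shards, num_heads):
    """Simulated all-to-all: each rank sends every peer the heads that peer owns.

    Afterwards rank r holds every token, but only heads
    [r * H / P, (r + 1) * H / P), which is enough to run full-sequence
    attention for those heads locally.
    """
    world_size = len(shards)
    heads_per_rank = num_heads // world_size
    out = [set() for _ in range(world_size)]
    for src in range(world_size):
        for t, h in shards[src]:
            dst = h // heads_per_rank  # rank that owns head h after the swap
            out[dst].add((t, h))
    return out


shards = shard_by_sequence(num_tokens=8, num_heads=4, world_size=2)
swapped = all_to_all_seq_to_heads(shards, num_heads=4)
for rank, part in enumerate(swapped):
    tokens = sorted({t for t, _ in part})
    heads = sorted({h for _, h in part})
    print(f"rank {rank}: tokens {tokens}, heads {heads}")
```

With 8 tokens, 4 heads and 2 GPUs, each rank holds 16 `(token, head)` pairs both before and after the swap, so per-GPU memory stays flat. Afterwards, though, rank 0 owns heads 0 and 1 across all 8 tokens. The inverse all-to-all would restore the sequence split for the layers that follow attention.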

Forget plodding multimodal models. H Company's Holotron-12B just doubled throughput for computer-use agents on a single H100, hitting 80.5% on WebVoyager. This isn't hype—it's a production breakthrough.

Raccoons fled Fab 9 when Intel poured billions into advanced chip packaging. Now Intel is gunning for AI riches against TSMC.

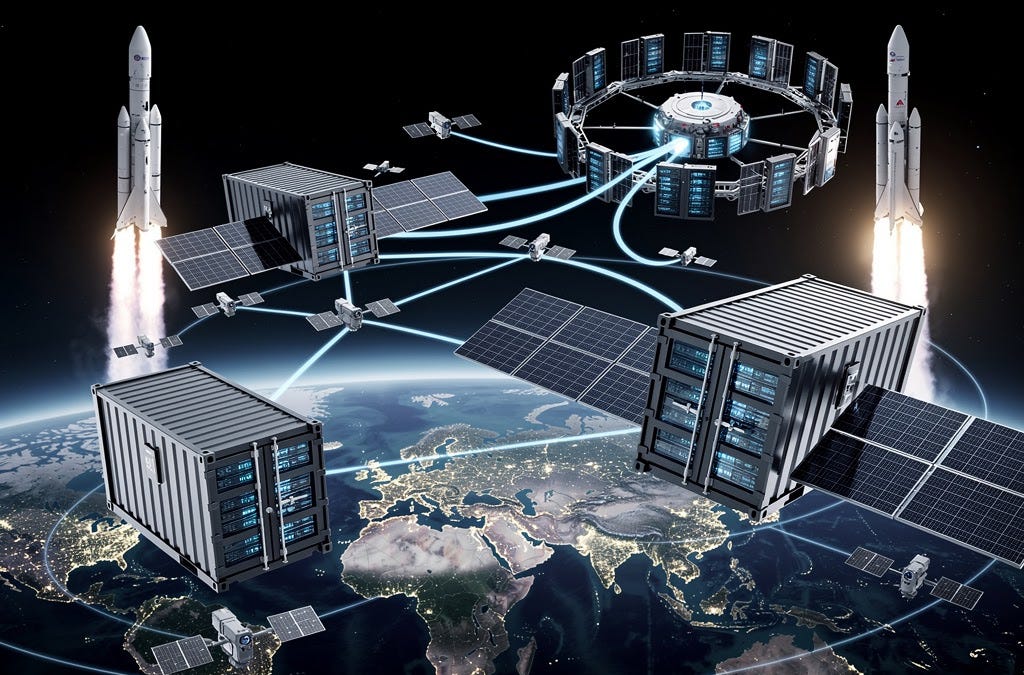

AI's insatiable hunger for power is crashing against Earth's grid limits. Space—orbital datacenters, lunar factories, solar arrays—might be the radical fix no one's fully pricing in.

Barely 30 days after Apple unveiled the M5 MacBook Air, Amazon is slashing it to $949. That's not a glitch. It's a signal.

AI was barreling ahead on GPUs alone. Now quantum computing is creeping in, with backers promising to evaluate millions of parameters at once. If that holds up, the race shifts.

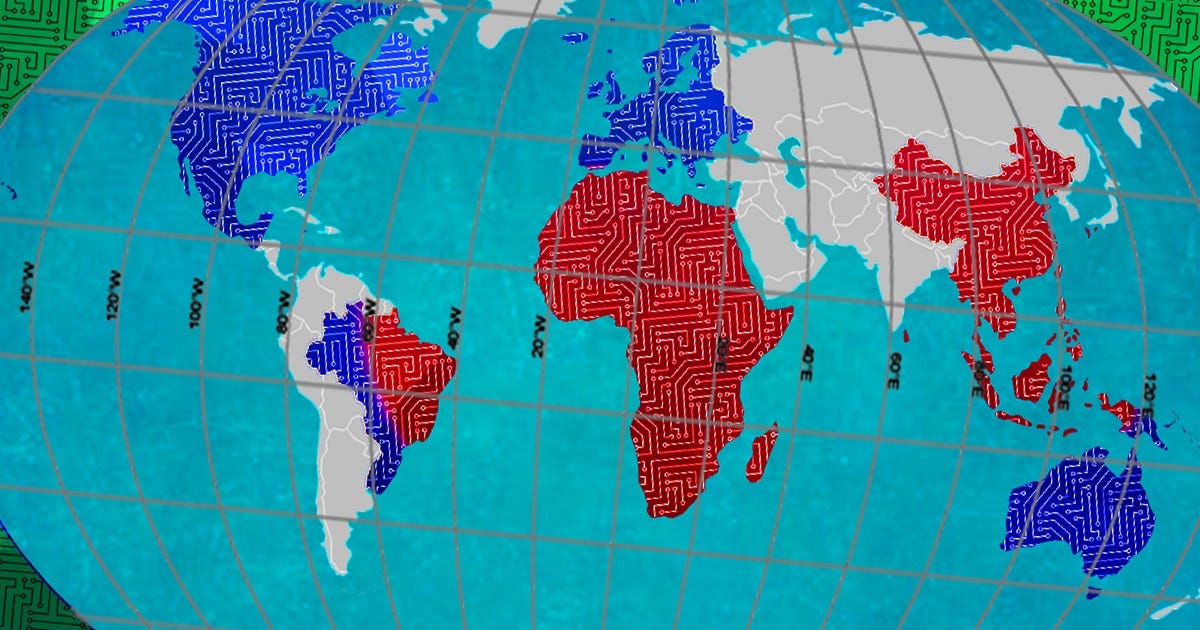

Forget algorithms: what if party balloon gas decides the AI winners? Geopolitical chokepoints in helium and high-bandwidth memory supply threaten to strangle datacenter growth.

Edge AI moves machine learning from the cloud to local devices, enabling faster, more private, and more reliable AI applications across industries.

A detailed comparison of NVIDIA's H100 and A100 GPUs, covering performance benchmarks, architectural differences, memory specifications, and cost considerations for AI workloads.