For most of the past decade, the dominant paradigm for AI applications has been cloud-centric: data is collected at the edge, transmitted to powerful servers in remote data centers, processed by large models, and the results are sent back. This approach works well when latency is tolerable, connectivity is reliable, and data privacy is not a primary concern. But a growing number of real-world applications cannot rely on all three of those assumptions. Edge AI, which runs machine learning models directly on local devices, is emerging as a practical answer.

What Is Edge AI?

Edge AI refers to the deployment and execution of artificial intelligence algorithms on hardware devices at the periphery of a network, rather than in centralized cloud servers. The "edge" can be a smartphone, a security camera, a factory sensor, an autonomous vehicle, a medical device, or any computing device that operates at the point where data is generated.

When AI runs at the edge, data is processed locally without round-trip communication to the cloud. This fundamental shift in where computation happens enables a new category of applications that demand real-time responses, operate in connectivity-constrained environments, or handle data too sensitive to transmit over networks.

Why Move AI to the Edge?

Latency Reduction

Cloud-based AI inference introduces latency from data transmission, network congestion, and server queue times. For applications like autonomous driving, industrial safety systems, or augmented reality, even tens of milliseconds of delay can be unacceptable. Edge AI removes the network round trip from the inference path, so response time depends only on the local hardware and the model; a well-optimized model on a dedicated accelerator can respond in single-digit milliseconds. A self-driving car cannot wait for a cloud server to decide whether an object in the road is a pedestrian or a shadow.
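The latency argument can be made concrete with a simple budget that sums each component of a request. The sketch below is only illustrative: the `LatencyBudget` class, the `meets_deadline` helper, and every number in it are assumptions chosen for the example, not measurements.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Per-request latency components, all in milliseconds."""
    capture_ms: float     # sensor read and preprocessing on the device
    uplink_ms: float      # sending the input to a remote server
    queue_ms: float       # waiting for a free server worker
    inference_ms: float   # model execution
    downlink_ms: float    # returning the result to the device

    def total_ms(self) -> float:
        return (self.capture_ms + self.uplink_ms + self.queue_ms
                + self.inference_ms + self.downlink_ms)


def meets_deadline(budget: LatencyBudget, deadline_ms: float) -> bool:
    """True if the end-to-end latency fits inside the deadline."""
    return budget.total_ms() <= deadline_ms


# Hypothetical numbers; measure your own system before relying on them.
cloud = LatencyBudget(capture_ms=2.0, uplink_ms=25.0, queue_ms=10.0,
                      inference_ms=5.0, downlink_ms=25.0)
# On-device inference has no network or queue terms, but the smaller local
# accelerator is assumed to run the model more slowly than a server would.
edge = LatencyBudget(capture_ms=2.0, uplink_ms=0.0, queue_ms=0.0,
                     inference_ms=8.0, downlink_ms=0.0)

print(f"cloud: {cloud.total_ms():.1f} ms, edge: {edge.total_ms():.1f} ms")
print("edge meets a 20 ms deadline:", meets_deadline(edge, 20.0))
```

Under these assumed figures the network terms dominate the cloud path, which is why a slower local model can still win on end-to-end latency.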

Privacy and Data Sovereignty

Edge AI keeps sensitive data on the device where it was generated. Medical imaging data can be analyzed without leaving the hospital. Facial recognition can operate on a smartphone without biometric data ever traversing a network. Voice assistants can process commands locally without recording conversations to cloud servers. This architecture aligns with increasingly strict data protection regulations like GDPR and provides users with greater control over their personal information.

Bandwidth and Cost Efficiency

Transmitting raw sensor data to the cloud is expensive and bandwidth-intensive. A single autonomous vehicle generates terabytes of sensor data per day. A factory floor with hundreds of IoT sensors produces continuous high-volume data streams. Processing this data locally and transmitting only actionable insights dramatically reduces bandwidth requirements and cloud computing costs.
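A rough calculation shows the scale of the savings. The sketch below compares one uncompressed 1080p camera stream with a stream of small detection events; the resolution, frame rate, event rate, and event size are all assumed values for illustration, and real deployments would usually compress video before sending it.

```python
SECONDS_PER_DAY = 86_400


def raw_video_bytes_per_day(width: int, height: int,
                            bytes_per_pixel: int, fps: int) -> int:
    """Bytes produced per day by one uncompressed video stream."""
    return width * height * bytes_per_pixel * fps * SECONDS_PER_DAY


def event_bytes_per_day(events_per_hour: int, bytes_per_event: int) -> int:
    """Bytes sent per day when only detection events leave the device."""
    return events_per_hour * 24 * bytes_per_event


# Assumed camera: 1920x1080, 3 bytes per pixel (RGB), 30 frames per second.
raw = raw_video_bytes_per_day(1920, 1080, 3, 30)
# Assumed workload: 120 events per hour, 512 bytes of metadata per event.
events = event_bytes_per_day(120, 512)

print(f"raw video: {raw / 1e12:.1f} TB/day")
print(f"events only: {events / 1e6:.2f} MB/day")
print(f"reduction factor: {raw / events:,.0f}x")
```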

Reliability and Offline Operation

Cloud-dependent AI systems fail when connectivity is lost. Edge AI continues operating regardless of network status. This is critical for applications in remote locations, military environments, aircraft, ships, and any scenario where connectivity is intermittent or unavailable.
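One common way to get this behavior is a local-first design: inference always runs on the device, and results are buffered until a link is available. The sketch below assumes a hypothetical `EdgeNode` class, with the model, the `send` function, and the `is_connected` check supplied by the caller as plain callables.

```python
from collections import deque


class EdgeNode:
    """Runs inference locally and buffers results for later upload."""

    def __init__(self, model, max_buffered=1000):
        self.model = model
        # Bounded buffer: when full, the oldest result is dropped.
        self.outbox = deque(maxlen=max_buffered)

    def handle(self, sample):
        """Run local inference; this path never touches the network."""
        result = self.model(sample)
        self.outbox.append(result)
        return result

    def flush(self, send, is_connected):
        """Upload buffered results while a connection is available."""
        sent = 0
        while self.outbox and is_connected():
            send(self.outbox[0])
            self.outbox.popleft()  # remove only after a successful send
            sent += 1
        return sent


# Usage with stand-in callables: a toy "model" and a list as the uplink.
uploaded = []
node = EdgeNode(model=lambda x: x * 2)
node.handle(3)
node.handle(5)
node.flush(uploaded.append, is_connected=lambda: False)  # offline: nothing sent
node.flush(uploaded.append, is_connected=lambda: True)   # online: buffer drains
```

Dropping the oldest entries when the buffer fills is a design choice; a system that must not lose results would persist them to storage instead.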

Key Hardware for Edge AI

Neural Processing Units (NPUs)

Modern smartphones and laptops increasingly include dedicated neural processing units: specialized silicon designed for the matrix multiplication operations that dominate neural network inference. Apple's Neural Engine, Qualcomm's AI Engine, and the TPU in Google's Tensor mobile chips all provide significant performance improvements over general-purpose CPUs for AI workloads while consuming far less power.

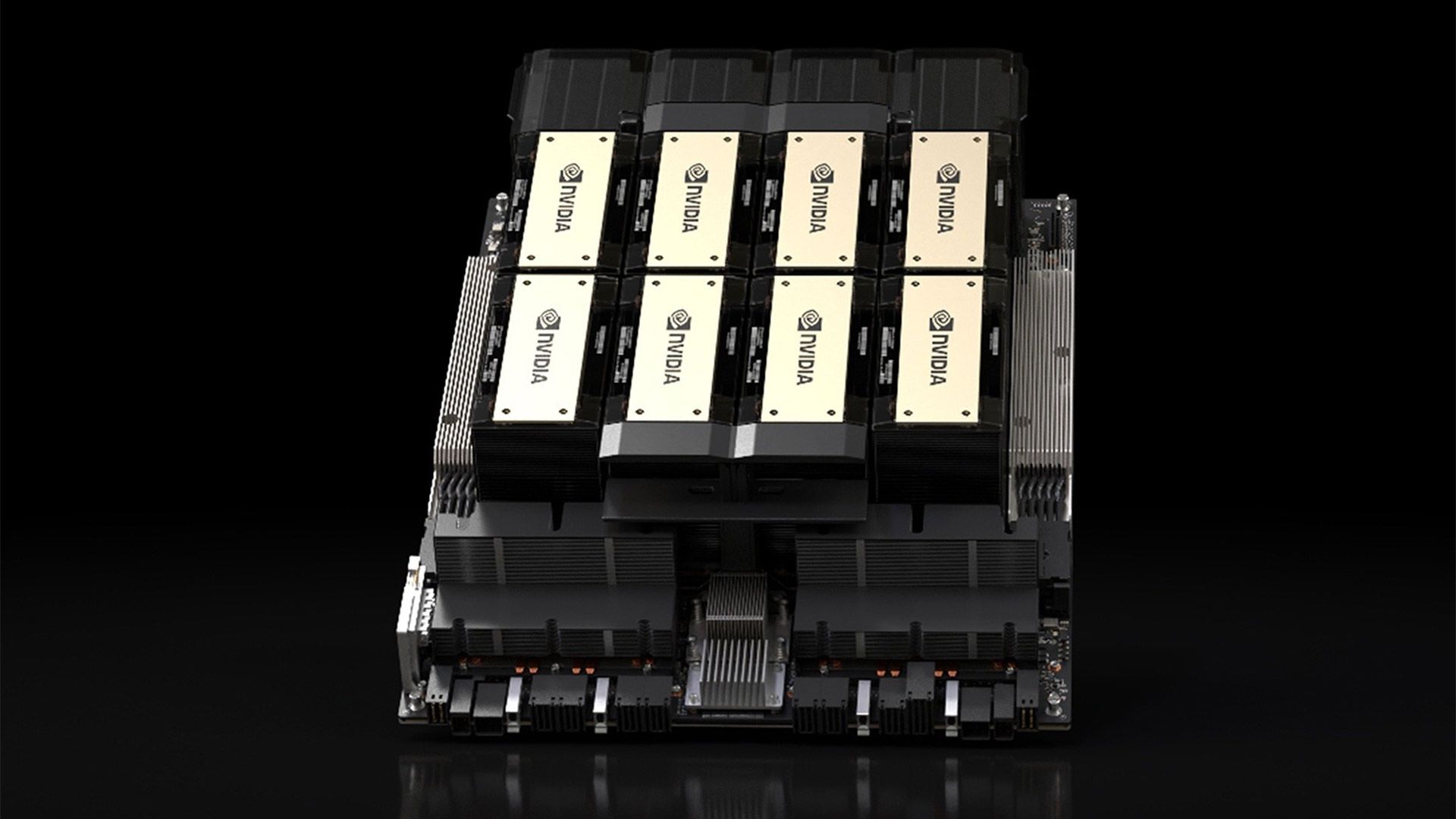

Edge AI Accelerators

Purpose-built edge AI hardware includes NVIDIA's Jetson platform for robotics and IoT, Google's Coral Edge TPU for embedded vision applications, and Intel's Movidius VPUs for computer vision at the edge. These devices offer a balance of performance, power efficiency, and cost that makes sophisticated AI practical in resource-constrained environments.

FPGAs and Custom ASICs

For applications with specific performance requirements, field-programmable gate arrays (FPGAs) and custom application-specific integrated circuits (ASICs) provide optimized inference performance. FPGAs can be reprogrammed after deployment, which suits lower volumes and evolving workloads. ASICs carry high up-front development costs but offer the highest efficiency for fixed workloads once those costs are spread over high volumes. This makes both well suited to network equipment, automotive systems, and industrial controllers.

Software Frameworks for Edge Deployment

Deploying AI models to edge devices requires specialized software frameworks that optimize models for resource-constrained hardware. TensorFlow Lite (now distributed as LiteRT) and ONNX Runtime provide optimized runtimes, together with conversion and optimization tooling, for mobile and embedded devices. PyTorch Mobile and its successor ExecuTorch extend the PyTorch ecosystem to edge deployment. Apache TVM compiles models to optimized code for diverse hardware targets. NVIDIA TensorRT optimizes inference specifically for NVIDIA hardware across cloud and edge.

These frameworks employ techniques including model quantization (reducing numerical precision from 32-bit to 8-bit or lower), pruning (removing unnecessary network connections), and knowledge distillation (training smaller models to mimic larger ones) to shrink models while preserving most of their accuracy.
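Of the three techniques, knowledge distillation is the one most easily summarized as a loss function. The sketch below shows one common formulation, assumed here for illustration: the KL divergence between temperature-softened teacher and student outputs, scaled by the square of the temperature. The function names and the default temperature are choices made for this example, not part of any framework's API.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax over logits divided by a temperature (higher = softer)."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * (math.log(pi) - math.log(qi))
             for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Hypothetical logits for a three-class problem.
teacher = [0.5, 2.0, 4.0]
student = [1.0, 2.0, 3.0]
print("loss vs. teacher:", distillation_loss(student, teacher))
print("loss when outputs match:", distillation_loss(teacher, teacher))
```

In training, this term is typically combined with the ordinary cross-entropy loss on the true labels, so the student learns from both the data and the teacher.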

Model Optimization for Edge Deployment

Quantization

Quantization reduces the precision of model weights and activations from 32-bit floating point to 8-bit integers or even lower. Moving from 32-bit to 8-bit values cuts model size roughly fourfold, and lower bit widths shrink it further, usually while retaining most of the original accuracy. Post-training quantization requires no retraining and can be applied to existing models, while quantization-aware training simulates reduced precision during training and generally recovers more accuracy.
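To make the idea concrete, here is a minimal sketch of post-training affine quantization to 8-bit integers in plain Python. It assumes a single scale and zero point for the whole tensor; production frameworks typically add per-channel scales and calibration data, and the function names here are illustrative only.

```python
def quantize_int8(values):
    """Map floats to int8 codes using one affine (scale, zero_point) pair."""
    # The representable range must include 0.0 so zero maps to an exact code.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0
    if scale == 0.0:
        scale = 1.0  # all-zero input: any positive scale works
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point))
             for v in values]
    return codes, scale, zero_point


def dequantize_int8(codes, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


# Hypothetical weights; each float32 (4 bytes) becomes one int8 (1 byte).
weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(codes, scale, zero_point)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("codes:", codes)
print("max round-trip error:", max_error, "with step size", scale)
```

The round-trip error is bounded by the step size `scale`, which is why tensors with a narrow value range quantize with little accuracy loss.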

Pruning and Compression

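Neural network pruning removes weights or entire neurons that contribute minimally to model accuracy. Unstructured pruning zeroes individual weights, which shrinks the model once it is stored in a sparse or compressed format but speeds up inference only on hardware that exploits sparsity. Structured pruning removes entire channels or layers, producing models that are directly smaller and faster. Combined with compression techniques like weight sharing, pruning has been reported to reduce model size by 10 times or more with minimal accuracy loss.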
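The two granularities of pruning can be sketched in a few lines. The example below assumes magnitude as the importance criterion, which is a common heuristic but not the only one; the function names and sample values are illustrative.

```python
def magnitude_prune(weights, sparsity):
    """Zero the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from least to most important by absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


def prune_channels(channels, keep):
    """Structured pruning: keep the `keep` channels with the largest L1 norm."""
    ranked = sorted(range(len(channels)),
                    key=lambda i: sum(abs(w) for w in channels[i]),
                    reverse=True)
    kept = sorted(ranked[:keep])  # preserve the original channel order
    return [channels[i] for i in kept]


# Individual weights: half of them are zeroed, the list length is unchanged.
sparse = magnitude_prune([0.9, -0.05, 0.4, 0.01, -0.7, 0.02], sparsity=0.5)
# Whole channels: the weakest channel is dropped, so the layer really shrinks.
smaller = prune_channels([[0.1, -0.1], [1.0, 2.0], [0.5, 0.5]], keep=2)
print(sparse)
print(smaller)
```

In practice pruning is usually followed by a few epochs of fine-tuning so the remaining weights can compensate for what was removed.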

Architecture Design

Some model architectures are designed from the ground up for edge deployment. MobileNet and EfficientNet use depthwise separable convolutions and inverted residual blocks, while SqueezeNet uses "fire" modules that squeeze channels with 1x1 convolutions before expanding them. All three achieve strong performance with dramatically fewer parameters than standard architectures.
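The parameter savings from a depthwise separable convolution follow directly from counting weights. The sketch below compares it with a standard convolution for one layer; biases are ignored and the layer dimensions are assumed values picked for illustration.

```python
def standard_conv_params(kernel: int, c_in: int, c_out: int) -> int:
    """Weights in a standard convolution: every filter spans all inputs."""
    return kernel * kernel * c_in * c_out


def depthwise_separable_params(kernel: int, c_in: int, c_out: int) -> int:
    """Weights in a depthwise conv followed by a 1x1 pointwise conv."""
    depthwise = kernel * kernel * c_in  # one spatial filter per input channel
    pointwise = c_in * c_out            # 1x1 conv that mixes the channels
    return depthwise + pointwise


# Assumed layer: 3x3 kernel, 128 input channels, 256 output channels.
standard = standard_conv_params(3, 128, 256)
separable = depthwise_separable_params(3, 128, 256)
print(f"standard: {standard:,} weights")
print(f"depthwise separable: {separable:,} weights")
print(f"reduction: {standard / separable:.1f}x")
```

For a 3x3 kernel the reduction approaches 9x as the number of output channels grows, since the pointwise term then dominates the separable count.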

Applications Across Industries

Edge AI is already deployed across numerous sectors. In manufacturing, vision systems on production lines detect defects in real time without cloud dependency. In healthcare, portable diagnostic devices analyze medical images at the point of care. In agriculture, drones with onboard AI identify crop diseases and optimize irrigation. In retail, smart cameras analyze foot traffic and shelf inventory without transmitting video to the cloud. In automotive, advanced driver assistance systems process sensor data locally to make split-second safety decisions.

Challenges and Trade-offs

Edge AI involves real trade-offs. Model accuracy often decreases with optimization — smaller, faster models are typically less capable than their cloud-based counterparts. Hardware constraints limit the complexity of models that can run at the edge. Updating deployed models across thousands or millions of devices presents significant operational challenges. Power consumption, thermal management, and physical durability add engineering complexity.

Despite these challenges, the trajectory is clear: AI inference is moving closer to where data is generated. As edge hardware grows more powerful and optimization techniques improve, the gap between cloud and edge AI capability will continue to narrow, enabling an expanding universe of intelligent, responsive, and private applications.