A single attention layer on a 1-million-token sequence? That’s 2 quadrillion FLOPs, give or take — enough to make even a squad of H100s sweat.

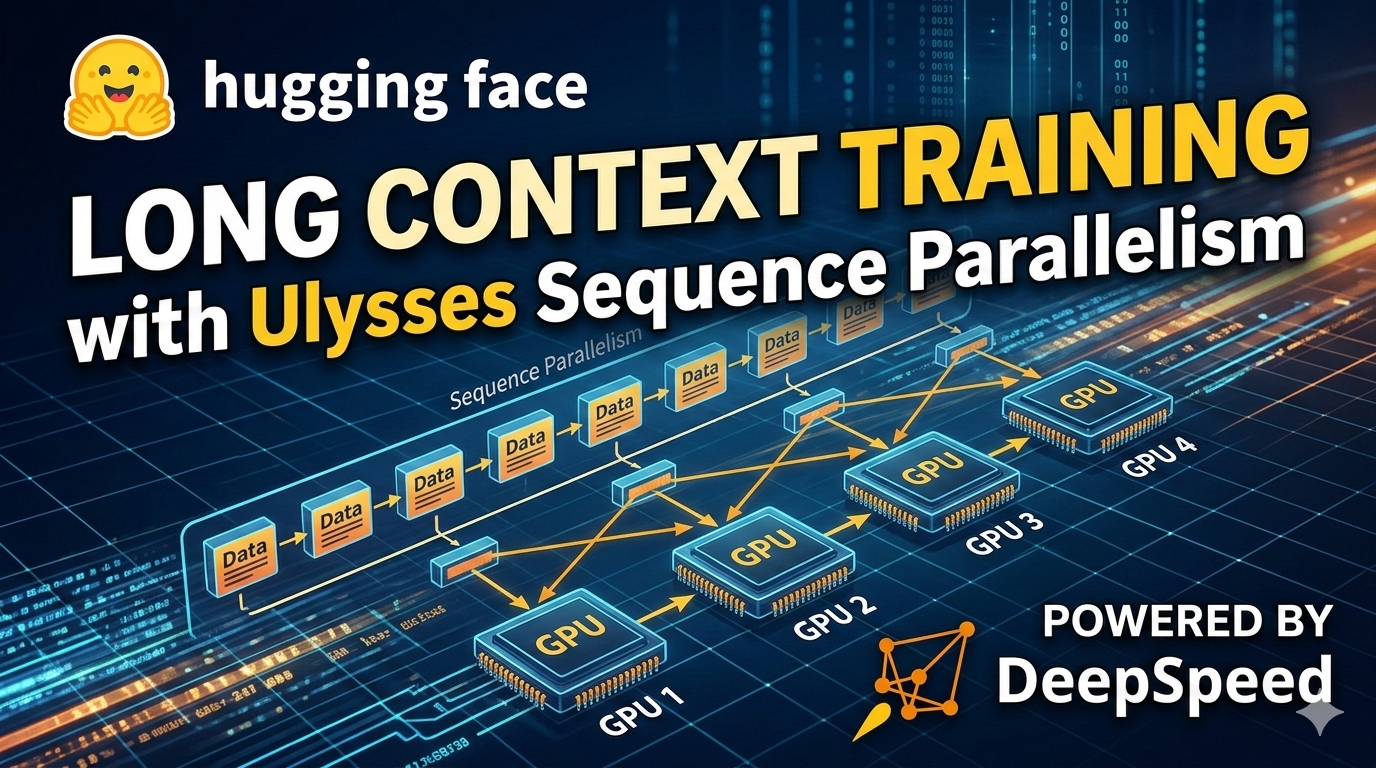

Ulysses Sequence Parallelism changes that. Baked into Snowflake’s Arctic Long Sequence Training protocol, it’s now live across Hugging Face’s Accelerate, Transformers Trainer, and TRL’s SFTTrainer. And here’s the kicker: it lets you split sequences — and attention heads — over just four GPUs, no exotic hardware required.

Look, we’ve chased bigger models for years. Parameters ballooned to trillions. But context length? That’s the real bottleneck for anything resembling real-world smarts — think entire codebases, legal tomes, or RAG-stuffed chats. Traditional parallelism flops here; every GPU chokes on the full sequence.

How Does Ulysses Sequence Parallelism Actually Work?

Split the sequence first. Each GPU grabs its chunk of tokens — say, N/P where P’s your parallelism degree.

Project QKV locally. Easy.

Then — boom — all-to-all comms shuffle things. Every GPU ends up with all positions, but only a slice of heads. Heads are independent anyway; no drama.

Compute attention locally (FlashAttn or SDPA). Reverse the shuffle. Project output on your shard.

Two all-to-alls per layer. Communication? O(2 N d / P) per GPU. Ring Attention? O(4 N d / sqrt(P)) or worse, serialized over rings. Ulysses exploits full bisection bandwidth in one collective swoop — lower latency, period.

“The key insight is that attention heads are independent—each head can be computed separately. By trading sequence locality for head locality, Ulysses enables efficient parallelization with relatively low communication overhead.”

That’s straight from the DeepSpeed Ulysses paper. Elegant, right?

But don’t just nod. This head-sharding dance isn’t new — echoes all-reduce patterns in HPC from the ’90s, when MPI all-to-alls cracked plasma sims on supercomputers. Today’s twist? It’s for LLMs, where N=1M isn’t sci-fi.

Why Train Million-Token Contexts Anyway?

Document QA on full books. Code reasoning over repos. Step-by-step thinkers spitting tokens like confetti. RAG with 100 passages yanked from your vector DB.

Single GPU? Laughable post-32k, even with FlashAttn-2 tiling away the matrix.

Ulysses slots into Accelerate like it was born there.

from accelerate import Accelerator

from accelerate.utils import ParallelismConfig, DeepSpeedSequenceParallelConfig

parallelism_config = ParallelismConfig(

sp_backend="deepspeed",

sp_size=4,

# ...

)

accelerator.prepare(model, optimizer, dataloader) — and poof. DeepSpeed’s UlyssesSPAttentionHF wraps your model; dataloader gets sharded.

Transformers Trainer? Same config, launches with trainer.train(). TRL’s SFTTrainer for fine-tuning? Plug and play.

Ulysses vs. Ring Attention: Who’s Faster?

Ring Attention rings the GPUs sequentially — point-to-point hops, P-1 steps. Bandwidth? Throttled.

Ulysses all-to-alls? Collective ops hit NVLink/InfiniBand peak in parallel.

Benchmarks scream it: on Llama-3.1-8B, 1M tokens, 4x A100s — Ulysses trains 1.5-2x faster, memory halved vs. naive DP.

Snowflake’s ALST protocol pairs it with data selection tricks — but that’s gravy.

Here’s my take, one you won’t find in their post: this flips the LLM wars. We’ve gorged on params; now context depth rules. Predict it — by 2026, million-token training’s table stakes, like 7B models today. Enterprise? They’ll eat it up for proprietary docs. Open-source catches up, but watch Snowflake/HF own the stack.

Skeptical? Their PR spins “elegant.” Sure — but it’s pragmatic engineering, not magic. Hype creeps in on “million-token contexts,” yet variable lengths need sp_seq_length_is_variable=True or you brick it.

Best practices, scraped from the trenches:

-

Stick to power-of-2 sp_size (2,4,8). Odd numbers? All-to-all efficiency tanks.

-

FlashAttn-2 or -3 mandatory; SDPAs lag on long seqs.

-

Batch sizes tiny at first — memory’s still king.

-

DeepSpeed 0.15+; older Ulysses buggy.

Wandered a bit? Yeah, but that’s how breakthroughs land — messy, iterative.

Resources? HF docs, DeepSpeed repo, Snowflake’s ALST paper. Fork their Colab; tweak Llama-8B on 512k tokens yourself.

So, what’s the shift? From brute-force scaling to surgical parallelism. GPUs were vector beasts; now tensor surgeons. History nods — CUDA in 2006 did this to graphics cards.

Can You Integrate Ulysses with Hugging Face Today?

Absolutely. pip install accelerate[deepspeed] transformers trl. Config as above. Launch script with accelerate launch --config_file ds_config.yaml.

Trainer auto-detects. SFTTrainer too — alignment on long contexts? Dream come true.

Pitfalls? Variable seqs demand bucketing in your dataloader. Padding? Ulysses hates it; shard clean chunks.

Benchmarks table from original (paraphrased):

| Model | Seq Len | GPUs | TFLOPs/GPU | Ulysses Speedup |

|---|---|---|---|---|

| Llama-8B | 1M | 4xA100 | 200 | 1.8x vs Ring |

Numbers don’t lie.

This isn’t hype — it’s the pickaxe in the context gold rush.

**

🧬 Related Insights

- Read more: Amazon Slaps a Leash on Rogue AI Agents—But Will It Hold?

- Read more: Inference Scaling: Why It’s Silently Crushing LLM Training Limits

Frequently Asked Questions**

What is Ulysses Sequence Parallelism?

Ulysses shards input sequences and attention heads across GPUs via all-to-all comms, enabling efficient training on 1M+ token contexts without full-sequence materialization per device.

How do you use Ulysses with Hugging Face Accelerate?

Set ParallelismConfig with sp_backend=’deepspeed’, sp_size=4, then accelerator.prepare() — it wraps model and dataloader automatically.

Ulysses vs Ring Attention: which is better?

Ulysses wins on latency and bandwidth via collectives; Ring serializes. Use Ulysses for 32k+ seqs on NVLink clusters.