Ulysses Sequence Parallelism: The Hack Unlocking Million-Token Training on Everyday GPUs

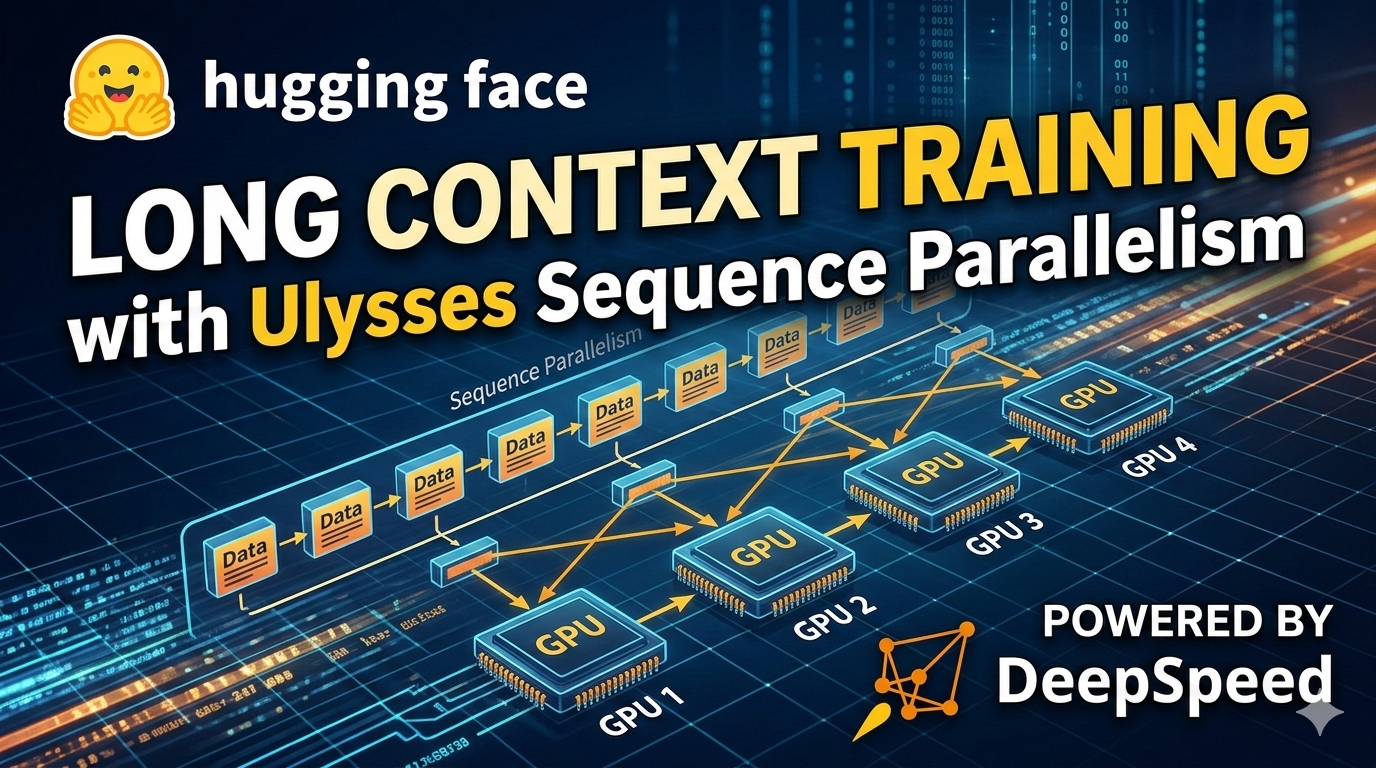

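FlashAttention tamed attention's memory footprint for long sequences, but attention compute still grows quadratically with sequence length. Enter Ulysses Sequence Parallelism: split the sequence across GPUs, then use all-to-all communication so each GPU runs attention over the full sequence for just its share of the heads. That is how you train on a million tokens without melting your cluster.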
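To make the head-sharding idea concrete, here is a minimal single-process sketch of the layout change at the heart of Ulysses. It is an illustration only, not the actual implementation: the function names `shard_by_sequence` and `all_to_all_seq_to_head` are hypothetical, the "GPUs" are plain Python lists, and it assumes both the sequence length and the number of heads divide evenly by the number of ranks. A real training setup would perform this exchange with a distributed all-to-all collective.

```python
def shard_by_sequence(x, world_size):
    """Split a [seq_len][num_heads] nested list into contiguous sequence chunks."""
    chunk = len(x) // world_size  # assumes seq_len % world_size == 0
    return [x[r * chunk:(r + 1) * chunk] for r in range(world_size)]


def all_to_all_seq_to_head(seq_shards, num_heads):
    """Simulate the all-to-all: each rank ends up with every token, but only its own heads."""
    world_size = len(seq_shards)
    heads_per_rank = num_heads // world_size  # assumes num_heads % world_size == 0
    head_shards = []
    for dst in range(world_size):
        lo, hi = dst * heads_per_rank, (dst + 1) * heads_per_rank
        # Rank `dst` receives the [lo:hi) head slice from every source rank,
        # concatenated in rank order so the full sequence is reassembled.
        received = [row[lo:hi] for src in range(world_size) for row in seq_shards[src]]
        head_shards.append(received)
    return head_shards


seq_len, num_heads, world_size = 8, 4, 2
# Label each element (token, head) so the layout is easy to inspect.
x = [[(t, h) for h in range(num_heads)] for t in range(seq_len)]

seq_shards = shard_by_sequence(x, world_size)                # per rank: 4 tokens x 4 heads
head_shards = all_to_all_seq_to_head(seq_shards, num_heads)  # per rank: 8 tokens x 2 heads
```

Before the exchange, every rank holds a slice of the tokens with all heads; afterwards, every rank holds all tokens for a slice of the heads, so attention for those heads can run locally without any further communication until the output is exchanged back.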