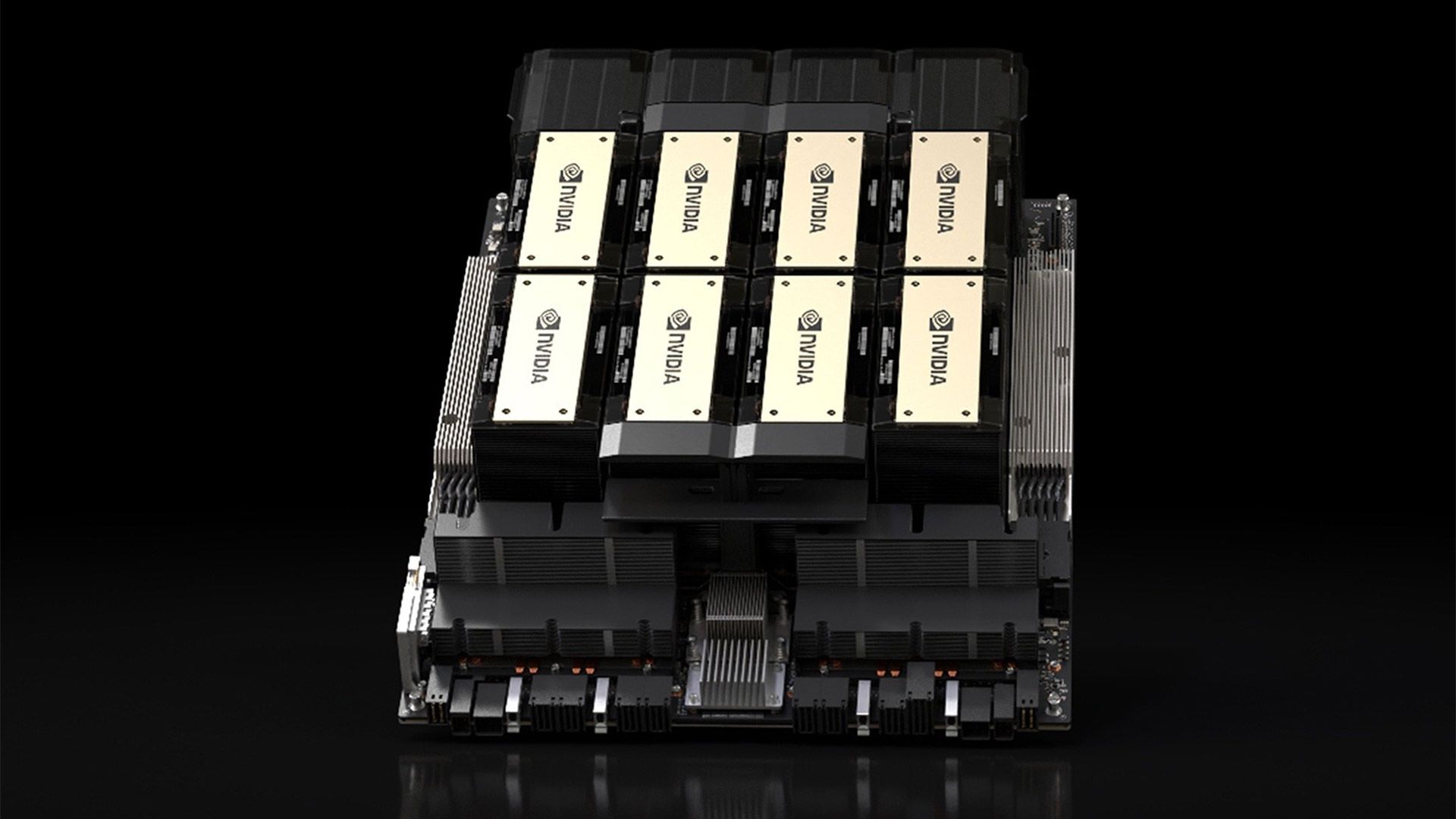

Spotlights flicker over a humming server rack in NVIDIA’s labs. One A100 GPU, a stack of your company’s docs, and boom—your domain-specific embedding model emerges, laser-focused on jargon only your team speaks.

And here’s the wild part. You don’t need armies of labelers or weeks of compute. NVIDIA’s dropped a recipe so slick that Atlassian’s team cranked their JIRA search Recall@60 from 0.751 to 0.951, a 26% relative leap, on a single card.

It’s like handing every dev a personal alchemy lab for search. Remember when PCs let hobbyists build their own software empires? This is that moment for embeddings. General models like those from OpenAI guess at your world; these bespoke beasts know it.

Why Chase Domain-Specific Embeddings?

Think of vanilla embeddings as a tourist in Tokyo—decent at spotting ramen shops, clueless on vending machine poetry. Dump in your niche (say, legal contracts or GPU specs), and suddenly it’s fluent.

NVIDIA’s pipeline starts with your text files—docs, markdown, whatever. No gold-standard pairs required. Their NeMo Data Designer conjures synthetic queries from thin air, using a beastly LLM (nemotron-3-nano-30b-a3b) to grill the corpus.

Run `nemotron embed sdg -c default corpus_dir=./data/my_domain_docs`, and out pop QA pairs. Simple facts. Multi-hop brain-teasers. Each scored for quality: relevance, accuracy, the works. Only the gems make the cut.
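Here's a rough sketch of what that "only the gems" gate might look like. It is not NeMo Data Designer's actual schema or API: the `QAPair` fields, the score names, and the 0.7 cutoff are all hypothetical, picked just to show the shape of a quality filter.

```python
from dataclasses import dataclass


@dataclass
class QAPair:
    query: str
    answer: str
    hops: int          # how many passages the question chains together
    relevance: float   # hypothetical judge score in [0, 1]
    accuracy: float    # hypothetical judge score in [0, 1]


def keep_high_quality(pairs: list[QAPair], min_score: float = 0.7) -> list[QAPair]:
    """Keep a pair only if every quality score clears the (assumed) threshold."""
    return [p for p in pairs if min(p.relevance, p.accuracy) >= min_score]


# Scores below are made up for illustration; answers are left as placeholders.
pairs = [
    QAPair("What cooling for 4+ H100s?", "...", hops=1, relevance=0.9, accuracy=0.95),
    QAPair("How does 700W TDP force liquid cooling in tight racks?", "...",
           hops=2, relevance=0.85, accuracy=0.8),
    QAPair("Is cooling important?", "...", hops=1, relevance=0.4, accuracy=0.9),
]

kept = keep_high_quality(pairs)
print([p.query for p in kept])
```

The vague third question gets dropped because one weak score is enough to fail the gate. Taking the minimum rather than the average is a design choice: it stops a high accuracy score from papering over a query nobody would actually ask.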

Look. A simple query is a straight pull: “What cooling for 4+ H100s?” The answer sits in a single passage.

But the magic’s in the multi-hop: “How does 700W TDP force liquid cooling in tight racks?” It chains facts into a causal argument. Queries span complexity levels 2-5 and 1-3 hops. Your model’s feasting on hard, realistic cases.

The Hard Negative Secret Sauce

Positives alone? Meh. Model learns easy wins, flops on tricksters—docs that seem relevant but aren’t.

Enter hard negative mining. `nemotron embed prep -c default` embeds everything with the base Llama-Nemotron-Embed-1B-v2 (1B params, speedy inference). Similarity scores fly. Mask the true positives. Grab the closest imposters.

Margin filter kicks in—only negatives within a hair’s breadth. Now contrastive training teaches discrimination. Boom. Recall@10 jumps 10%, NDCG@10 too, per NVIDIA’s tests.
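To make the mining step concrete, here's a minimal pure-Python sketch. It is an illustration, not the code behind `nemotron embed prep`: the function names are invented, and the margin rule (drop any candidate scoring above 95% of the weakest positive, on the theory that it's probably an unlabeled positive) is one common variant that I'm assuming, not something the recipe is confirmed to use.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def mine_hard_negatives(query_vec, doc_vecs, positive_ids, top_k=2, margin=0.95):
    """Return ids of the most query-similar docs that are not positives.

    Assumed margin rule: a candidate must score below
    margin * (lowest positive score), which filters out likely false negatives.
    """
    scores = {doc_id: cosine(query_vec, vec) for doc_id, vec in doc_vecs.items()}
    ceiling = margin * min(scores[doc_id] for doc_id in positive_ids)
    candidates = [
        (score, doc_id)
        for doc_id, score in scores.items()
        if doc_id not in positive_ids and score < ceiling  # mask positives, apply margin
    ]
    candidates.sort(reverse=True)  # hardest (most similar) first
    return [doc_id for _, doc_id in candidates[:top_k]]


# Toy 2-D vectors standing in for real embeddings.
query = [1.0, 0.0]
docs = {
    "pos": [0.9, 0.1],   # labeled positive
    "dup": [0.9, 0.1],   # near-duplicate of the positive, never labeled
    "near": [0.8, 0.6],  # looks related, isn't the answer
    "far": [0.0, 1.0],   # obviously unrelated
}
hard = mine_hard_negatives(query, docs, positive_ids={"pos"}, top_k=1)
print(hard)
```

With these toy vectors the near-duplicate is rejected by the margin and `near` wins the single slot, which is the behavior you want: an imposter that is close, but not so close it's secretly a positive.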

Atlassian applied this recipe to fine-tune on their JIRA dataset, increasing Recall@60 from 0.751 to 0.951, a 26% relative improvement, on a single GPU.
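Quick sanity check on that headline number, since "26%" can be read two ways. The only inputs are the two Recall@60 figures quoted above; the variable names are mine.

```python
before, after = 0.751, 0.951  # Recall@60 figures quoted above

absolute_gain = after - before            # gain in recall points
relative_gain = absolute_gain / before    # gain relative to the starting recall

print(f"absolute: {absolute_gain:.3f}, relative: {relative_gain:.1%}")
# prints "absolute: 0.200, relative: 26.6%"
```

So the 26% is a relative lift. In absolute terms it's 20 points of recall, which is arguably the more striking way to say it.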

That’s no lab toy. Real pipeline, real stakes.

And my hot take? This isn’t just a tool; it’s the Altair 8800 of retrieval. Before the Altair, computers were elite club gear. Then the kits hit garages and sparked the revolution. Now, one 80GB data-center GPU (an Ampere A100 or a Hopper H100) democratizes custom embeddings. Forget Snowflake’s $10k/mo RAG fees; spin your own in hours. Prediction: by 2025, 80% of enterprise search ditches off-the-shelf for these.

Can One GPU Really Do This?

Skeptical? The recipe was tested on a single A100 or H100 with at least 80GB of memory and CUDA compute capability 8.0 or higher. You’ll also need a free NVIDIA API key. NeMo Automodel handles the finetune: bi-encoder style, contrastive loss on those juicy pairs.
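If "contrastive loss" sounds abstract, here's a tiny sketch of one popular flavor, InfoNCE. The article only says "contrastive loss", so treating it as InfoNCE is my assumption, as are the 0.05 temperature and the function name. This is plain Python for readability, not how NeMo Automodel implements training.

```python
import math


def info_nce(query, positive, negatives, temperature=0.05):
    """Cross-entropy over similarity scores, with the positive as the target.

    Assumes all vectors are already L2-normalized, so a dot product
    equals cosine similarity.
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    logits = [dot(query, positive) / temperature]
    logits += [dot(query, neg) / temperature for neg in negatives]

    # log-sum-exp with the max subtracted for numerical stability
    peak = max(logits)
    log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_denominator - logits[0]


query = [1.0, 0.0]
positive = [1.0, 0.0]
easy_loss = info_nce(query, positive, negatives=[[0.0, 1.0]])  # unrelated doc
hard_loss = info_nce(query, positive, negatives=[[0.8, 0.6]])  # look-alike doc
print(easy_loss < hard_loss)
```

The easy negative contributes almost nothing to the loss, while the look-alike produces a real gradient signal. That's the whole argument for mining hard negatives in one comparison.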

Split the data 80/20 into train and test, then eval on the held-out 20% with BEIR-style retrieval metrics. Metrics scream improvement.
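For the curious, the two metrics quoted throughout this piece, Recall@k and NDCG@k, are simple enough to write by hand. This is a minimal sketch with binary relevance (a doc is either relevant or not) and no duplicate ids in the ranking; it is not the BEIR library's implementation, and the function names are mine.

```python
import math


def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant docs that show up in the top k results."""
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids)


def ndcg_at_k(ranked_ids, relevant_ids, k):
    """Binary-relevance NDCG: rewards putting relevant docs near the top."""
    dcg = sum(
        1.0 / math.log2(rank + 2)  # rank is 0-based, so position 1 gets log2(2)
        for rank, doc_id in enumerate(ranked_ids[:k])
        if doc_id in relevant_ids
    )
    ideal_hits = min(k, len(relevant_ids))
    idcg = sum(1.0 / math.log2(rank + 2) for rank in range(ideal_hits))
    return dcg / idcg if idcg else 0.0


ranked = ["d3", "d1", "d7", "d2"]   # model's ranking for one query
relevant = {"d1", "d2"}             # ground truth for that query

print(recall_at_k(ranked, relevant, k=3))
print(round(ndcg_at_k(ranked, relevant, k=3), 3))
```

Recall only asks whether the right docs made the cut. NDCG also cares where they landed, so a model can hold recall steady and still improve NDCG by pushing hits higher up the list.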

Then? NeMo Export-Deploy spits ONNX/TensorRT. Slap on NVIDIA NIM for prod serving. Pipeline locked.

But wait—NVIDIA’s gifting a synthetic dataset from their docs. Plug in, replicate the 10% lift. Or feed your own.

It’s stupidly accessible.

Here’s the whirlwind: chunk docs, LLM-generate pairs (stages: extract, query, score, filter), mine negatives, train the 1B model (under a day), eval, deploy.
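The first step in that whirlwind, chunking, is the least glamorous and the easiest to sketch. Below is a simple overlapping word-window splitter. The article doesn't say how the recipe chunks documents, so the strategy, the function name, and the default sizes are all assumptions for illustration.

```python
def chunk_words(text, size=200, overlap=40):
    """Split text into word windows of `size`, each sharing `overlap` words
    with the previous window so facts on a boundary aren't cut in half."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    # Stop once the remaining words are already covered by the previous window.
    last_start = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, last_start, step)]


chunks = chunk_words("a b c d e f g h i j", size=4, overlap=1)
print(chunks)
# prints ['a b c d', 'd e f g', 'g h i j']
```

Each chunk then becomes a candidate passage for query generation and, later, a candidate negative during mining.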

Energy drinks not included, but you’ll feel the rush.

Wander a sec—why stop at search? RAG boosters, recsys, clustering. Your domain’s playground.

NVIDIA spins this as ‘hit the ground running,’ but let’s call the hype: it’s not effortless if your docs suck. Garbage in, meh embeddings out. Still, the synthetic gen fixes the label drought brilliantly.

Why Does This Matter for Your Stack?

Devs, imagine JIRA queries nailing edge cases. Marketers, customer docs search that gets your lingo. Biotechs, papers retrieved sans noise.

Platform shift vibes. Embeddings were the moat—proprietary, cloud-locked. Now? Open the hood.

Base: Llama-Nemotron-Embed-1B-v2. Balances quality/cost. Finetune it, own the vector space.

Production? NIM serves at warp speed. TensorRT optimizes. No Kubernetes nightmares.

One caveat—80GB GPU gatekeeps solos. Colab? Nope. But DGX clouds rent H100s cheap.

Frequently Asked Questions

What is a domain-specific embedding model?

It’s a tuned vectorizer that groks your field’s quirks, outperforming general-purpose models on retrieval tasks like search or RAG.

How do you build a domain-specific embedding model with NVIDIA?

Grab docs, run NeMo Data Designer for synthetic QA, mine hard negatives, and finetune the 1B model on one A100/H100. Done in under a day.

Do you need labeled data for embedding fine-tuning?

Nope—NVIDIA’s pipeline synths high-quality pairs automatically, with multi-hop smarts and quality gates.