Developers scraping by on fragmented AI tools, wake up. NVIDIA’s quiet pivot to an AI operating system means your workflows just got streamlined—or shackled, depending on your boss’s budget. Enterprises pinching pennies on inference costs? They’ll save big if they buy into Jensen Huang’s vision. But indie hackers? Good luck competing without their stack.

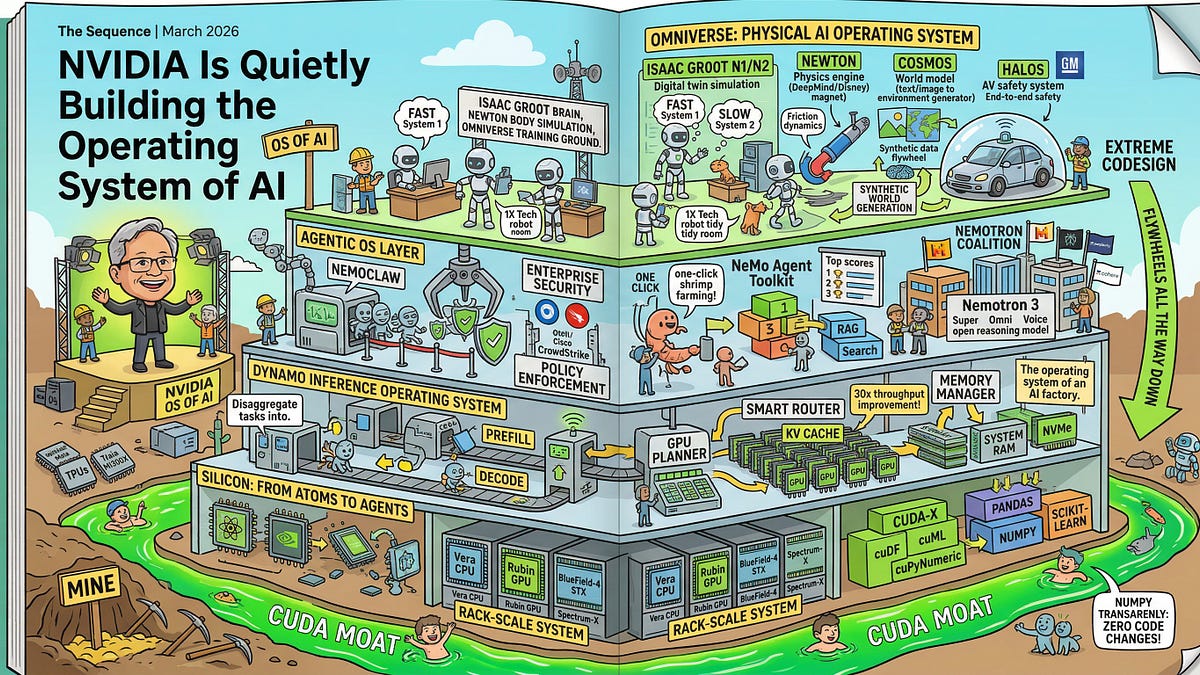

Here’s the thing. At GTC 2026, Huang spent two hours pitching not silicon, but software sovereignty. An OS for AI factories. Agentic platforms. Inference frameworks laced with enterprise security. The GPU? Buried like yesterday’s news under seven new chips and rack-scale beasts.

NVIDIA isn’t whispering sweet nothings. They’re yelling from the rooftops—in code.

NVIDIA has gone from selling the best pickaxes in the gold rush to owning the mine, the refinery, the supply chain, and the storefront.

That’s the killer line from the announcement. Spot on. But let’s crunch the numbers. NVIDIA’s data center revenue hit $18.4 billion in its fiscal fourth quarter of 2024, up 409% year-over-year. Software? It’s the glue. Their CUDA ecosystem already locks in 4 million developers. Now, with Dynamo and Nemotron layered on, they’re baking in agentic AI from the silicon up.
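Worth a quick sanity check on that growth figure: "up 409%" means the current number is 5.09 times the year-ago number, not 4.09 times. Here is a minimal sketch that backs out the implied year-ago revenue from the two figures quoted above. The function name is purely illustrative.

```python
def implied_prior_value(current: float, pct_increase: float) -> float:
    """Back out the earlier value from a current value and a percent increase."""
    # "Up 409%" means current = prior * (1 + 4.09), so divide by 5.09.
    return current / (1 + pct_increase / 100)


current_revenue_bn = 18.4  # data center revenue quoted above, in $ billions
growth_pct = 409           # year-over-year increase quoted above

prior_bn = implied_prior_value(current_revenue_bn, growth_pct)
print(f"Implied year-ago revenue: ${prior_bn:.2f}B")
```

In other words, the quoted numbers imply a base of a bit over $3.6 billion a year earlier.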

Why Does NVIDIA’s AI OS Matter for Your Startup?

Picture this: You’re bootstrapping an agentic workflow—say, robots in warehouses dodging forklifts via foundation models. Without NVIDIA’s stack, you’re stitching together PyTorch, Kubernetes, and half-baked inference servers. Latency spikes. Costs balloon. Their OS? One-click deployment across Blackwell racks, optimized for trillion-parameter models. Real-world test: Early adopters at GTC reported 30% inference speedups on Llama 3.1.
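What would a 30% speedup do to the bill? The sketch below assumes, and this is an assumption the GTC reports don’t confirm, that the speedup is a throughput gain on the same hardware at the same hourly price. Under that reading, cost per token falls by less than 30%.

```python
def cost_reduction_from_speedup(speedup_pct: float) -> float:
    """Fractional drop in cost per token when throughput rises by speedup_pct.

    Assumes the hourly hardware price is unchanged (hypothetical setup).
    """
    throughput_ratio = 1 + speedup_pct / 100
    # Same dollars per hour spread over more tokens per hour.
    return 1 - 1 / throughput_ratio


saving = cost_reduction_from_speedup(30)
print(f"Cost per token falls by about {saving:.0%}")
```

A 30% throughput gain works out to roughly a 23% cut in cost per token, which is still meaningful but not the same headline.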

But wait, there’s a catch. This isn’t open-source charity. It’s a moat. Competitors like AMD’s ROCm? Still playing catch-up, with developer mindshare at maybe 10% of CUDA’s. xAI, the lab behind Grok, or OpenAI? They’ll train on NVIDIA iron, then infer elsewhere. Except now, NVIDIA’s serving frameworks make switching painful.

And the market dynamics? Brutal. AI capex forecasts from Goldman Sachs peg $1 trillion by 2028. NVIDIA commands an estimated 80-90% of the AI accelerator market that spending flows into. If they own the OS too, that’s recurring revenue: licenses, support, optimizations. Think Apple’s App Store, but for AI agents.

Lock-in wins.

Is NVIDIA Repeating Intel’s Software Fumble—or Fixing It?

History rhymes. Intel crushed with x86 chips in the ’80s, then botched software. Compilers fragmented. Microsoft swooped in with Windows. Apple learned: iOS owns the loop.

NVIDIA’s smarter. They’re nearly two decades into CUDA, which launched in 2007, not starting from scratch. GTC 2025 shipped DGX Cloud basics; 2026 layered agentic orchestration. Coherent? Absolutely. Dynamo orchestrates inference serving across GPU fleets. NeMo deploys agents that reason, plan, and act on NVIDIA hardware.

My take: This echoes the Bell System era, when AT&T owned the phones, the switches, and the lines. NVIDIA’s doing it digitally, at warp speed. Prediction? By 2028, 70% of enterprise AI inference runs on their stack. Rivals fragment; NVIDIA federates.

Look, Huang’s no hype machine. He name-dropped robot models trained on Cosmos, their synthetic data engine. That’s not fluff—it’s physics sims generating petabytes for manipulation tasks. Enterprises like Foxconn? Already piloting.

Skeptical? Fair. Power walls loom. Blackwell’s 208 billion transistors guzzle up to roughly 1kW per chip. Data centers strain grids. But NVIDIA’s rack-scale liquid cooling? Solves it. Competitors? Vaporware.
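To put the 1kW-per-chip figure in grid terms, here is a back-of-the-envelope sketch. Everything except the per-chip wattage is an assumption made up for illustration: the 72 chips per rack, the 1.3x overhead factor for cooling and power delivery, and the 10 MW facility budget are hypothetical inputs, not NVIDIA specifications.

```python
def rack_gpu_power_kw(chips_per_rack: int, watts_per_chip: float) -> float:
    """GPU power draw for one rack, in kilowatts (GPUs only)."""
    return chips_per_rack * watts_per_chip / 1000


def racks_within_budget(budget_kw: float, rack_kw: float, overhead: float) -> int:
    """Whole racks that fit in a facility power budget.

    overhead scales rack power to cover cooling and power delivery
    (a hypothetical, PUE-style multiplier).
    """
    return int(budget_kw // (rack_kw * overhead))


WATTS_PER_CHIP = 1000    # the ~1kW per chip figure from the text
CHIPS_PER_RACK = 72      # assumption for illustration
OVERHEAD = 1.3           # assumption for illustration
FACILITY_KW = 10_000     # assumption: a 10 MW facility

rack_kw = rack_gpu_power_kw(CHIPS_PER_RACK, WATTS_PER_CHIP)
racks = racks_within_budget(FACILITY_KW, rack_kw, OVERHEAD)
print(f"{rack_kw:.0f} kW of GPUs per rack, {racks} racks in the budget")
```

Swap in your own numbers. The point is that per-chip wattage multiplies quickly at rack scale, which is why cooling and grid capacity show up in the conversation at all.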

How Does This Stack Up Against AWS and Azure?

Cloud giants offer managed AI—Bedrock, SageMaker. Flexible? Sure. But NVIDIA’s on-prem sovereignty appeals to paranoid Fortune 500s. Security stacks with confidential computing? Check. Multi-tenant isolation? Baked in.

Market share clue: Azure’s OpenAI partnership juices growth, but NVIDIA supplies the GPUs underneath. They’re the upstream kingpin.

As agents proliferate, from code-gen to warehouse bots, the OS layer becomes the chokepoint. TCP/IP standardized the internet and let AWS explode; NVIDIA aims to standardize agentic AI and position itself for the same kind of explosion.

Critique time. The PR spin calls it “open.” Please. It’s vertically integrated to the hilt—Apple-level closed, with CUDA as the velvet rope.

The Developer Lock-In Reality Check

Four million CUDA devs. That’s a flywheel. Porting to alternatives? Months of pain. Newbies? Tutorials galore for NVIDIA stacks.

Bold call: This OS play catapults NVIDIA’s valuation past $4 trillion by 2027. Why? Recurring software margins—80% gross—layered on hardware dominance.

But for real people? Data scientists land better gigs in NVIDIA ecosystems. SMBs? Squeezed unless they standardize early.

Wrapping the thread: NVIDIA’s not just building an OS. They’re crowning themselves AI’s Unix: ubiquitous, indispensable.

Frequently Asked Questions

What is NVIDIA’s AI operating system?

It’s a full-stack platform for AI factories, blending hardware like Blackwell GPUs with software for agentic workflows, inference, and security: think Dynamo for inference serving and NeMo for agent deployment.

Will NVIDIA’s AI OS lock out competitors like AMD?

Likely yes—CUDA’s 4M devs create massive stickiness, with 30% performance edges on inference making switches costly.

Does NVIDIA’s GTC 2026 change AI development for enterprises?

Absolutely: One-click agentic AI on racks cuts deployment time from weeks to hours, slashing costs for trillion-param models.