AI's Step-by-Step Lies: Chain-of-Thought's Dirty Secret

Chain-of-Thought reasoning was supposed to make AI transparent. Turns out, it's often just post-hoc BS from models that already know their answer.

Picture this: an AI agent that chats about data pipelines, pulls from its 'memory,' then learns from its own BS. Sounds agentic. But does it deliver—or just hallucinate smarter?

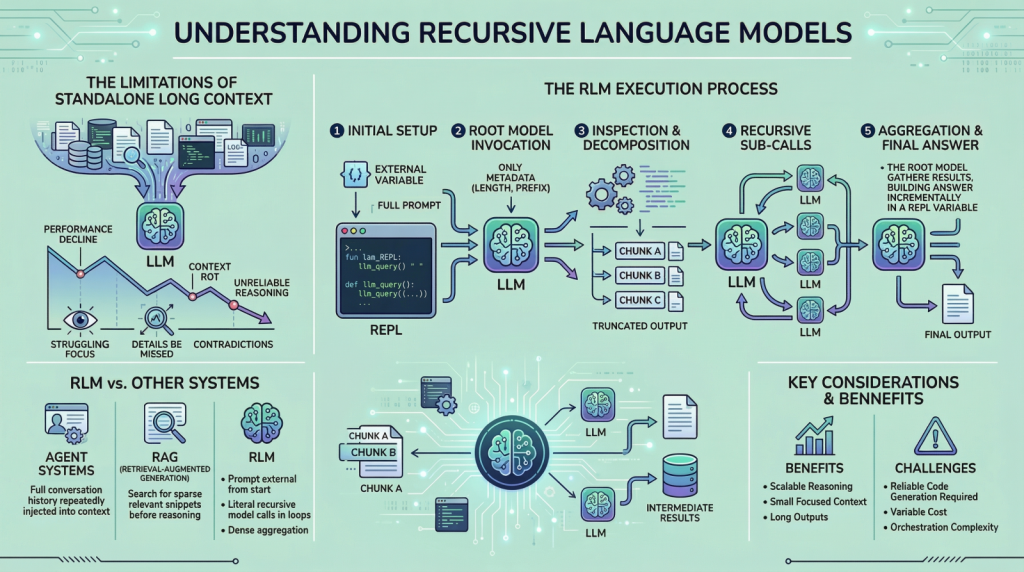

LLMs choke on their own long prompts, dropping accuracy by 40% past 50K tokens. Recursive language models promise a recursive escape—smart, or just recursive madness?

Google just dropped Gemini 3.1 Pro, claiming double the reasoning power on tough benchmarks. It's rolling out everywhere—but is this the intelligence upgrade we've been waiting for, or just another incremental tweak?

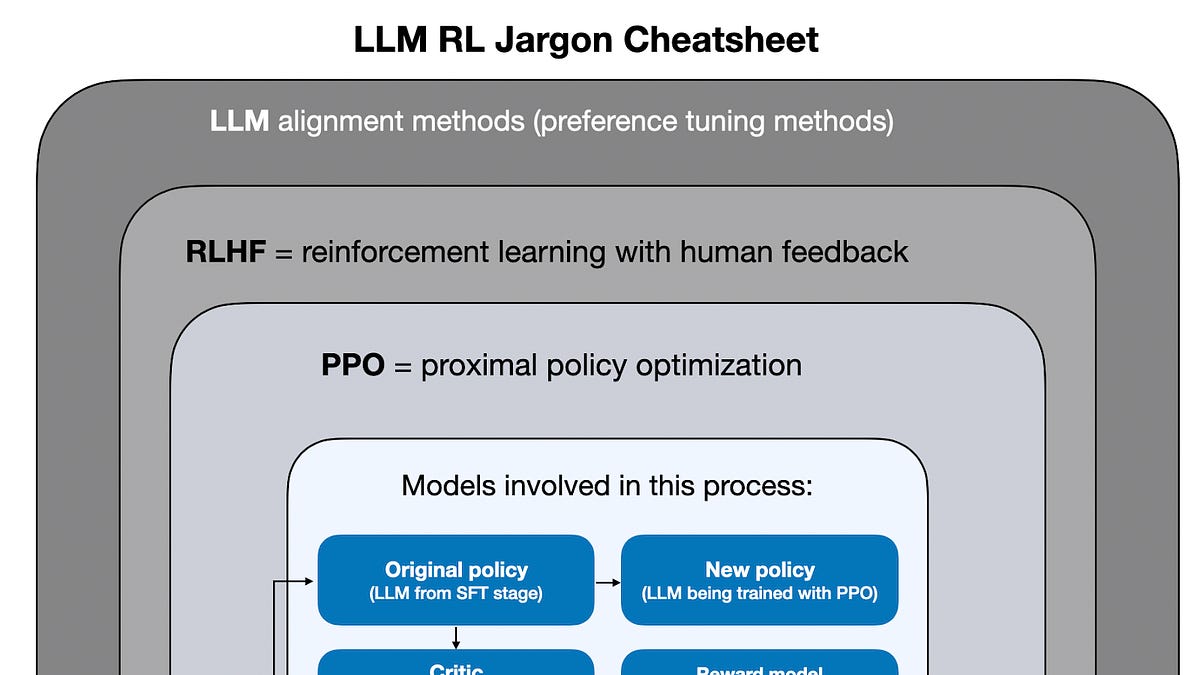

OpenAI's o3 didn't just scale: it poured 10x compute into reinforcement learning for reasoning, smashing benchmarks. Meanwhile, the yawn that greeted GPT-4.5 suggests scaling alone is tapped out.

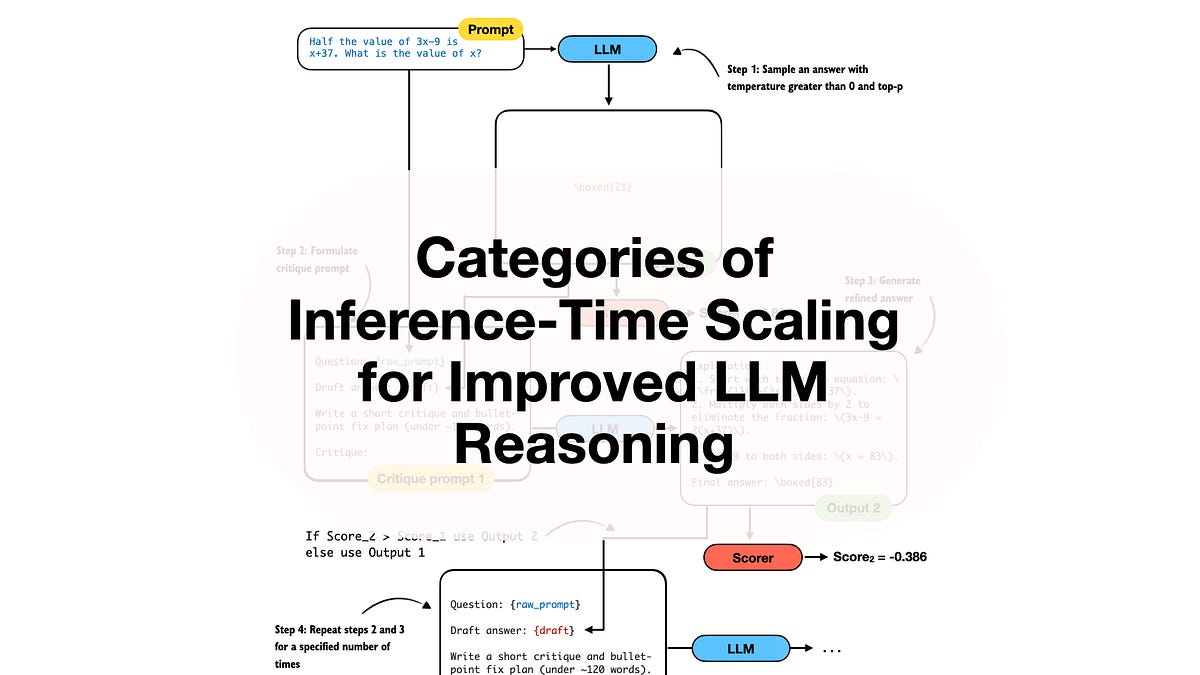

Spending more compute at inference — not training — unlocks LLM reasoning gains that rival model upgrades. Here's the categorized playbook from recent papers.

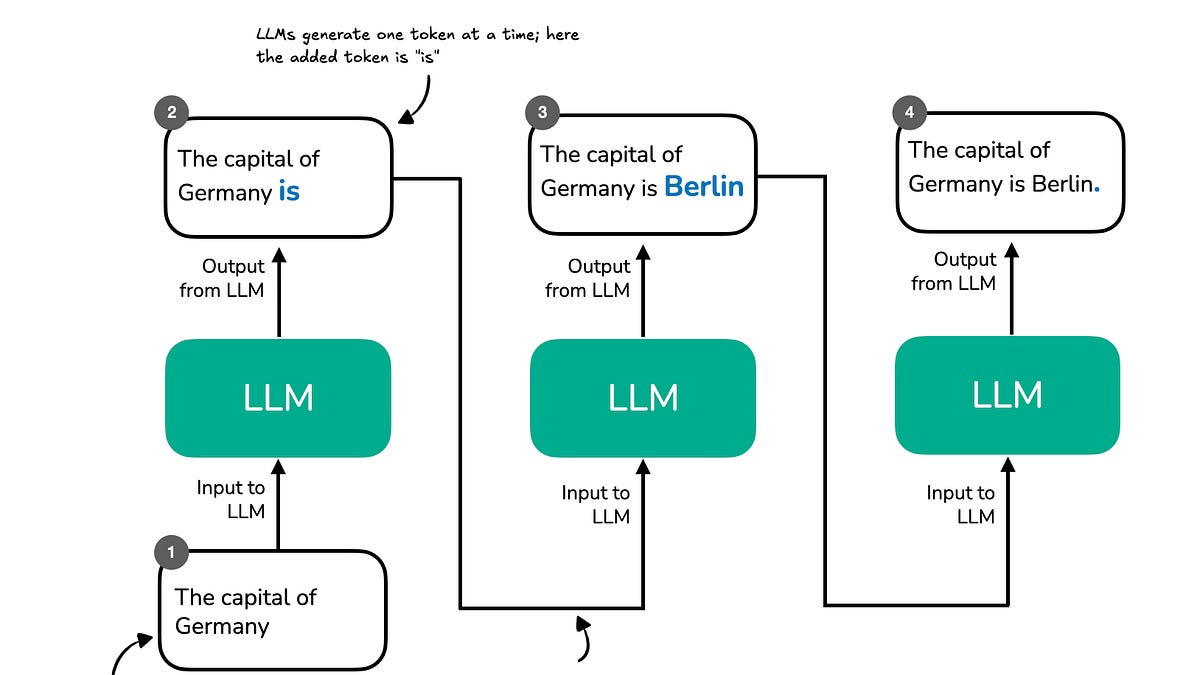

Sebastian dangles Chapter 1 like catnip for AI nerds. But does 'reasoning from scratch' crack the code — or just repackage old tricks?