Sebastian’s cursor hovers over ‘publish,’ and poof — Chapter 1 of Reasoning From Scratch lands in paid subscribers’ inboxes.

It’s a teaser. A 15-page appetizer for his book on how large language models pretend to reason. And yeah, LLM reasoning gets name-dropped right up top, because that’s the hook everyone’s biting.

Wait, LLMs Don’t Reason Already?

They don’t. Not really. Sebastian nails it early:

LLMs have transformed how we process and generate text, but their success has been largely driven by statistical pattern recognition.

Spot on. These behemoths — GPTs, Claudes, whatever — they’re autocomplete on steroids. Feed ‘em enough puzzles, they regurgitate solutions. Throw a twist? Blank stare.

But. Here’s where I smirk. Sebastian calls this the ‘next stage.’ Next stage? We’ve heard that before. Remember when transformers were gonna end programming? Or diffusion models would paint like Picasso overnight?

Short answer: hype cycles spin, reality lags.

Reasoning, he says, means tackling logical puzzles, multi-step math. Fine. Sounds noble. Yet the chapter’s a glossary — definitions, pre-training vs. post-training, inference-time scaling, RLHF tweaks. It’s survey stuff, dressed as revelation.

And that quote above? Buried in pleasantries. The real meat’s in the promise: hands-on coding later. Good luck, paid subs.

How’s This Different From Pattern Matching, Exactly?

It isn’t. Not fundamentally.

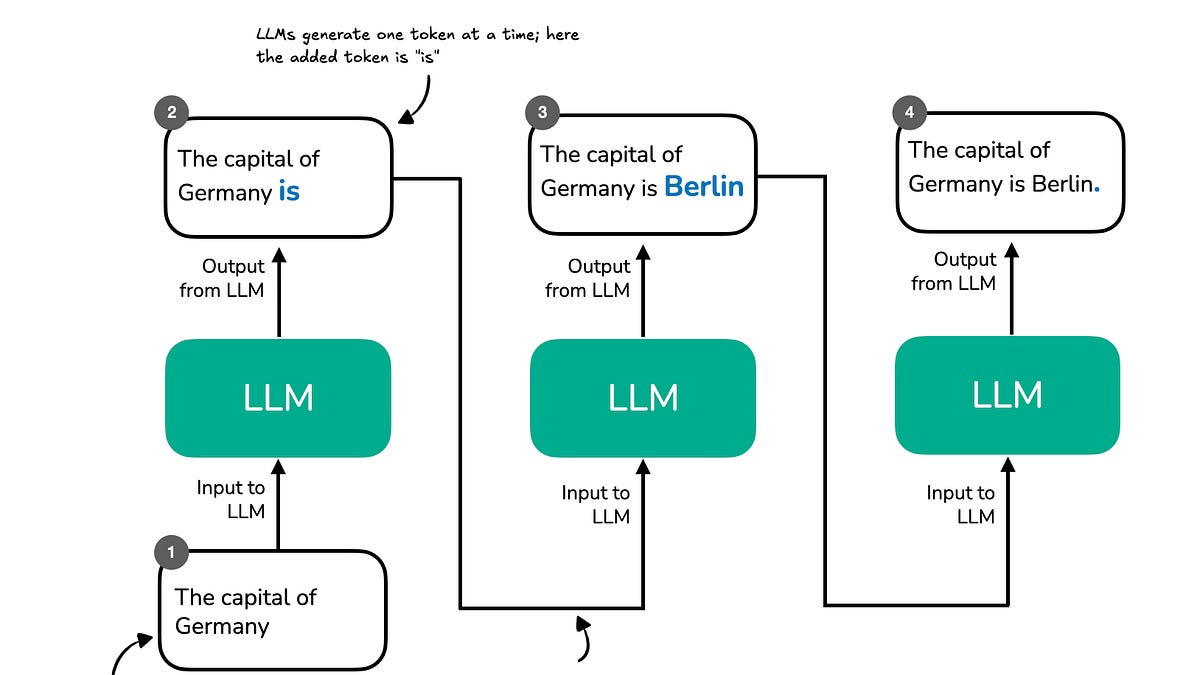

Sebastian draws the line: pattern matching predicts next tokens; reasoning chains thoughts, verifies steps. Noble split. But LLMs? They’re still predicting. Chain-of-thought prompting just makes the prediction longer, wordier — like a student bullshitting an essay.

However, new advances in reasoning methodologies now enable LLMs to tackle more complex tasks, such as solving logical puzzles or multi-step arithmetic.

Advances. Sure. Test-time compute bumps scores on benchmarks. Pump in more tokens during inference, watch GSM8K jump 10 points. Cute trick. But scale inference forever? Your electric bill begs to differ.

I dig the candor on pre-training — hoover data, predict masks — then post-training fine-tunes for chat. Standard playbook. No shocks.

What irks me? The ‘from scratch’ angle. Why rebuild? To grok limits, he claims. Fair. But smells like PR spin for ‘we’re doing real science, not OpenAI cargo culting.’

One para. That’s my beef.

LLMs shine on seen patterns. Flop on novel combos. Sebastian lists fixes: longer contexts, synthetic data, process supervision. Solid. Yet none birth true reasoning — that emergent ‘aha’ humans get.

My hot take, absent from his pages: this echoes the 1980s symbolics vs. connectionism wars. Back then, logic rule nerds sneered at neural nets as dumb pattern-munchers. Nets won — briefly. Now we’re grafting symbols onto nets again. History rhymes. Predict: another stall when benchmarks saturate, hallucinations persist.

Why Build Reasoning Models From Scratch?

Control. Insight. Trade-offs.

Sebastian pitches it straight: don’t tweak black boxes; craft from ground up. Test scaling laws raw. Poke holes deliberately.

Smart. OpenAI’s o1 hides guts; you guess at ‘reasoning tokens.’ Here? Transparency play.

But — em-dash alert — is it practical? Coding chapters ahead sound fun. Implement test-time scaling? RL for reasoning? Devs drool. Yet ‘scratch’ implies no PaLM-scale compute. Toy models won’t scale to real wins.

Corporate parallel: Anthropic’s constitutional AI. Shiny papers, meh products. Scratch-building risks the same — academic wankery, not world-changers.

Punchy doubt: it’ll illuminate. Won’t illuminate new paths.

Is ‘Reasoning From Scratch’ Worth the Sub Fee?

Depends. If you’re knee-deep in LLM tinkering, yes. Free teaser? Meh overview.

He covers:

-

Reasoning def: deliberate step-chains, not snap guesses.

-

Training arcs: pre (patterns), post (alignment).

-

Boosters: scaling inference, RL rewards process not outcome.

Teases coding bliss next.

Dry humor: it’s like a menu promising steak, serving bread. Enjoyable bread, though.

Skepticism peaks here. Benchmarks lie — ARC-AGI laughs at chain-of-thought. True reasoning needs world models, not token roulette. Scratch or not, LLMs lack ‘em.

Bold call: by 2026, ‘reasoning’ flops commercially. Agents hallucinate trades, crash portfolios. Back to hybrid rules + nets.

The Hands-On Promise — Or Pipe Dream?

Chapters ahead: code it yourself. Inference scaling loops. Custom RL loops. Alluring.

Reality check. You’ll need GPUs. Time. Bugs that’ll fry your weekend.

Worth it? For masochists, yeah. Rest? Wait for GitHub repos.

Sebastian’s gift to subs — gratitude wrapped in ambition. But reasoning from scratch? More map than treasure.

Wander a bit: I’ve hacked similar. Prompt engineering beats most ‘methods’ still. Don’t tell researchers.

Paid wall irks free readers. Fair play — content ain’t free. Still, drip-feed teases engagement metrics. Smart biz.

🧬 Related Insights

- Read more: Claude Code Agents in Parallel: Worktrees End the Waiting Game

- Read more: NotebookLM + Gemini: 30 Use Cases That Cut Through the Google Hype

Frequently Asked Questions

What is Reasoning From Scratch Chapter 1 about?

Intro to LLM reasoning basics: definitions, training stages, improvement tricks like inference scaling.

Does Reasoning From Scratch fix LLM hallucinations?

Nope — it’s conceptual groundwork. No silver bullet for bogus outputs.

Can I build real reasoning LLMs from scratch?

With code examples ahead, maybe toy versions. Real ones? Compute walls await.

Is LLM reasoning just pattern matching?

Mostly, yeah. Chains look thoughtful; still predict next word.