OpenAI engineers let it slip during the o3 livestream: ten times the training compute over o1, funneled straight into reinforcement learning for reasoning. Boom. Benchmarks lit up.

And yet GPT-4.5 and Llama 4? Crickets. No explicit reinforcement learning for LLM reasoning baked in. That’s the tell. Markets shrugged because we’re hitting scaling’s wall — bigger models, more data, same old tricks won’t cut it anymore.

Zoom out. xAI’s Grok and Anthropic’s Claude already flaunt “thinking” buttons that toggle extended reasoning. Users click, models deliberate. OpenAI’s o3 response? A compute blitz on RL methods. It’s not hype; it’s market dynamics shifting hard toward post-training RL pipelines.

Here’s the thing — this isn’t some lab curiosity. DeepSeek-R1, fresh papers on GRPO, they’re stacking evidence: reasoning RL delivers reliable gains on tough tasks. Expect it standard-issue soon.

What Counts as ‘Reasoning’ in These Models?

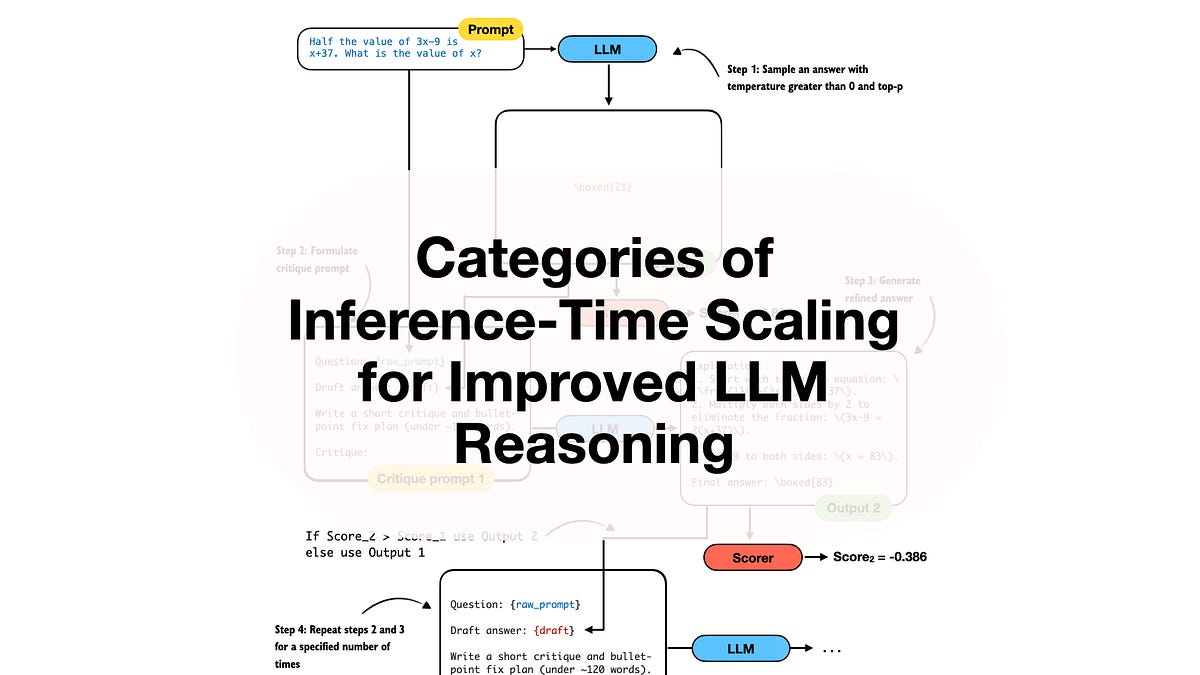

Reasoning boils down to spitting out intermediate steps before the final answer. Chain-of-thought style. Structured logic, computations, whatever gets you there.

Reasoning, in the context of LLMs, refers to the model’s ability to produce intermediate steps before providing a final answer. This is a process that is often described as chain-of-thought (CoT) reasoning. In CoT reasoning, the LLM explicitly generates a structured sequence of statements or computations that illustrate how it arrives at its conclusion.

That’s straight from the source material — clear, no fluff. Test-time tricks like longer prompts help, sure. But training-time RL? That’s the unlock.

OpenAI’s own charts show it: pre-training scales broad smarts, RL hones razor-sharp inference.

Short para for punch: We’re past vanilla scaling.

RLHF: The OG Alignment Play That Started It All

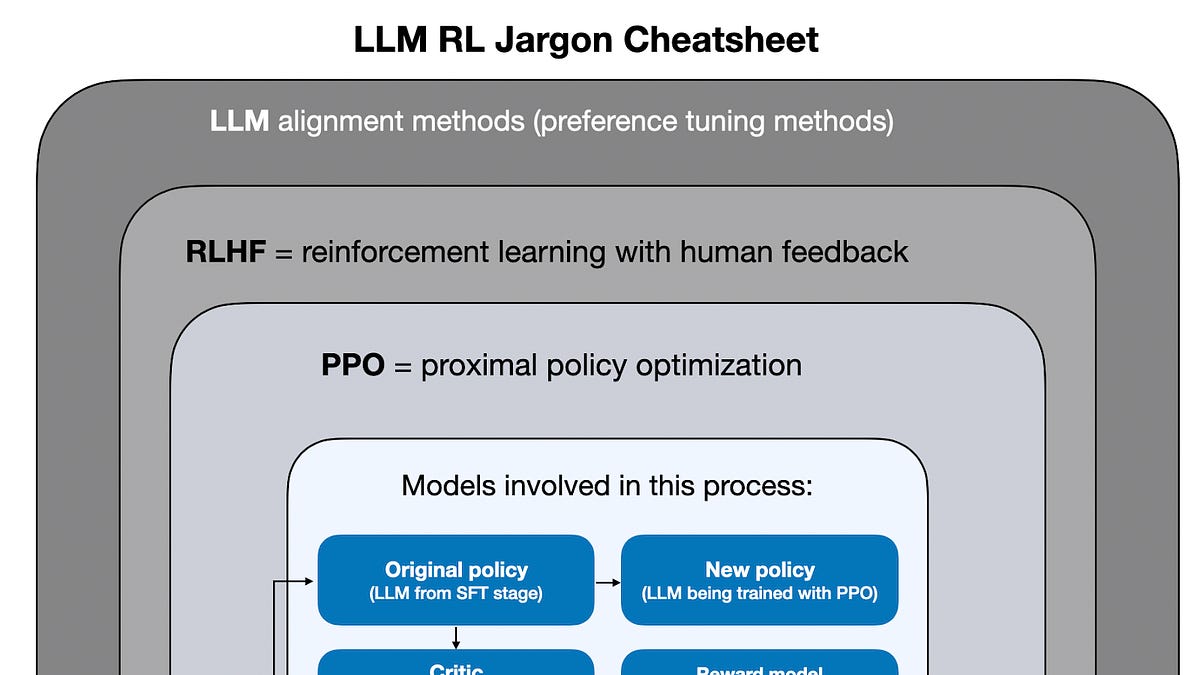

Remember InstructGPT? ChatGPT’s blueprint. Pre-train massive. Supervised fine-tune on instructions. Then RLHF — reinforcement learning from human feedback — to nudge outputs toward human prefs. Helpful, harmless, honest.

It worked wonders. Multiple responses per prompt? RLHF picks the winners, amplifies your style. Safety too — no leaks, no curses.

But reasoning amps this up. Not just prefs; accuracy on puzzles, math, code. Human feedback gets swapped or augmented.

PPO ruled early days. Proximal Policy Optimization. Stable, workhorse RL for LLMs. But it’s clunky for long reasoning chains — value function mismatches tank it.

Enter GRPO. Group Relative Policy Optimization. New kid, fixes PPO’s gripes by grouping trajectories, relative rewards. Less compute waste, better stability.

My take? GRPO’s no revolution — it’s evolution. Like upgrading from V8 to turbo V6. Efficient, potent.

Will GRPO Finally Dethrone PPO in LLM Training?

PPO’s been king since RLHF exploded. Reliable on chit-chat alignment. But reasoning? Chains get long, KL divergence explodes, training craters.

GRPO sidesteps. Samples groups of responses per prompt, ranks ‘em relatively. Reward model’s happier — no absolutes, just comparisons.

DeepSeek-R1 leaned in hard. Trained on synthetic math/code data, iterated RL loops. Beat o1-preview on some benches with less overall compute. Smart.

Data point: o3’s 10x over o1? Mostly RL stages. Per staff. That’s conviction.

Critique time — companies spinning ‘scale is all’ are PR-puffing. Muted launches scream it. Investors watch benchmarks; reasoning gaps show.

And here’s my unique angle, absent in the originals: This echoes 2022’s RLHF pivot. GPT-3 raw was smart but meh. RLHF birthed ChatGPT mania. Ignore reasoning RL now? You’ll lag like fine-tune holdouts did then. Bold prediction: By 2025, 80% flagships ship RL-reasoning or bust.

DeepSeek-R1’s RL Recipe: What We Can Steal

They didn’t mess around. Start with strong base — DeepSeek-V3. Supervised fine-tune on reasoning data. Then RLVR — reward modeling from verifiers, not just humans.

Verifier? Automated checker for math proofs, code runs. Scalable, cheap.

GRPO kicks in: rejection sampling for diversity, relative ranking. Multiple iterations. Boom — math scores jump 20+ points.

Not perfect. Hallucinations linger on edge cases. But gains are real, reproducible.

Market read: DeepSeek’s open-ish drop pressures closed shops. xAI, Anthropic iterating too. OpenAI leads compute, but recipes leak.

One-sentence gut check: RL reasoning commoditizes soon.

Key Lessons from the Hottest RL Reasoning Papers

Paper one: GRPO intro. Ablates beautifully — relative rewards cut variance 2x vs PPO. On GSM8K math, +5% easy.

Another: Online RL beats offline for reasoning. Interleave generation, reward, update. No static dataset staleness.

DeepSeek’s own: Multi-stage RLVR. Humans bootstrap, verifiers scale. Hybrid wins.

Insight cluster: Compute allocation matters more than total FLOPs now. 70/30 pretrain/post? Nah. 50/50, skewed RL.

Skeptic hat: Not silver bullet. Commonsense still slips. But for STEM tasks? Locked in.

xAI whispers Grok-2 used similar. Claude 3.5 too. Convergence.

Why Does This Matter for the Next Model Wave?

Flagships without reasoning RL? Dead on arrival. Users toggle ‘think’ now — expect it default.

Enterprise angle: Coding agents, analytics. Accuracy jumps pay ROI.

Compute bar rises — o3’s 10x signals arms race. But GRPO efficiency tempers it.

Position: Bullish. Smartest strategy today. Scale chasers? Pivot or perish.

We’ve covered basics to bleeding-edge. GRPO evolves PPO. RLVR scales rewards. o3 proves payoff.

🧬 Related Insights

- Read more: Perplexity Computer: Your Second Brain or Just Clever Note-Taking?

- Read more: Orbital Datacenters: AI’s Escape from Earth’s Energy Shackles

Frequently Asked Questions

What is GRPO and how does it improve LLM reasoning?

GRPO — Group Relative Policy Optimization — ranks batches of model responses relatively, stabilizing RL training for long reasoning chains over PPO’s pitfalls.

How did OpenAI train o3 for better reasoning?

10x more compute than o1, heavily into reinforcement learning stages with tailored reasoning rewards, per their livestream.

Will reinforcement learning replace scaling in LLMs?

Not replace — augment. Scaling hits diminishing returns; RL strategically multiplies gains on hard tasks.