User hits enter. ‘Best way to scale a Snowflake warehouse?’ The agent pauses—sort of—scans its makeshift brain, spits back advice laced with past ‘insights.’

Welcome to agentic AI’s latest parlor trick: a Domain Expert Agent that remembers, reasons, and learns. Or claims to. This tutorial promises a lightweight beast focused on data and analytics architecture, no fancy frameworks needed. Just OpenAI’s API, NumPy to store and compare embeddings, and a dream.

But here’s the thing: it’s cute. Minimalist, even. It starts empty-headed and builds knowledge nuggets from its own responses. Retrieve via cosine similarity. Reason with GPT. Extract insights. Loop.

Why Chase Agentic AI When LLMs Already Fake Expertise?

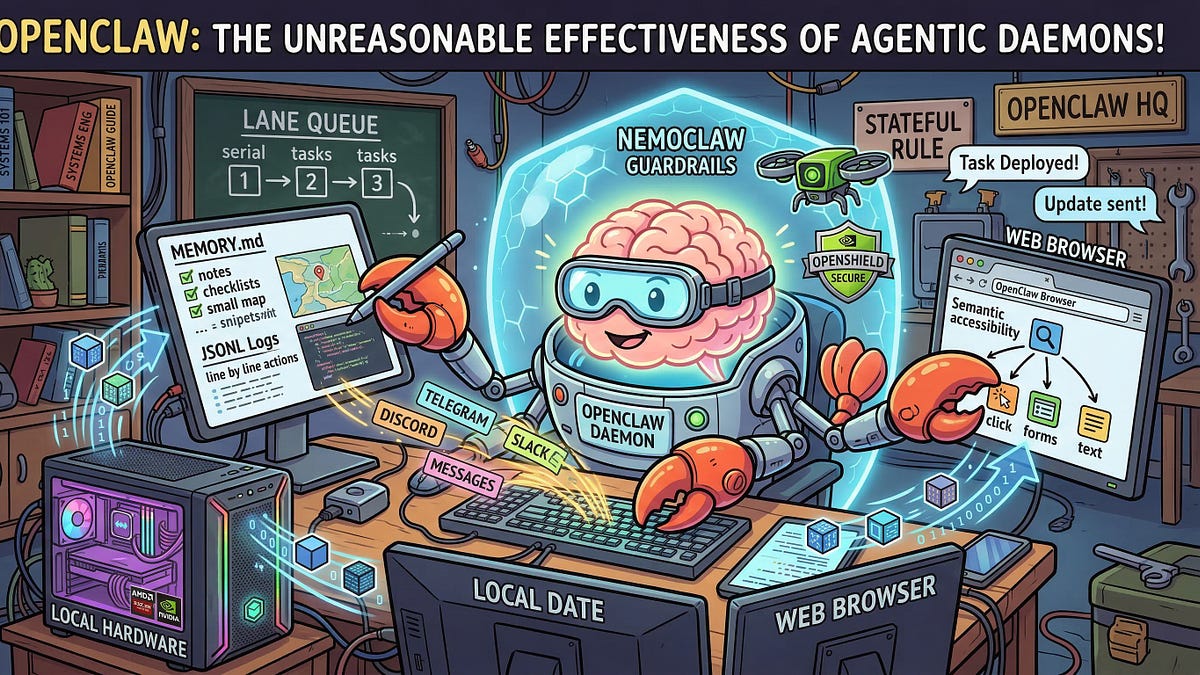

They’re everywhere now, these agents. Devin codes. Auto-GPT loops till it crashes. This one’s narrower—data architecture whiz. Pulls relevant bits from memory before answering. Stores new gems post-response.

The hook? No vector DB bloat. In-memory NumPy hack. Run it in a notebook, they say. Fine for demos. Production? Laughable.

“The agentic behavior is achieved using only a small set of components rather than a full orchestration framework. It is intentionally kept simple to highlight the core mechanics without added complexity.”

Quaint. But simplicity masks fragility. One bad insight gets embedded, and your ‘memory’ turns toxic.

And yet. It works—kinda. Query evolves; answers sharpen. Feels alive. Until it doesn’t.

Look, I’ve seen this movie. Back in the ’80s, expert systems ruled: rule-based behemoths crammed with if-thens. They bloated fast, stayed brittle, and died when reality shifted. My specific worry with this agent: it’ll hallucinate ‘insights,’ embed them, and retrieve them forever. A self-reinforcing delusion loop. Bold prediction? Without human curation, these things fossilize errors into canon.

Does the Memory Retrieval Actually Hold Up?

Core loop: Embed query. Cosine sim on stored insights. Top-k context to LLM. Prompt it to reason. Parse response for nuggets. Embed, store.
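
Here’s that loop as a minimal Python sketch. It is not the tutorial’s code: the embedding, answering, and extraction steps are passed in as plain functions (hypothetical stand-ins for the OpenAI calls), and memory is just a list of dicts.

```python
from typing import Callable, Dict, List, Optional

import numpy as np

# Memory is a plain list of {"text": str, "embedding": np.ndarray} dicts.
Memory = List[Dict[str, object]]


def agent_step(
    query: str,
    memory: Memory,
    embed: Callable[[str], np.ndarray],
    answer: Callable[[str, List[str]], str],
    extract: Callable[[str], Optional[str]],
    k: int = 3,
) -> str:
    """Run one retrieve -> reason -> learn turn and return the answer."""
    q = embed(query)
    context: List[str] = []
    if memory:
        mat = np.stack([item["embedding"] for item in memory])
        # Cosine similarity of the query against every stored insight.
        norms = np.linalg.norm(mat, axis=1) * np.linalg.norm(q)
        sims = (mat @ q) / np.maximum(norms, 1e-12)
        top = np.argsort(-sims)[:k]
        context = [memory[i]["text"] for i in top]
    reply = answer(query, context)  # reason with the retrieved context
    insight = extract(reply)  # learn: maybe pull out a reusable nugget
    if insight:
        memory.append({"text": insight, "embedding": embed(insight)})
    return reply
```

Swap the stubs for real API wrappers and the loop doesn’t change. That’s the whole ‘agent.’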

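Embeddings via OpenAI’s text-embedding-ada-002. Solid choice: cheap, decent. NumPy dot products for similarity. No FAISS, no Pinecone. Portable, sure. Scalable? Every query scans every stored insight, so cost grows linearly with memory. Fine for a demo; a team’s worth of history is another story.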
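
The math behind ‘dot products for similarity,’ for the record. OpenAI documents its embeddings as normalized to length 1, so cosine similarity collapses to a plain dot product. With N stored insights of dimension d (1,536 for ada-002), each query pays for a full brute-force scan.

```latex
\operatorname{sim}(\mathbf{q}, \mathbf{m}_i)
  = \frac{\mathbf{q} \cdot \mathbf{m}_i}{\lVert \mathbf{q} \rVert \, \lVert \mathbf{m}_i \rVert}
  = \mathbf{q} \cdot \mathbf{m}_i
  \quad \text{when } \lVert \mathbf{q} \rVert = \lVert \mathbf{m}_i \rVert = 1,
\qquad
\text{cost per query} = O(N d), \; d = 1536
```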

Test it mentally. First query: raw LLM guess. Second: pulls first answer as context. Improves? Sometimes. Echoes? Often.

Dry humor alert: ‘learning’ here means spotting ‘architectural insights’ via another LLM call. Regex? Nah, a prompt: “Extract useful insight if any.” If that gatekeeping fails, junk piles up.

Risky.
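
A gate doesn’t have to be fancy. Below is a hypothetical check that refuses empty or ‘None’ extractions, very short ones, and near-duplicates of what’s already stored. The function name, the 20-character floor, and the 0.95 duplicate threshold are all made up for illustration, not taken from the tutorial.

```python
from typing import Optional, Sequence

import numpy as np


def accept_insight(
    text: Optional[str],
    vec: np.ndarray,
    stored: Sequence[np.ndarray],
    min_chars: int = 20,
    dup_threshold: float = 0.95,
) -> bool:
    """Hypothetical gate run before an extracted insight is stored."""
    if text is None:
        return False
    cleaned = text.strip()
    # The extractor model may answer "None"/"No" instead of staying silent.
    if cleaned.lower() in {"", "none", "no", "n/a"}:
        return False
    if len(cleaned) < min_chars:
        return False
    # Reject near-duplicates so repeated tips don't pile up in memory.
    for s in stored:
        denom = np.linalg.norm(vec) * np.linalg.norm(s)
        if denom > 0 and float(vec @ s) / denom >= dup_threshold:
            return False
    return True
```

It won’t catch a confidently wrong insight. Only a human review does that.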

Imagine data teams adopting it. Query volume spikes. Memory balloons with half-baked tips like ‘Always use columnar storage, except when not.’ Retrieval biases toward frequent fliers, the tips stored over and over. New truths? Buried. Classic garbage-in, garbage-stays.
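
Toy numbers make the crowding visible. In this sketch, three near-copies of one tip and one distinct, still-relevant tip sit in memory; hand-picked 3-d vectors stand in for real embeddings. With k=3, the copies take every slot.

```python
import numpy as np


def top_k(query: np.ndarray, mat: np.ndarray, k: int) -> list:
    """Indices of the k rows of `mat` most cosine-similar to `query`."""
    sims = (mat @ query) / (np.linalg.norm(mat, axis=1) * np.linalg.norm(query))
    return [int(i) for i in np.argsort(-sims)[:k]]


# Hypothetical 3-d "embeddings": rows 0-2 are near-copies of one tip,
# row 3 is a different tip that is still relevant to the query.
memory = np.array([
    [1.00, 0.10, 0.0],
    [0.98, 0.12, 0.0],
    [0.99, 0.09, 0.0],
    [0.60, 0.80, 0.0],
])
query = np.array([0.90, 0.45, 0.0])

print(top_k(query, memory, k=3))  # the distinct tip (row 3) never makes the cut
```

Deduplicating at write time, or diversifying results at read time, are the usual countermeasures; neither is part of the loop as described.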

Corporate spin? Tutorial glosses: ‘Gradually storing useful insights.’ Useful per LLM judge. Ours not to question.

Code Teardown: From Zero to Agent in 10 Cells

Step 1: pip install openai numpy. Yawn.

Step 2: Client init. os.getenv(‘OPENAI_API_KEY’). Standard.
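
For reference, the client setup plus thin embedding and chat wrappers, sketched against the v1 `openai` Python SDK. The chat model name is an assumption (the tutorial’s choice isn’t stated here), and the SDK import lives inside `make_client()` so the wrappers can be exercised offline with a stub client.

```python
import os
from typing import Any

import numpy as np

EMBED_MODEL = "text-embedding-ada-002"
CHAT_MODEL = "gpt-4o-mini"  # assumption: the tutorial's chat model isn't named here


def make_client() -> Any:
    """Build an OpenAI client from the OPENAI_API_KEY environment variable."""
    from openai import OpenAI  # requires `pip install openai` (v1+ SDK)

    return OpenAI(api_key=os.getenv("OPENAI_API_KEY"))


def embed(client: Any, text: str) -> np.ndarray:
    """Return the embedding for `text` as a float array."""
    resp = client.embeddings.create(model=EMBED_MODEL, input=text)
    return np.asarray(resp.data[0].embedding, dtype=float)


def chat(client: Any, prompt: str) -> str:
    """Send a single-turn prompt and return the model's text reply."""
    resp = client.chat.completions.create(
        model=CHAT_MODEL,
        messages=[{"role": "user", "content": prompt}],
    )
    return resp.choices[0].message.content or ""
```

Usage: `client = make_client()`, then `embed(client, "Best way to scale a Snowflake warehouse?")`. Every one of those calls is billed.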

Step 3: Memory store: a list of dicts holding text and embedding. An embed func wraps OpenAI. A retrieve func takes dot products over normalized vectors, then picks the top k.
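
A sketch of that store, assuming NumPy only. `MemoryStore` is a hypothetical name. Vectors are normalized on insert so retrieval is one matrix-vector product, and `np.argpartition` picks the top k before a small sort orders them.

```python
from typing import Dict, List

import numpy as np


class MemoryStore:
    """In-memory list of {"text", "embedding"} dicts with cosine top-k search."""

    def __init__(self) -> None:
        self.items: List[Dict] = []

    def add(self, text: str, embedding) -> None:
        vec = np.asarray(embedding, dtype=float)
        # Normalize once at insert time so retrieval is a plain dot product.
        self.items.append({"text": text, "embedding": vec / np.linalg.norm(vec)})

    def retrieve(self, query_embedding, k: int = 3) -> List[str]:
        if not self.items:
            return []
        q = np.asarray(query_embedding, dtype=float)
        q = q / np.linalg.norm(q)
        mat = np.stack([item["embedding"] for item in self.items])
        sims = mat @ q  # cosine similarity, since rows and q are unit length
        k = min(k, len(self.items))
        # argpartition isolates the k best in O(N); argsort orders just those k.
        top = np.argpartition(-sims, k - 1)[:k]
        top = top[np.argsort(-sims[top])]
        return [self.items[i]["text"] for i in top]
```

Zero vectors aren’t handled; real embeddings shouldn’t be all zeros, but a defensive check is one line.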

Reason func: Prompt template. “Using these insights: {context}. Answer: {query}. Structured: Insight? Yes/No then text.”
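
One way to pin that template down. The exact wording and the `INSIGHT: Yes | <text>` / `INSIGHT: No` trailer are assumptions, not the tutorial’s format; the point is that a machine-checkable trailer beats parsing a free-text ‘Insight? Yes.’ If the model ignores the format, the parser keeps the answer and stores nothing.

```python
import re
from typing import List, Optional, Tuple

PROMPT_TEMPLATE = (
    "Using these insights:\n{context}\n\n"
    "Answer: {query}\n\n"
    "After your answer, add a final line of the form\n"
    "INSIGHT: Yes | <one reusable architectural insight>\n"
    "or\n"
    "INSIGHT: No"
)

# Matches a whole "INSIGHT: ..." line; [ \t] keeps it from spanning lines.
_INSIGHT_RE = re.compile(
    r"^INSIGHT:[ \t]*(yes|no)[ \t]*(?:\|[ \t]*(.+))?$",
    re.IGNORECASE | re.MULTILINE,
)


def build_prompt(query: str, context: List[str]) -> str:
    """Fill the template with retrieved insights as a bullet list."""
    bullets = "\n".join(f"- {c}" for c in context) or "- (none yet)"
    return PROMPT_TEMPLATE.format(context=bullets, query=query)


def parse_response(text: str) -> Tuple[str, Optional[str]]:
    """Split a reply into (answer, insight or None)."""
    matches = list(_INSIGHT_RE.finditer(text))
    if not matches:
        return text.strip(), None  # format ignored: keep answer, store nothing
    last = matches[-1]
    answer = text[: last.start()].strip()
    flag, insight = last.group(1).lower(), last.group(2)
    if flag == "yes" and insight and insight.strip():
        return answer, insight.strip()
    return answer, None
```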

Main loop: retrieve, reason, extract/store if insight.

Render the answer as Markdown via IPython. Pretty.

It’s 100 lines tops. Forkable. But—OpenAI dependency? Check. Costs? Per query + embeds. Free tier? Nope.

Verdict: Elegant toy. Prod-ify? Add LangChain? A vector store? A human review queue?

Can This Replace Your Data Architect Pal?

Short answer: No.

Longer: It nails the basics. ‘Delta Lake vs Iceberg?’ Pulls priors, cites them. Feels expert-ish. But edge cases? Hallucinations. No real-time data. No tools beyond chat.

Why it matters for devs: a dead-simple entry point to agentic patterns. Beats LangGraph’s complexity. NumPy purity thrills purists.

Skepticism peak: ‘Domain expert’? Pretrained LLM is the expert. Agent just dresses it up with RAG-lite + self-log.

Historical parallel: they tout the full loop of retrieve, reason, learn. Echoes the Cyc project: a hand-curated knowledge base meant to give AI common sense. Decades of curation later, flop. This one? Auto-curated. Faster flop?

The Hidden Costs—and Why I’m Still Kinda Bullish

Tokens burn. Embeds add up: ada-002 runs $0.0001 per 1K tokens, cheap per call, but the loop compounds.
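
Back-of-envelope math, sketched as a function. It assumes one query embedding, one reasoning call, an optional second extraction call, and one insight embedding per turn, and it ignores prompt-template overhead. The ada-002 price is the $0.0001 per 1K tokens figure; chat prices are left as required arguments because they vary by model.

```python
def cost_per_query(
    query_tokens: int,
    context_tokens: int,
    answer_tokens: int,
    insight_tokens: int,
    *,
    chat_in_per_1k: float,
    chat_out_per_1k: float,
    embed_per_1k: float = 0.0001,  # text-embedding-ada-002, USD per 1K tokens
    separate_extract_call: bool = True,
) -> float:
    """Estimated USD cost of one retrieve -> reason -> learn turn."""
    # Two embeddings per turn: the incoming query and the stored insight.
    embed_cost = (query_tokens + insight_tokens) / 1000 * embed_per_1k
    # Reasoning call: query plus retrieved context in, answer out.
    reason_cost = (
        (query_tokens + context_tokens) / 1000 * chat_in_per_1k
        + answer_tokens / 1000 * chat_out_per_1k
    )
    # Optional extraction call: answer in, insight out.
    extract_cost = 0.0
    if separate_extract_call:
        extract_cost = (
            answer_tokens / 1000 * chat_in_per_1k
            + insight_tokens / 1000 * chat_out_per_1k
        )
    return embed_cost + reason_cost + extract_cost
```

`context_tokens` is roughly k times your average insight length, so a bigger k buys recall on every single query.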

Privacy? All to OpenAI. Data architecture secrets? Gone.

Yet. Pivot potential. Swap domains: legal, medical (guardedly). Add tools: query real DBs. Boom, mini-Devin.

PR check: the tutorial’s ‘lightweight’ label sells minimalism. Reality: brittle without polish.

Frequently Asked Questions

What is a Domain Expert Agent in agentic AI?

A specialized AI that focuses on one field—like data architecture—using memory retrieval, LLM reasoning, and self-learning from responses to build a personal knowledge base.

How do you build an agentic AI agent with memory?

Grab the OpenAI API and NumPy. Embed queries and insights. Run a cosine search for context. Prompt the LLM for answers and extract new insights. Store the embeddings in a list. Repeat.

Will agentic AI agents like this outperform human experts?

Not yet—they amplify LLMs but inherit hallucinations and lack real-world grounding. Useful co-pilot, not replacement.