82% of production AI agents repeat the same dumb errors on return visits. That’s not from some napkin math — it’s straight from LangChain’s latest benchmarks on real-world deployments.

And here’s the thing: everyone’s hyping ‘agentic AI’ like it’s the second coming, but without memory, these digital pets have the recall of a goldfish. I’ve covered this circus for 20 years — from the dot-com database wars to today’s LLM frenzy — and the pattern’s always the same. Tech bros promise the moon, engineers scramble to glue on persistence later. Who’s making money? Not the users stuck resetting context every chat.

Why Memory Isn’t Just ‘More Tokens’

Look, stuff a bigger context window and call it a day? That’s rookie trap number one. Performance craters as the window fills up. Researchers call it ‘context rot’: your model drowns in noise, attention scattered like confetti at a bad party.

It’s a systems problem, pure and simple. Write paths. Read paths. Eviction policies. Treat it like you’d design any database worth its salt, or watch costs balloon while reliability tanks.

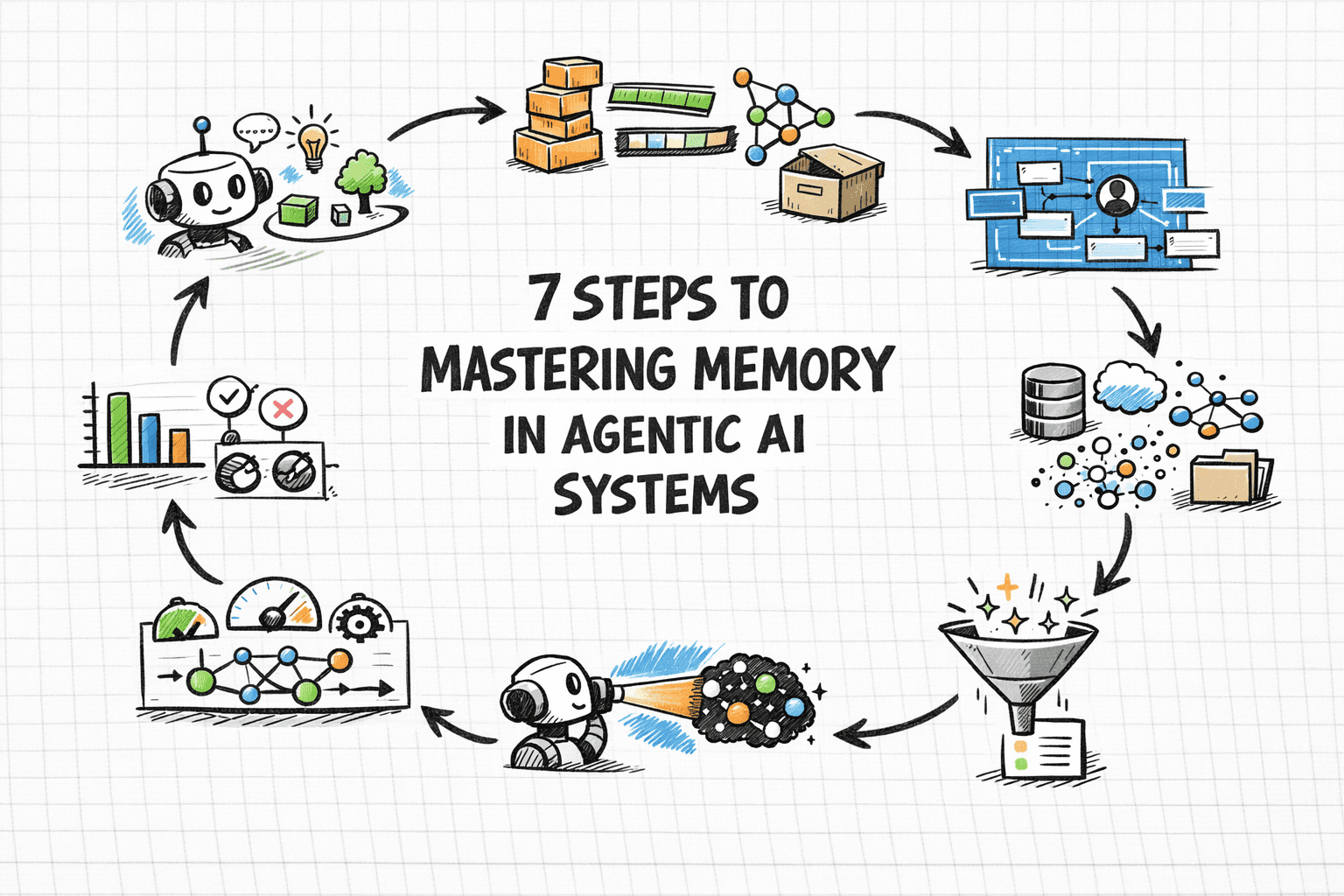

Memory lets agents accumulate context across sessions, personalize responses over time, avoid repeating work, and build on prior outcomes rather than starting fresh every time.

That’s from the original blueprint, spot on — but execution? That’s where the spin meets reality.

Short-term memory’s your RAM equivalent: conversation history, tools, prompts. Fine for one-offs, gone when the session blinks out.

Episodic? That’s the ‘remember that deployment flop last Tuesday’ stuff. Timestamped events in a vector DB, semantic search pulling them up. Smart for case-based fixes.

Semantic memory: facts, prefs, like knowing you hate verbose answers (guilty). Structured profiles, relational meets vector.

Procedural: encoded workflows, ‘how-to’ rules that evolve with use. The source material trails off here, but you get it: habits, baked in.
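If it helps to see the four types side by side, here’s a minimal sketch of how you might model them. Every name in it (`MemoryKind`, `MemoryRecord`, `SUGGESTED_STORE`, `survives_session`) is my own illustration, not something from a particular framework, and the store descriptions just restate the paragraphs above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class MemoryKind(Enum):
    SHORT_TERM = "short_term"   # conversation history, tools, prompts
    EPISODIC = "episodic"       # timestamped events
    SEMANTIC = "semantic"       # facts and preferences
    PROCEDURAL = "procedural"   # workflows and how-to rules


@dataclass
class MemoryRecord:
    user_id: str
    kind: MemoryKind
    content: str
    # Timestamp every record so episodic lookups can filter by time.
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


# Hypothetical mapping from memory kind to where it might live.
SUGGESTED_STORE = {
    MemoryKind.SHORT_TERM: "in-process buffer, dropped at session end",
    MemoryKind.EPISODIC: "vector DB of timestamped events",
    MemoryKind.SEMANTIC: "structured profile (relational plus vector)",
    MemoryKind.PROCEDURAL: "stored workflow rules",
}


def survives_session(record: MemoryRecord) -> bool:
    """Only short-term memory is meant to vanish when the session ends."""
    return record.kind is not MemoryKind.SHORT_TERM
```

The point of the split is routing: once a record carries its kind, the write path can decide where it goes and the read path knows where to look.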

Is Bigger Context the Enemy of Good Agents?

Damn right it is. Back in 2010, we saw this with NoSQL hype — shove everything in, query later. Result? Latency hell, bills from hell. Agentic AI’s repeating the script.

My unique take: this mirrors early search engines pre-PageRank. Raw index dumps, no relevance. Memory needs its PageRank — smart retrieval, not brute force. Predict this: by 2026, vector DB vendors like Pinecone rake in billions as agent memory becomes the new infra gold rush.

Next, pick backends wisely. Redis for short-term speed. Postgres with pgvector for semantic heft. Don’t skimp on hybrids: pairing a fast cache with a durable, vector-capable store covers both jobs.

Retrieval without poisoning the window? RAG-style, but agent-tuned: query, rank, inject only the gold. Evaluate with recall@K, hit rates in prod logs — not toy benchmarks.
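Here’s a rough sketch of both halves: injecting only the best-ranked memories under a budget, and scoring retrieval with recall@K. The function names, the character budget and the `min_score` cutoff are assumptions for illustration; a real system would probably count tokens and tune the threshold against its own logs.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant items that show up in the top-k results."""
    if not relevant_ids:
        return 0.0
    top_k = set(retrieved_ids[:k])
    return len(top_k & set(relevant_ids)) / len(relevant_ids)


def select_for_context(scored, budget_chars, min_score=0.5):
    """Pick the highest-scoring memories that fit the budget.

    scored: list of (score, text) pairs from whatever ranker you use.
    """
    chosen, used = [], 0
    for score, text in sorted(scored, key=lambda p: p[0], reverse=True):
        if score < min_score:
            break  # everything after this is weaker still
        if used + len(text) > budget_chars:
            continue  # too big, but a shorter memory may still fit
        chosen.append(text)
        used += len(text)
    return chosen
```

Run `recall_at_k` over logged production queries with hand-labeled relevant memories, and you have a number that tracks what users actually hit.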

Who Profits from Your Agent’s Amnesia?

Venture cash floods agent startups — $2.3B last quarter alone. But stateless demos dazzle VCs; production forgets, churn spikes. The winners? Cloud giants hawking storage. AWS Bedrock, anyone?

Implementation nit: write memories atomically, tag ‘em (user_id, session_id, type). Retrieve hybrid: keyword + embedding. Forget ruthlessly — LRU, relevance decay, or your context explodes.
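A toy version of tagged writes plus hybrid retrieval might look like this. It’s an in-memory sketch under loud assumptions: `Memory`, `hybrid_search` and the `alpha` blend weight are names I made up, embeddings are passed in from whatever model you use, and atomic writes are left to your real datastore.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    user_id: str
    session_id: str
    type: str
    text: str
    embedding: tuple  # assumed to come from your embedding model


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_overlap(query, text):
    """Share of query words that appear in the memory text."""
    q, t = set(query.lower().split()), set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


def hybrid_search(store, user_id, query, query_embedding, alpha=0.5, top_k=3):
    """Blend embedding similarity with keyword overlap, scoped by user tag."""
    scored = []
    for m in store:
        if m.user_id != user_id:
            continue  # tags keep one user's memories out of another's context
        score = (alpha * cosine(query_embedding, m.embedding)
                 + (1 - alpha) * keyword_overlap(query, m.text))
        scored.append((score, m))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in scored[:top_k]]
```

The tag filter runs before scoring on purpose: it’s cheaper, and it means a ranking bug can’t leak another user’s memories.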

Evaluation’s brutal. A/B tests: memory-on vs off. Track task completion and personalization scores (do responses match the preferences users rated?). Prod metrics: watch for latency spikes and memory bloat.
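The arithmetic behind a memory-on vs memory-off comparison is simple enough to sketch. The helper names here are hypothetical, outcomes are just booleans for "task completed," and a real test would also need a significance check before anyone celebrates.

```python
def completion_rate(outcomes):
    """outcomes: list of booleans, True when the task completed."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def compare_arms(memory_on, memory_off):
    """Completion rate per arm plus the absolute difference between them."""
    on = completion_rate(memory_on)
    off = completion_rate(memory_off)
    return {"on": on, "off": off, "absolute_lift": on - off}
```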

Then personalize incrementally. Update semantic stores post-interaction (‘user loves bullet points’), but debounce the noise so one offhand remark doesn’t rewrite the profile.
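One way to debounce is to promote a preference into the profile only after it has been seen a few times. This is a sketch of that idea, not a standard recipe: `PreferenceDebouncer` and the `min_observations` threshold are invented for illustration.

```python
from collections import Counter


class PreferenceDebouncer:
    def __init__(self, min_observations=3):
        self.min_observations = min_observations
        self.pending = Counter()   # (key, value) -> times observed
        self.profile = {}          # promoted, durable preferences

    def observe(self, key, value):
        """Record one signal; return True if it was promoted to the profile."""
        self.pending[(key, value)] += 1
        seen_enough = self.pending[(key, value)] >= self.min_observations
        if seen_enough and self.profile.get(key) != value:
            self.profile[key] = value
            return True
        return False
```

A single ‘just give me prose this once’ won’t overwrite a long-standing bullet-point preference, but three in a row will.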

Final stretch: scale it. Sharding, consistency (eventual’s fine for most). Monitor drift: models evolve, memories go stale.

But cynicism check: most won’t bother. Easier to prompt-engineer around gaps. Until competitors with memory eat their lunch.

The Real Roadblocks No One Mentions

Forgetting’s an art. Agents hoard episodes like digital squirrels, and reasoning suffers. Policy: prune by age, evict by usage score.
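Both policies fit in a few lines. In this sketch, `Episode`, `hits` and `prune` are illustrative names, and the usage score is simply a retrieval count; swap in whatever signal your system actually tracks.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    id: str
    created_at: float  # seconds since epoch
    hits: int = 0      # how many times retrieval surfaced this episode


def prune(episodes, now, max_age_s, capacity):
    """Drop episodes past max_age_s, then keep the most-used up to capacity."""
    fresh = [e for e in episodes if now - e.created_at <= max_age_s]
    # Most-retrieved first; newer episodes win ties.
    fresh.sort(key=lambda e: (e.hits, e.created_at), reverse=True)
    return fresh[:capacity]
```

Note the order: age is a hard cutoff, so even a heavily used episode gets dropped once it’s too old. If that’s too ruthless for your use case, fold age into the score instead.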

Cross-agent memory? Nightmare for multi-agent swarms. Shared blackboards or federated stores — ripe for conflicts.
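One common way to at least detect those conflicts is optimistic versioning: an agent can only write if nobody else has written since it last read. The `Blackboard` class below is a hypothetical single-process sketch of that idea; it isn’t thread-safe, and a shared deployment would lean on the datastore’s own compare-and-set support.

```python
class VersionConflict(Exception):
    """Raised when another agent wrote the key after we read it."""


class Blackboard:
    def __init__(self):
        self._data = {}  # key -> (version, value)

    def read(self, key):
        """Return (version, value); unseen keys are version 0."""
        return self._data.get(key, (0, None))

    def write(self, key, value, expected_version):
        current_version, _ = self.read(key)
        if current_version != expected_version:
            raise VersionConflict(
                f"{key}: expected v{expected_version}, found v{current_version}"
            )
        self._data[key] = (current_version + 1, value)
        return current_version + 1
```

The agent that loses the race gets an exception instead of silently clobbering its peer, and can re-read and decide whether its update still makes sense.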

Historical parallel: think Unix pipes vs monoliths. Memory pipes data between agent ‘processes,’ or it all crumbles.

Frequently Asked Questions

What is agentic AI memory?

It’s the persistence layer, from short-term chat history to long-term lessons, that stops your AI from starting every session with amnesia.

How do you implement episodic memory in agents?

Store timestamped events in a vector DB and retrieve them via semantic search over the incoming query. Frameworks like LangGraph ship persistence features that handle much of the plumbing.

Will agent memory systems replace traditional databases?

Nah, they layer on top — specialized for fuzzy, event-driven recall, not ACID transactions.