Picture this: your slick ReAct agent, humming along on a critical task (booking flights, querying databases, crunching data), suddenly stalls. Not because the internet blinked or the API hiccuped. No. It’s retrying a tool called ‘web_browser’ that lives only in the LLM’s fever dream. For everyday builders shipping AI into production, this isn’t trivia. It’s cash evaporating, tasks failing silently, and that promotion slipping away because latency spiked ‘inexplicably.’

ReAct agents. They’re the workhorses of agentic AI, looping through Thought-Action-Observation like a diligent intern. But here’s the kicker: in a 200-task simulated benchmark, they torched 90.8% of their retries on guaranteed failures. Tools that never existed.

And get this: your dashboards? They’re lying to you. Success rates look peachy. Latency’s ‘fine.’ But under the hood, retries are hemorrhaging on hallucinations.

Why Do ReAct Agents Waste Retries on Phantom Tools?

Look. The smoking gun is one innocent line in every ReAct tutorial you’ve ever seen:

tool_fn = TOOLS.get(tool_name) # ◄─ THE LINE

LLM spits out ‘sql_query’ or ‘python_repl’—tools that aren’t in your dict. Boom. None. But the retry loop? It doesn’t care. It treats this like a flaky network blip. Retry. Retry. Burn the budget.
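
To make the failure mode concrete, here is a minimal sketch of the kind of loop described above. The registry contents, the `naive_step` name, and the retry limit of 3 are assumptions for illustration, not code from the benchmark repo.

```python
# Hypothetical tool registry: only "search" is registered.
TOOLS = {"search": lambda query: f"results for {query}"}


def naive_step(tool_name, tool_input, max_retries=3):
    """Treat every failure as retryable, as many tutorial loops do.

    Returns (result, attempts_used).
    """
    attempts = 0
    while attempts < max_retries:
        attempts += 1
        tool_fn = TOOLS.get(tool_name)  # None for a hallucinated name
        if tool_fn is None:
            # Handled like a flaky network blip: try the same name again.
            continue
        return tool_fn(tool_input), attempts
    return None, attempts


# A hallucinated name burns the whole budget and still fails.
result, attempts = naive_step("web_browser", "cheap flights")
print(result, attempts)  # None 3
```

Nothing about the lookup changes between attempts, so every attempt after the first is spent on a failure that was already certain.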

Here’s the thing. Retries only make sense for errors that can change. A hallucinated tool name? It’s etched in stone. No amount of re-prompting conjures it from thin air. Yet your agent spins its wheels, 90.8% of retries down the drain in a fresh 200-task benchmark.

I ran the numbers myself—deterministic sim, seed 42, calibrated to GPT-4-class hallucination rates (28%, conservatively). Naive ReAct vs. fixed version. The waste? Eye-watering.

But wait—it’s worse. When a real error hits later (timeout, say), the budget’s gone. Task fails. Logged as ‘retry exhaustion.’ No mention of the ghost tools that starved it.

The Hidden Cost to Real-World AI Builders

You’re an ML engineer at a startup. Every API call costs real dollars—OpenAI tokens don’t grow on trees. This waste isn’t abstract; it’s your burn rate inflating 3x on agent steps alone.

Variance explodes too. One task zips through in 4 steps. The next? 10, clogged with retries. Predictability? Shot. Scaling to production? Nightmare.

And the PR spin from framework docs? They tout ‘resilient agents’ without whispering about this. It’s like selling a car with brakes that lock on dry pavement—shiny demos hide the flaw.

My unique spin: this echoes the early days of web APIs. Remember when devs blindly retried 404s like they were 503s? Took structured error handling to fix. AI agents are hitting the same wall—now.

Three Fixes That Nuke Wasted Retries

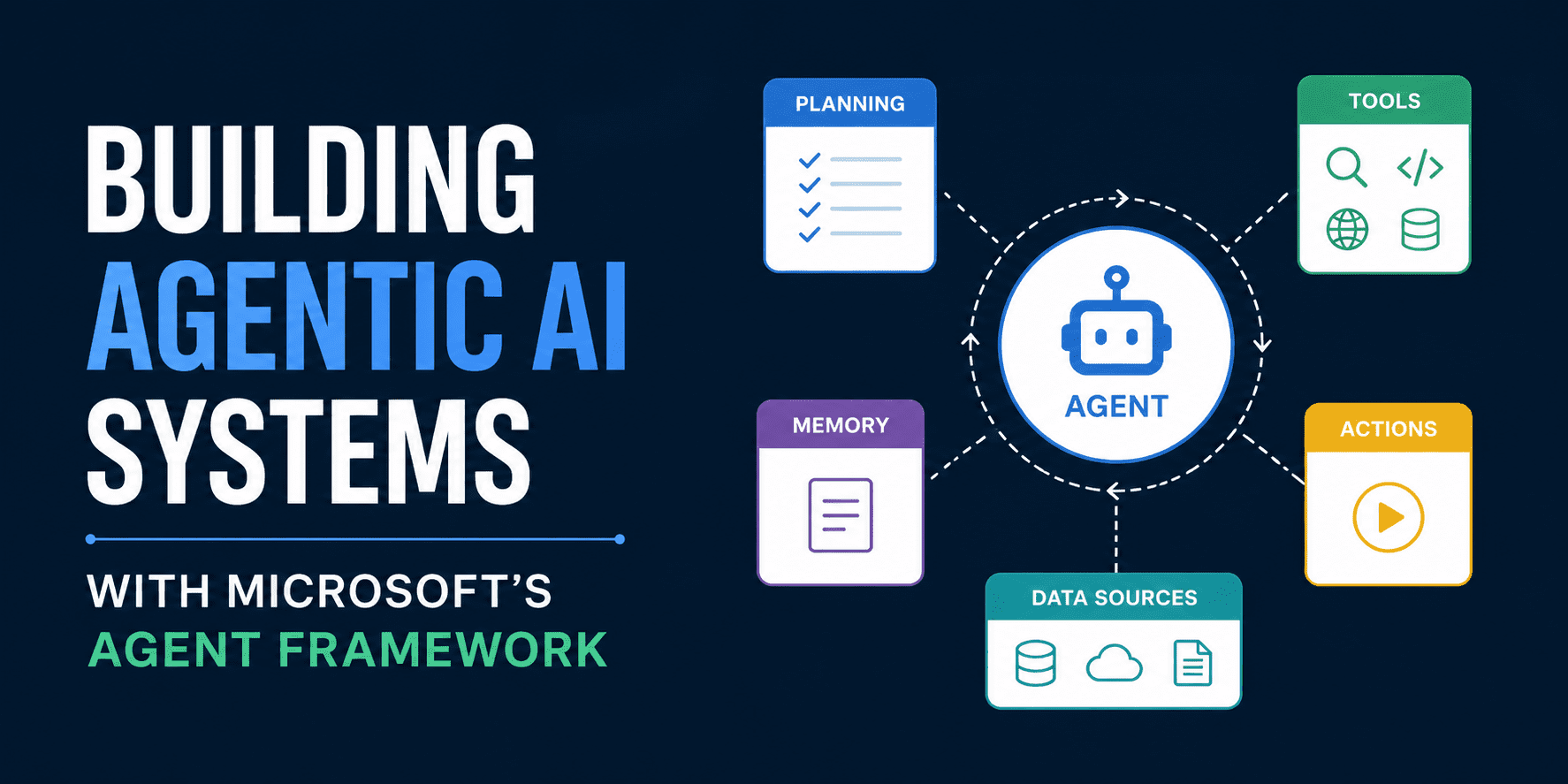

Fix one: Taxonomy. Classify errors before retrying. TOOL_NOT_FOUND? Permanent. Skip. TRANSIENT? Retry.
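
One way the taxonomy could look in code is sketched below. The `ErrorKind` enum, the `run_step` helper, and the simulated flaky tool are all hypothetical names chosen for this example; only the TOOL_NOT_FOUND and TRANSIENT labels come from the text above.

```python
from enum import Enum


class ErrorKind(Enum):
    TOOL_NOT_FOUND = "permanent: the tool is not registered"
    TRANSIENT = "may succeed on a later attempt"


def make_flaky_search():
    """Hypothetical tool that times out on its first call, then works."""
    calls = {"n": 0}

    def search(query):
        calls["n"] += 1
        if calls["n"] == 1:
            raise TimeoutError("simulated timeout")
        return f"results for {query}"

    return search


TOOLS = {"search": make_flaky_search()}


def run_step(tool_name, tool_input, max_retries=3):
    """Classify before retrying. Returns (result, error_kind, retries_used)."""
    tool_fn = TOOLS.get(tool_name)
    if tool_fn is None:
        # Permanent error: skip the retry loop and spend nothing.
        return None, ErrorKind.TOOL_NOT_FOUND, 0
    retries = 0
    while True:
        try:
            return tool_fn(tool_input), None, retries
        except (TimeoutError, ConnectionError):
            # Transient error: retry until the budget runs out.
            if retries >= max_retries:
                return None, ErrorKind.TRANSIENT, retries
            retries += 1
```

With this shape, a call to `run_step("web_browser", ...)` returns immediately with zero retries used, while the simulated timeout on `search` costs exactly one retry before succeeding.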

Fix two: Per-tool circuit breakers. Hallucinate ‘web_browser’ thrice? Lock it out for that task. No global budget bleed.
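
A per-tool breaker can be very small. The sketch below assumes one breaker instance per task and a threshold of three failures, matching the "thrice" above; the class and function names are made up for illustration.

```python
from collections import Counter

# Hypothetical registry with one real tool.
TOOLS = {"search": lambda query: f"results for {query}"}


class ToolCircuitBreaker:
    """Per-task breaker: a tool name is locked out after `threshold` failures."""

    def __init__(self, threshold=3):
        self.threshold = threshold
        self.failures = Counter()  # failure count per tool name

    def is_open(self, tool_name):
        return self.failures[tool_name] >= self.threshold

    def record_failure(self, tool_name):
        self.failures[tool_name] += 1


def call_tool(breaker, tool_name, tool_input):
    """Call a tool through the breaker; failures are counted per name."""
    if breaker.is_open(tool_name):
        return "BLOCKED"  # locked out for the rest of this task
    tool_fn = TOOLS.get(tool_name)
    if tool_fn is None:
        breaker.record_failure(tool_name)
        return "TOOL_NOT_FOUND"
    return tool_fn(tool_input)


breaker = ToolCircuitBreaker(threshold=3)
outcomes = [call_tool(breaker, "web_browser", "flights") for _ in range(5)]
print(outcomes)
# ['TOOL_NOT_FOUND', 'TOOL_NOT_FOUND', 'TOOL_NOT_FOUND', 'BLOCKED', 'BLOCKED']
```

Because failures are counted per name, a locked-out phantom tool does not stop calls to `search` from going through.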

Fix three—the bold one: Route tools in code. Ditch runtime LLM picks. Parse intent, hard-match to your toolkit. Hallucination impossible at the routing layer.
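
How "parse intent, hard-match" is implemented is not spelled out here, so the following is only one possible reading: a keyword table that maps intent text to registered tools. The `ROUTES` rules and tool names are hypothetical, and a real router would likely need something sturdier than substring matching.

```python
# Hypothetical registry; every routable tool must live here.
TOOLS = {
    "search": lambda text: f"search results for: {text}",
    "calculator": lambda text: f"calculated: {text}",
}

# Hypothetical keyword rules, checked in order; the first match wins.
ROUTES = [
    (("calculate", "sum", "average"), "calculator"),
    (("find", "look up", "search"), "search"),
]


def route(intent_text):
    """Return a registered tool name for the intent, or None if nothing matches."""
    lowered = intent_text.lower()
    for keywords, tool_name in ROUTES:
        if any(keyword in lowered for keyword in keywords):
            return tool_name
    return None


def dispatch(intent_text):
    """Run the routed tool; an unmatched intent fails fast with no retries."""
    tool_name = route(intent_text)
    if tool_name is None:
        return "NO_ROUTE"
    return TOOLS[tool_name](intent_text)


print(route("Find cheap flights to Oslo"))  # search
print(dispatch("Open a web browser"))       # NO_ROUTE
```

The router can only return names that appear in `ROUTES`, so as long as every route target is registered in `TOOLS`, a nonexistent tool name never reaches the lookup.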

Apply all three? In the same 200-task benchmark, wasted retries drop to zero. Step variance drops 3x. Execution? Predictable as clockwork.

In a 200-task benchmark, 90.8% of retries were wasted — not because the model was wrong, but because the system kept retrying tools that didn’t exist. Not “unlikely to succeed.” Guaranteed to fail.

Reproduce it: python app.py --seed 42. GitHub here: https://github.com/Emmimal/react-retry-waste-analysis.

Is This the Dawn of Bulletproof AI Agents?

Absolutely. ReAct’s a platform shift—like HTTP for intelligence. Agents aren’t toys; they’re the new OS for work. But without these fixes, they’re leaky buckets.

Bold prediction: by 2025, every production agent framework bakes in error taxonomy. LangChain, LangGraph, AutoGen—they’ll patch this or get left behind. It’s not hype; it’s engineering gravity.

Wander a sec: think railroads in the 1800s. Trains derailed constantly from bad signals. Solution? Standardized blocks, circuit logic. Same here: agents need guardrails against LLM chaos.

For you, the builder? Implement today. Your prod systems thank you. Costs plummet. Reliability soars. And yeah—that intern vibe? Gone. You’re piloting a rocket now.

So. Fire up that repo. Instrument your retries. Watch the waste vanish. The future’s agentic—but only if it’s smart about failure.

Frequently Asked Questions

What causes ReAct agents to waste 90% of retries?

Hallucinated tool names. The LLM invents tools not in your TOOLS dict, and blind retries treat them like fixable errors.

How do you fix wasted retries in ReAct agents?

Classify errors pre-retry, add per-tool circuit breakers, and route tools deterministically in code. Zero waste, instant win.

Does this affect LangChain or AutoGen agents?

Yes—any ReAct-style loop with runtime tool selection. Check your logs for TOOL_NOT_FOUND retries.
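
If your retry attempts are already logged with an error class, checking for this takes a few lines. The sketch below assumes a hypothetical log format (one dict per retry with an `error` field) and uses toy entries, not benchmark data.

```python
from collections import Counter

# Toy retry log for illustration only: one entry per retry attempt.
retry_log = [
    {"task": 1, "tool": "web_browser", "error": "TOOL_NOT_FOUND"},
    {"task": 1, "tool": "web_browser", "error": "TOOL_NOT_FOUND"},
    {"task": 2, "tool": "search", "error": "TRANSIENT"},
    {"task": 3, "tool": "sql_query", "error": "TOOL_NOT_FOUND"},
]


def wasted_retry_share(log):
    """Fraction of retries spent on tools that were never registered."""
    if not log:
        return 0.0
    counts = Counter(entry["error"] for entry in log)
    return counts["TOOL_NOT_FOUND"] / len(log)


print(wasted_retry_share(retry_log))  # 0.75
```

A share well above zero in your own logs suggests the retry budget is going to permanent failures rather than transient ones.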