15,000 GitHub stars. In two months flat.

That’s OpenClaw — the open-source beast that’s clawing its way to the top of developer charts, leaving even fresh LLM releases in the dust.

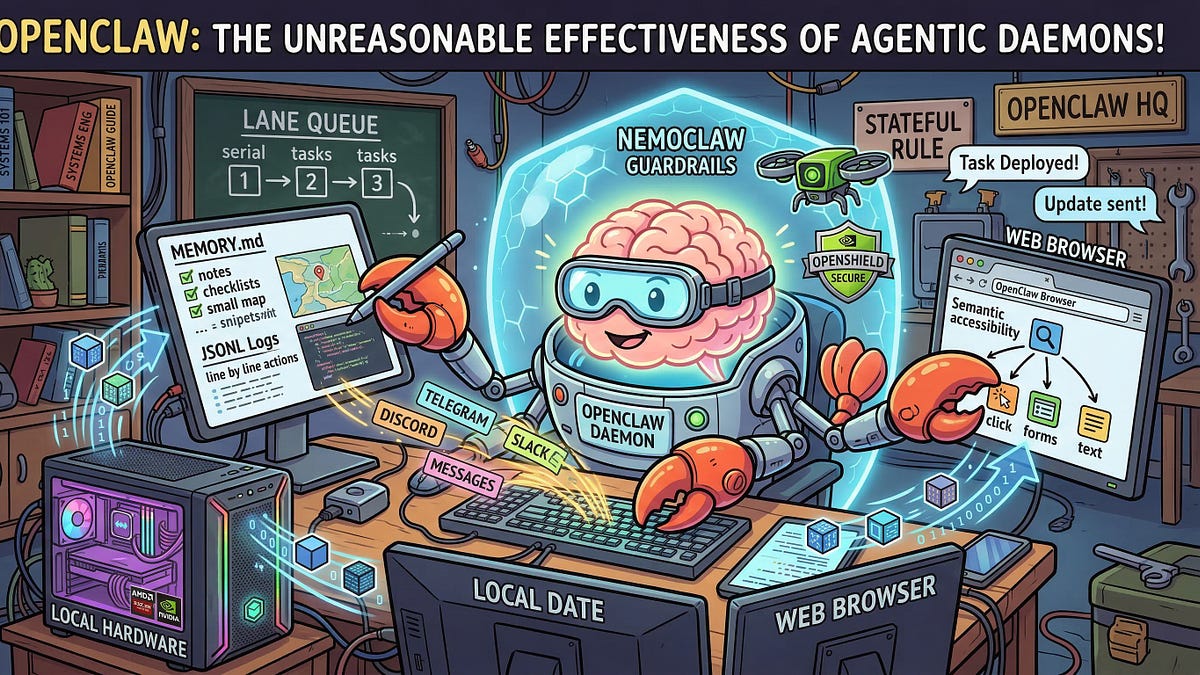

Imagine LLMs as brains in jars. You poke, it spits out wisdom, then poof — context gone, state reset, back to square one. But OpenClaw? It’s strapping rocket boosters — no, claws — onto those brains. Suddenly, your AI isn’t just chatting. It’s remembering. Acting. Persisting across your entire machine, like a digital lobster scuttling through your files, apps, and the wild web.

Peter Steinberger, the Austrian coder behind it (now at OpenAI, eyebrow-raisingly), didn’t invent a new model. Nope. He built an orchestration layer — a daemon that squats on your hardware, hooks into any LLM, and runs agentic workflows. Messaging apps? Check. Local files? Yours. Web browsing? Autonomous. This is the shift from passive oracles to stateful agents, folks. And OpenClaw’s the blueprint.

What Makes OpenClaw Tick — Under the Hood

Strip it down: OpenClaw’s a persistent service. Lives in the background. No browser tabs to kill its memory. You fire it up once, and it holds state — your goals, tools, history — forever (or until you say stop).

It connects to LLMs via APIs — local like Ollama, or cloud giants. Then? Tool-calling magic. Need to email? It drafts, sends. Files? Reads, edits, saves. Web? Scrapes, clicks, learns. All orchestrated in loops: observe, plan, act, reflect. Wash, rinse, repeat.
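The observe, plan, act, reflect cycle fits in a few lines of Python. This is a toy sketch, not OpenClaw’s actual code: `fake_llm_plan`, the `TOOLS` table, and the `AgentState` shape are all hypothetical names, and the planner is a stub standing in for a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical tools: plain functions the agent may call by name.
TOOLS: Dict[str, Callable[[str], str]] = {
    "read_file": lambda arg: f"(contents of {arg})",
    "search_web": lambda arg: f"(results for {arg})",
}

@dataclass
class AgentState:
    goal: str
    history: List[str] = field(default_factory=list)
    done: bool = False

def fake_llm_plan(state: AgentState) -> Tuple[str, str]:
    """Stand-in for an LLM call: picks the next tool and its argument."""
    if not state.history:
        return "read_file", "notes.txt"
    return "finish", ""

def run_agent(state: AgentState, max_steps: int = 5) -> AgentState:
    for _ in range(max_steps):
        # Observe: the state (goal + history) is the agent's view of the world.
        # Plan: ask the (stubbed) model what to do next.
        tool, arg = fake_llm_plan(state)
        if tool == "finish":
            state.done = True
            break
        # Act: run the chosen tool.
        result = TOOLS[tool](arg)
        # Reflect: record the outcome so the next plan can use it.
        state.history.append(f"{tool}({arg}) -> {result}")
    return state

final = run_agent(AgentState(goal="summarize my notes"))
print(final.done, final.history)
```

The `max_steps` cap is a design choice worth keeping in any real loop: it stops a confused planner from acting forever.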

“OpenClaw isn’t a new foundation model. It’s an open-source orchestration layer—a daemon that lives on your hardware, connects to an LLM, and executes workflows across your messaging apps, local file system, and the web.”

That’s the core, straight from the docs. Simple. Elegant. Deadly effective.

Here’s my hot take — the unique angle you’re not reading elsewhere: OpenClaw echoes the browser’s conquest in the ’90s. Back then, Netscape wasn’t just a viewer; it became the platform, spawning JavaScript empires. OpenClaw? It’s the agent browser for the AI era. A daemon kernel that tames “agentic lobsters” — wild, multi-tool AIs that’d otherwise thrash and forget. Bold prediction: In three years, this architecture powers 80% of production agents. Why? Because stateless chatbots are so 2023.

Why Developers Can’t Stop Raving About OpenClaw

Speed. Locality. Control. Pair it with a local model and it’s running agents on your M1 Mac without phoning home to Sam Altman every five seconds. No vendor lock-in. Fork it, tweak it, own it.

And the rise? Meteoric. GitHub trending for weeks. Twitter devs geeking out. Why now? LLMs got tool-calling down (OpenAI’s function calling, Anthropic’s tool use), but few projects nailed persistence. OpenClaw did. One daemon to rule them all.

But — em-dash alert — it’s not perfect. Early bugs with certain LLMs. Web tool flakiness on dynamic sites. Still alpha vibes. Yet that’s the beauty: open-source fixes fly in daily.

Is OpenClaw Safe for Your Local Machine?

Short answer: Mostly. Its daemon life means broad access to your files and apps, so sandbox it if paranoid. But for tinkerers? Gold. Run it against a local model and no cloud bills spike as your agent browses Wikipedia for hours.
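One way to act on the “sandbox it” advice is to confine file tools to a single workspace directory. A minimal sketch, assuming a hypothetical `safe_path` helper and workspace location; this is not an OpenClaw feature or API, and a container or a dedicated OS user would be a stronger boundary.

```python
from pathlib import Path

def safe_path(workspace: Path, requested: str) -> Path:
    """Resolve `requested` inside `workspace`, rejecting escapes like '../'."""
    root = workspace.resolve()
    candidate = (root / requested).resolve()
    # is_relative_to (Python 3.9+) is True only if candidate sits under root.
    if not candidate.is_relative_to(root):
        raise PermissionError(f"{requested!r} is outside the agent workspace")
    return candidate

workspace = Path("agent_workspace")  # hypothetical directory
print(safe_path(workspace, "notes/todo.txt"))
try:
    safe_path(workspace, "../../etc/passwd")
except PermissionError as err:
    print("blocked:", err)
```

Every file tool would route its path argument through a check like this before touching the disk.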

Think lobster: Claws for grabbing (tools), eyes for seeing (observation), persistent shell (memory). Untamed? Chaos. OpenClaw? Tamed beast, ready to fetch your coffee order via Slack while summarizing TPS reports.

Energy here is palpable. We’re not hyping corporate spin — Steinberger’s indie roots scream authenticity. OpenAI snagging him? Validation jackpot.

Can OpenClaw Replace Agents Like Auto-GPT?

Hell yes — for many. Auto-GPT’s cool, but clunky, ephemeral. OpenClaw’s daemon persists workflows across reboots. Imagine: Wake your Mac, agent’s still grinding on that research task from yesterday. Local LLMs keep it cheap, private.
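Persisting a workflow across reboots comes down to writing agent state to disk and reloading it at startup. A minimal sketch, assuming a hypothetical JSON state file and made-up field names; the article does not describe OpenClaw’s actual storage format.

```python
import json
import os
import tempfile
from pathlib import Path

def save_state(path: Path, state: dict) -> None:
    """Write to a temp file first so a crash mid-write cannot corrupt the state."""
    fd, tmp_name = tempfile.mkstemp(dir=str(path.parent), suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(state, tmp)
    os.replace(tmp_name, path)  # overwrites the target in one step (atomic on POSIX)

def load_state(path: Path) -> dict:
    """Return the saved state, or a fresh one on first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"goal": None, "completed_steps": []}

state_file = Path(tempfile.gettempdir()) / "agent_state_demo.json"  # hypothetical
state = load_state(state_file)
state["goal"] = "research task"
state["completed_steps"].append("collected sources")
save_state(state_file, state)

# After a restart, the agent picks up where it left off.
print(load_state(state_file))
```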

Deeper: Architecture lessons. Reflection loops help catch hallucination spirals before they compound. Tool registries make swapping browsers or email clients trivial. This scales to fleets: think home servers running agent swarms.
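A tool registry is the piece that makes backends swappable: callers ask for a capability by name, and whatever is registered under that name answers. A minimal sketch with hypothetical class and function names, not OpenClaw’s real interface.

```python
from typing import Callable, Dict

class ToolRegistry:
    """Maps a capability name (e.g. 'email') to whichever backend is registered."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., str]] = {}

    def register(self, name: str, fn: Callable[..., str]) -> None:
        self._tools[name] = fn  # re-registering a name swaps the backend

    def call(self, name: str, *args: str) -> str:
        if name not in self._tools:
            raise KeyError(f"no tool registered for {name!r}")
        return self._tools[name](*args)

# Two hypothetical email backends with the same signature.
def send_via_smtp(to: str, body: str) -> str:
    return f"smtp -> {to}: {body}"

def send_via_webmail(to: str, body: str) -> str:
    return f"webmail -> {to}: {body}"

registry = ToolRegistry()
registry.register("email", send_via_smtp)
print(registry.call("email", "boss@example.com", "status update"))

registry.register("email", send_via_webmail)  # swap without touching callers
print(registry.call("email", "boss@example.com", "status update"))
```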

Critique time: The “lobster” metaphor? Cute, but PR fluff. Real talk — it’s a reactive loop on steroids, kin to LangChain but daemon-native, hardware-hugging.

Picture this sprawl: You task it with “Plan my week.” It scans calendar (local), emails boss (SMTP tool), books flights (web API), updates Notion. All autonomous, stateful. Wonderstruck yet?

And the platform shift? Monumental. AI agents aren’t apps; they’re OS layers. OpenClaw proves desktops win this round — edge computing crushes latency.

Why This Matters for the Agentic Future

Forget hype. Patterns here: Persistence first. Local-first. Orchestration over raw models. Every big player — xAI, Anthropic — will daemon-ify.

Unique insight redux: Like Unix pipes birthed shell scripting, OpenClaw pipes tools into LLM brains. Historical parallel? Emacs — that extensible editor daemon — birthed org-mode empires. OpenClaw births agent empires.

So, devs: Clone it now. Users: Pray your tools integrate. Futurist me? Beaming. This tames the wild, makes AI live.

Frequently Asked Questions

What is OpenClaw and how does it work?

OpenClaw’s a local daemon that orchestrates AI agents using any LLM, handling tools like files, web, and apps with persistent memory.

Can I run OpenClaw on my laptop?

Yep. Mac and Linux are the primary targets. Install it as described in the project README, hook your LLM, and unleash.

Will OpenClaw work with GPT-4?

Absolutely, via the OpenAI API. Or go local with Llama 3 through Ollama for zero API cost.
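Switching between a cloud model and a local one can be as small as changing a URL, because Ollama exposes an OpenAI-compatible endpoint on `localhost:11434` by default. The sketch below only builds the request and sends nothing; `BACKENDS` and `build_request` are hypothetical names, and this shows the general shape rather than how OpenClaw itself is configured.

```python
import json

# Both endpoints accept the OpenAI chat-completions request format.
BACKENDS = {
    "openai": {"url": "https://api.openai.com/v1/chat/completions", "model": "gpt-4"},
    "ollama": {"url": "http://localhost:11434/v1/chat/completions", "model": "llama3"},
}

def build_request(backend: str, prompt: str, api_key: str = "") -> dict:
    """Return the URL, headers, and JSON body for one chat request (not sent)."""
    cfg = BACKENDS[backend]
    headers = {"Content-Type": "application/json"}
    if api_key:
        # The cloud API needs a key; a local Ollama server typically does not.
        headers["Authorization"] = f"Bearer {api_key}"
    body = {"model": cfg["model"], "messages": [{"role": "user", "content": prompt}]}
    return {"url": cfg["url"], "headers": headers, "body": json.dumps(body)}

req = build_request("ollama", "Summarize my notes")
print(req["url"])
```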