Bill hits inbox. $380. Gone.

Just like that, poof: a month of my RAG pipeline feasting on GPT-4o tokens. Fifty thousand requests a day, churning out summaries, classifications, extractions. Straightforward stuff. But at $2.50 per million input tokens? That’s not innovation; that’s a toll booth on the AI highway.

Zoom out. We’re in the midst of the great inference gold rush. OpenAI’s API? Fantastic, sure, like a Ferrari for grocery runs. But why pay supercar prices when a souped-up electric truck hauls the same load cheaper and faster? One developer made the switch. Replaced OpenAI entirely. The bill cratered 94%. And get this: same Python SDK. Zero rewrite.

The Great API Escape

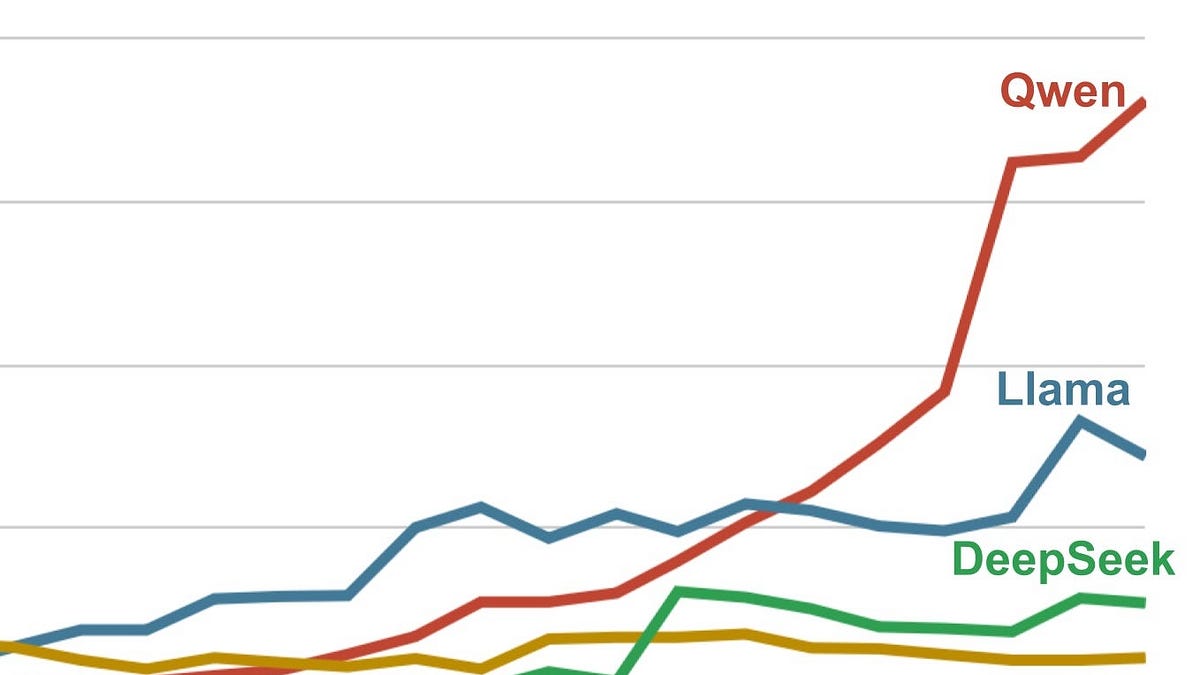

Imagine mainframes in the ’80s—hulking beasts, rented by the hour from IBM. Then PCs hit. Suddenly, compute’s dirt cheap, everywhere. That’s us now with AI inference. Open-weight models like Qwen3-32B aren’t toys; they’re the x86 chips of LLMs. Platforms like VoltageGPU serve ‘em via OpenAI-compatible APIs. Drop in the base_url, swap the model name. Done.

He laid it bare in a table—brutal honesty.

I was paying OpenAI ~$380/month for a RAG pipeline doing ~50K requests/day. Most of them were straightforward: summarize this, extract that, classify this ticket.

Ticket classification? 30K hits daily on GPT-4o, 800 tokens each. Summaries? 15K at 2K tokens. Extraction? GPT-4o-mini for 5K. Total: $380, mostly inputs.

But here’s the spark. Those tasks? They don’t need a frontier model with a rumored trillion-plus params. A 32B model crushes ‘em. Tested. Proven.

Can a 32B Model Hang with GPT-4o?

Short answer: Hell yes, for 90% of workloads.

He benchmarked 1,000 tickets. Six categories. GPT-4o: 94.2% accuracy, 340ms latency. Qwen3-32B: 92.8%, 280ms. Cost per request? $0.0020 vs $0.00012. Wrong on 58 tickets vs 72. That’s a 1.4-point dip for 94% savings.
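Those numbers can be checked on the back of an envelope. A minimal sketch, assuming every ticket is 800 tokens (the figure from the workload breakdown) and that all of them are billed at the quoted rates; the helper names are illustrative, not the developer’s code:

```python
# Figures quoted in the article. Treating all 800 tokens as billable at the
# listed per-million rate is a simplifying assumption (output tokens ignored).
TOKENS_PER_TICKET = 800
GPT4O_PRICE_PER_M = 2.50   # USD per million input tokens
QWEN_PRICE_PER_M = 0.15    # USD per million tokens
SAMPLE_SIZE = 1_000        # tickets in the benchmark


def cost_per_request(tokens: int, price_per_million: float) -> float:
    """Cost in USD of one request with the given token count."""
    return tokens * price_per_million / 1_000_000


def misclassified(sample_size: int, accuracy_pct: float) -> int:
    """Number of wrong answers implied by an accuracy percentage."""
    return round(sample_size * (100 - accuracy_pct) / 100)


gpt4o_cost = cost_per_request(TOKENS_PER_TICKET, GPT4O_PRICE_PER_M)
qwen_cost = cost_per_request(TOKENS_PER_TICKET, QWEN_PRICE_PER_M)
savings_pct = (1 - qwen_cost / gpt4o_cost) * 100

print(f"GPT-4o:    ${gpt4o_cost:.5f} per ticket, {misclassified(SAMPLE_SIZE, 94.2)} wrong")
print(f"Qwen3-32B: ${qwen_cost:.5f} per ticket, {misclassified(SAMPLE_SIZE, 92.8)} wrong")
print(f"Savings:   {savings_pct:.0f}%")
```

At 800 tokens a ticket, the quoted prices work out to $0.0020 and $0.00012 per ticket, which is where the 94% comes from.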

Look. Edge cases matter in chatbots chasing perfection. But classification? Extraction? Summarization in a SaaS? Good enough is gold. And faster? Your users won’t complain about 60ms shaved off.

Code’s poetry. Same client:

from openai import OpenAI
client = OpenAI(base_url="https://api.voltagegpu.com/v1", api_key="vgpu_YOUR_KEY")
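What a full call might look like once the client points at the new endpoint: a sketch, not the developer’s actual pipeline. The prompt wording, the `classify_ticket` helper, the sample categories and the `VOLTAGEGPU_API_KEY` environment variable are all assumptions for illustration; only the base URL and model name come from the article.

```python
import os

BASE_URL = "https://api.voltagegpu.com/v1"  # endpoint quoted in the article
MODEL = "Qwen/Qwen3-32B"                    # model name quoted in the article


def classify_ticket(client, ticket_text: str, categories: list[str]) -> str:
    """Ask the model for exactly one category label and return it stripped."""
    response = client.chat.completions.create(
        model=MODEL,
        messages=[
            {
                "role": "system",
                "content": "Classify the support ticket. Reply with exactly one of: "
                + ", ".join(categories),
            },
            {"role": "user", "content": ticket_text},
        ],
        temperature=0,  # keep labels as repeatable as possible
    )
    return response.choices[0].message.content.strip()


def main() -> None:
    # Imported here so the helper above can be exercised without the SDK installed.
    from openai import OpenAI  # pip install openai

    # VOLTAGEGPU_API_KEY is a hypothetical variable name; use whatever you store the key under.
    client = OpenAI(base_url=BASE_URL, api_key=os.environ["VOLTAGEGPU_API_KEY"])
    label = classify_ticket(
        client, "I was charged twice this month.", ["billing", "bug", "account"]
    )
    print(label)
```

Call `main()` with a real key to hit the live endpoint. Nothing in `classify_ticket` knows which provider sits behind the client, which is the whole point.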

Then route smartly. Classify/extract to Qwen3-32B ($0.15/M in/out). Summaries to Qwen2.5-72B. Reasoning/code to DeepSeek-V3. Ninety percent cheap lane, ten percent premium.

Result? 1.5M requests/month, same volume. Bill: $22. Annual haul: $4,300 saved. Two lines changed.
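The savings math, spelled out. A tiny sketch using only the two bill figures quoted here; the variable names are illustrative:

```python
# Monthly bills quoted in the article, in USD.
BILL_BEFORE = 380.0
BILL_AFTER = 22.0

monthly_saving = BILL_BEFORE - BILL_AFTER
annual_saving = monthly_saving * 12
reduction_pct = monthly_saving / BILL_BEFORE * 100

print(f"Saved per month: ${monthly_saving:,.0f}")
print(f"Saved per year:  ${annual_saving:,.0f}")
print(f"Bill reduction:  {reduction_pct:.1f}%")
```

That is $358 a month, just under $4,300 a year, and a cut of roughly 94%.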

Why VoltageGPU? (And Cheaper Cousins)

VoltageGPU’s no secret sauce—just GPUs humming open models. Catalog? 150+. Qwen3-32B at $0.15/M. DeepSeek-V3 $0.35. Llama-3.3-70B $0.52. Streaming? Check. Images via FLUX.1-dev at $0.025/pop? Cyberpunk server rooms on demand.

That’s it. Same SDK, same response format, same error handling. I changed base_url and model. Everything else stayed identical.

LangChain, LlamaIndex? Plug and play. But caveats: it’s a smaller outfit, function calling isn’t available on every model (DeepSeek has it), and enterprise polish? Nah. This is the indie SaaS sweet spot.

My bold call — and here’s the insight the original misses: This is WebAssembly for AI. Remember WASM? Standardized runtime, any language runs anywhere. OpenAI-compatible APIs? That’s WASM for inference. Lock-in crumbles. Models commoditize like cloud VMs did post-AWS. Prediction: By 2026, 70% of non-frontier inference routes open-weight. OpenAI? They’ll pivot to agents, not token mills.

The Router That Pays the Bills

Don’t blast everything at one model. Waste.

His router:

def route_request(task_type: str, content: str) -> str:
    # Map each task type to the model that should serve it
    model_map = {
        "classify": "Qwen/Qwen3-32B",
        # etc.
    }
    return model_map[task_type]

Ninety percent Qwen3. Ten percent DeepSeek. Costs plummet.
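A fuller take on that router might look like the sketch below. The task-to-model split follows the lanes described earlier (classify and extract on Qwen3-32B, summaries on Qwen2.5-72B, reasoning and code on DeepSeek-V3). Only `Qwen/Qwen3-32B` is a model ID taken from the article; the other two IDs and the fallback behavior are assumptions, so check the provider’s catalog before copying them.

```python
# Task type -> model ID. Only "Qwen/Qwen3-32B" is quoted in the article;
# the other two IDs are guesses at the catalog names and may differ.
MODEL_MAP = {
    "classify": "Qwen/Qwen3-32B",
    "extract": "Qwen/Qwen3-32B",
    "summarize": "Qwen/Qwen2.5-72B-Instruct",  # assumed ID
    "reason": "deepseek-ai/DeepSeek-V3",       # assumed ID
    "code": "deepseek-ai/DeepSeek-V3",         # assumed ID
}

# Assumption: anything unrecognized takes the cheap lane rather than failing.
DEFAULT_MODEL = "Qwen/Qwen3-32B"


def route_request(task_type: str, content: str) -> str:
    """Return the model ID that should handle this request.

    `content` is unused here and kept only to match the original signature;
    a real router might inspect it (length, language) before deciding.
    """
    return MODEL_MAP.get(task_type.strip().lower(), DEFAULT_MODEL)


for task in ("classify", "summarize", "code", "translate"):
    print(f"{task:10s} -> {route_request(task, '')}")
```

Falling back to the cheap model is one choice among several. Raising on an unknown task type is the stricter option if a misrouted request would be costly.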

Before: 1.2B tokens at $2.50+. After: blended $0.15-0.35. Math sings.

And streaming? Identical chunks. No porting hell.
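Streaming through the OpenAI SDK means passing `stream=True` and reading `choices[0].delta.content` off each chunk. A hedged sketch: `collect_stream` and `stream_summary` are illustrative helpers, the prompt is made up, and the default model ID is an assumed catalog name.

```python
from typing import Iterable


def collect_stream(chunks: Iterable) -> str:
    """Print streamed text as it arrives and return the assembled string."""
    parts = []
    for chunk in chunks:
        if not chunk.choices:  # some chunks carry no choices (e.g. usage-only)
            continue
        piece = chunk.choices[0].delta.content
        if piece:  # content can be None on role or finish chunks
            parts.append(piece)
            print(piece, end="", flush=True)
    print()
    return "".join(parts)


def stream_summary(client, text: str, model: str = "Qwen/Qwen2.5-72B-Instruct") -> str:
    """Stream a summary from any OpenAI-compatible client.

    The default model ID is an assumption; substitute the provider's exact name.
    """
    stream = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": f"Summarize in two sentences:\n\n{text}"}],
        stream=True,
    )
    return collect_stream(stream)
```

Because the chunk-reading loop only touches the response shape, the same code should work against any endpoint that honors the OpenAI streaming format.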

But Wait — Is This the Future?

Absolutely. AI’s platform shift isn’t ChatGPT wrappers. It’s inference as utility. Like electricity post-Edison—grids everywhere, prices crash.

He made the jump after a $500 spike. VoltageGPU: $5 free credit (33M tokens at the Qwen3-32B rate!). Sign up, grab a key, swap the URL. Boom.

Skeptic hat: Corporate spin calls open models “good enough.” Nah. They’re better for cost-per-insight. OpenAI’s moat? Rate limits, uptime. But for RAG pipelines? Open wins.

Tradeoffs? Model names verbose (Qwen/Qwen3-32B). Tool use spotty. Scale to bank-level? Maybe not yet. But for devs building real stuff? Revolution.

Quick Wins for Your Stack

- Audit your API calls. What’s GPT-4o-overkill?

- Test Qwen3 or Llama3.1-70B. Accuracy holds.

- Router it. Save 80-95%.

- Streaming, images: all there.
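Before trusting “accuracy holds” on your own data, score the candidate model against a labeled sample, the way the 1,000-ticket benchmark did. A minimal sketch: `evaluate`, the toy sample and the keyword-based stand-in predictor are all hypothetical. In practice `predict` would wrap a call to the model under test.

```python
from typing import Callable, Iterable


def evaluate(predict: Callable[[str], str], labeled: Iterable[tuple[str, str]]) -> dict:
    """Score a predictor against (text, expected_label) pairs."""
    total = correct = 0
    misses = []
    for text, expected in labeled:
        got = predict(text)
        total += 1
        if got == expected:
            correct += 1
        else:
            misses.append((text, expected, got))  # keep failures for review
    accuracy = correct / total if total else 0.0
    return {"total": total, "accuracy": accuracy, "misses": misses}


# Toy stand-in for a model call, only so the sketch runs offline.
def keyword_predict(text: str) -> str:
    lowered = text.lower()
    if "charge" in lowered:
        return "billing"
    if "crash" in lowered:
        return "bug"
    return "other"


sample = [
    ("Refund my double charge", "billing"),
    ("App crashes on login", "bug"),
    ("How do I export my data?", "how-to"),
]

report = evaluate(keyword_predict, sample)
print(f"accuracy: {report['accuracy']:.1%} on {report['total']} examples")
for text, expected, got in report["misses"]:
    print(f"  missed: {text!r} expected {expected}, got {got}")
```

Run the same sample through both models and compare the miss lists, not just the headline percentage. The misses show which requests belong in the premium lane.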

Energy here? Electric. This isn’t tweak; it’s tectonic. Open-weight APIs turn AI from luxury to Lego bricks. Build wild.

Frequently Asked Questions

How do I replace OpenAI API with cheaper alternatives?

Swap base_url to VoltageGPU or a similar provider and pick an open model like Qwen3-32B. Same SDK, zero code rewrite. Start with their $5 credit.

Do open-weight models match GPT-4o accuracy?

For ticket classification: 92.8% vs 94.2% in the 1,000-ticket benchmark, at about 94% lower cost. Edge cases? Route them to a pricier model like DeepSeek-V3.

What’s the cheapest OpenAI-compatible inference?

Of the options covered here, Qwen3-32B at $0.15/M tokens on VoltageGPU. It handles 90% of workloads and supports streaming.