Arcee’s punching way above its weight.

Look, I’ve covered enough Silicon Valley fairy tales to know when one’s worth a cheer — and this 26-person outfit dropping a 400-billion-parameter open source beast on a measly $20 million? That’s the kind of underdog story that gets my cynical blood pumping. Trinity Large Thinking, they call it, and CEO Mark McQuade brags it’s the most capable open-weight model ever from a non-Chinese shop. Bold claim. But here’s the real hook: in a world drowning in Chinese AI firepower and Big Tech gatekeepers, Arcee wants to hand Western companies a no-strings download-and-deploy alternative.

Why Bet on a Startup Over OpenAI’s Empire?

Companies freak out over Chinese models, not because they're weak (hell no, they're beasts), but because shipping your data to Beijing feels like handing the keys to Uncle Sam's nightmare neighbor. Arcee's pitch? Grab Trinity, tweak it in-house, and run it on your own servers, or ping their API if you're lazy. No subscriptions yanked mid-project, no rate limits screwing up your workflow.

Remember OpenClaw? That slick open source AI agent tool whose users swore by Anthropic's Claude for coding wizardry. Then bam: last week Anthropic flipped the script, saying your subscription won't cover it anymore, so pony up extra dough. (Creator Peter Steinberger decamped to OpenAI in February. Coincidence?) Arcee's grinning ear to ear: OpenRouter data shows Trinity is now a top pick among OpenClaw users. Stability. Freedom. Words Big Labs forgot.

Mark McQuade, Arcee's CEO, told TechCrunch it's the most capable open-weight model "ever released by a non-Chinese company."

Benchmarks? Solid, not world-beating. It trades punches with top open source rivals and lags Meta's Llama 4, but Llama 4 carries the licensing mess Meta slapped on it. Trinity ships under pure Apache 2.0, the open source gold standard: no gotchas.

But.

I've seen this movie before. Back in the '90s, Netscape built a browser empire on open principles, only for Microsoft to bundle IE and crush it. Here's my take: Arcee isn't just another model; it's the seed of a browser-war redux in AI. Tiny teams like this will splinter the market, forcing giants to open up or bleed users to customizable, on-prem alternatives. Prediction: by 2027, 40% of enterprise AI will run on open weights from startups like Arcee, not because they're perfect, but because control trumps convenience.

Does Trinity Large Thinking Actually Reason Like a Pro?

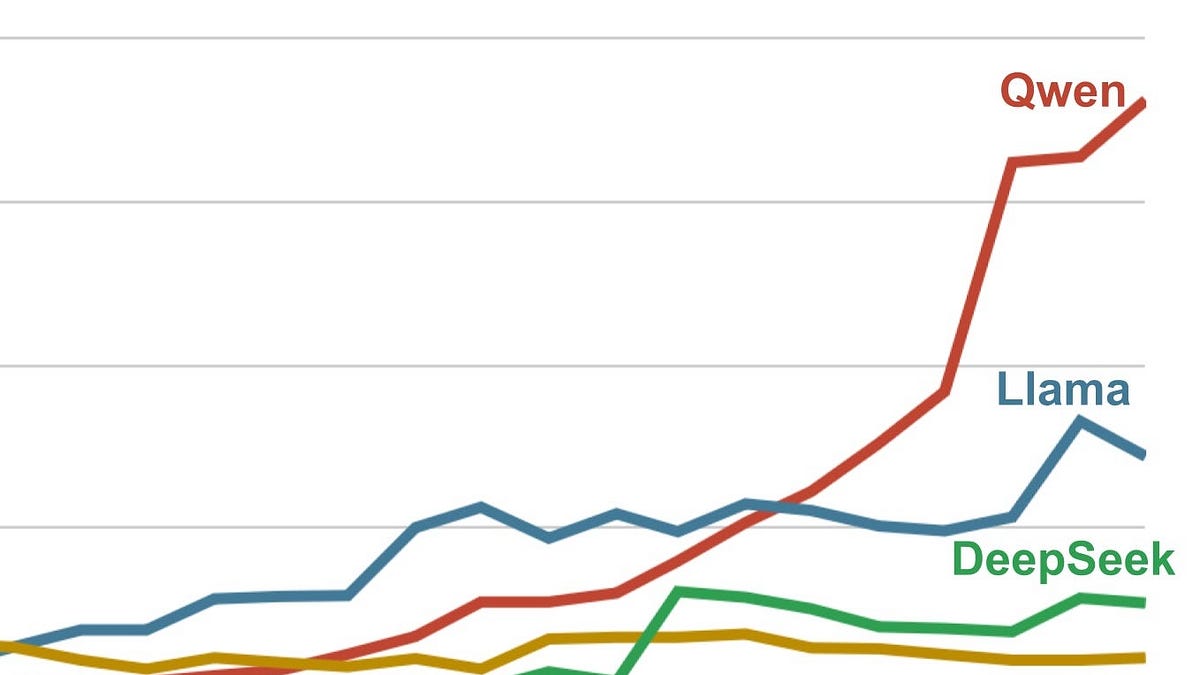

Reasoning models: buzzword bingo, right? Everyone slaps "thinking" on everything now. Arcee's Trinity lineup (small, medium, large) promises chain-of-thought magic without the black box. Tests show it holds its own on math, code, and logic benchmarks: think GSM8K and HumanEval scores neck-and-neck with Qwen or Llama 3.1 variants.

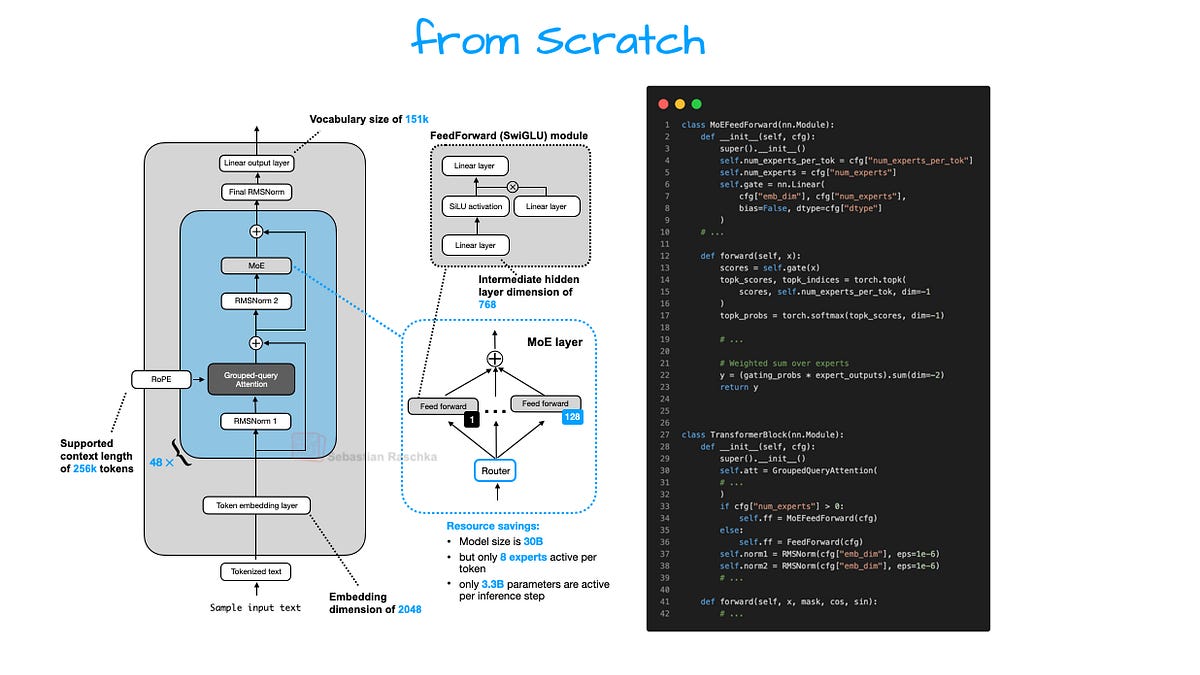

What sets it apart? That shoestring efficiency: $20M versus the billions OpenAI pours into its models. How? Smart data curation, I bet: not just hoovering up the web, but surgical mixes of synthetic reasoning traces. McQuade won't spill the recipe (trade secrets), but whispers of distilling from frontier closed models hint at the playbook. Cynic hat on: if it's truly open, why not publish the full recipe cards? Still, for devs tired of API roulette, this is catnip.

And the geopolitics? Oh boy. U.S. firms whisper about export controls and data sovereignty. Trinity's your firewall: American roots, verifiable weights, no CCP oversight. Chinese giants like DeepSeek drop fire for free, but after the TikTok scandals, would you bet your stack on them? Nah. Arcee's betting nationalism sells, and in boardrooms, it does.

Big Labs hate this.

Why? Control slippage. OpenAI tweaks prices, Anthropic ghosts tools, and Arcee's set-it-and-forget-it ethos erodes their moats. Meta tries "open," but its licenses scream "gotcha" (Llama 4's accept-or-else clause). Arcee? Pure Apache. Download today, iterate tomorrow. No velvet-glove vendor lock-in.

Deeper dive: building 400B params on peanuts demands wizardry. Distributed training hacks, probably, plus LoRA-heavy fine-tunes on Llama/Mistral bases. A team of 26? Lean like early Google, fat on talent. McQuade's ex-Apple and knows how to squeeze hardware. They likely slashed inference costs, which is key for edge deployments. Imagine shipping Trinity to factories and hospitals: low-latency reasoning without cloud round-trips.

Can Arcee Outlast the Hype Cycle?

Skepticism radar pings. Open source AI is littered with ghosts: once-hot models now gathering dust. Arcee's edge? Ecosystem buy-in. OpenClaw adoption suggests stickiness. Expect agent frameworks and RAG pipelines to plug in Trinity first. A cloud API? Smart hedge: monetize without closing doors.

Historical parallel: the Linux kernel. It started scrappy (Linus plus a dorm room) and now powers roughly 90% of the cloud. Arcee could be that for reasoning LLMs: fragmented forks evolving faster than monolithic closed labs. Bold call: if U.S. policy nudges in that direction (CHIPS Act vibes), expect DoD contracts to flow. Who makes money? Arcee, via APIs and services; you, via sovereignty.

Not flawless. It trails Claude 3.5 on nuance and hallucinates like its kin. But independence? Priceless.

Bottom line: Arcee's no silver bullet, but damn if it doesn't expose the farce. Big Tech preaches innovation while hoarding the keys. This tiny rebel says screw that: build your own.

Frequently Asked Questions

What is Arcee Trinity Large Thinking? A 400-billion-parameter open source LLM for reasoning tasks from a tiny U.S. startup, downloadable under Apache 2.0.

Is Arcee's model better than Chinese open source alternatives? Capability is comparable, but it carries far less geopolitical risk for Western firms, and on-prem control seals it.

Can I use Trinity for enterprise AI without Big Tech? Yes: fine-tune and self-host it on your own servers, or use Arcee's API. It beats subscription traps.