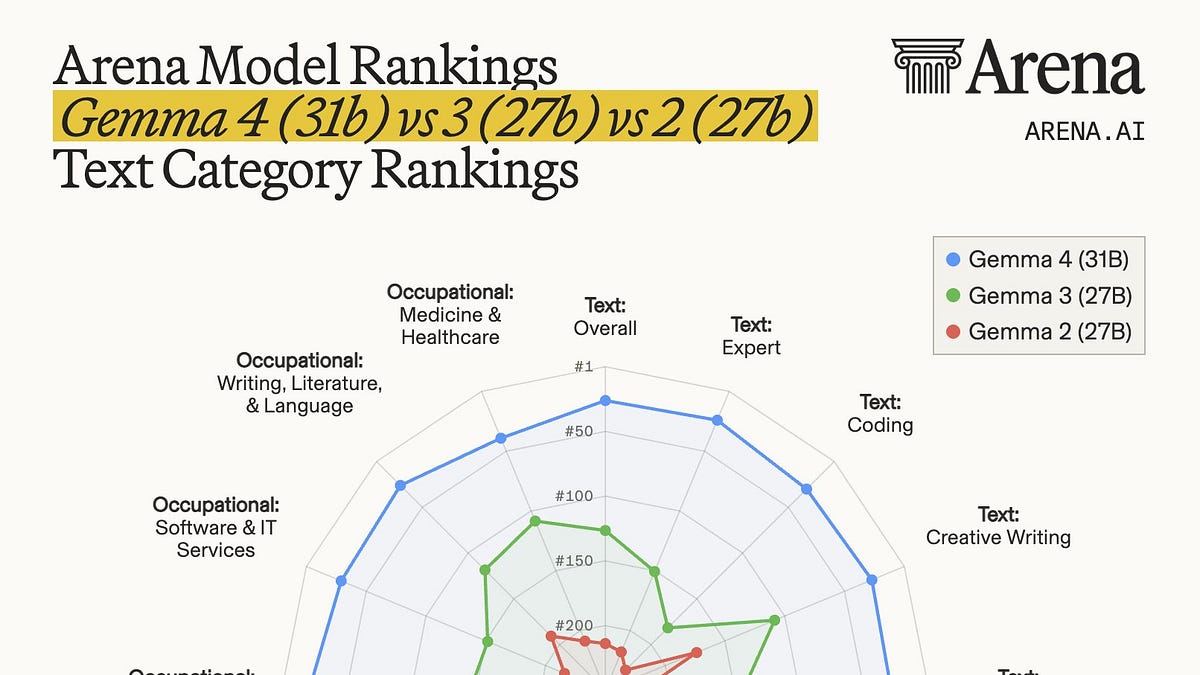

1,452 on Arena AI text. That’s Gemma 4’s 31B variant staring down the leaderboard, not flinching. Google DeepMind dropped this family mid-week, and suddenly the US has a credible open-weight contender again—Apache 2.0 licensed, from 2B edge tinies to a 31B dense flagship that fits on a single H100.

Zoom out: China’s been owning this space. Qwen’s MoE hordes, DeepSeek’s agentic sprawl—they’ve flooded Hugging Face with giants you can self-host without begging a lab for API keys. Gemma 4 doesn’t erase that lead. But here’s the shift: it drags a U.S. lab—Google, no less—back into the fray for folks who demand control, who shun cloud black boxes.

And it’s working. Over 400 million Gemma downloads already, 100,000 community tweaks. This gen? Built on Gemini 3 bones, but open. The architecture? Conservative as hell—hybrid sliding-window attention, Proportional RoPE for contexts up to 128k on big boys. No wild reinvention. The magic’s in the RL polish, the data feasts, the training hacks.

Why a Single H100 Changes Everything for Devs

Load the 31B. Fire it up. It chews through GPQA Diamond at 84.3%, AIME 2026 at 89.2%, LiveCodeBench v6 at 80%. The 26B MoE cousin trails a hair—1,441 Arena, 82.3% GPQA—but activates just 3.8B params per token (eight of 128 experts). Caveat: you still RAM the whole 25.2B beast. No free lunch on inference.

Google’s engineering flexes hard here. Configurable thinking mode. Native system prompts, tool-calling tokens. Text-image multimodal across the board; video-audio on edges. Docs? Gold. “Validate function names and arguments before execution,” they warn—straight talk devs crave.

Gemma 4 does not wipe that scoreboard clean. What it does do is bring a strong Apache 2.0 family from a U.S. lab back into the part of the market that actually wants to run models itself, on local hardware or within tighter enterprise boundaries.

That’s the original scribe nailing it. But let’s deep-dive the why: self-hosting’s shrinking, sure—Anthropic’s at $30B run-rate, 30x in 16 months. Enterprises cozy up to APIs now, privacy fears fading. Yet niches explode: edge on phones (Gemini Nano 4 base, 4x faster, 60% less battery), Raspberry Pi, Jetson Nano. Ecosystem? Day-zero on Ollama, vLLM, llama.cpp, MLX, NIM. Android-ready.

Is Gemma 4’s 31B Actually Toppling Qwen?

Benchmarks lie sometimes—until they don’t. Artificial Analysis pegs the 31B at 39 Intelligence Index, nipping Qwen 3.5 27B’s 42 heels, but using 2.5x fewer tokens (39M vs 98M). Agentics? Weak spot—Qwen edges it. But raw smarts? SciCode 43-40, GPQA tie at 86, HLE 23-22. The 26B MoE? Lags more on agents (32 vs Qwen 35B A3B’s 44). Punchline: 31B’s your dense king; MoE’s throughput play, not wizardry.

My take—the one you’ll not read elsewhere: this echoes TensorFlow’s 2015 open-source pivot. Google then flooded the world with tools, birthing an ecosystem while rivals scrambled. Gemma 4? Same playbook. Conservative stack screams reliability—enterprises hate MoE deployment gotchas. Prediction: by Q4, we’ll see Gemma-forked agents dominating private fleets, forcing Meta’s Llama and Mistral to sprint. US open-weights renaissance, incoming.

But hype check: Google’s PR spins ‘competitive.’ Nuanced truth—it’s no crown-snatcher, but architecture’s the tell. RL-heavy lifts over MoE bloat mean faster evals, stabler runs. That’s the underlying shift: quality data trumps param wars.

Small fries shine too. E2B/E4B for phones—512-token windows, multimodal. They’re not toys; they punch weights, priming on-device AI boom.

How Gemma 4 Rewires the Open Market

Self-host diehards? Shrinking pool, yeah. But they’re loud: defense contractors, air-gapped firms, tinkerers. Gemma slots perfect—Vertex AI, Google AI Edge, but forkable. Prompting guides? Surgical: summarize thoughts for long agents, strip traces from history. Tool lifecycle defined. It’s dev catnip.

China’s lead? Months of MoE families, agentic leaps. Gemma counters with polish, not size. And that license—Apache 2.0—unlocks commercial forks sans drama.

Broader ripple: Anthropic’s $30B signals API comfort. But Gemma says, “Or don’t.” Hybrid future—API for scale, open for sovereignty.

Unique edge: Google’s past closed-garden sins (remember Bard flops?) make this redemption arc. They’re betting open wins talent, forks, scrutiny. Smart.

🧬 Related Insights

- Read more: AWS Frontier Agents: Autonomous Saviors or Expensive Hype?

- Read more: StruQ and SecAlign Crush Prompt Injection Attacks to Near-Zero

Frequently Asked Questions

What is Gemma 4 and its variants?

Google’s open-weight family: 2B/4B edges for devices, 26B MoE for throughput, 31B dense flagship. Apache 2.0, multimodal, tool-calling native.

Does Gemma 4 beat Chinese open models like Qwen?

31B ties or edges on reasoning (GPQA 86=86), lags slightly on agents; uses fewer tokens overall. Strong US contender, not undisputed champ.

Can I run Gemma 4 on consumer hardware?

Yes—edges on Pi/Nano/phones; 26B/31B on single H100 or workstations. Full ecosystem support via Ollama, vLLM, etc.