Picture this: open-weight AI’s been gasping lately. Sudden exits at the Allen Institute, GPT-OSS stuck in purgatory—folks whispered that American open models might fade into Europe’s shadow, or worse, Meta’s solo sprint. But Google DeepMind? They just unleashed Gemma 4, the best small multimodal open models out there, smashing Gemma 3 across every benchmark. Ties for world’s top spot with monsters like Kimi K2.5 (744B params) using a measly 31B dense. Changes everything.

And here’s the kicker—it’s Apache 2.0 licensed now. No more sketchy restrictions. Native video, images (variable res, even), OCR that actually works, chart parsing like a pro. E2B and E4B variants? They eat audio too, for speech on the edge. On-device dreams just got real.

What Everyone Expected (And Why They Were Wrong)

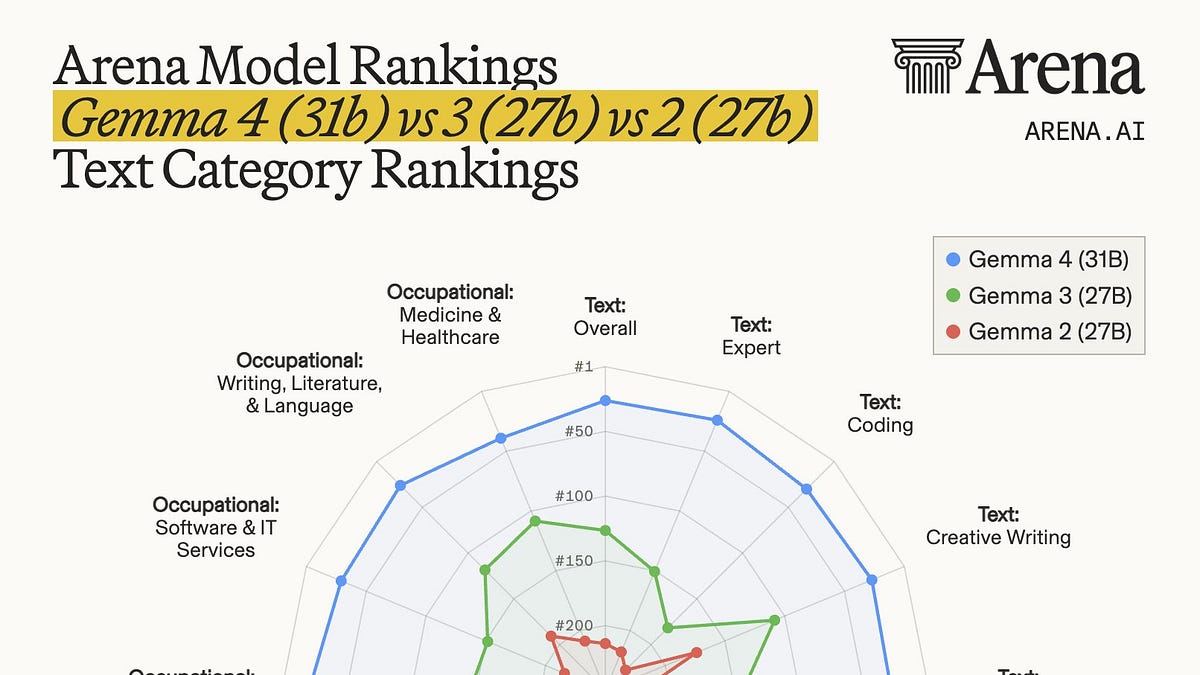

Expectations tanked. Post-Gemma 3, hype cooled—downloads hit 400M, sure, but where’s the leap? Community buzzed about ecosystem gaps, licensing gripes. Then bam: 31B dense hits #3 on Arena open leaderboard (#27 overall), 26B MoE (A4B) at #6. GPQA Diamond? 85.7%. Outperforms 20x bigger rivals on token efficiency.

“Gemma 4 is Google’s biggest open-weight licensing + capability jump in a year: explicitly positioned for reasoning + agentic workflows and local/edge deployment, now under a commercially permissive Apache 2.0 license.”

That’s from the AI Twitter recap—spot on. Jeff Dean chimed in with adoption stats, framing it for agents and edge. Day-zero support everywhere: llama.cpp, Ollama, vLLM, LM Studio. Brew install, fire up llama-server—300 t/s on M2 Ultra with video? Wild.

Look, benchmarks can lie (preference rankings? Gamable as hell). But GPQA, AIME? Hard benches show real jumps. Raschka’s cautious take: measured progress, no hype overdose.

Why Does Gemma 4’s Architecture Scream Efficiency Hacker?

Not your grandpa’s transformer. Per-layer embeddings on small fries. No explicit attention scale—likely baked into QK norms. QK norm + V norm. Shared K/V on big boys, aggressive KV cache sharing on tinies. Sliding windows at 512/1024. No sinks. Softcapping. Partial-dimension RoPE, split thetas for local/global layers.

Baseten nails it: alternative attention, proportional RoPE, PLE, KV sharing, native vision aspect ratios, tight audio frame windows. Norpadon calls it “very much not a standard transformer.”

This isn’t random. It’s surgical—hybrid attention for multimodal without bloating. MoE layering (26B A4B activates ~4B) slashes compute. Edge models E4B/E2B? Tailored for mobile/IoT, long context to 256K on heavies. Function calling, JSON structured outputs baked in. They’re chasing agentic flows, not chat fluff.

My unique angle? This echoes GPT-2’s era—when OpenAI open-sourced to bootstrap the field, forcing everyone to level up. Gemma 4’s no charity; it’s Google reclaiming open leadership post-Llama frenzy. Bold prediction: these edge beasts underpin Apple’s New Siri deal. Why else the video/audio push right now? On-device multimodal, privacy-first—perfect iPhone bait.

But skepticism check. Arena’s ordinal ranks exaggerate (it’s #3 open, not undisputed king). Smaller frame windows for audio? Tradeoff for speed, maybe skimps on long speeches. Still, concrete perf anecdotes—RTX 4090 throughput, WebGPU browser runs—prove it’s no paper tiger.

How Gemma 4 Reshapes the Edge AI Battlefield

Ecosystem lit up day zero. @_philschmid, @UnslothAI dropping local run guides. @ggerganov: llama.cpp WebUI, realtime video at 300 t/s (caveat: prompt recitation boosts it). @julien_c’s brew one-liner went viral.

Why care? Developers starved for multimodal open weights that don’t guzzle GPUs. Gemma 4 delivers: run on M2, 4090, even browser. Ties 1T-param giants with 31B. That’s architectural sorcery—MoE sparsity, cache tricks paying off.

Corporate spin? Google touts it for “reasoning + agentic workflows.” Fair, but they’re late to multimodal open party (Llama 3.2 beat ‘em). This feels like catch-up with steroids. PR win, though—400M Gemma 3 downloads primed the pump.

Wander a sec: imagine devs fine-tuning E2B for IoT vision—drones spotting defects, phones transcribing calls offline. Or agents chaining vision-to-JSON-to-action. Shifts power from cloud barons to edge tinkerers.

Is Gemma 4 the Open Model We’ve Been Waiting For?

Short answer: damn close. Licensing flip to Apache? Huge for enterprise. Multimodal native, no adapters? Chef’s kiss. But watch the games—leaderboards love tricks.

Historical parallel: like BLOOM’s flop taught us scale-alone sucks, Gemma 4 proves smart arch > raw FLOPs. Prediction: by Q3, we’ll see 1K+ Gemma 4 variants, powering half the new edge apps.

Critique time—Google’s not pure open (Gemini stays closed). This? Strategic gift to starve rivals’ data moats. Smart.

Edge cases shine: OCR on wonky scans, charts to code. Audio? Speech rec without clouds. Ties K2.5/Z.ai GLM-5? Underdog victory.

One punchy truth.

It runs locally—fast.

🧬 Related Insights

- Read more: DeepSeek V4 Unleashed: China’s Sparse Attention Revolution Hits Now

- Read more: Gemini Deep Think Cracks Stubborn Math Knots – Google’s Hype Meets Reality

Frequently Asked Questions

What is Gemma 4 and how does it differ from Gemma 3?

Gemma 4’s a family of open multimodal models (31B dense, 26B MoE, E4B/E2B edge) under Apache 2.0—leaps Gemma 3 in benches, adds native video/audio, long context, agent tools.

Can I run Gemma 4 on my laptop or phone?

Yep—llama.cpp/Ollama day-zero support. 300 t/s video on M2 Ultra; E2B/E4B for mobile/IoT. WebGPU even browsers it.

Does Gemma 4 beat closed models like GPT-4o?

Ties top opens (31B vs. 744B/1T), #27 overall Arena. GPQA 85.7% crushes many, but closed giants edge reasoning—efficiency king here.