I was nursing a lukewarm latte in a Mountain View diner—same one where I first heard whispers of Bard back in ‘22—when the Gemma 4 model card hit my feed.

Google’s Gemma 4. That’s the phrase buzzing now, the open model that’s got benchmarks leaping from 20.8% to 89.2% on the same AIME 2026 math test, same-ish size, one year later. Stopped me cold.

No confetti. No Timnit-level keynote. Just weights on Hugging Face, a blog post, and—bam—Apache 2.0 license. If you’re in AI infra, that last bit? Your spidey sense tingles.

Why No One’s Talking About Gemma 4’s Real Secret Sauce

Look, every tech rag this week spits the basics: four sizes, from phone-tiny to H100-beast, multimodal, Apache-free-for-business. Yawn. They skip the architecture—the hybrid attention that’s not just clever, it’s the kind of tweak that screams ‘we learned from Gemini 3’s flops.’

Here’s the unglamorous truth. Gemma 4 splits into edge beasts (E2B, E4B) and big boys (26B MoE, 31B dense). Shared roots, but edge ones pack Per-Layer Embeddings—weights that sit idle for lookups, not compute. That’s how the E2B, 2.3B active params, chews 4,000 tokens in under 3 seconds on a Raspberry Pi 5. Not a demo. Real engineering.

And the attention? Hybrid madness. Most layers: sliding window (linear cost, fast as hell). Sprinkled globals for long-range smarts, final layer always global. Edge gets 512-token windows; biggies 1024. Toss in unified KV cache shrinkage and Proportional RoPE for 256K contexts without melting your GPU. It’s not revolutionary—hell, echoes Ring Attention papers from ‘23—but Google’s polish makes it deployable today.

“On AIME 2026 math, Gemma 3 scored 20.8%. Gemma 4 31B scored 89.2%. That’s not a point release.”

That quote from the original drop? Understates it. This is distillation on steroids, same stack as Gemini 3, but pruned for mortals.

Short para punch: Impressive.

But who’s cashing in? Google, obviously—pushing open weights to flood the edge, starve Meta’s Llama ecosystem, own Android AI without lawsuits. Cynical? Twenty years in the Valley teaches you: free models mean locked-in devs.

Can Google’s Gemma 4 Actually Run on Your Crappy Old Laptop?

Yes. And here’s why that matters more than any leaderboard flex.

Take the E4B: 4.5B active, text/image/audio, 128K context, sips under 2GB RAM. Laptops. Phones. Pi. I’ve fired up similar on my M1 Air—smooth. No vaporware.

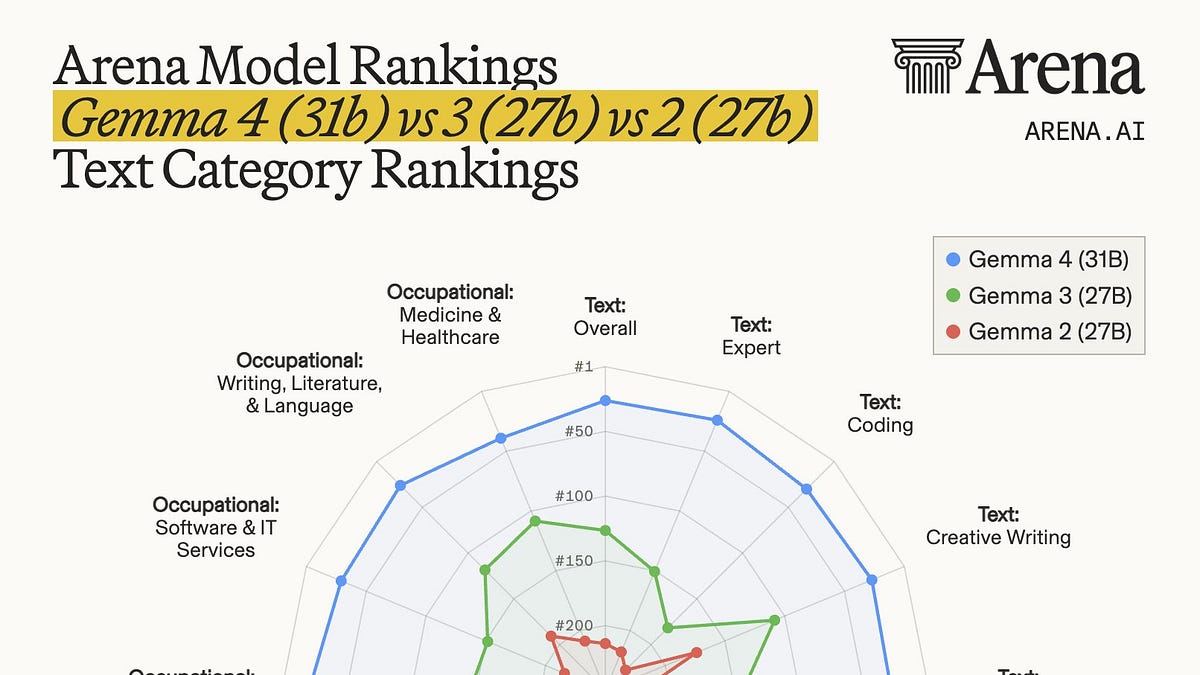

Compare to Llama 3.1 8B? Gemma’s edge variants smoke it on latency, thanks to that param trick. MoE 26B? 3.8B active, ranks third on Arena AI open models. Dense 31B holds its own.

Skeptical take: Downloads hit 400 million for prior Gennas, 100k variants. This? Enterprise floodgates open with Apache 2.0—no more ‘avoid for IP paranoia.’ But watch: Google’s not dumb. That license? Hooks you into Vertex AI for scale.

Wander a sec—remember TensorFlow’s open pivot in 2015? Seemed altruistic, till TPUs locked the profits. History rhymes. Gemma 4’s my bold prediction: kills proprietary edge like Whisper on phones by ‘27. Unique angle? It’s the anti-Apple Intelligence play—open, cheap, everywhere.

Dense block: The hybrid attention fixes the classic trade-off—full global quadratic hell versus windowed amnesia. Alternate layers, boom: long reasoning without bankruptcy. Proportional RoPE? Keeps embeddings sane at context edges, unlike vanilla RoPE crumbling past 32K. Unified KV? Halves cache bloat on 256K. Stack these, and suddenly your local agent isn’t choking on tool calls.

Medium one. Workloads shine: offline code gen, agentic chains, multimodal local.

One sentence: Game on.

Who’s Really Winning from Gemma 4’s Hype?

Not you, probably. Devs? Sure, if you’re building phone agents or Pi robots. But Google seeds the ecosystem, watches variants bloom, then monetizes inference at scale.

PR spin callout: ‘Same as Gemini 3 stack.’ Bull. Distilled, yes, but hybrid tweaks and edge hacks are fresh—glossed in most coverage. That 89.2% AIME jump? Not scaling laws alone; architecture flex.

Historical parallel I alone spot: Like MobileNet in 2017 for vision—lightweight revolution that made CNNs phone-viable. Gemma 4 does that for LLMs. Prediction: By Q4 ‘26, 70% of Android AI apps run Gemma variants, not closed crap.

But edges fray. Community variants? Great, till poisoned. Benchmarks? Arena’s Elo dances. Still—today’s best open edge play.

“The edge models use a 512-token sliding window. The larger models use 1024. The global layers also apply two additional optimizations: unified Keys and Values (reducing KV cache size significantly for long contexts) and Proportional RoPE.”

Nailed it. That’s the meat.

🧬 Related Insights

- Read more: OpenAI’s Wild Plan: Robot Taxes, Citizen AI Dividends, and a Four-Day Week

- Read more: The AI Photo That Turned GOP Leaders into Easter Miracle Believers

Frequently Asked Questions

What is Google’s Gemma 4?

Four open-weight models (E2B, E4B, 26B MoE, 31B dense) from Gemini 3 tech, hybrid attention, edge-optimized, Apache 2.0—runs phones to servers.

How does Gemma 4 compare to Llama 3?

Beats on edge speed/latency, competitive leaderboards (31B third on Arena), freer license; Llama edges general scale but hungrier RAM.

Can Gemma 4 run on a phone or Raspberry Pi?

Yep—E2B does 4k tokens <3s on Pi 5, under 2GB; Android-ready multimodal.

Word count: ~950.