What happens when Google finally admits that locking AI behind proprietary gates won’t cut it anymore?

Gemma 4. That’s Google’s answer — four new open-weight models, announced today, optimized for local hardware and freshly licensed under Apache 2.0. No more custom Gemma terms that devs grumbled about for years. It’s a pivot, sharp and overdue, toward true openness in the Gemma 4 era.

Look, Google’s Gemini lineup? Killer, sure — but you’re dancing to their tune, quotas and all. Gemma’s been the scrappy open alternative since day one. Gemma 3? Solid, but creaky now, over a year old. Enter Gemma 4: 26B Mixture of Experts, 31B Dense, plus Effective 2B and 4B for the tinier stuff. All built to hum on your machine, not some distant server farm.

How Does Gemma 4 Squeeze Power into Pixels?

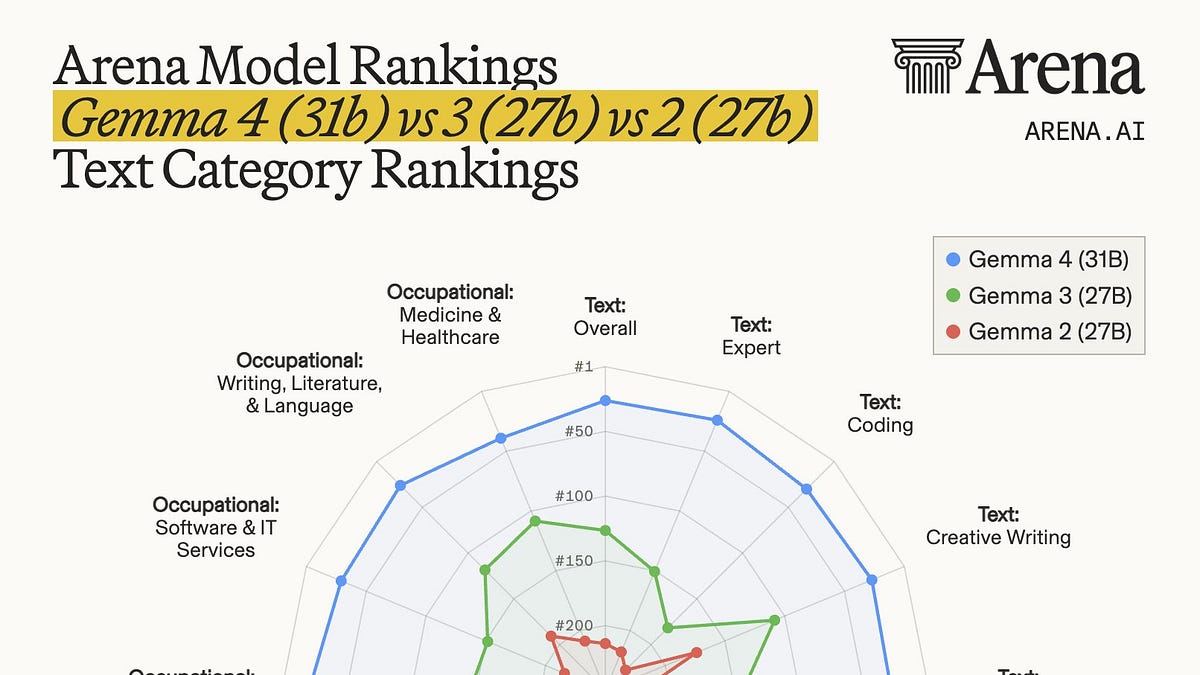

Take the big boys first. That 26B MoE variant? Activates just 3.8 billion parameters during inference — a sly architectural trick that blasts tokens-per-second way past dense rivals of similar size. Google claims it’ll debut at #3 on the Arena leaderboard for open models, nipping at GLM-5 and Kimi 2.5 heels despite being a fraction of their heft. Cheaper to run? Absolutely. On a single 80GB H100 — yeah, $20k territory — unquantized in bfloat16. Quantize it, though, and consumer GPUs get in the game.

The 31B Dense? Trades some speed for raw quality. Fine-tune it for your niche, and watch it shine. But here’s the real juice: latency slashed for that local edge. No cloud lag. Just pure, on-device inference.

Then — the mobile magic. Effective 2B (E2B) and 4B (E4B). Pixel team huddled with Qualcomm, MediaTek. Result? Models slurping minimal memory, battery. “Near-zero latency,” Google boasts. Raspberry Pi? Jetson Nano? Your smartphone? Check, check, check. Beats Gemma 3 on efficiency, hands down.

Google says the Pixel team worked closely with Qualcomm and MediaTek to optimize these models for devices like smartphones, Raspberry Pi, and Jetson Nano. Not only do they use less memory and battery than Gemma 3, but Google also touts “near-zero latency” this time around.

Why Ditch the Custom License for Apache 2.0?

Devs hated the old Gemma terms — too restrictive, they said. Google listened. Apache 2.0? Industry gold standard. Permissive, no copyleft baggage. Fork it, tweak it, commercialize without the handcuffs. This isn’t charity; it’s strategy. Open models build ecosystems. Llama did it for Meta. Now Google wants in, fueling a moat around their full stack — from silicon to software.

But dig deeper. Remember the browser wars? Netscape open-sourced Mozilla, birthing Firefox, forcing IE to adapt. My unique take: Gemma 4 echoes that. Google, once the closed-garden king, now seeds the edge AI soil. Prediction? By 2026, 40% of consumer AI inference happens locally, Gemma variants everywhere, starving cloud-only players.

Skeptical? Fair. Benchmarks hype is cheap. Arena #3 sounds hot, but real-world? Depends on your fine-tune. And those H100s? Not exactly democratized. Still, the small models — E2B on a phone — that’s the disruptor. Imagine on-device copilots that don’t leak your data.

Can Gemma 4 Outrun the Open AI Pack on Your Rig?

Short answer: On local hardware, yeah — potentially. Gemma 3 dust-binned. These crush it on efficiency metrics. MoE sparsity? Genius for throughput. Mobile opts? Tailored quantization keeps VRAM low, inference snappy.

Architectural shift here screams intent: mixture-of-experts scaling without the full-param penalty. Why? Edge devices crave it. Cloud’s infinite RAM? Fading advantage as silicon shrinks. Google’s betting on distributed intelligence — your phone as the new brain.

Critique time. PR spin calls them “most capable local models.” Bold. But smaller than leaders, so quality ceilings loom. Fine-tuning bridges that, though. Devs, your move.

And the license flip? Smart countermove to Hugging Face hordes. Apache pulls talent, models, back to Google orbit indirectly.

Here’s the thing — this isn’t just models. It’s a manifesto. Google saw Mistral, Phi-3 eating their open lunch. Response? Double down, but smarter: hardware-tuned, license-liberated.

Wander a sec: think Jetson Nano projects. Hobbyists rigging robot arms, now with Gemma 4 smarts. Or enterprise? Fine-tuned 31B for secure, air-gapped ops. Possibilities explode.

One punchy caveat. Training details? Opaque as ever. We know post-training magic happened — alignment, safety — but carbon footprint? GPU hogs still rule big runs.

The Edge AI Reckoning

Google’s not alone. Apple Intelligence, Qualcomm’s chips — everyone’s piling on-device. Gemma 4 accelerates it. Why now? Regulations looming on data privacy. EU AI Act breathing down necks. Local = compliant, sovereign.

Bold call: this seeds the post-cloud era. Your Raspberry Pi rivaling a 2023 server. That’s the how. The why? Control the stack end-to-end, from TPU design to app inference.

Developers, grab ‘em on Hugging Face today. Tinker. Break stuff. That’s the point.

**

🧬 Related Insights

- Read more: LLMs 2025: Reasoning Boom That’s Cheaper But Still No Magic Bullet

- Read more: Amazon Nova 2 Sonic: Instant AI Podcasts That Chat Like Humans

Frequently Asked Questions**

What are Google Gemma 4 models?

Four open-weight LLMs: 26B MoE, 31B Dense, E2B, E4B — tuned for local runs on GPUs, phones, edge devices. Apache 2.0 licensed.

Gemma 4 vs Gemma 3: key improvements?

Faster inference, lower latency/memory use, better benchmarks (e.g., #3 on Arena). Mobile opts crush Gemma 3 efficiency.

Can I run Gemma 4 on consumer hardware?

Yes — big ones quantized on RTX 40-series; small ones on phones, Pi, Jetson out-of-box.