Look, I’ve seen enough of these “breakthrough” AI model announcements to fill a small data center. They drop, they generate a flurry of breathless tweets, and then… crickets. So when DeepSeek announced their V4 Pro and V4 Flash, the first thing I did wasn’t check the benchmarks; it was check the investor relations page (which, for most of these AI startups, is blessedly empty). Because that’s where the real story usually lies. Who’s actually making money here?

And this time, there’s a geopolitical twist. DeepSeek, bless their hearts, are trying to wean themselves off the NVIDIA teat. Makes sense. Export controls are a pain, and frankly, the H100s are rarer than a well-written tech blog these days. So, they’re touting compatibility with Huawei’s Ascend chips. Cute. Ascends are still a distant second to the H100s, but hey, it’s a statement. It’s about independence. It’s about China’s march towards total AI self-sufficiency. Whether it’s an economically sound strategy is another question entirely.

1 Million Tokens and a Prayer

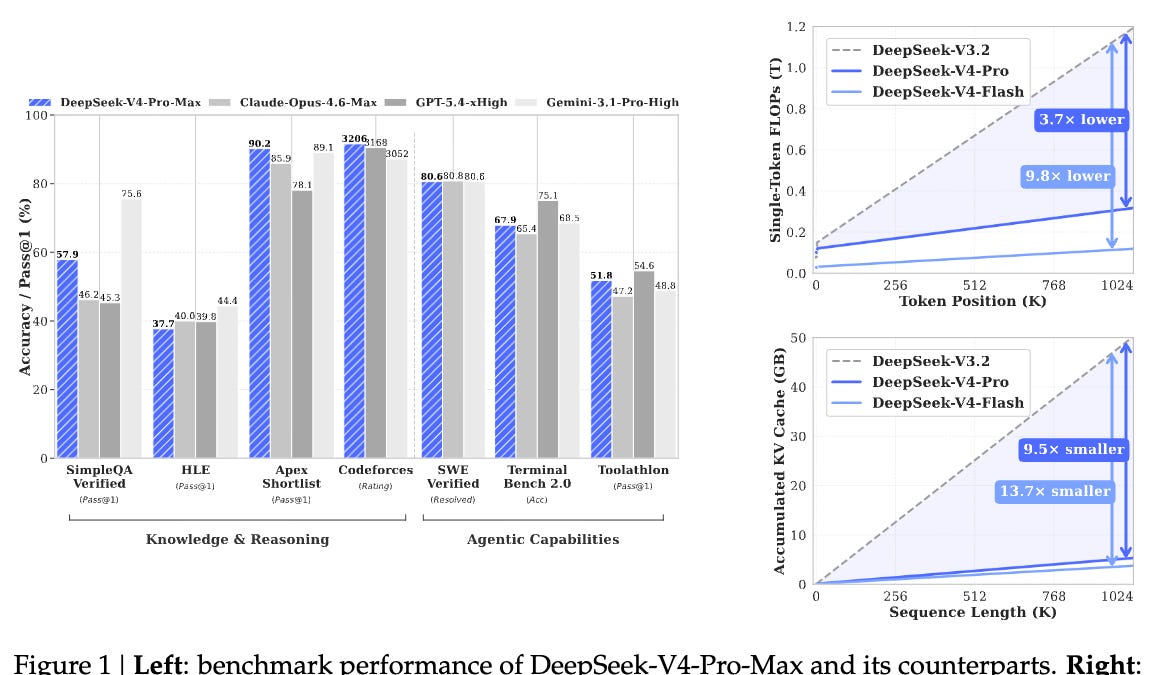

The headline grabber? A cool 1 million token context window. One. Million. Tokens. That’s hundreds of times more than what your average chatbot could handle a few years ago, when 2K to 4K tokens was typical. They’re claiming this is due to some fancy new tech they’re calling Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA). Apparently, it makes things “INCREDIBLY efficient.” They’re tossing around numbers like 27% of the FLOPs and 10% of the KV cache memory compared to their V3.2. If true, that’s roughly a 3.7x cut in compute and a 10x cut in cache memory. But here’s the thing: efficiency is great, but does it translate to better results that people will pay for?
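For anyone who wants to sanity-check what "27% of the FLOPs" and "10% of the KV cache" mean in practice, here is a back-of-the-envelope sketch. The two fractions are the claimed figures quoted above. The 100 GB baseline cache size is a made-up number for illustration only, not anything from DeepSeek.

```python
def reduction_factor(fraction_remaining: float) -> float:
    """Turn 'uses X of the original cost' into 'N times cheaper'."""
    if not 0 < fraction_remaining <= 1:
        raise ValueError("fraction_remaining must be in (0, 1]")
    return 1.0 / fraction_remaining


# Claimed ratios relative to V3.2, as quoted in the announcement chatter.
CLAIMED_FLOPS_FRACTION = 0.27
CLAIMED_KV_CACHE_FRACTION = 0.10

# Hypothetical baseline, chosen only to make the arithmetic concrete.
ASSUMED_BASELINE_KV_CACHE_GB = 100.0

flops_factor = reduction_factor(CLAIMED_FLOPS_FRACTION)
kv_factor = reduction_factor(CLAIMED_KV_CACHE_FRACTION)
kv_cache_after_gb = ASSUMED_BASELINE_KV_CACHE_GB * CLAIMED_KV_CACHE_FRACTION

print(f"Compute: about {flops_factor:.1f}x fewer FLOPs")
print(f"KV cache: about {kv_factor:.0f}x smaller")
print(f"A {ASSUMED_BASELINE_KV_CACHE_GB:.0f} GB cache would shrink to {kv_cache_after_gb:.0f} GB")
```

None of this says whether the claims hold up. It only shows what they would buy you if they do.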

And they released both Base and Instruct versions. Smart. It sets the stage for more iterations, more potential revenue streams. But let’s not get ahead of ourselves. The technical report, a dense 58 pages of academic wizardry, is where the real meat is. They’re talking about things like Manifold Constrained Hyper-Connections (mHC) and the Muon optimizer that Moonshot used for its Kimi models. Sounds impressive. Sounds… complicated. The kind of complicated that makes it hard for anyone outside a massive, well-funded lab to actually replicate. So, is this democratizing progress, or just making it more exclusive?

Is This Just Another Benchmark Race?

The chatter on the usual AI merry-go-round of subreddits and Twitters is predictably divided. Some folks are calling V4 Pro a solid contender, neck-and-neck with Kimi K2.6 and Claude Sonnet-class models, maybe even touching Opus territory depending on the benchmark. Others are more cautious, noting it’s still a step behind the absolute frontier models like GPT-5.x. And then there’s the whole debate about whether this is “democratizing” progress or creating an architecture so complex that only a select few can actually build and deploy it at scale. The latter seems far more likely to me. We’ve been here before with models that required absurd amounts of compute to fine-tune or run.

But this V4 Pro is a beast, no doubt. 1.6 trillion parameters, with 49 billion active. The V4 Flash is a more manageable 284 billion total, 13 billion active. Both trained on a staggering 32 trillion tokens. The sheer scale is mind-boggling. And the technical claims about memory reduction? If they hold up under scrutiny, that’s significant.

The most concrete technical claims repeated across the discussion concern the two models: V4 Pro at 1.6T total parameters / 49B active, and V4 Flash at 284B total / 13B active.
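Those total-versus-active numbers are easier to judge as ratios. A quick sketch, using only the parameter counts and the 32 trillion token figure quoted above. The `ModelSpec` class and its field names are my own scaffolding, not anything from the technical report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSpec:
    """Headline numbers for one model (illustrative container, not an official schema)."""
    name: str
    total_params: float
    active_params: float

    @property
    def active_fraction(self) -> float:
        # Share of the weights that actually participate per token.
        return self.active_params / self.total_params


# Training set size claimed for both models.
TRAINING_TOKENS = 32e12

MODELS = [
    ModelSpec("V4 Pro", total_params=1.6e12, active_params=49e9),
    ModelSpec("V4 Flash", total_params=284e9, active_params=13e9),
]

for model in MODELS:
    tokens_per_param = TRAINING_TOKENS / model.total_params
    print(
        f"{model.name}: {model.active_fraction:.1%} of parameters active, "
        f"{tokens_per_param:.0f} training tokens per total parameter"
    )
```

Either way you slice it, only a few percent of each model's weights do work on any given token. That is what keeps inference cost from scaling with the scary headline number.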

Look, the race to build the biggest, most capable model is on. It always has been. But the real question isn’t how many parameters you have or how many tokens you can slurp up. It’s about who can actually build a business around it. Who’s writing the checks? Who’s deploying these things in ways that generate real value, not just academic paper citations?

Why the Huawei Pivot Matters (Maybe)

This pivot to Huawei’s Ascend chips is, as I said, the geopolitical elephant in the room. For DeepSeek, it’s a strategic move to avoid the chokehold of US sanctions and export restrictions. It signals a broader trend of China doubling down on its own AI infrastructure. They’re building their own foundries, their own chip designs, their own AI stacks. This isn’t just about DeepSeek V4 Pro; it’s about the long game of technological sovereignty.

But is the Ascend hardware mature enough to support models of this scale efficiently? Can Huawei’s software stack, like their CANN (Compute Architecture for Neural Networks) platform, truly compete with CUDA? My gut says not yet, not at the bleeding edge. It’s a compromise. A necessary one, perhaps, for strategic reasons, but a compromise nonetheless. It means that while V4 Pro might be technically impressive on paper, its practical deployment and performance might be limited by the underlying hardware, especially compared to what’s possible on NVIDIA’s H100s or Blackwells.

So, while DeepSeek is celebrating its technological leap, I’m reserving judgment. The impressive specs are there. The long context is a genuine step forward. But the real test will be adoption, monetization, and whether this leap in capability can translate into actual, tangible returns. My money’s on a lot of research papers and a very niche deployment for now. The AI gold rush is still on, but the claim jumpers are getting more sophisticated.