So, what does this DeepSeek V4 news actually mean for you, the everyday person trying to make sense of this dizzying AI whirlwind? It means the future isn’t locked behind corporate firewalls anymore. It means that powerful AI, the kind that can write your next great novel, debug your code in seconds, or even help you brainstorm that world-changing app idea, is about to become astonishingly accessible. Think of it like this: for years, the most powerful computers were massive, housed in secret government labs. Then came the personal computer. DeepSeek V4 feels like that same kind of democratizing leap for artificial intelligence.

This isn’t just another model release; it’s a fundamental platform shift. DeepSeek, a Chinese AI firm, has just unleashed V4, and it’s not just good; it’s a statement. It’s a blazing signal flare in the AI landscape, shouting that the era of prohibitively expensive, proprietary AI is on its way out. For individuals, for startups, for anyone with an idea and a laptop, this is the dawn of a new creative and productive age.

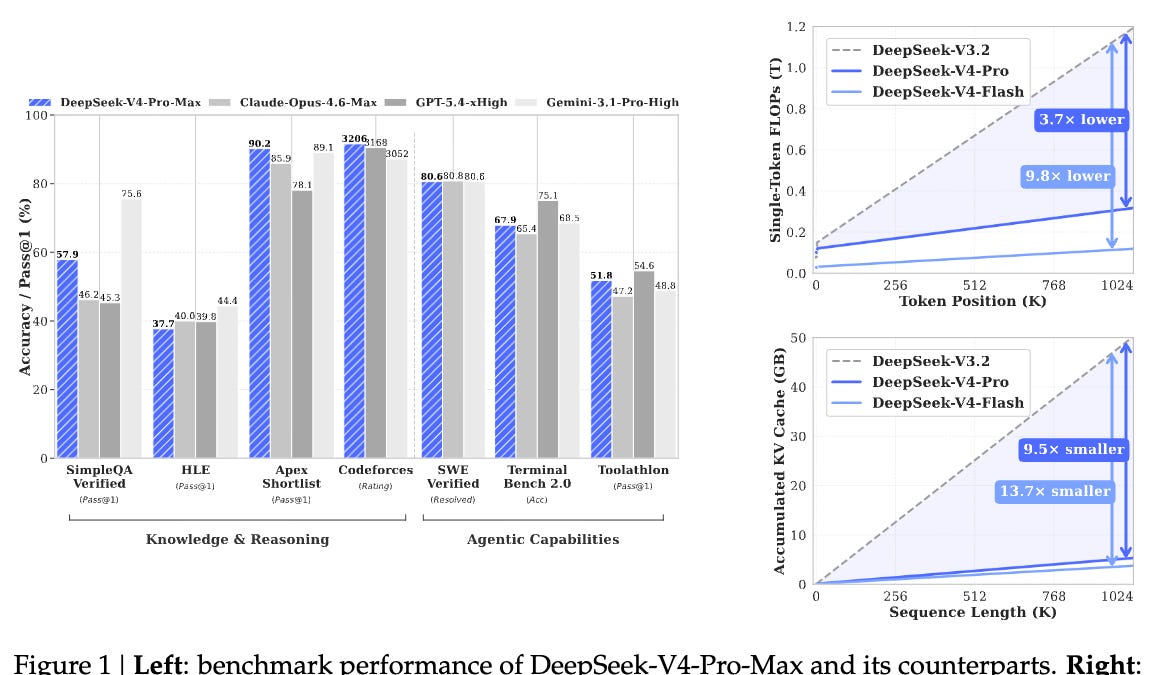

The big story here, the one that’ll make developers’ eyes gleam and entrepreneurs start sketching furiously, is the price-performance ratio. DeepSeek V4-Pro, their powerhouse model, reportedly outperforms heavyweights like Anthropic’s Claude 3 Opus and OpenAI’s GPT-4.5 (yes, you read that right) on key benchmarks. But here’s the kicker: it does so for a small fraction of the cost. We’re talking dollars, not hundreds or thousands, per million tokens. And V4-Flash? It’s even cheaper, practically giving away top-tier AI capabilities.

This affordability isn’t just a nice-to-have; it’s a radical enabler. Imagine a student writing a complex research paper who can now access AI to help structure arguments and find obscure sources without breaking their budget. Or a small business owner who can finally afford to build an AI-powered customer service chatbot that doesn’t sound like a cheap robot.
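To make "per million tokens" concrete, here is a back-of-the-envelope sketch of how a per-token price turns into a monthly bill. The two prices and the traffic figure below are hypothetical placeholders chosen for illustration, not DeepSeek's or anyone else's actual rates, and the `cost_usd` helper is invented for this example.

```python
def cost_usd(tokens: int, price_per_million_usd: float) -> float:
    """Cost in US dollars of processing `tokens` tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million_usd


# Hypothetical prices in USD per million tokens (assumptions, not real rates).
HYPOTHETICAL_PRICES = {
    "budget-model": 2.0,
    "premium-model": 100.0,
}

# Assumed monthly traffic for a small customer-service chatbot.
monthly_tokens = 50_000_000

for name, price in HYPOTHETICAL_PRICES.items():
    print(f"{name}: ${cost_usd(monthly_tokens, price):,.2f} per month")
```

Swap in real published prices and your own traffic estimate to see how quickly the gap adds up for a student or a small business.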

Why the Long Context Window is Actually a Big Deal

But let’s talk about the real wizardry under the hood, the thing that makes V4 feel like it’s truly seeing the world with fresh eyes: its colossal one million token context window. This isn’t a small improvement; it’s like going from a flip phone to a smartphone. What does that even mean? It means the AI can hold and process an enormous amount of information at once (think entire books, lengthy legal documents, or vast codebases) without forgetting the beginning by the time it reaches the end.
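For a rough sense of what fits, the sketch below estimates whether a document would squeeze into a one million token window. It assumes about four characters per token, a common rule of thumb for English text rather than a property of V4's tokenizer, and it reserves some room for the model's reply. The function names and the reserve size are made up for this illustration.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # the window size quoted in this article
CHARS_PER_TOKEN = 4                # rough heuristic for English text (assumption)


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, reserved_for_output: int = 8_000) -> bool:
    """Check whether the text plus a reserve for the reply fits in the window."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


# A stand-in for a long document: 400,000 characters of filler text.
document = "a" * 400_000
print(estimate_tokens(document), fits_in_context(document))
```

For real work you would count tokens with the model's own tokenizer, since the characters-per-token ratio varies a lot between prose, code, and other languages.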

Previously, AI models would struggle with long texts, losing track of earlier points, a bit like trying to recall a long conversation after a power nap. This limitation has been a major bottleneck for complex tasks. DeepSeek’s architectural innovation, a more selective “attention mechanism” that prioritizes what’s important, is the key. They’ve essentially taught the AI to focus, like a seasoned detective sifting through clues, rather than being overwhelmed by noise. This allows for deeper understanding, more nuanced responses, and the ability to tackle truly monumental tasks that were previously out of reach for AI.
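The idea of "selective" attention can be sketched in a few lines. The toy below uses top-k sparse attention, one well-known way of attending only to the most relevant items. It is an illustration of the general idea, not DeepSeek's actual mechanism, which this article does not describe in detail. The function names and the tiny vectors are invented for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def top_k_attention(query, keys, values, k):
    """Attend only to the k keys with the highest scaled dot-product score."""
    scale = math.sqrt(len(query))
    scores = [dot(query, key) / scale for key in keys]
    # Keep the indices of the k best-matching keys and ignore the rest.
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in top])
    dim = len(values[0])
    output = [sum(w * values[i][d] for w, i in zip(weights, top)) for d in range(dim)]
    return output, sorted(top)


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.0], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]

output, selected = top_k_attention(query, keys, values, k=2)
print(selected, output)
```

Note that this toy still scores every key before picking the top k, so it saves nothing on the scoring step. Practical long-context systems need a cheaper way to decide what to look at, and that is where the real engineering lives.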

Will This Disrupt the AI Giants?

Now, the inevitable question: will V4 dethrone the reigning AI kings like OpenAI and Google? Probably not overnight, and maybe not directly. These giants have massive resources and deeply entrenched ecosystems. But here’s my take: DeepSeek V4 isn’t trying to beat them at their own game. It’s aiming to be the foundational layer for everyone else. By making frontier-level AI open source and incredibly affordable, DeepSeek is igniting a wildfire of innovation that the closed models will have to react to. It’s like giving the world a massive toolbox filled with the best tools imaginable, and then sitting back to watch what people build.

This open-source nature is the secret sauce. Anyone can download, inspect, and even modify V4. This fosters collaboration, accelerates discovery, and guards against the opaque, sometimes unsettling, decision-making of closed AI systems. It’s a strong counter-narrative to the fear that AI development is solely in the hands of a few tech behemoths. This move is a powerful testament to the enduring strength and ingenuity of the open-source community, reminding us that true progress often comes from sharing knowledge, not hoarding it.

The echoes of DeepSeek’s previous model, R1, which similarly stunned the industry and spurred a wave of open-weight releases from other Chinese firms, are undeniable. This isn’t a one-off fluke; it’s a strategy. And it’s working.

DeepSeek’s message, in effect, is that open source is the future of AI development and that cutting-edge AI should be accessible to everyone. (This is a paraphrase inferred from their actions, not a direct quotation.)

This commitment, if they can sustain it through the inevitable pressures of competition and geopolitical scrutiny, is what truly makes V4 a watershed moment. It’s a bold step towards a more distributed, accessible, and frankly, more exciting AI future.

The “Why” Behind the V4 Push

DeepSeek has been relatively quiet since the R1 splash. But behind the scenes, like any ambitious tech player, they’ve been building. The recent “expert” and “flash” modes weren’t just random updates; they were the whispers of V4 to come. This release isn’t just about technical prowess; it’s also a strategic re-entry onto the global stage after a period of internal reshuffling and external scrutiny. It’s a powerful demonstration that despite challenges, their core mission of pushing AI forward hasn’t wavered. They’re proving that innovation thrives even under pressure, which is a critical point in today’s AI landscape where every move is scrutinized.

This release is particularly significant because it arrives at a time when governments worldwide are grappling with AI regulation. By offering a powerful, open-source alternative, DeepSeek might be subtly influencing the regulatory conversation, pushing towards models that are more transparent and adaptable.

FAQ

Will DeepSeek V4 replace my job?

No AI model is likely to replace entire jobs wholesale in the immediate future. V4, like other advanced AIs, is a tool. It can automate certain tasks, enhance productivity, and create new kinds of work, but it’s unlikely to replace the complex reasoning, emotional intelligence, and nuanced decision-making of human professionals across the board. Think of it as a powerful assistant, not a replacement.

How does DeepSeek V4 compare to GPT-4.5 or Claude 3 Opus?

DeepSeek V4-Pro claims to match or exceed the performance of models like GPT-4.5 and Claude 3 Opus on major benchmarks, particularly in coding, math, and STEM. The key differentiator is V4’s open-source nature and significantly lower cost per token, making cutting-edge AI more accessible.

Is DeepSeek V4 truly open source?

Yes. DeepSeek V4 is released as open source, meaning the model weights and architecture are available for the public to download, use, and modify. This contrasts with proprietary models from companies like OpenAI and Google.