The real story behind DeepSeek V4’s launch isn’t just its mind-boggling scale (1.6 trillion parameters, a million-token context window) or its stellar performance on benchmarks. The seismic shift is that this behemoth runs natively on Huawei’s Ascend chips, not Nvidia’s. This isn’t a compatibility update; it’s a debut, a declaration of independence that bears out Jensen Huang’s warnings about a rival ecosystem emerging.

This means individuals and organizations, particularly those in regions facing US export restrictions, can potentially access cutting-edge AI capabilities without relying on the historically dominant Nvidia ecosystem. Think about it: developers in China, or even smaller research labs globally that found Nvidia’s hardware and licensing models prohibitively expensive or inaccessible, now have a powerful, cost-effective alternative to train and deploy massive models.

Beyond the Benchmarks: Why Native Ascend Support Matters

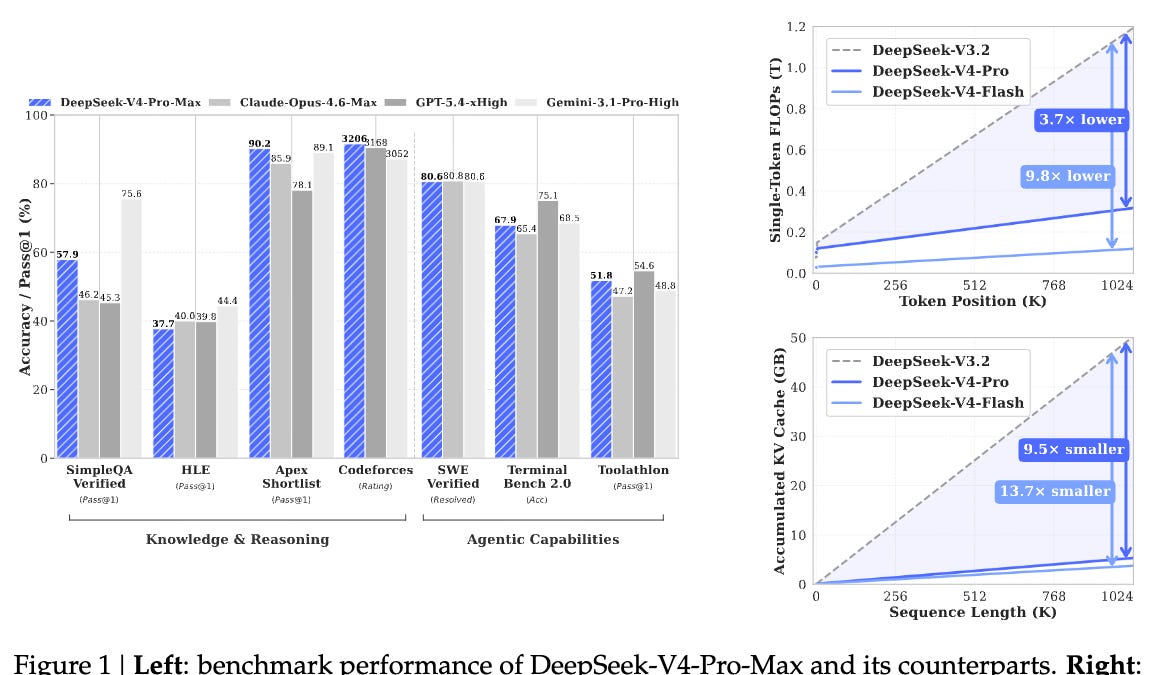

The raw numbers for DeepSeek V4 are undeniably impressive. V4-Pro, with its 1.6 trillion total parameters and 49 billion active ones, boasts a 33 trillion token pretraining corpus and a staggering 1 million token context window. Its sibling, V4-Flash, is no slouch either, packing 284 billion parameters with 13 billion active, trained on 32 trillion tokens. Both models shatter previous context length limitations, moving beyond ‘extended context’ to native, unadulterated million-token support. The performance figures, especially on coding and math benchmarks, where V4-Pro-Max outpaces even rumored GPT-5.4 and Claude Opus 4.6 on certain tasks, are a clear signal. But as the original article pointed out, the price is the truly terrifying aspect – V4-Flash input costs a fraction of its Western counterparts, and V4-Pro output is a mere fraction of Claude Opus 4.6’s price, with comparable or superior performance.
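To put the total-versus-active split in perspective, here is a minimal sketch that works out what share of each model's parameters is active. It uses only the figures quoted above and assumes "active" means the parameters used for each token, as in mixture-of-experts designs; the article does not spell this out, and the helper name `active_fraction` is purely illustrative.

```python
# Parameter counts in billions, as quoted in the article.
MODELS = {
    "V4-Pro": {"total_b": 1600, "active_b": 49},
    "V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters that are active (assumed: per token)."""
    return active_b / total_b


for name, m in MODELS.items():
    frac = active_fraction(m["total_b"], m["active_b"])
    print(f"{name}: {frac:.1%} of parameters active")
# V4-Pro: 3.1% of parameters active
# V4-Flash: 4.6% of parameters active
```

If that reading is right, per-token compute tracks the active count rather than the headline total, which would help explain how a 1.6-trillion-parameter model can be priced so aggressively.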

But the economics, while disruptive, aren’t the architectural earthquake. That’s Huawei Ascend. For years, the AI development world has been tethered to Nvidia’s CUDA, H100s, and NVLink. It was the de facto standard, the only viable path to large-scale AI. DeepSeek V4’s debut on Ascend flips that script entirely. Huawei’s official announcement confirms that its Ascend 950, A2, and A3 super nodes support V4 out of the box. This is the result of deep, collaborative engineering between DeepSeek and Huawei, ensuring V4’s inference stack is precisely tuned for Huawei’s CANN (Compute Architecture for Neural Networks) platform.

The Geopolitical Foundry: Sanctions Forging a New Chip Future

To truly grasp the significance, we must look at the geopolitical forces at play. The US export controls, starting in October 2022 and escalating since, have systematically choked off China’s access to advanced AI chips. Large-scale, compliant procurement of Nvidia’s top-tier hardware has become virtually impossible, pushing major players to explore alternative architectures. This isn’t just about national security; it’s about economic leverage and technological self-sufficiency.

Meanwhile, Huawei, under immense pressure, has been forced to innovate at an accelerated pace. Its Ascend chips are a testament to this forced evolution. The Ascend 950, for instance, uses a Multi-Chip Module (MCM) architecture with dual compute and dual I/O dies, manufactured by SMIC using advanced processes that circumvent the need for EUV lithography. Analysts suggest impressive yield rates, demonstrating a workaround for production bottlenecks. Then there’s the proprietary LingQu interconnect, boasting a massive 2 TB/s of bandwidth, outstripping Nvidia’s NVLink 5.0, with sub-microsecond latency. Crucially, the Ascend 950PR features hardware-native FP4 inference, a 4-bit precision that cuts weight memory by roughly 75% relative to 16-bit formats. This is where V4, one of the first trillion-parameter models to deploy FP4 at scale, finds its perfect hardware partner. The chip and the model aren’t just compatible; they’re precision-matched, a symbiotic relationship forged in the crucible of geopolitical necessity.
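The 75% figure is consistent with simple bit-width arithmetic, sketched below for V4-Pro's 1.6 trillion parameters. This is a back-of-the-envelope estimate under stated assumptions, not a description of DeepSeek's or Huawei's actual memory layout: it assumes the baseline is a 16-bit format, counts weights only (no KV cache or activations), and ignores the per-block scaling factors that practical FP4 schemes typically store, which would trim the saving slightly. The function names are illustrative.

```python
BITS_PER_BYTE = 8


def weight_bytes(num_params: float, bits_per_param: int) -> float:
    """Raw storage for the weights alone, ignoring any scaling metadata."""
    return num_params * bits_per_param / BITS_PER_BYTE


def savings_vs(baseline_bits: int, target_bits: int) -> float:
    """Fractional reduction in weight memory when narrowing the format."""
    return 1 - target_bits / baseline_bits


total_params = 1.6e12  # V4-Pro total parameters, as quoted in the article

for label, bits in [("FP16", 16), ("FP8", 8), ("FP4", 4)]:
    terabytes = weight_bytes(total_params, bits) / 1e12
    print(f"{label}: {terabytes:.1f} TB of weights")
# FP16: 3.2 TB of weights
# FP8: 1.6 TB of weights
# FP4: 0.8 TB of weights

print(f"FP4 vs FP16: {savings_vs(16, 4):.0%} smaller")  # 75% smaller
print(f"FP4 vs FP8: {savings_vs(8, 4):.0%} smaller")    # 50% smaller
```

Note that the saving depends on the baseline: against an 8-bit format the same move to FP4 halves the footprint rather than cutting it by three quarters.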

The New AI Cartography: Where Do We Go From Here?

This isn’t just a win for Huawei and DeepSeek; it’s a seismic event for the entire AI landscape. It signifies the end of a single-vendor chokehold. For years, if you wanted state-of-the-art AI, you bought Nvidia. Now, you have options. This could democratize access to massive AI models, allowing smaller nations, academic institutions, and even ambitious startups to build out their own AI infrastructure without the specter of hardware scarcity or exorbitant costs. It fosters competition, which, in turn, drives innovation at an even faster clip. The landscape shifts from a single, dominant player to a multipolar world of AI hardware, where performance, cost, and geopolitical alignment will all play a role.

The implications for real people are profound. Imagine AI assistants that are significantly cheaper to run, leading to more accessible and powerful services. Consider research initiatives that can scale without the crippling expense of cutting-edge GPUs. This move hints at a future where AI development isn’t solely concentrated in a few tech giants but is distributed more widely, leading to a broader range of AI applications and perhaps, a more diverse set of AI voices. The fear that Jensen Huang articulated wasn’t about the technology itself, but about the geopolitical implications of a rival ecosystem emerging. That fear is now a reality.

We’re witnessing the architectural dawn of a new AI era, one where the silicon battlefield is widening, and the implications for global technological leadership are immense. It’s no longer just about who builds the best AI model, but who builds the hardware that can efficiently and affordably run it.

Frequently Asked Questions

What does DeepSeek V4 actually do? DeepSeek V4 is a massive artificial intelligence model capable of understanding and generating human-like text, writing code, and performing complex reasoning tasks. Its key innovations are its enormous scale (1.6 trillion parameters), an exceptionally large context window (1 million tokens), and efficient deployment on non-Nvidia hardware like Huawei Ascend chips.

Will this replace my job? Large AI models like DeepSeek V4 are more likely to augment human capabilities than to replace entire jobs outright in the immediate future. They can automate repetitive tasks, assist with complex problem-solving, and speed up creative processes. The demand for people who can effectively use, manage, and develop AI tools is likely to increase.

Why is running on Huawei chips so important? Running advanced AI models like DeepSeek V4 on hardware not manufactured by Nvidia is significant because it breaks Nvidia’s long-standing dominance in the AI chip market. This offers alternatives for countries and companies facing US export restrictions, potentially lowers the cost of AI development, and fosters greater competition and innovation in the AI hardware sector. It signifies a move towards a more distributed and multipolar AI ecosystem.