When was the last time you considered your own laptop a cutting-edge AI research platform? Probably never. Yet, here we are. The landscape of artificial intelligence, once the exclusive domain of gargantuan server farms and cloud titans, is splintering. It’s democratizing, fragmenting, and landing squarely on your personal machine, thanks to innovations like Google’s Gemma 4 and Meta’s Openclaw. This isn’t just about convenience; it’s a tectonic shift in how we interact with and develop AI.

For too long, the narrative around large language models (LLMs) has been dominated by colossal enterprises training billion-parameter models on petabytes of data. That required infrastructure far out of reach for the vast majority of developers, researchers, and curious individuals. The cost, the complexity, and the sheer computational brute force involved meant that access was mediated, controlled, and, frankly, expensive.

But the game is changing. Rapidly.

Gemma 4, Google’s family of lightweight, state-of-the-art open models, is a prime example. Built using the same research and technology behind its Gemini models, Gemma is designed to deliver strong results on modest hardware. This means you can run sophisticated AI tasks (text generation, summarization, question answering) directly on your laptop or desktop, ideally one with a capable GPU. No constant internet connection, no API fees for every query, just raw processing power at your fingertips.

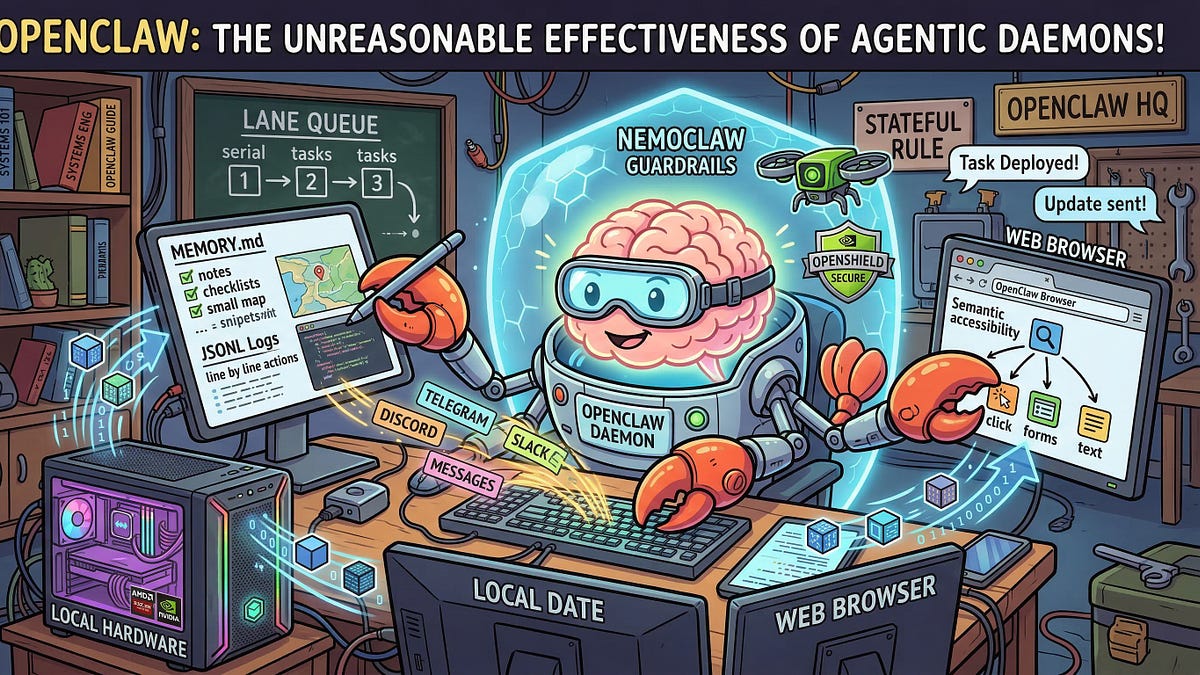

And then there’s Openclaw. While Meta’s Llama series has been a significant force in open-source AI, the focus here is on making these models even more amenable to local deployment. The push is toward optimization, toward squeezing more power out of less hardware. This isn’t just about slapping a pre-trained model onto a consumer-grade graphics card; it’s about architectural innovations that allow these complex neural networks to hum along efficiently on hardware we already own.

Why Does Running AI Locally Matter?

The implications of this local AI revolution are profound. Firstly, it’s a massive win for privacy. When your sensitive data—or even just your conversational patterns—doesn’t need to leave your machine to be processed by a remote server, the risk of breaches and unauthorized access plummets. Think about it: your personal notes, proprietary code snippets, or even just your brainstorming sessions can be fed into an AI without you worrying about them ending up in a company’s training data or a hacker’s database.

Secondly, this democratizes innovation. Researchers who can’t afford massive cloud compute budgets can now experiment, iterate, and build upon existing models in ways that were previously impossible. Small startups can develop specialized AI tools without the prohibitive upfront cost of cloud infrastructure. And hobbyists—the tinkerers and curious minds who have always pushed the boundaries—now have a playground that rivals professional labs of yesteryear.

Consider the speed of iteration. When every test and every tweak goes through a cloud API, each one adds network latency and, often, a per-query fee. Running locally removes the network round trip, so feedback is limited only by your own hardware. That acceleration is critical for rapid prototyping and for adapting models to specific, niche tasks where general-purpose cloud models might fall short. You can fine-tune a small model on your company’s internal documentation, or on your personal writing style, and evaluate the results on the same machine.

“The democratization of advanced AI capabilities is no longer a distant future; it’s a present-day reality unfolding on our personal devices.” This statement, though perhaps a bit too lofty for some PR departments, captures the essence of what’s happening.

Of course, there are challenges. Your personal hardware isn’t going to compete with a data center. Performance will vary wildly depending on your GPU, RAM, and the specific model size you’re attempting to run. Fine-tuning massive models locally might still be a stretch for many. But for inference—the act of using a trained model to generate output—and for running smaller, highly optimized models, the barrier to entry has fallen dramatically.
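To make the "model size versus hardware" trade-off concrete, here is a rough back-of-the-envelope sketch. It estimates how much memory a model's weights need at a given precision. The 7-billion-parameter figure is a hypothetical example rather than the size of any specific model, and the 20% overhead for activations and context cache is an assumption for illustration, not a measured value.

```python
def weight_memory_gb(num_parameters: float, bits_per_weight: int) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return num_parameters * bits_per_weight / 8 / 1e9


def fits_in_memory(num_parameters: float, bits_per_weight: int,
                   available_gb: float, overhead_fraction: float = 0.2) -> bool:
    """Rough check: weights plus an assumed overhead must fit in available memory.

    overhead_fraction is an illustrative guess for activations and context
    cache; real overhead depends on the runtime and the context length.
    """
    needed = weight_memory_gb(num_parameters, bits_per_weight) * (1 + overhead_fraction)
    return needed <= available_gb


if __name__ == "__main__":
    hypothetical_params = 7e9  # hypothetical 7-billion-parameter model
    for bits in (16, 8, 4):
        size = weight_memory_gb(hypothetical_params, bits)
        ok = fits_in_memory(hypothetical_params, bits, available_gb=8)
        print(f"{bits}-bit weights: {size:.1f} GB, fits in 8 GB: {ok}")
```

The arithmetic is simple, but it explains why the same model can be out of reach at full precision and comfortable once its weights are stored in fewer bits.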

The Architectural Underpinnings of Local AI

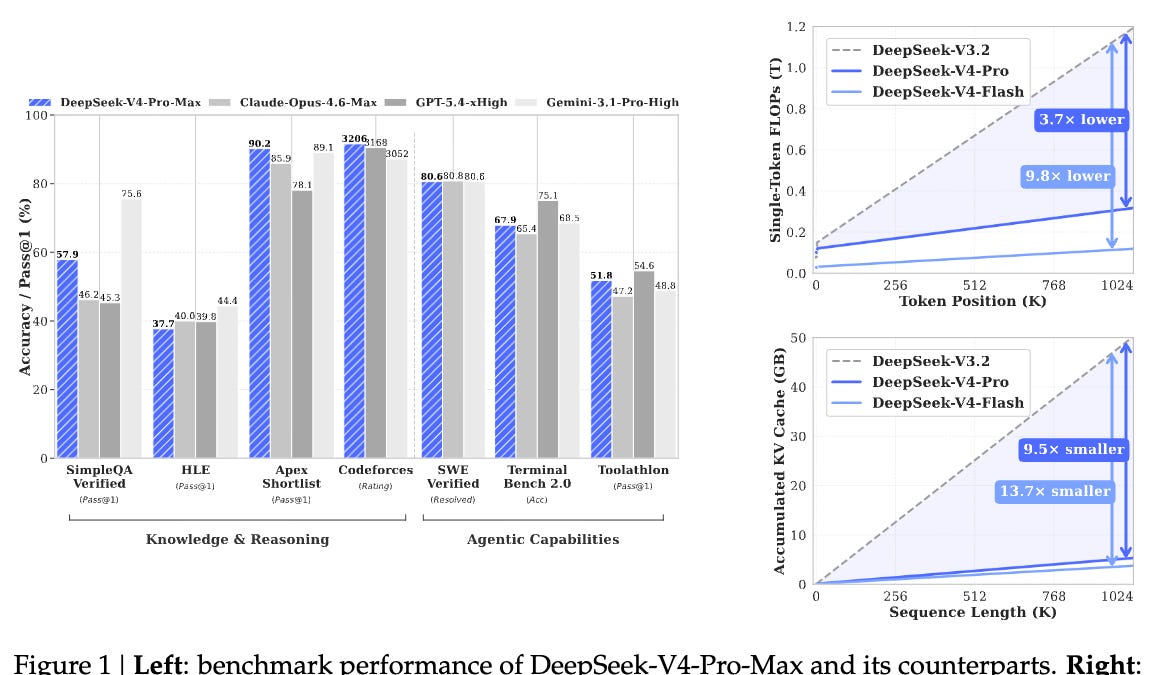

The ‘how’ behind this local AI boom is fascinating. It’s a confluence of factors: the continued, relentless improvement in the efficiency and VRAM capacity of consumer GPUs, coupled with significant advances in model quantization and pruning. Quantization reduces the numerical precision used to store a model’s parameters, for example keeping weights as 8-bit or 4-bit integers instead of 16-bit floating-point values, which makes the model smaller and often faster without a catastrophic loss in accuracy. Pruning removes redundant or less important connections within the neural network, leaving fewer weights to store and compute.
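Both ideas are easier to see on a toy example. The sketch below applies symmetric 8-bit quantization and simple magnitude pruning to a handful of made-up weights. It is a minimal illustration in plain Python, not how any particular framework implements these techniques; production schemes typically work per block or per channel and use more careful pruning criteria.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127].

    Returns the integer codes plus the single scale needed to undo the mapping.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest absolute value."""
    count = int(len(weights) * fraction)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(by_magnitude[:count])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


# Made-up weights, purely for illustration.
weights = [0.82, -0.05, 0.31, -1.27, 0.002, 0.64]

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

pruned = prune_by_magnitude(weights, 0.5)

if __name__ == "__main__":
    print("int8 codes:", codes)
    print("largest rounding error:", max_error)
    print("after pruning half the weights:", pruned)
```

Each quantized weight is off by at most half a quantization step (`scale / 2`), which is why accuracy tends to degrade gracefully rather than collapse. Pruning, by contrast, only saves memory or compute if the runtime can actually skip the zeros.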

Furthermore, open-source projects such as llama.cpp have been instrumental. They are dedicated to making large language models run efficiently on ordinary CPUs and consumer GPUs, and they tackle memory management, computation optimization, and platform compatibility with a ferocity that mirrors the early days of open-source software development.
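For a sense of what this looks like in practice, here is a minimal sketch using the `llama-cpp-python` bindings for llama.cpp. It assumes you have installed that package and already downloaded a quantized model in GGUF format. The file path is a hypothetical placeholder, and the context size and GPU offload settings are illustrative defaults to adjust for your hardware.

```python
from pathlib import Path


def generate_locally(model_path: str, prompt: str, max_tokens: int = 128) -> str:
    """Run one completion against a local GGUF model file.

    Assumes the llama-cpp-python package is installed; the settings below are
    illustrative, not tuned recommendations.
    """
    path = Path(model_path)
    if not path.is_file():
        raise FileNotFoundError(f"No model file found at {path}")

    # Imported here so the path check above works even without the package.
    from llama_cpp import Llama

    llm = Llama(
        model_path=str(path),
        n_ctx=2048,        # context window in tokens
        n_gpu_layers=-1,   # offload all layers to the GPU when one is supported
        verbose=False,
    )
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


if __name__ == "__main__":
    # Hypothetical path: point this at a GGUF file you have downloaded.
    print(generate_locally("models/example-model.gguf",
                           "Summarize why local inference helps privacy:"))
```

Everything happens in-process: the prompt and the output never leave the machine, which is the whole point.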

This isn’t just about making LLMs run; it’s about making them practical. It’s about the engineering challenges that arise when you move from theoretical possibility to widespread deployment. The teams working on these open-source projects are, in many ways, the unsung heroes of this local AI revolution, translating academic breakthroughs into tangible, usable software.

What this ultimately means is a decentralization of AI power. The big players will still push the frontier with ever-larger models, but the tools for experimentation, application, and even specialized training are now within reach of a much broader community. It’s a paradigm shift that promises to accelerate innovation in ways we’re only just beginning to imagine.

Will this eventually displace the need for massive cloud AI? Not entirely. For bleeding-edge research, for models that require truly exascale computing, the cloud remains king. But for a vast array of applications, for personal productivity, for niche business tools, and for fostering a more distributed and privacy-conscious AI ecosystem, the local revolution is here, and it’s running on your machine.

Frequently Asked Questions

What does it mean to run AI locally?

Running AI locally means executing AI models, like large language models, directly on your own computer’s hardware (CPU or GPU) rather than relying on remote servers or cloud services.

Do I need a supercomputer to run Gemma 4 or Openclaw?

Not necessarily. While a powerful GPU will offer better performance, these models are designed with efficiency in mind and can often run on consumer-grade hardware. Expect performance to vary with your system’s specifications and the size of the model you choose.

Is local AI more private than cloud AI?

Generally, yes. When you run AI models locally, your data doesn’t need to be transmitted to external servers for processing, significantly enhancing privacy and reducing the risk of data exposure.