Lunar New Year bangs fade into Beijing smog — and DeepSeek V4 explodes onto the scene, a million-token beast ready to devour entire codebases in one gulp.

Zoom out. China’s open-source AI labs aren’t just keeping up; they’re lapping Silicon Valley. With chip sanctions biting hard, these teams — DeepSeek leading the pack — crank out innovations that make U.S. models look bloated and pricey. It’s like watching a scrappy garage band outjam stadium rockstars on half the amps.

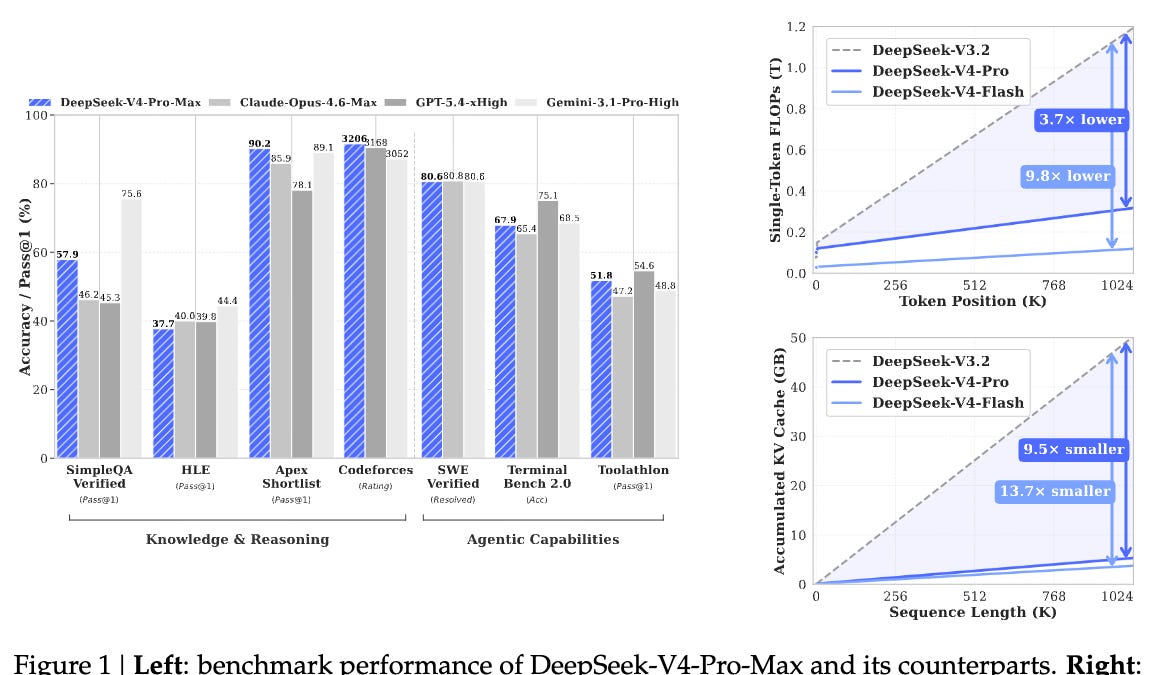

DeepSeek V4. Say it three times. This isn’t some incremental tweak. Rumors swirl around ‘MODEL1,’ a full architectural gut-job launching right around February 17th, 2026. Forget V3’s solid-but-safe vibes. V4 cranks context to over a million tokens via DeepSeek Sparse Attention (DSA) — imagine feeding it your whole GitHub repo, files sprawling like a digital cityscape, and it debugs the mess without breaking a sweat.

But wait — there’s Engram. Picture this: a ‘conditional memory’ wizard splitting facts from reasoning. No more amnesia when the context balloons. Your model recalls vast data troves without choking on inference. Then mHC, those Manifold-Constrained Hyper-Connections, stabilizing training like shock absorbers on a rally car, slashing energy use while scaling wild.

And the crown jewel? MODEL1’s tiered KV cache storage — GPU memory down 40%. Run this frontier model on a budget rig, not some H100 fortress. DeepSeek’s turning AI from oligarch toy into democratized rocket fuel.
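To put a rough number on why KV cache memory matters at a million tokens, here is a back-of-the-envelope sketch. It assumes a plain attention cache that stores one key and one value vector per layer, per KV head, per token. The model shape is hypothetical (V4's real dimensions aren't public), and applying the full 40% figure to the cache alone is an assumption made for illustration.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor 2: one tensor for keys and one for values in every layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical model shape, chosen only for illustration.
baseline = kv_cache_bytes(seq_len=1_000_000, n_layers=60, n_kv_heads=8, head_dim=128)

# Assumption: the rumored 40% saving applies directly to this cache.
reduced = baseline * (1 - 0.40)

GIB = 1024 ** 3
print(f"baseline: {baseline / GIB:.1f} GiB, after 40% cut: {reduced / GIB:.1f} GiB")
```

The cache grows linearly with context length, which is why a long-context model lives or dies on how cleverly it stores this data.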

“I believe V4 and R2 will remain among the best open-source LLMs available, potentially even narrowing the gap with leading proprietary models.” - Tony Peng (former AI reporter for Synced)

Tony nails it. But here’s my twist — and it’s a doozy. This feels like the Linux kernel moment in the ’90s. While Microsoft hoarded Windows gold, open-source hackers built an OS that powered everything from servers to Android empires. DeepSeek V4? It’s that kernel for agentic AI. Expect devs worldwide to fork it, bolt on agents that browse, code, and hustle autonomously. By 2027, proprietary models like o1 or GPT-whatever will chase these open tails, not lead.

What Powers DeepSeek V4’s Million-Token Magic?

Sparsity. That’s DeepSeek’s secret sauce. DSA isn’t fluffy theory — it’s sparse attention laser-focused on what’s relevant, ignoring the noise. Your model ‘reads’ a codebase like a human skimming a novel: key plot points pop, fluff fades. Pair it with Engram’s memory tiers — facts parked in cheap storage, reasoning hot-swapped in RAM — and boom: efficiency skyrockets.
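For a feel of what "attend only to what's relevant" means, here is a minimal sketch of generic top-k sparse attention for a single query. This is not DeepSeek's DSA (the article gives no implementation details); the function name and the choice of top-k selection are assumptions for illustration.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k keys with the highest scaled dot-product score."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [dot(query, key) * scale for key in keys]
    # Keep the indices of the k best-scoring keys; every other token is ignored.
    kept = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in kept])
    dim = len(values[0])
    output = [sum(w * values[i][d] for w, i in zip(weights, kept)) for d in range(dim)]
    return output, sorted(kept)

# Toy data: keys 0 and 2 point roughly the same way as the query.
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
values = [[1.0], [2.0], [3.0], [4.0]]
out, kept = topk_sparse_attention([1.0, 0.0], keys, values, k=2)
print(kept, out)
```

Note that this toy version still scores every key before discarding most of them. A real long-context system would need a cheaper way to pick candidates, and how DSA does that is outside what the article describes.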

mHC? Think neural highways with guardrails. Training blows up on big models; this constrains the chaos, funnels compute where it counts. Less power, more smarts. I’ve seen U.S. labs burn billions on brute force — DeepSeek’s flipping the script, proving elegance beats excess.
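One way to picture "guardrails" on connections between parallel residual streams is to force the mixing matrix to be doubly stochastic, so every row and column sums to 1. The sketch below does that with Sinkhorn normalization. The article doesn't say which constraint mHC uses, so treat the doubly stochastic choice, the function name, and the toy numbers as assumptions.

```python
def sinkhorn(matrix, iterations=50):
    """Alternately normalize rows and columns of a positive square matrix."""
    m = [row[:] for row in matrix]  # work on a copy
    n = len(m)
    for _ in range(iterations):
        for row in m:
            s = sum(row)
            for j in range(n):
                row[j] /= s
        for j in range(n):
            s = sum(m[i][j] for i in range(n))
            for i in range(n):
                m[i][j] /= s
    return m

# Unconstrained positive mixing weights between two streams (toy values).
raw = [[1.0, 2.0], [3.0, 4.0]]
mix = sinkhorn(raw)

# Mix two scalar "streams" with the constrained matrix.
streams = [10.0, -2.0]
mixed = [sum(mix[i][j] * streams[j] for j in range(2)) for i in range(2)]
print(mix, mixed)
```

With this constraint, each output is a weighted average of the inputs, so it can't exceed the largest input, and the total across streams is preserved. That is the kind of property that keeps signals from blowing up as layers stack.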

MODEL1 ties it together: a smarter KV cache, not a bigger one. A 40% cut in GPU memory means V4 could run on far more modest hardware than a data-center cluster. China’s vibe? Iterate fast, ship open, let the world remix.
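The idea of tiered KV cache storage can be sketched as a small, fast tier that spills its least recently used blocks into a larger, slower one. The class below is a toy illustration, not MODEL1's design: the class name, the LRU policy, and the use of plain dictionaries to stand in for GPU and host memory are all assumptions.

```python
from collections import OrderedDict

class TieredKVCache:
    """Two-tier store: a small 'hot' tier with LRU eviction into a 'cold' tier."""

    def __init__(self, hot_capacity):
        self.hot_capacity = hot_capacity
        self.hot = OrderedDict()  # stands in for scarce GPU memory
        self.cold = {}            # stands in for cheaper CPU RAM or disk

    def put(self, block_id, kv_block):
        self.cold.pop(block_id, None)   # a block lives in one tier at a time
        self.hot[block_id] = kv_block
        self.hot.move_to_end(block_id)  # mark as most recently used
        self._evict()

    def get(self, block_id):
        if block_id in self.hot:
            self.hot.move_to_end(block_id)
            return self.hot[block_id]
        # Cold hit: promote the block back into the hot tier.
        kv_block = self.cold.pop(block_id)  # raises KeyError if unknown
        self.put(block_id, kv_block)
        return kv_block

    def _evict(self):
        # Demote least recently used blocks until the hot tier fits.
        while len(self.hot) > self.hot_capacity:
            old_id, old_block = self.hot.popitem(last=False)
            self.cold[old_id] = old_block

cache = TieredKVCache(hot_capacity=2)
for block_id in ["a", "b", "c"]:
    cache.put(block_id, f"kv-{block_id}")
fetched = cache.get("a")  # "a" was demoted, so this promotes it and demotes "b"
print(list(cache.hot), list(cache.cold))
```

The trade-off is latency for capacity: blocks in the cold tier cost a transfer to fetch, so the design only pays off if most lookups hit the hot tier.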

Game on.

Silicon Valley has its own 996 grind, and yes, they’re hustling, but DeepSeek’s ecosystem keeps snowballing. Zhipu has IPO’d, and MiniMax too. Reuters reports rivals piling on with consumer-friendly models since DeepSeek’s January 2025 shake-up. Open weights ship products; closed models ship hype.

Here’s the energy: U.S. agents write tidy code; Chinese ones push harder into agentic territory: browsers, RL, hardware hacks. There’s no U.S. equivalent to this open frenzy. Prediction? V4 sparks ‘DeepSeek agents’ flooding dev tools by summer. Your IDE? Powered by Beijing brains.

Will DeepSeek V4 Close the OpenAI Gap for Good?

Gap? It’s shrinking fast. o1 reasons step by step; V4 is pitched to reason across megacontexts without hallucinating history. Tony Peng’s bullish, and I’m doubling down. This bifurcates AI for good: closed for chatbots, open for infrastructure. It’s the cloud vs. on-prem split all over again, and open wins on scale.

Critique time. DeepSeek’s PR? Stealthy, not splashy. No Davos keynotes. Just drops that ripple. U.S. spins ‘frontier’; China ships it open. Who’s really leading?

DeepSeek-R2 is still weeks out, but V4’s the appetizer. The ecosystem buzz: reinforcement learning tweaks and software stacks bleeding into rivals’ work. China’s AI chip catch-up? HBM is flowing and semiconductor output is surging. The ascent looks locked in.

Remember DeepSeek’s 2025 moment? A low-cost stunner. Now? A full ecosystem. Tony Peng and Grace Shao, both close to the ground, hint that some capabilities are still under wraps.

So, yeah — wonder hits. AI’s platform shift roars louder. DeepSeek V4? Proof positive.

Why Developers Can’t Ignore DeepSeek V4

Multi-file refactors. Agentic browsers on steroids. Cheap runs. If you’re building, fork V4 yesterday.

Bold call: By 2027, 60% of agent startups run on a DeepSeek base. Open source snowballs while closed U.S. models crumble under cost.

Energy peaks. Pace quickens. Future? Chinese fireworks lighting AI’s sky.

Frequently Asked Questions

What is DeepSeek V4?

DeepSeek V4 is the next open-source LLM from China’s DeepSeek, featuring 1M+ token contexts via DeepSeek Sparse Attention (DSA), Engram conditional memory, and roughly 40% lower GPU memory use from tiered KV cache storage.

When does DeepSeek V4 launch?

Rumors point to a launch around Lunar New Year, February 17, 2026. Codenamed MODEL1, it’s billed as a full architecture overhaul.

How does DeepSeek V4 beat previous models?

Massive context for codebases, stable training with mHC, and efficient KV cache — all open-weight, runnable on modest hardware.