Large Language Models

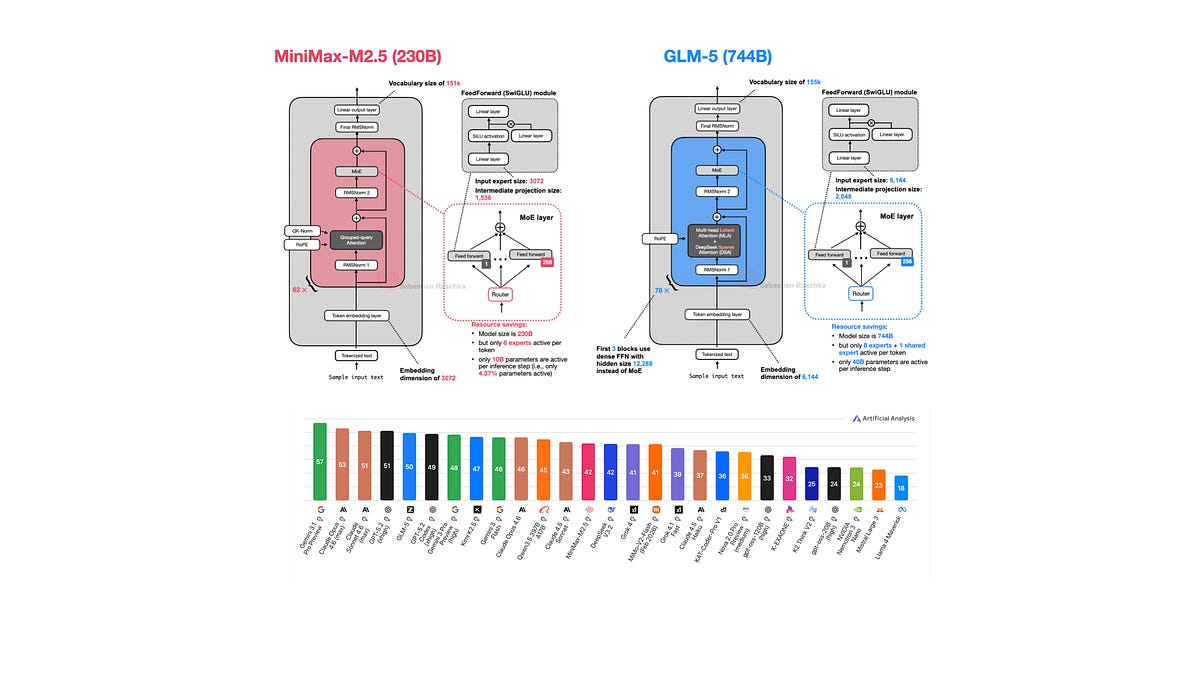

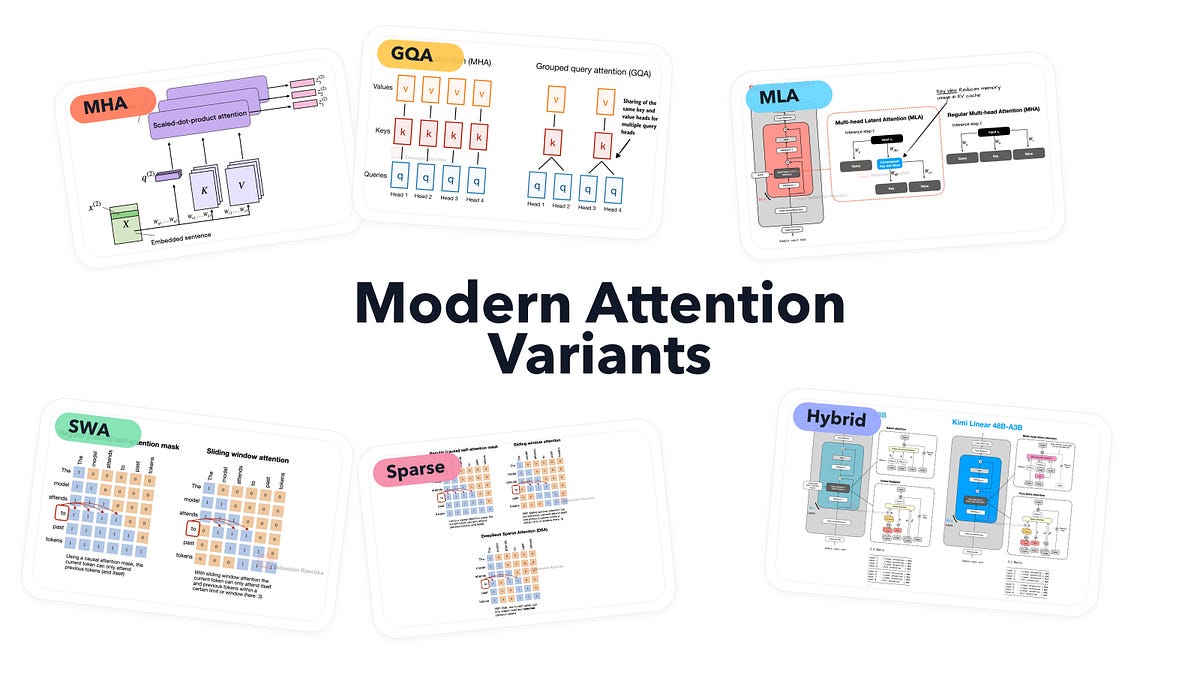

Attention Variants Mapped: Efficiency Wars in LLMs

Attention mechanisms in LLMs aren't static relics; they're battlegrounds for speed and scale. Sebastian Raschka's new gallery reveals the winners.