So, have you ever stopped to consider what’s really happening under the hood when a massive AI model spends far more compute for only slightly better results? These trade-offs can feel less like engineering and more like alchemy. The latest brouhaha centers on DeepSeek V4, a model suite that has stirred the pot by offering wildly different price points and, apparently, wildly different results. The question isn’t just whether one model is better, but why and how a significantly cheaper option can punch so far above its weight class.

Here’s the thing: companies love to tout their flagship models, the ones boasting the highest parameter counts, the most expensive training runs, the “Pro-Max” editions. They’re the Ferraris of AI, and they cost accordingly. But what if the true innovation isn’t in the sheer scale, but in the cleverness of the architecture? That’s precisely the narrative emerging from the DeepSeek V4 tests. The headline grabber? The $0.04 per million tokens ‘Flash’ model winning a staggering 7 out of 20 real-world tasks when compared against its beefier counterparts.

The KV Cache Trick Nobody Saw Coming

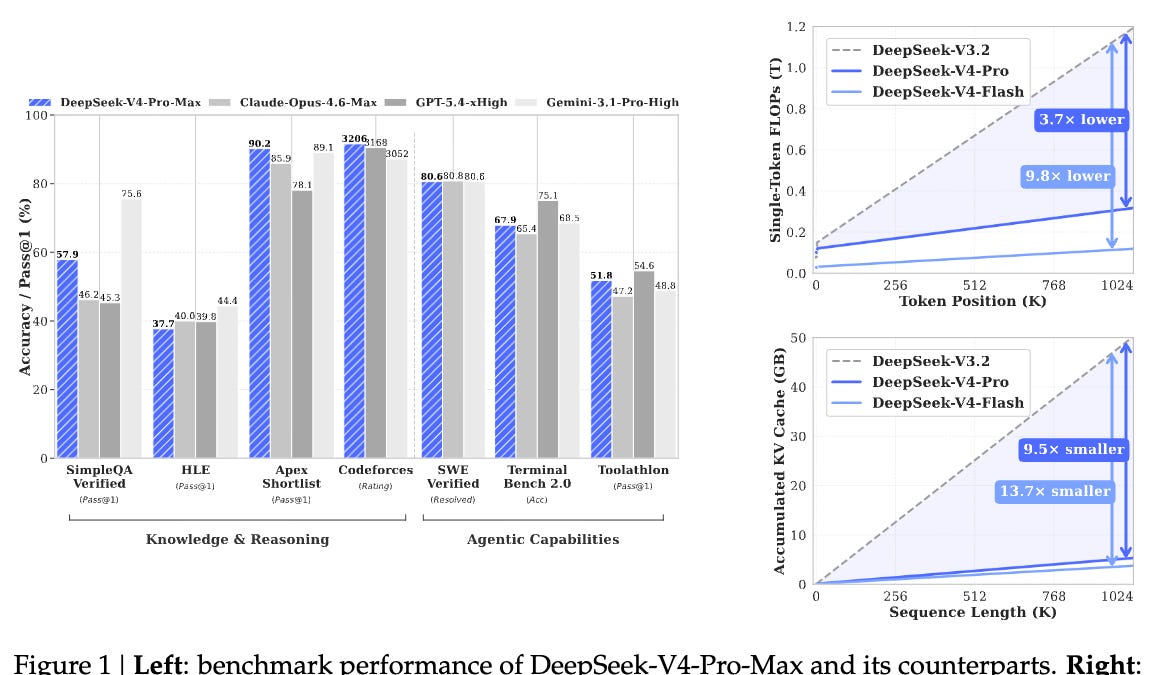

This is where things get juicy. The original piece highlights a “10% KV cache trick” that most people apparently overlooked. For the uninitiated (and even for some who think they know), the KV cache is the component of a transformer that stores the key and value states already computed for earlier tokens in the attention layers. With it, generating each new token only requires computing that token’s own keys and values instead of redoing the whole sequence, which speeds up inference dramatically. Imagine a chef who chops the vegetables once and keeps them on the counter rather than re-chopping everything for every dish: that’s the KV cache. Now imagine improving that setup by, say, 10% using a novel technique.

It sounds small, right? A 10% optimization. But in the hyper-competitive, razor-thin-margin world of AI inference, 10% can mean the difference between a profitable service and a money pit. And in this case, that 10% trick seems to have disproportionately benefited the smaller, faster models, allowing them to achieve parity, or even superiority, in certain tasks. It’s a brilliant illustration of how architectural tweaks can sometimes dwarf raw computational power.
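To make the caching idea concrete, here is a minimal sketch of single-head attention decoding with and without a KV cache. It is a toy in plain Python, not DeepSeek’s implementation: the `project` helper and its scale factors are hypothetical stand-ins for learned projection matrices, and nothing here reproduces the “10% trick” itself, which this article does not describe in detail.

```python
import math


def project(x, scale):
    # Hypothetical stand-in for a learned key/value projection matrix.
    return [scale * xi for xi in x]


def attend(query, keys, values):
    # Scaled dot-product attention for a single query vector.
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    top = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    width = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(width)]


def decode_without_cache(tokens):
    # Recomputes keys and values for the whole prefix at every step.
    outputs, projections = [], 0
    for t in range(1, len(tokens) + 1):
        keys = [project(x, 0.5) for x in tokens[:t]]
        values = [project(x, 2.0) for x in tokens[:t]]
        projections += t  # one key/value pair per prefix token
        outputs.append(attend(tokens[t - 1], keys, values))
    return outputs, projections


def decode_with_cache(tokens):
    # Computes each token's key and value once, then reuses them.
    cached_keys, cached_values = [], []
    outputs, projections = [], 0
    for x in tokens:
        cached_keys.append(project(x, 0.5))
        cached_values.append(project(x, 2.0))
        projections += 1
        outputs.append(attend(x, cached_keys, cached_values))
    return outputs, projections


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
slow_outputs, slow_count = decode_without_cache(tokens)
fast_outputs, fast_count = decode_with_cache(tokens)
print(slow_count, fast_count)
```

Both paths produce the same attention outputs, but the uncached version performs a number of key/value projections that grows quadratically with sequence length, while the cached version performs one per token. The price of that saving is memory: the cache grows with every token, which is why trimming it is an attractive target for optimization.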

Why Did Pro-Max Burn 4.3x More Tokens?

This is the kicker, the detail that screams ‘something’s not right’. The Pro-Max edition, presumably the top-tier offering, consumed a whopping 4.3 times more tokens for a mere 2-point gain in performance on certain metrics. Let that sink in. You’re paying a premium, you’re using vastly more computational resources, and you’re getting a marginal improvement. This isn’t just inefficient; it’s a sign that the Pro-Max might be over-engineered for many common use cases, or perhaps its optimizations are misaligned with the tasks it’s being pushed to perform. It’s like bringing a bazooka to a knife fight – overkill, expensive, and potentially less precise.
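The arithmetic behind that complaint is worth a quick look. The sketch below uses the two figures from the test (4.3x the tokens, a 2-point gain) and assumes everything else: the baseline token count is hypothetical, and `price_ratio` defaults to 1.0 only because this article doesn’t give Pro-Max’s per-token price.

```python
def relative_cost(token_ratio, price_ratio=1.0):
    # Spend multiplier versus the baseline: more tokens times a pricier rate.
    return token_ratio * price_ratio


def extra_tokens_per_point(baseline_tokens, token_ratio, point_gain):
    # Additional tokens consumed for each benchmark point gained.
    return baseline_tokens * (token_ratio - 1.0) / point_gain


TOKEN_RATIO = 4.3  # from the test: Pro-Max used 4.3x the tokens
POINT_GAIN = 2     # from the test: for a 2-point improvement
BASELINE_TOKENS = 1_000_000  # hypothetical workload size, for illustration only

cost_multiplier = relative_cost(TOKEN_RATIO)
marginal_tokens = extra_tokens_per_point(BASELINE_TOKENS, TOKEN_RATIO, POINT_GAIN)
print(f"{cost_multiplier:.1f}x spend at equal per-token price")
print(f"{marginal_tokens:,.0f} extra tokens per point gained")
```

Even under the generous assumption of identical per-token pricing, the bill is 4.3 times larger. Any price premium on the bigger model multiplies on top of that, so setting `price_ratio` above 1.0 only makes the picture worse.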

The DeepSeek V4 architecture, it seems, is more nuanced than a simple parameter count comparison would suggest. The ‘Flash’ model isn’t just cheap; it’s lean and mean, optimized through clever design choices. This contrasts sharply with models that rely on sheer scale and brute force, often leading to diminishing returns and inflated costs. The question then becomes: how much of this ‘Pro-Max’ premium is actual added value, and how much is just the cost of bloated complexity?

Is the Cheapest Model Always the Best Bet?

No, not necessarily. But in the context of DeepSeek V4’s testing, the results are compelling enough to warrant serious consideration. The real takeaway here isn’t that $0.04 is the magic number for all AI tasks. It’s that the efficiency of the model, driven by smart architectural decisions like the KV cache optimization, can be a far more potent differentiator than sheer size. For developers and businesses looking to deploy AI at scale, this data suggests a shift in focus: from chasing the largest models to understanding which models are the most appropriately sized and optimized for their specific needs.
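One way to act on that advice is to pick the cheapest model that clears your own quality bar, rather than the highest scorer. The sketch below is purely illustrative: apart from the $0.04 per million tokens figure quoted for Flash, every name, price, token count, and score is made up, and `cheapest_adequate` is a hypothetical helper, not part of any DeepSeek tooling.

```python
class Candidate:
    def __init__(self, name, price_per_million, tokens_per_task, score):
        self.name = name
        self.price_per_million = price_per_million  # USD per million tokens
        self.tokens_per_task = tokens_per_task
        self.score = score

    def cost_per_task(self):
        # Dollars spent on one task at this model's token usage.
        return self.price_per_million * self.tokens_per_task / 1_000_000


def cheapest_adequate(candidates, min_score):
    # Keep only models that meet the quality bar, then minimize cost per task.
    adequate = [c for c in candidates if c.score >= min_score]
    if not adequate:
        return None
    return min(adequate, key=lambda c: c.cost_per_task())


# Hypothetical numbers for illustration; only the 0.04 price comes from the article.
candidates = [
    Candidate("small", 0.04, 10_000, 80.0),
    Candidate("large", 0.40, 43_000, 82.0),
]

choice = cheapest_adequate(candidates, min_score=75.0)
print(choice.name if choice else "no model meets the bar")
```

Raise `min_score` above what the small model achieves and the larger one wins, which is the point: the right answer depends on the threshold your task actually needs, not on the leaderboard.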

The implication is profound. We might be moving away from an arms race of parameter counts toward an era of specialized, efficient AI. The models that win won’t just be the biggest; they’ll be the smartest in their design. This test serves as a stark reminder that innovation often comes from understanding the fundamental mechanics, not just throwing more hardware at the problem. It’s a human-centric insight in a machine-driven world: the clever hack, the elegant solution, can often trump brute force.

Frequently Asked Questions

What is DeepSeek V4 Flash? DeepSeek V4 Flash is the low-cost model in the DeepSeek V4 suite, priced at $0.04 per million tokens. In the test discussed here, it won 7 of 20 real-world tasks against its pricier counterparts.

How does DeepSeek V4 Flash beat the pricier models? It reportedly benefits from a novel “10% KV cache trick” that improves computational efficiency, allowing it to deliver strong results at lower cost and with fewer tokens than more resource-intensive options like Pro-Max.

Will this make AI cheaper for everyone? Not automatically. This test shows significant cost savings on certain tasks with DeepSeek V4, and if other developers adopt similar optimization techniques, the broader trend toward more efficient architectures could make AI services more affordable overall. One test is an encouraging signal of that potential, not proof of it.