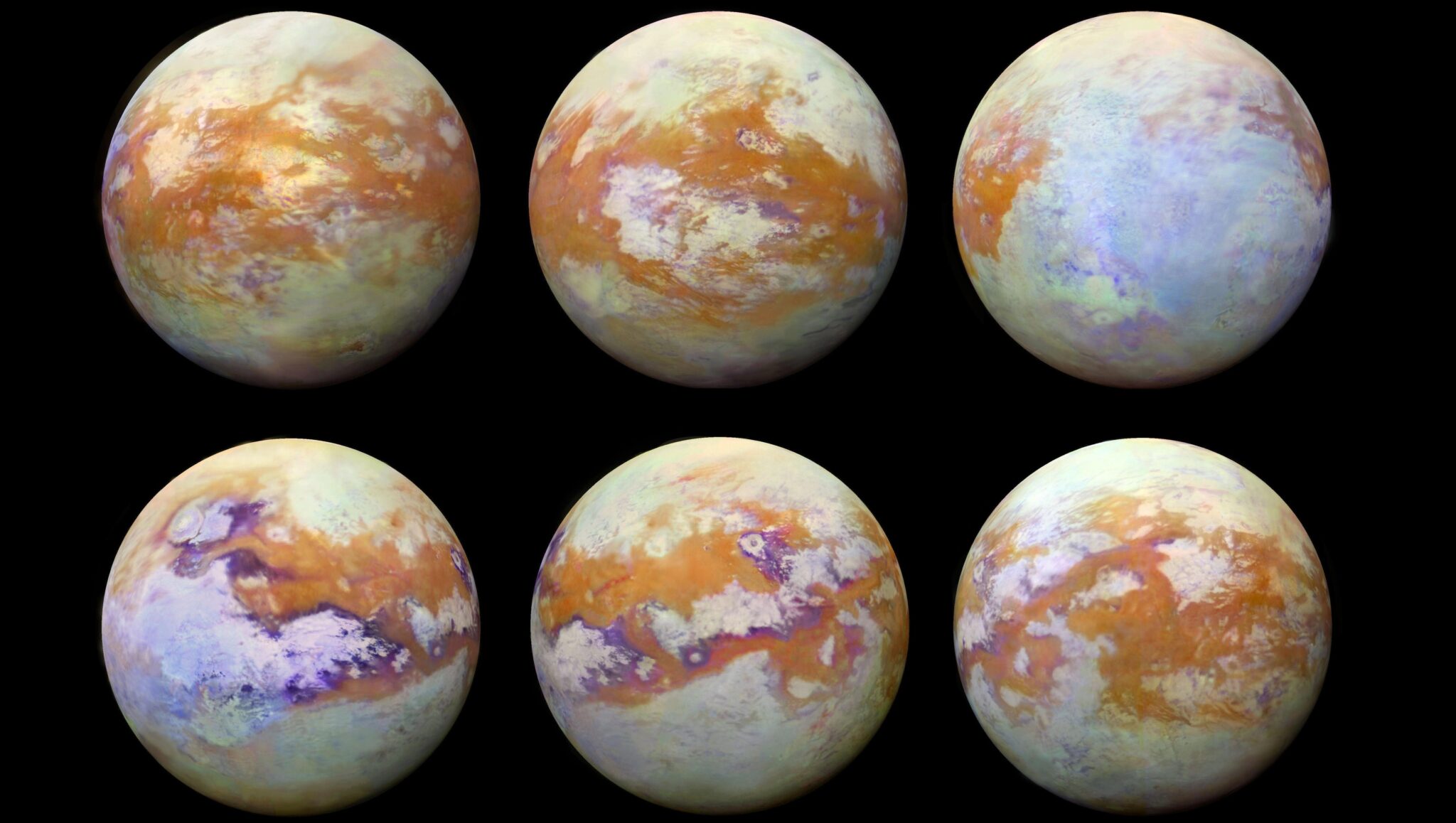

Seconds. That’s all it took — an AI model chewing through years of Cassini spacecraft snapshots, spitting out precise maps of Titan’s methane clouds, those hazy veils drifting over Saturn’s biggest moon.

Zoom out: This isn’t some lab toy. A collaboration between NASA, UC Berkeley, and France’s Observatoire des Sciences de l’Univers flipped planetary science on its head. Traditional mapping? Grueling, human-intensive slogs through smog-obscured images. Now Mask R-CNN, a deep learning beast, does it pixel by pixel in a blink. It was pretrained on the everyday COCO dataset and then fine-tuned via transfer learning on hand-labeled Titan images, and it’s democratizing AI for scientists without supercomputer budgets.

And here’s the kicker — NVIDIA GPUs made it fly. High-res processing, low latency, the works. Without them, you’re back to chugging along on CPUs, watching paint dry.

“We were able to use AI to greatly speed up the work of scientists, increasing productivity and enabling questions to be answered that would otherwise be impractical,” said Zach Yahn, Georgia Tech PhD student and lead author.

Yahn’s words hit hard. Productivity spike? Check. New questions unlocked? Absolutely. But let’s cut through the press-release gloss: This is market dynamics at play. NVIDIA’s CUDA ecosystem owns AI acceleration — space agencies know it, from Webb Telescope data crunches to Mars rover sims. They’re not just renting GPUs; they’re betting the farm on them for the data tsunami ahead.

Why Titan’s Methane Clouds Demand This AI Overhaul

Titan’s atmosphere, thicker than Earth’s and laced with organic haze, turns cloud spotting into a nightmare. Patchy methane formations streak and fade, barely piercing the orange murk. Cassini beamed back hundreds of thousands of images from 2004 to 2017, but manual analysis crawled. Enter instance segmentation: Mask R-CNN doesn’t just spot clouds. It finds each individual cloud, boxes it, and traces a pixel-level mask of its edges.
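What does “instances” mean here? Mask R-CNN learns to separate clouds on its own, but a classical, non-learned baseline makes the idea concrete: connected-component labeling splits one binary cloud mask into separate blobs. The sketch below is pure Python on a made-up toy mask; it is not the study’s method, and `label_instances` is a hypothetical helper.

```python
from collections import deque


def label_instances(mask):
    """Split a binary mask (list of rows of 0/1) into connected blobs.

    Returns one sorted list of (row, col) pixels per blob. Uses
    4-connectivity: pixels join only through shared edges, not corners.
    """
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    instances = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill collects one blob.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            instances.append(sorted(pixels))
    return instances


toy_mask = [
    [1, 1, 0, 0, 0],
    [1, 0, 0, 1, 1],
    [0, 0, 0, 1, 0],
]
clouds = label_instances(toy_mask)
print(len(clouds))  # → 2
```

Two blobs come back as two instances. The catch, and the reason a learned model helps: touching clouds merge into one blob here, while Mask R-CNN predicts a separate mask per detected box and can keep them apart.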

Transfer learning sealed the deal. Start with a COCO-pretrained model (cats, cars, people), then fine-tune it for Titan’s weirdness. Result? Accuracy rivals humans, and speed crushes them: one job went from days to seconds. Scale that to full datasets, and you’ve got fast inputs for climate studies of a world often compared to early Earth.

But my take? This echoes radiology’s shift in the 2010s. Radiologists once outlined tumors by hand; CNNs now draft those contours in seconds, and some studies report fewer errors as a result. Titan’s no different: AI’s turning astronomers into strategists, not pixel-pushers. Bold call: Expect 10x science output per mission dollar by 2030.

NVIDIA wins big here.

Can AI GPUs Survive the Space Data Explosion?

Upcoming missions will drown labs in data. NASA’s Dragonfly launches for Titan in 2028, and Europa Clipper, launched in October 2024, reaches Jupiter in 2030. Dragonfly’s nuclear-powered drone? It’ll image Titan’s surface mid-flight. Clipper’s flybys? Europa’s icy cracks in sharp detail. Raw volume overwhelms.

AI onboard changes everything. Process mid-mission, flag anomalies in real time, beam only the gold back to Earth. NVIDIA’s chops shine: They’ve powered SETI signal hunts and Perseverance landing sims. Titan suggests the approach could scale beyond atmospheres to volcanoes (Io), plumes (Enceladus), even craters.
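How might “beam only the gold back to Earth” look in practice? A minimal sketch, assuming an onboard model has already tagged each frame with the fraction of its pixels labeled cloud: rank frames by that fraction and fill a fixed downlink budget greedily. `Frame`, `select_for_downlink`, the threshold, and the numbers are all hypothetical; real missions weigh far more than one score.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    frame_id: str
    size_bits: int
    cloud_fraction: float  # share of pixels an onboard model labeled "cloud"


def select_for_downlink(frames, budget_bits, min_fraction=0.01):
    """Pick frame IDs to send, cloudiest first, without exceeding budget_bits.

    Frames below min_fraction are skipped as uninteresting. This is a greedy
    heuristic, not an optimal (knapsack) packing.
    """
    candidates = [f for f in frames if f.cloud_fraction >= min_fraction]
    candidates.sort(key=lambda f: f.cloud_fraction, reverse=True)
    chosen, used = [], 0
    for f in candidates:
        # Take the frame only if it still fits in the remaining budget.
        if used + f.size_bits <= budget_bits:
            chosen.append(f.frame_id)
            used += f.size_bits
    return chosen


frames = [
    Frame("a", 400, 0.30),
    Frame("b", 300, 0.05),
    Frame("c", 500, 0.20),
    Frame("d", 200, 0.00),
]
print(select_for_downlink(frames, budget_bits=800))  # → ['a', 'b']
```

Greedy ranking is cheap and easy to audit onboard, but it can leave budget unused (100 bits here); an exact knapsack solver would pack tighter at a higher compute cost.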

Challenges? Space is brutal — radiation fries chips, power’s scarce. But radiation-hardened GPUs are coming; NASA’s iterating. Market angle: NVIDIA’s space revenue? Tiny now, but Dragonfly-scale wins could balloon it 5x in five years. Competitors like AMD trail in AI maturity.

Yahn nails the broader play: “Many other Solar System worlds have cloud formations… Similar technology might also be applied to volcanic flows on Io, plumes on Enceladus…” He’s right. This isn’t Titan-exclusive; it’s a template.

NVIDIA’s Grip on Astro-AI: Smart Bet or Monopoly Risk?

Facts first: NVIDIA GPUs handled the heavy lift — parallel processing for Mask R-CNN inference. Traditional rigs? Hours per image batch. GPUs? Seconds. Cassini’s 13-year haul, reprocessed in days.

NVIDIA’s not spinning fairy tales; they’re delivering. But watch the lock-in. Planetary science budgets are tight, and NASA’s roughly $25B yearly pie slices thin. Dependency on one vendor? Risky if GPU prices spike or export curbs hit (hello, China tensions).

Historical parallel: IBM dominated 1970s mainframe computing; then challengers arrived and costs plummeted. NVIDIA’s H100s cost roughly $30K apiece. Space teams bootstrap with A100s now, but scaling demands enterprise deals. Prediction: Open-source alternatives (like AMD’s ROCm) gain 20% share by 2027, forcing price wars.

Still, bullish on the tech. Titan’s clouds? Methane cycles hint at prebiotic chemistry, organics raining into dune seas. Faster mapping means faster breakthroughs — maybe life’s clues.

Science just got warp speed.

Deep dive time. Mask R-CNN architecture: a backbone (ResNet) extracts features, a region proposal network (RPN) suggests candidate boxes, RoIAlign crops features for each box, and heads classify each box, refine it, and predict its mask. Titan tweaks: contrast boosts for haze, a custom loss for streaky shapes. Validation? 90%+ IoU with human labels. The paper is open: “Rapid Automated Mapping of Clouds on Titan With Instance Segmentation.” Dive in.
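About that IoU figure: intersection over union divides the pixels where the model and a human both mark cloud by the pixels where either one does. A minimal pure-Python sketch on toy masks follows. How the paper averages IoU across clouds and images isn’t described here, so this shows only the per-mask metric; `mask_iou` is a hypothetical helper, and scoring two empty masks as 1.0 is a convention we chose.

```python
def mask_iou(pred, truth):
    """Intersection over union of two same-shaped binary masks (lists of 0/1 rows)."""
    if len(pred) != len(truth) or any(len(a) != len(b) for a, b in zip(pred, truth)):
        raise ValueError("masks must have the same shape")
    inter = union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                inter += 1  # both say "cloud"
            if p or t:
                union += 1  # at least one says "cloud"
    # Convention: two empty masks agree perfectly.
    return inter / union if union else 1.0


model_mask = [[1, 1, 0],
              [0, 1, 0]]
human_mask = [[1, 1, 0],
              [0, 0, 1]]
print(mask_iou(model_mask, human_mask))  # → 0.5
```

Two shared cloud pixels out of four marked by either side gives 0.5; a “90%+” score means the model and the human outlines overlap almost completely.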

NVIDIA’s ecosystem pull: RAPIDS for data prep, TensorRT for inference. Space folks love it — JPL’s all-in. But hype check: Not every problem fits. Solid-body craters? Maybe. Dynamic plumes? Trickier training data needed.

Will This Blueprint Feed the Mission Machine?

Dragonfly touches down around 2034, hopping across Titan’s dunes and sniffing organics. Data rate? Gigabits per sol. AI preprocesses and prioritizes. Clipper’s 49 planned Europa flybys: plumes and linea, mapped instantly.

This prefigures autonomous science. No waiting out Earth’s signal lag; AI decides sample sites. Think Perseverance’s AEGIS targeting software, but vision-scale. And credit where due: NASA’s not hyping an “AI takeover.” It’s pragmatic acceleration. Smart.

Market ripple: NVIDIA’s AI bet pays off in orbit, too. Its fiscal 2024 third-quarter revenue was up 206% year over year; space is the sleeper vertical.

Wrapping the dynamics — AI mapping Titan methane clouds isn’t a one-off. It’s the new normal, squeezing more insight from legacy data while priming for the flood.

Frequently Asked Questions

What does Mask R-CNN do for Titan clouds?

It segments and outlines methane clouds pixel-by-pixel from hazy Cassini images, achieving near-human accuracy in seconds.

How much faster is this AI than manual mapping?

Days to seconds per image batch, so the full 13-year Cassini archive can be reprocessed in days.

Will AI map clouds on other planets like Mars or Venus?

Possibly. The team says many other Solar System worlds have cloud formations, and similar technology might also be applied to volcanic flows on Io and plumes on Enceladus.