Hammer. Hammer. Hammer.

Your GPU’s memory rows light up like a deranged percussionist at a death metal concert, bits flipping from 0 to 1 in a frenzy nobody saw coming. And just like that—in a shared cloud rig worth eight grand—a nobody user climbs to god-mode, root access to the whole damn host machine.

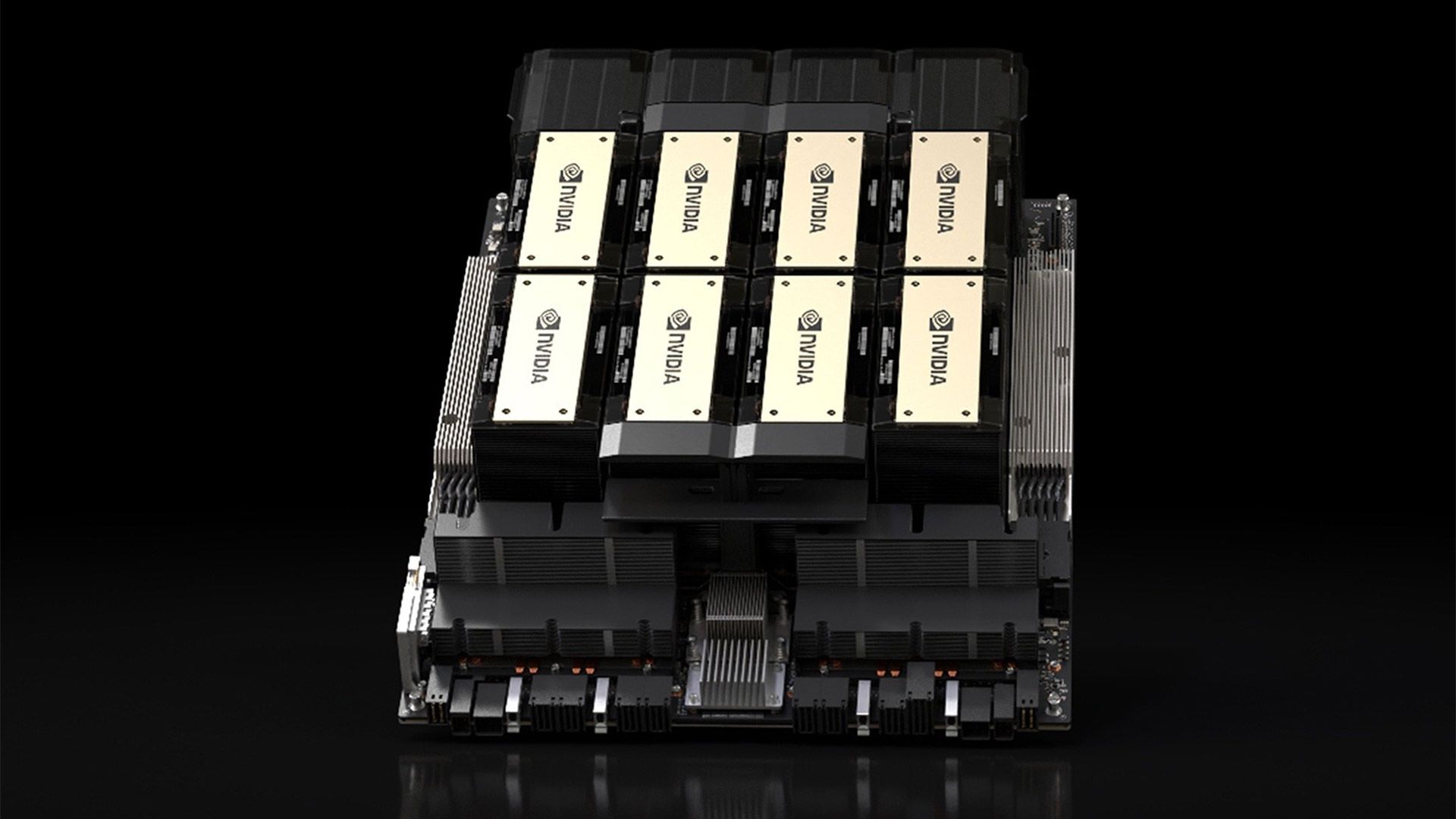

Zoom out. This isn’t some dusty CPU relic from 2014. We’re talking Nvidia’s Ampere GPUs, the heart-pounding engines powering today’s AI revolution, now cracked wide open by three brutal new Rowhammer attacks.

Rowhammer? It’s the ghost in the DRAM machine. Hammer one row of memory cells too hard, too fast, and the electrical chaos leaks over—flipping innocent bits in neighboring rows. Back in the day, it was a curiosity. Now? A full-system killshot on high-end GDDR memory.

## Remember When Sharing Meant Dial-Up Modems?

Think back to the wild early internet—everyone piling onto clunky mainframes, trusting the sysadmin not to snoop. Fast-forward to now: cloud hyperscalers cram dozens of users onto single Nvidia A100s or H100s because, hey, who can afford their own? But here’s my hot take, the one nobody’s shouting yet: these attacks echo the Morris Worm of ‘88, that first big internet wake-up call. Except this time, it’s not buggy code—it’s physics betraying silicon itself. And for AI datacenters? It’s a prediction I’ll stake my byline on: we’re barreling toward mandatory secure memory enclaves as standard, or watch your frontier models leak like sieves.

Two independent research squads dropped these bombshells Thursday. One team zeroed in on the A100; the other, the A40. Both used souped-up hammering patterns—think Rowhammer on steroids, with feng shui precision to dodge mitigations like Target Row Refresh.

The kicker? They don’t just mess with the GPU. No, the flips become a springboard into CPU memory, thanks to sneaky DMA tricks that work when the IOMMU is off (the default BIOS setting, oops). Full root. Game over.

“The researchers achieved only eight bitflips, a small fraction of what has been possible on CPU DRAM, and the damage was limited to degrading the output of a neural network running on the targeted GPU.”

That’s last year’s modest GPU poke, from the original GDDR proof-of-concept. Cute, right? These new ones? They shatter that ceiling, chaining flips for kernel-level pwnage.

But—hold up—Nvidia’s not spinning fairy tales here. No press release flood, just crickets. Smart? Or sweating the AI gold rush turning rusty?

## How Exactly Do Rowhammer Attacks Flip GPU Bits?

Start with the basics, supercharged for GPUs. The GDDR6 memory on Ampere cards like the A40 packs denser cells than your laptop’s DDR4: more juice, more drama. Attackers craft access patterns: hammer the same rows tens of thousands of times between refreshes, then watch the victim row twitch.
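To make the hammering idea concrete, here is a toy model, not attack code and not the researchers' method. It assumes a made-up bank of eight rows and a made-up flip threshold of 20,000 neighbour activations per refresh window; real thresholds vary by chip and the article doesn't give one. `ToyBank` and `hammer` are hypothetical names.

```python
class ToyBank:
    """Toy DRAM bank: tracks how much each row has been disturbed by
    activations of its neighbours since the last refresh."""

    def __init__(self, rows=8, threshold=20_000):
        self.rows = rows
        self.threshold = threshold      # hypothetical value, not a measurement
        self.disturbance = [0] * rows
        self.flipped = set()            # rows that have suffered a bit flip

    def activate(self, row):
        # Opening a row disturbs the rows physically next to it.
        for victim in (row - 1, row + 1):
            if 0 <= victim < self.rows:
                self.disturbance[victim] += 1
                if self.disturbance[victim] >= self.threshold:
                    self.flipped.add(victim)

    def refresh(self):
        # A refresh recharges the cells, so accumulated disturbance resets.
        # Bits that already flipped stay flipped.
        self.disturbance = [0] * self.rows


def hammer(bank, aggressors, activations_per_window):
    """Alternate between aggressor rows for one refresh window,
    then refresh and report which rows flipped."""
    for i in range(activations_per_window):
        bank.activate(aggressors[i % len(aggressors)])
    flipped = sorted(bank.flipped)
    bank.refresh()
    return flipped
```

With these invented numbers, `hammer(ToyBank(), [2, 4], 30_000)` returns `[3]`: the row sandwiched between both aggressors soaks up every activation and crosses the threshold, while rows 1 and 5 only see half of them. Drop to 10,000 activations and nothing flips before the refresh wipes the slate, which is why attackers care so much about squeezing activations into one window.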

They map the die first—brute-force probing bank layouts, dodging ECC with multi-bit chaos. Then, the magic: GPU-initiated memory ops flood the bus, bit flips ripple host-side. IOMMU off? You’re toast. (Pro tip: flip that BIOS bit yesterday.)

One team’s “SMASH” attack uses streaming multiprocessors like precision artillery. The other’s “GPU.fail” leans on row pressing: holding a row open for long stretches instead of slamming it open and shut. Both land dozens of flips, enough to rewrite kernel structs and spawn root shells.
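Why would a few dozen flips be enough? A quick sketch of what one flipped bit does to a pointer-like field helps. The article doesn't say which structures the researchers corrupted, so this assumes an x86-64-style page-table entry (frame address in bits 12 to 51) purely as an illustration. The entry value and the bit index are invented.

```python
PAGE_SHIFT = 12  # 4 KiB pages
# Mask selecting bits 12..51, where this assumed layout keeps the frame address.
FRAME_MASK = ((1 << 52) - 1) & ~((1 << PAGE_SHIFT) - 1)


def frame_address(entry):
    """Physical address of the page frame an entry points at."""
    return entry & FRAME_MASK


def flip_bit(value, bit):
    """Return value with a single bit inverted, as a Rowhammer flip would."""
    return value ^ (1 << bit)


# Hypothetical entry: frame at 0x123456000 plus some low flag bits.
entry = 0x0000_0001_2345_6027
corrupted = flip_bit(entry, 20)

before = frame_address(entry)      # 0x123456000
after = frame_address(corrupted)   # 0x123556000
```

One flip in bit 20 leaves the flag bits alone but moves the mapping by exactly 1 MiB, so the entry now points at memory its owner was never meant to reach. Land that on the right structure and the rest of the privilege escalation is bookkeeping.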

It’s poetry in peril. Like a pickpocket in a mosh pit—everyone’s slamming, but you’re the one lighter.

And the cloud angle? Brutal. AWS, GCP, Azure—your multi-tenant GPU pods are petri dishes for this. One bad actor flips the server for all.

## Will Rowhammer Kill Shared GPUs for AI Training?

Nah—not yet. But it’ll scar ‘em deep.

These attacks need co-location on the same host, rare but rising with spot instances and cost-cutting. Mitigations exist: Nvidia’s pushing confidential computing, MIG partitioning. But defaults lag—IOMMU, TRR tweaks, they’re bandaids on a bullet wound.
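If you run the hardware yourself, a first step is simply knowing what your cards are set to. The sketch below shells out to `nvidia-smi` and reads the `ecc.mode.current` and `mig.mode.current` query fields. The helper names are hypothetical, and nothing here proves a machine is safe: it only reports settings that are switched off.

```python
import csv
import io
import subprocess

QUERY = "name,ecc.mode.current,mig.mode.current"


def parse_gpu_modes(csv_text):
    """Parse `nvidia-smi --format=csv,noheader` output into dicts."""
    gpus = []
    for row in csv.reader(io.StringIO(csv_text), skipinitialspace=True):
        if len(row) != 3:
            continue  # skip blank or malformed lines
        name, ecc, mig = (cell.strip() for cell in row)
        gpus.append({"name": name, "ecc": ecc, "mig": mig})
    return gpus


def query_gpu_modes():
    """Ask the local driver for each GPU's ECC and MIG mode."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_modes(result.stdout)


def flag_disabled(gpus):
    """Names of GPUs where ECC or MIG is explicitly reported as Disabled.
    Cards that report [N/A] (feature unsupported) are not flagged."""
    return [g["name"] for g in gpus
            if g["ecc"] == "Disabled" or g["mig"] == "Disabled"]
```

On a box with the driver installed, `flag_disabled(query_gpu_modes())` would list the cards worth a second look. Remember the caveat from the attack write-ups: multi-bit flips can slip past ECC, so a clean report is a starting point, not a verdict.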

Here’s the wonder: AI’s platform shift demands this power. GPUs aren’t toys; they’re warp drives for intelligence explosion. Yet physics fights back—Rowhammer’s just the latest reminder that scaling hits walls of entropy.

My bold call? By 2026, expect Hopper and Blackwell GPUs with baked-in Rowhammer shields—quantum-dot memory hybrids or optical DRAM. Or competitors like AMD’s MI300X leapfrog with air-gapped designs. Nvidia’s GPU moat? Crumbling under bit-flip fire.

Researchers stress that a real-world exploit takes hours of hammering amid noisy neighbors. But in stealth mode? Automate it across fleets. Steal models, inject backdoors, ransom the rig.

Energy pulses through me—this vulnerability isn’t doom; it’s evolution’s prod. AI won’t pause for leaky memory. We’ll invent around it, faster, fiercer.

Cloud providers scramble already—Google’s got TEEs, Microsoft’s Open Enclave. But for raw throughput? Shared GPUs stay king… until they don’t.

Wider lens: Rowhammer’s decade arc mirrors AI itself. From toy demos to world-eaters. CPU to GPU. DDR3 to GDDR6X. Each leap invites sharper spears.

So what’s next? Half-hearted patches? Or a memory renaissance?

I vote revolution.

## The Road from Hammers to a Hardened AI Future

Patch now: Enable IOMMU. Partition GPUs. Audit tenants.
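Not sure whether the IOMMU is actually on? On Linux, a rough check is whether the kernel has populated `/sys/kernel/iommu_groups` and which IOMMU-related flags it was booted with. This is a minimal sketch under those assumptions, with hypothetical function names. Populated groups don't guarantee DMA from the GPU is being translated (passthrough mode such as `iommu=pt` still creates groups), so treat it as a smoke test.

```python
from pathlib import Path

IOMMU_PREFIXES = ("intel_iommu=", "amd_iommu=", "iommu=")


def iommu_active(sysfs_root="/sys"):
    """True if the kernel has created at least one IOMMU group."""
    groups = Path(sysfs_root) / "kernel" / "iommu_groups"
    return groups.is_dir() and any(groups.iterdir())


def iommu_cmdline_flags(cmdline):
    """Pick the IOMMU-related options out of a kernel command line."""
    return [tok for tok in cmdline.split() if tok.startswith(IOMMU_PREFIXES)]


def read_cmdline(path="/proc/cmdline"):
    """Kernel command line of the running system (Linux only)."""
    return Path(path).read_text()
```

On a live host, `iommu_active()` plus `iommu_cmdline_flags(read_cmdline())` gives a quick picture. If the first comes back `False`, the BIOS or kernel setting is the place to start.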

But dream bigger. Imagine Rowhammer-proof memory as AI’s new baseline—neuromorphic chips sidestep DRAM drama entirely. We’re not fixing bugs; we’re forging the next epoch.

Thrilling times. Bits rebel, humans respond. Platform shift accelerates.

## Frequently Asked Questions

### What are Rowhammer attacks on Nvidia GPUs?

Rowhammer exploits flip bits in GPU memory by rapidly accessing nearby rows, letting attackers escalate privileges to full host control in shared cloud setups.

### How do you protect against GPU Rowhammer vulnerabilities?

Enable IOMMU in BIOS, use GPU partitioning like MIG, and stick to trusted multi-tenant providers with confidential computing.

### Can Rowhammer attacks steal AI models from cloud GPUs?

Potentially yes. Root access means dumping memory, exfiltrating weights, or worse, poisoning training runs.