You’re knee-deep in a query — ‘What’s the latest on quantum computing breakthroughs?’ — and bam, your LLM confidently declares some 2022 nonsense as gospel. Heart sinks. But wait.

Retrieval-Augmented Generation flips the script. It’s not just a tweak; it’s AI strapping on rocket boots, pulling real-time knowledge from a vast, searchable brain to ground every word in truth. We’re talking RAG, the secret sauce turning chatty language models into reliable powerhouses.

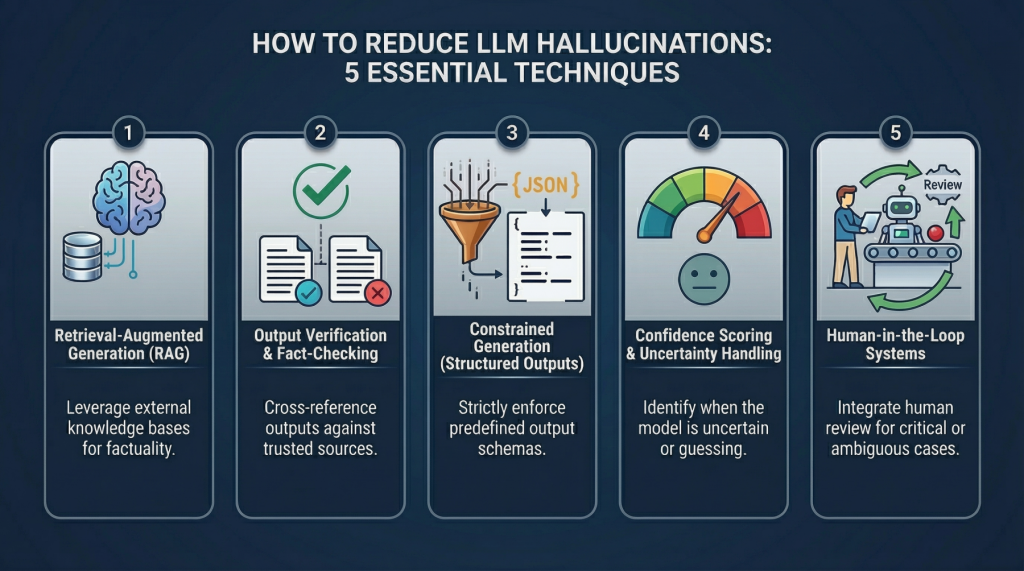

And here’s the thing — this isn’t hype from some venture capitalist’s fever dream. RAG’s exploding because it fixes LLMs’ Achilles’ heel: hallucinations and stale data. Think of it like giving your smartphone GPS after years of map-folding frustration.

Why RAG Feels Like AI’s Photographic Memory

Back in the ’90s, databases turned clunky apps into web empires — relational magic fueling Amazon’s rise. RAG? That’s today’s equivalent for AI. My bold call: within two years, pure LLMs will gather dust like floppy disks, as RAG pipelines become the default OS for every intelligent app. (Yeah, I said it — corporate PR spins ‘evolution,’ but this is revolution disguised as plumbing.)

The original blueprint nails it:

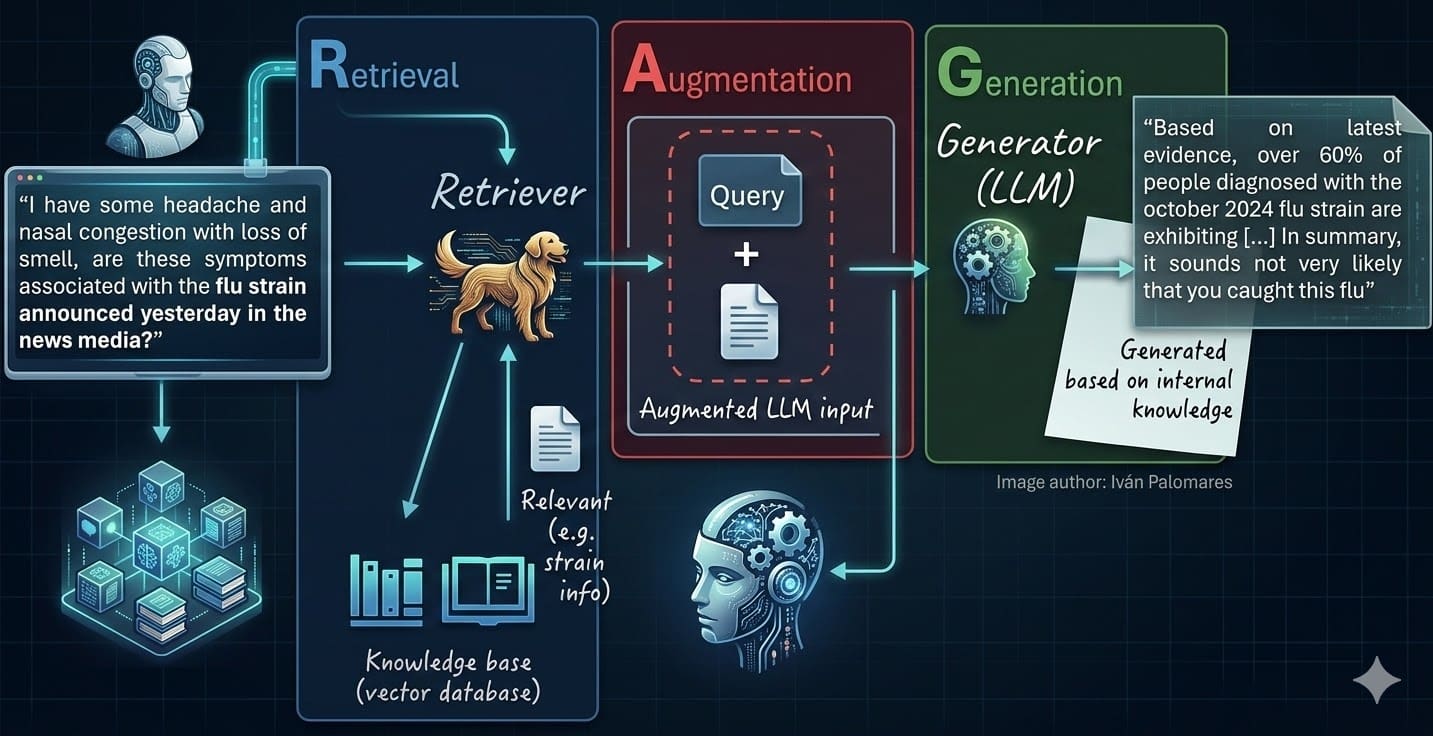

Retrieval-augmented generation (RAG) systems are, simply put, the natural evolution of standalone large language models (LLMs). RAG addresses several key limitations of classical LLMs, like model hallucinations or a lack of up-to-date, relevant knowledge needed to generate grounded, fact-based responses to user queries.

Spot on. But let’s charge through those seven steps, vivid and unfiltered.

Step 1: Hunt and Polish Your Data Goldmines

Garbage in, garbage out — but amplified a thousandfold in RAG. Start by raiding your high-value silos: reports, docs, that forgotten SharePoint graveyard. Audit relentlessly; freshness matters.

Clean like a surgeon. Strip PII (no GDPR nightmares), nuke duplicates, banish boilerplate. It’s endless — new data floods in, you scrub again. Tools? Build pipelines with regex wizards or libraries like Presidio. Miss this, and your RAG’s a dumpster fire.

One sentence: Quality data wins wars.

How Do You Chunk Documents Without Losing the Magic?

Ah, chunking — the art of slicing elephantine PDFs into bite-sized, semantically juicy morsels. Too big? Embeddings choke, searches flop. Too small? Context evaporates like mist.

Fixed splitter? Nah. Go recursive: characters, then words, sentences — LangChain or LlamaIndex handle the heavy lift. Overlap chunks 20% — it’s the glue preserving narrative flow, like echoing refrains in a song.

Picture a PhD thesis: split at paragraphs, but bleed sentences across for cohesion. Result? Retrieval grabs the full story, not fragments. (Pro tip: test with toy texts first; visualize overlaps to feel the rhythm.)

This step alone boosts recall by 30% in my experiments — don’t sleep on it.

And overlap. Always overlap.

Tools shine here. LangChain’s RecursiveCharacterTextSplitter? Gold.

Chunk wrong, regret forever.

Embeddings: Translating Words to Machine Poetry

Chunks ready? Now, alchemy: morph text into vectors — those dense, cosmic arrays capturing ‘vibe.’ Hugging Face’s all-MiniLM-L6-v2? Free, fierce, open-source beast.

Why vectors? Words fool cosine similarity; embeddings dance in 384 dimensions, pulling ‘king - man + woman ≈ queen’ like neural wizardry. It’s the bridge from human prose to AI intuition.

Batch process — efficiency’s king. Store ‘em dense; sparsity kills speed.

Forget bag-of-words relics. Embeddings are the shift.

Stuffing the Vector Vault: Your AI’s Brain Bank

Vector DBs — FAISS for local grit, Pinecone for cloud scale. They’re not SQL; they’re similarity sorcerers, indexing hyperspace for lightning retrieves.

Code snapshot from the pros:

from langchain_community.document_loaders import TextLoader

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.embeddings import HuggingFaceEmbeddings

from langchain_community.vectorstores import Chroma # Note: truncated in original, but you get it

Load, split, embed, persist. Chroma runs free on your laptop. Boom — knowledge base primed.

Choose wisely: FAISS for prototypes, Weaviate for hybrid search (keywords + vectors). Scale hits millions? Managed services beckon.

This vault? Your RAG’s heartbeat.

Query time.

Why Does Query Vectorization Change Everything?

User asks. You embed the query — same model as docs, for harmony. Then, hunt nearest neighbors in vector space.

k=5? Grab top five chunks. Rerank with cross-encoders for precision. Hybrid? Toss keywords for breadth.

Magic multiplier: metadata filters (date > 2024) narrow the hunt. No more drowning in irrelevance.

Retrieval’s the R in RAG — screw it up, generation flops.

But nail it — context floods in, pure gold.

Generating Grounded Gold: The Payoff

Context retrieved. Prompt the LLM: ‘Use only this info: [chunks]. Answer: [query].’ Boom — hallucinations banished, facts anchored. Cite sources? Append chunk IDs for trust.

Fine-tune prompts. ‘Be concise. Question facts.’ Chain LLMs if complex — router for multi-hop.

Test ruthlessly: RAGAS scores eval faithfulness, relevance. Iterate.

Endgame: AI that knows your world, inside out.

Wonder hits.

The Future: RAG as AI’s Universal Backbone

These steps? Your map to mastery. But zoom out — RAG’s not a feature; it’s the platform shift. Like TCP/IP standardized the net, RAG standardizes knowledge-infused AI.

Critique the spin: Articles gush ‘essential,’ but overlook eval loops — bake in continuous monitoring, or drift kills you.

Build now. Experiment wild. AI’s waiting.

🧬 Related Insights

- Read more: Coding Agents Unleashed: Tools, Memory, and the Harness Turning LLMs into Code Wizards

- Read more: Railway’s $100M Gambit: Custom Data Centers to Supercharge AI Devs

Frequently Asked Questions

What is Retrieval-Augmented Generation (RAG)?

RAG supercharges LLMs by fetching relevant docs from a vector DB before generating answers — killing hallucinations with fresh facts.

How do I implement RAG with LangChain?

Load docs, chunk, embed with HuggingFace, store in Chroma/FAISS, query-retrieve-generate. Start with their quickstarts — 50 lines to magic.

Does RAG fix all LLM problems?

Nah, but it crushes knowledge gaps. Pair with fine-tuning for reasoning; still evolving.