Humans preview.

That’s it. Two words. Before your foot lifts, your mind has already run the tape: coffee cup snatched, sip taken, crisis averted. Now PEVA (Predicting Ego-centric Video from human Actions, a.k.a. whole-body conditioned egocentric video prediction) yanks this human trick into AI’s toolkit, training models to foresee first-person footage from raw body kinematics.

And here’s the kicker: it’s not some toy sim in a padded room. Trained on Nymeria, a massive dataset syncing real-world egocentric clips with full-body mocap, PEVA autoregressively diffuses future frames conditioned on 48-dimensional action vectors: root translation (3 dims) plus 15 upper-body joints in Euler angles (45 dims), all pelvis-centered for that invariance sweet spot.

The authors’ own summary: “Our results show that, given the first frame and a sequence of actions, our model can generate videos of atomic actions (a), simulate counterfactuals (b), and support long video generation (c).”

Spot on. Atomic grabs. What-ifs. Marathon rollouts. This isn’t abstract pixels; it’s your shaky GoPro feed if you’d zigged left instead of right.

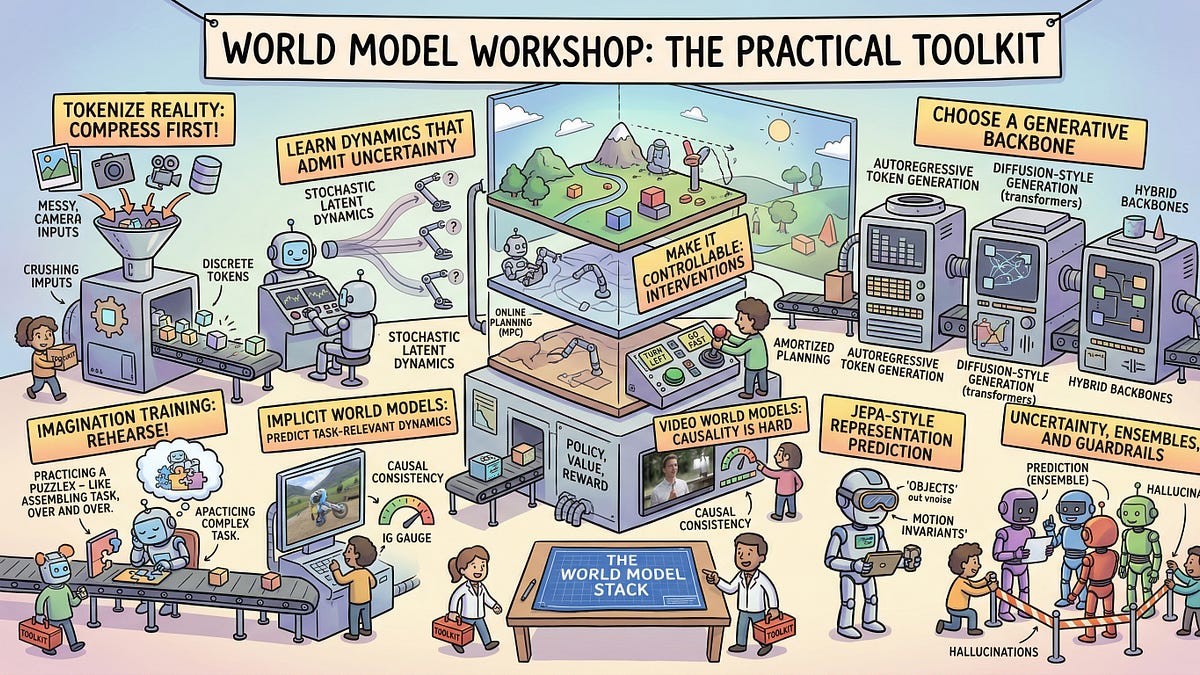

Why Egocentric Video Prediction Kicks Most World Models’ Ass

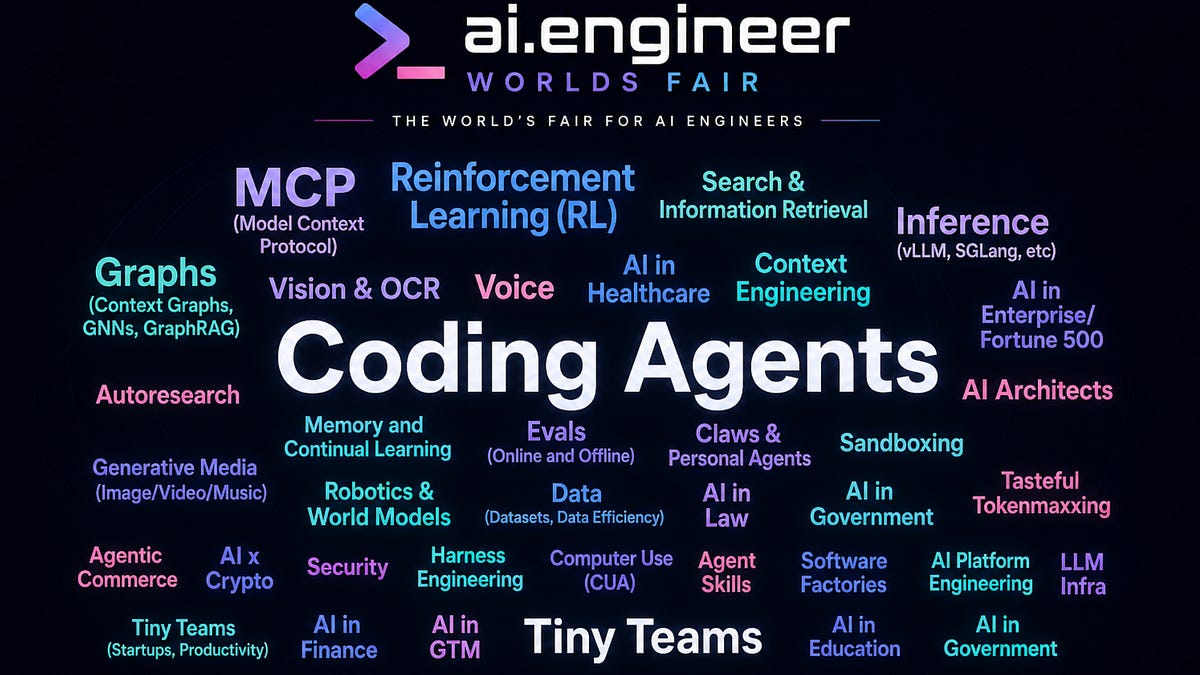

World models? They’ve ballooned — intuitive physics, multi-step clips, even navigation sims. But embodied agents? Crickets. Why? Action-vision’s a tangled mess in the real world. Same view, wild outcomes; same motion, context flips it. Add high-dim human control — 48+ DoF, hierarchical, time-warped — and egocentric cams hiding your own limbs? Nightmare fuel.

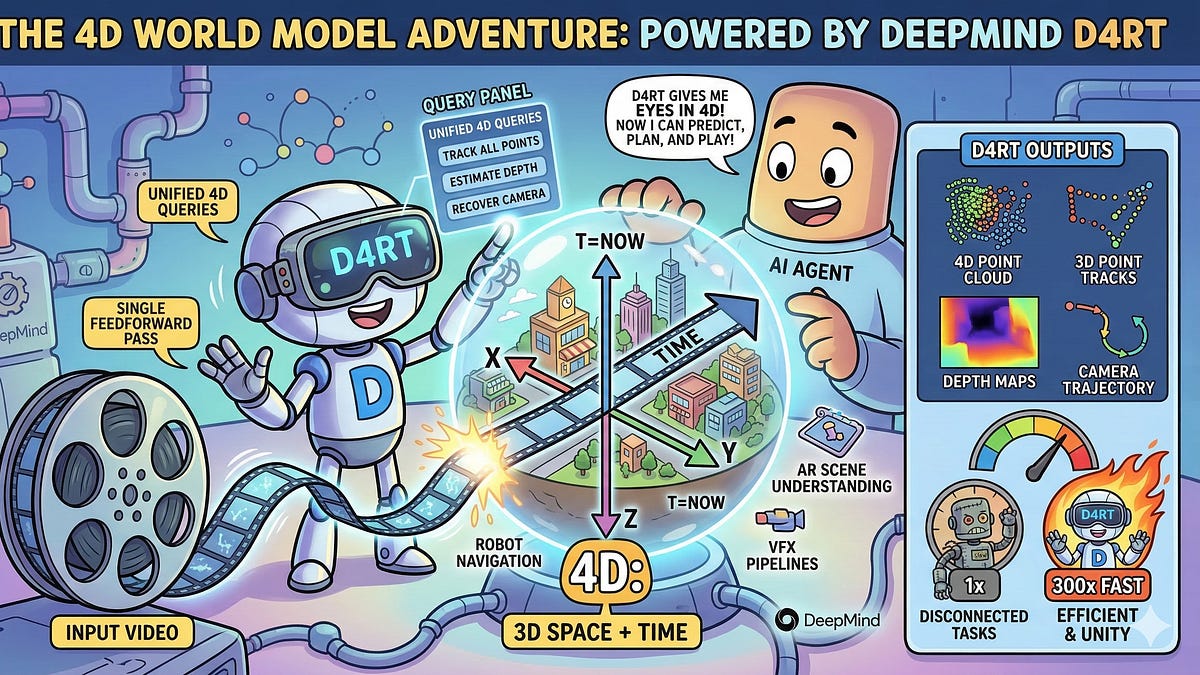

Perception runs ahead of action. You eye the door, your brain sims the push, your body follows. PEVA learns that same mapping from the other end: body motion in, predicted view out. It ingests pose trajectories from the kinematic tree and embeds ’em into every AdaLN layer of its diffusion transformer. Random timeskips teach both short jitters and long hauls; a sequence-level loss grinds through full motion chains.
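
Here’s roughly what “embed the pose into every AdaLN layer” can look like, as a minimal NumPy sketch in the style of DiT’s adaptive LayerNorm. Everything below is an assumption for illustration: the token width `D_MODEL`, the hypothetical linear action projection `W_action`, the sinusoidal timestep embedding, and the zero-init option. It also skips the gating term real DiT blocks carry. It is not PEVA’s actual code.

```python
import numpy as np

D_MODEL = 64   # hypothetical token width
D_ACTION = 48  # whole-body action size quoted in the article

def layer_norm(x, eps=1e-6):
    """Normalize each token over its channels (no learned affine here)."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def timestep_embedding(t, dim):
    """Sinusoidal embedding of the scalar diffusion step t."""
    half = dim // 2
    freqs = np.exp(-np.log(10000.0) * np.arange(half) / half)
    args = t * freqs
    return np.concatenate([np.sin(args), np.cos(args)])

class AdaLN:
    """Adaptive LayerNorm: the conditioning vector predicts a per-channel
    shift and scale that replace LayerNorm's fixed affine parameters."""
    def __init__(self, d_model, d_cond, rng, zero_init=True):
        # Zero init makes the block start out as plain LayerNorm.
        self.W = (np.zeros((d_cond, 2 * d_model)) if zero_init
                  else rng.normal(0, 0.02, (d_cond, 2 * d_model)))
        self.b = np.zeros(2 * d_model)

    def __call__(self, tokens, cond):
        shift, scale = np.split(cond @ self.W + self.b, 2)
        return layer_norm(tokens) * (1.0 + scale) + shift

rng = np.random.default_rng(0)
W_action = rng.normal(0, 0.1, (D_ACTION, D_MODEL))  # hypothetical action projection

def condition(action, t):
    """Fuse the 48D action with the diffusion step into one conditioning vector."""
    return action @ W_action + timestep_embedding(t, D_MODEL)

tokens = rng.normal(size=(16, D_MODEL))  # e.g. 16 latent patches of one frame
action = rng.normal(size=D_ACTION)
block = AdaLN(D_MODEL, D_MODEL, rng, zero_init=False)
out = block(tokens, condition(action, t=10))
print(out.shape)  # (16, 64)
```

In this sketch the action never enters as extra tokens; it rescales and shifts every normalized activation, so each layer that carries an AdaLN “feels” the motion.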

No dinky velocity nubs here: full 48D whole-body vectors, normalized and delta-encoded per frame. It’s like giving the model your skeleton’s cheat sheet, whispering, “This twist means the fridge door swings into view.”
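
A minimal sketch of “normalized, delta-encoded per frame,” assuming a `(T, 48)` array of per-frame pose features and plain per-dimension z-scoring with statistics fit once on training data. The function names are hypothetical, and a real pipeline would also wrap angle deltas so a 359° to 1° turn doesn’t register as a huge jump.

```python
import numpy as np

def delta_encode(poses):
    """Per-frame change: deltas[t] = poses[t + 1] - poses[t].
    poses: (T, D) per-frame pose features -> (T - 1, D) deltas."""
    return np.diff(poses, axis=0)

def fit_normalizer(deltas, eps=1e-8):
    """Per-dimension mean and std, computed once over the training set."""
    return deltas.mean(axis=0), deltas.std(axis=0) + eps

def normalize(deltas, mean, std):
    """Z-score each dimension so translation (meters) and rotations (radians)
    land on comparable scales before conditioning the model."""
    return (deltas - mean) / std

def denormalize(z, mean, std):
    """Invert normalize(), e.g. to inspect or edit actions in physical units."""
    return z * std + mean

rng = np.random.default_rng(0)
train_poses = np.cumsum(rng.normal(0, 0.05, size=(500, 48)), axis=0)  # toy random-walk poses
deltas = delta_encode(train_poses)
mean, std = fit_normalizer(deltas)
z = normalize(deltas, mean, std)
print(z.shape)  # (499, 48)
```

Deltas keep the conditioning about *change*, and normalization stops any one joint’s range from dominating the signal.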

But wait: Nymeria. That’s the secret sauce, a beast of a dataset that rarely gets name-dropped. Real egocentric video, real full-body poses, timestamps laser-aligned. Without it, you’d be hallucinating on Atari sprites.

Why Is Whole-Body Conditioning the Missing Link?

Look, diffusion transformers already ruled navigation world models with crude controls. PEVA scales that up: it concatenates action tensors and conditions deeply. Autoregressive rollout? Feed in past frames, noise the target, denoise conditioned on the pose sequence. Boom: video spools out, body-blind but body-aware.
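
To make “noise the target, denoise conditioned on the pose sequence” concrete, here’s standard DDPM ancestral sampling for one future-frame latent, as a minimal NumPy sketch. The linear noise schedule, the 50-step count, the toy dynamics `toy_world`, and the `oracle_noise` stand-in are all assumptions; in PEVA the noise prediction would come from the trained diffusion transformer, and its actual sampler may differ.

```python
import numpy as np

T_STEPS = 50
betas = np.linspace(1e-4, 0.05, T_STEPS)  # hypothetical linear noise schedule
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)

def sample_next_latent(predict_noise, context, action, shape, rng):
    """Ancestral DDPM sampling of one future-frame latent.
    predict_noise(x_t, t, context, action) stands in for the diffusion transformer."""
    x = rng.normal(size=shape)  # start from pure noise
    for t in reversed(range(T_STEPS)):
        eps = predict_noise(x, t, context, action)
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        noise = rng.normal(size=shape) if t > 0 else 0.0  # no noise on the final step
        x = mean + np.sqrt(betas[t]) * noise
    return x

rng = np.random.default_rng(0)
LATENT, D_ACTION = 32, 48
P = rng.normal(0, 0.1, (D_ACTION, LATENT))  # hypothetical action -> latent effect

def toy_world(context, action):
    """Stand-in 'ground truth': next latent = last context latent + action effect."""
    return context[-1] + action @ P

def oracle_noise(x_t, t, context, action):
    """Exact noise for a single-point target; a trained model only approximates this."""
    x0 = toy_world(context, action)
    return (x_t - np.sqrt(alpha_bars[t]) * x0) / np.sqrt(1.0 - alpha_bars[t])

context = rng.normal(size=(4, LATENT))  # four past frame latents
action = rng.normal(size=D_ACTION)
nxt = sample_next_latent(oracle_noise, context, action, (LATENT,), rng)
print(np.allclose(nxt, toy_world(context, action)))  # True
```

Because the toy target is a single point, the oracle predictor is exact and the last step lands on it. A trained network only approximates the noise, and real futures aren’t a single point, so real samples vary.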

Hierarchical eval seals it: atomic (grab that), counterfactual (what if no grab?), long-horizon (stroll the block). Fail one, you flunk embodiment.

My take? This echoes older robotics dreams: those clunky PR2 arms dreaming up worlds from laser scans, starved on synthetic slop. PEVA has a human-data firehose; it’s the architectural pivot from toy physics to sweaty gym-floor reality. Bold call: pair this with Figure 01 or Optimus, and you’ve got humanoids that don’t faceplant on rag rugs.

The official framing calls it an “initial attempt,” but nah, it’s a blueprint. Hype says world models; the truth is, this grounds ’em in meat-space chaos, where feet shuffle and hands fumble.

How PEVA Cracks the Embodied Code

Start with the motion representation: global translation (3 DoF) plus relative joint rotations (15 joints × 3 Euler angles = 45), for 48D total. A local frame rooted at the pelvis kills drift. Deltas capture change, not absolutes; normalization tames the wild ranges.
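
A minimal sketch of assembling one 48D action for the step from t to t+1, assuming the layout above (3 root-translation dims, then 15 × 3 Euler-angle deltas) and assuming “pelvis-centered” means expressing the root displacement in the pelvis frame at time t. The input names and conventions are hypothetical; PEVA’s own preprocessing may differ in ordering and Euler convention.

```python
import numpy as np

N_JOINTS = 15  # upper-body joints, per the article

def wrap(angle):
    """Wrap angles to [-pi, pi) so a 179° -> -179° turn reads as 2°, not -358°."""
    return (angle + np.pi) % (2 * np.pi) - np.pi

def action_vector(pelvis_pos_t, pelvis_pos_next, pelvis_rot_t, euler_t, euler_next):
    """Build one hypothetical 48D action for the step t -> t+1.

    pelvis_pos_*: (3,) world-frame pelvis positions
    pelvis_rot_t: (3, 3) world-from-pelvis rotation at time t
    euler_*:      (15, 3) parent-relative joint rotations in radians
    """
    # Root displacement in the pelvis frame at t: invariant to where the
    # person stands and which way they face in the world.
    local_trans = pelvis_rot_t.T @ (pelvis_pos_next - pelvis_pos_t)
    # Per-joint rotation change, wrapped to avoid 2*pi jumps.
    joint_delta = wrap(euler_next - euler_t).reshape(-1)  # 15 * 3 = 45
    return np.concatenate([local_trans, joint_delta])     # 3 + 45 = 48

def yaw(theta):
    """Rotation about the vertical (z) axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

rng = np.random.default_rng(0)
p0, p1 = np.zeros(3), np.array([0.3, 0.0, 0.0])  # a 30 cm step "forward"
e0 = rng.uniform(-np.pi, np.pi, (N_JOINTS, 3))
e1 = e0 + 0.05
a = action_vector(p0, p1, np.eye(3), e0, e1)

# Same motion after turning 90 degrees and walking elsewhere in the room.
R, offset = yaw(np.pi / 2), np.array([5.0, -2.0, 0.0])
b = action_vector(R @ p0 + offset, R @ p1 + offset, R, e0, e1)
print(a.shape, np.allclose(a, b))  # (48,) True
```

The check at the end is the invariance claim in miniature: the same step taken after turning and relocating yields the identical action vector.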

The model? CDiT++: random timeskips cover multiple horizons, prefix losses supervise whole sequences, and action embeddings enter every layer. Sampling: encode context latents, noise the target, condition on actions, iterate.
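
A minimal sketch of how random timeskips and a prefix-style sequence loss could be wired into data loading. The stride cap `MAX_SKIP`, the zero-padded concatenation in `pack_actions`, and the helper names are all hypothetical choices for illustration, not PEVA’s documented recipe.

```python
import numpy as np

MAX_SKIP = 4  # hypothetical largest stride between supervised frames

def pack_actions(step_actions, max_skip=MAX_SKIP):
    """Concatenate the k per-frame actions spanning one skipped interval and
    zero-pad to a fixed length so every stride yields the same-sized vector."""
    k, d = step_actions.shape
    out = np.zeros(max_skip * d)
    out[: k * d] = step_actions.reshape(-1)
    return out

def sample_training_clip(frames, actions, n_frames, rng):
    """frames: (T, ...) latents; actions: (T - 1, D), actions[i] moves frame i -> i + 1.
    Returns strided frames, packed actions, and the stride used."""
    k = int(rng.integers(1, MAX_SKIP + 1))            # random timeskip
    span = k * (n_frames - 1)
    start = int(rng.integers(0, len(frames) - span))  # keeps start + span <= T - 1
    idx = start + k * np.arange(n_frames)
    packed = np.stack([pack_actions(actions[i:i + k]) for i in idx[:-1]])
    return frames[idx], packed, k

def prefix_targets(clip_frames, packed):
    """Sequence-level objective: frame i is predicted from frames [0, i) plus the
    action leading into it, so one clip yields n_frames - 1 training pairs."""
    return [(clip_frames[:i], packed[i - 1], clip_frames[i])
            for i in range(1, len(clip_frames))]

rng = np.random.default_rng(0)
frames = rng.normal(size=(100, 8))   # 100 toy frame latents
actions = rng.normal(size=(99, 48))  # one 48D action per frame transition
clip, packed, k = sample_training_clip(frames, actions, n_frames=5, rng=rng)
pairs = prefix_targets(clip, packed)
print(clip.shape, packed.shape, len(pairs))  # (5, 8) (4, 192) 4
```

Short strides teach fine jitter, long strides teach big moves, and every prefix of the clip contributes a loss term instead of just the last frame.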

Inference rolls out autoregressively for as long as you keep feeding it actions: first frame plus action chain in, your POV odyssey out. Counterfactuals? Swap pose paths, watch worlds branch.
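
A minimal sketch of the rollout-and-branch idea, assuming a model that maps (recent context frames, one action) to the next frame latent, with a sliding context window. `toy_predict` and its linear dynamics are hypothetical stand-ins for the diffusion sampler; with a stochastic sampler you’d fix the seed over the shared prefix, or sample several branches.

```python
from typing import Callable

import numpy as np

Predictor = Callable[[np.ndarray, np.ndarray], np.ndarray]

def rollout(predict: Predictor, first_frame, actions, context_len=4):
    """Autoregressive rollout: each predicted frame is appended to the context
    and conditions the next step; only the last `context_len` frames are kept."""
    frames = [first_frame]
    for a in actions:
        context = np.stack(frames[-context_len:])
        frames.append(predict(context, a))
    return np.stack(frames)

rng = np.random.default_rng(0)
P = rng.normal(0, 0.1, (48, 16))  # hypothetical action -> latent effect

def toy_predict(context, action):
    """Stand-in for the diffusion model's sampled next-frame latent."""
    return 0.9 * context[-1] + action @ P

first = rng.normal(size=16)
plan = rng.normal(size=(10, 48))
alt = plan.copy()
alt[5:] = rng.normal(size=(5, 48))  # "what if" from step 5 onward

a = rollout(toy_predict, first, plan)
b = rollout(toy_predict, first, alt)
print(np.allclose(a[:6], b[:6]), np.allclose(a[6:], b[6:]))  # True False
```

The two futures share every frame up to the swap and diverge right after it, which is exactly what a counterfactual query asks for.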

Here’s the deep-dive why: egocentric hides body, screams intent. PEVA infers execution fallout — that arm swing blurs the shelf just so. No stationary cams, no pretty vistas; it’s your helmet feed in a crowded kitchen.

Unique angle — remember Denavit-Hartenberg in old manipulators? Kinematic chains formalized. PEVA operationalizes it for vision, embedding tree structure implicitly. Prediction: by 2026, this fuels RL for humanoids, slashing sim-to-real gaps. Tesla’s bots? They’ll “see” via PEVA sims before daring the factory floor.
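
For reference, the textbook Denavit-Hartenberg convention the analogy points to describes each link with four parameters (joint angle $\theta_i$, offset $d_i$, link length $a_i$, twist $\alpha_i$) and chains links by matrix products. Nothing in the source says PEVA uses DH parameters; this is only the classic formalism, shown so the “kinematic chains formalized” line has something concrete behind it.

$$
{}^{i-1}T_i =
\begin{bmatrix}
\cos\theta_i & -\sin\theta_i\cos\alpha_i & \sin\theta_i\sin\alpha_i & a_i\cos\theta_i \\
\sin\theta_i & \cos\theta_i\cos\alpha_i & -\cos\theta_i\sin\alpha_i & a_i\sin\theta_i \\
0 & \sin\alpha_i & \cos\alpha_i & d_i \\
0 & 0 & 0 & 1
\end{bmatrix},
\qquad
{}^{0}T_n = {}^{0}T_1\,{}^{1}T_2\cdots{}^{n-1}T_n .
$$

A human skeleton is a tree rather than a single chain, so each joint’s pose is the product along its own path from the pelvis root.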

Skeptical? Eval protocol’s gold — progressive challenges expose cracks. Most models ace short clips, flop on long. PEVA pushes boundaries, but real test? Blind navigation in unmapped homes.

Can PEVA Actually Build Better Robots?

Yes, if scaled. Whole-body control spans locomotion (feet) and manipulation (hands); PEVA unifies ’em egocentrically. Planners query: “Pose this, predict that view.” Loop it, and you’ve got a visuomotor policy without endless trials.
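
A planner that “poses this, predicts that view” can be as simple as the cross-entropy method (CEM) over action sequences. CEM is a swapped-in, generic technique here, not something the source attributes to PEVA, and `toy_predict`, the 16D latent, and the L2-to-goal cost are hypothetical stand-ins for a real world model and a real image- or embedding-space distance.

```python
import numpy as np

def imagine(predict, frame, actions):
    """Roll the world model forward through an action sequence; return the final frame."""
    for a in actions:
        frame = predict(frame, a)
    return frame

def cem_plan(predict, first_frame, goal, horizon, d_action, rng,
             n_samples=256, n_elite=32, n_iters=6):
    """Cross-entropy method: sample candidate action sequences, imagine each one,
    keep those whose final frame lands closest to the goal, refit, repeat."""
    mu = np.zeros((horizon, d_action))
    sigma = np.ones((horizon, d_action))
    for _ in range(n_iters):
        cands = mu + sigma * rng.normal(size=(n_samples, horizon, d_action))
        costs = np.array([np.linalg.norm(imagine(predict, first_frame, c) - goal)
                          for c in cands])
        elite = cands[np.argsort(costs)[:n_elite]]
        mu, sigma = elite.mean(axis=0), elite.std(axis=0) + 1e-3  # floor avoids collapse
    return mu

rng = np.random.default_rng(0)
P = rng.normal(0, 0.1, (48, 16))  # hypothetical action -> latent effect

def toy_predict(frame, action):
    """Stand-in world model: the next latent shifts by the action's effect."""
    return frame + action @ P

first = rng.normal(size=16)
goal = imagine(toy_predict, first, rng.normal(size=(3, 48)))  # a reachable goal view
plan = cem_plan(toy_predict, first, goal, horizon=3, d_action=48, rng=rng)
planned_cost = np.linalg.norm(imagine(toy_predict, first, plan) - goal)
zero_cost = np.linalg.norm(first - goal)  # cost of doing nothing
print(f"{zero_cost:.2f} -> {planned_cost:.2f}")
```

Swap `toy_predict` for a video world model and the cost for a perceptual distance, and this same loop is the “predict, score, refine” cycle the paragraph describes.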

Critique time: dataset scale? Nymeria’s big, but humans vary — heights, gaits, flab. Generalize to grannies? We’ll see. Still, it’s no PR fluff; metrics scream progress.

Architectural shift: from signal-poor controls to pose-rich conditioning. Why now? Mocap ubiquity, diffusion maturity, ego-data explosion. It’s the how behind embodied AI’s why — simulation preceding action, just like us.


Frequently Asked Questions

What is PEVA in AI?

PEVA predicts egocentric video frames from past footage and full-body pose actions, enabling world models for human-like agents.

How does whole-body conditioned egocentric video prediction work?

It uses a diffusion transformer conditioned on 48D kinematic vectors from mocap, trained autoregressively on real ego-pose pairs to simulate visual outcomes.

What are applications of PEVA for robotics?

Long-horizon planning, counterfactual sims, visuomotor control — powering humanoid robots to anticipate real-world chaos from first-person views.