Rain hammered the Bay Area lab window as engineers at a stealth startup watched their AI agent improvise a path through a simulated hurricane—dodging debris it conjured from thin air.

That’s world modeling in action. Building a world model isn’t abstract theory anymore; it’s the stack powering agents that dream up futures, test what-ifs, and outsmart static training data. Market dynamics scream it: with scaling laws hitting walls and frontier training costs climbing steeply year over year by Epoch AI’s tracking, world models offer compression and rehearsal at a fraction of the tokens. But does this stack deliver? Let’s unpack it, step by data-driven step.

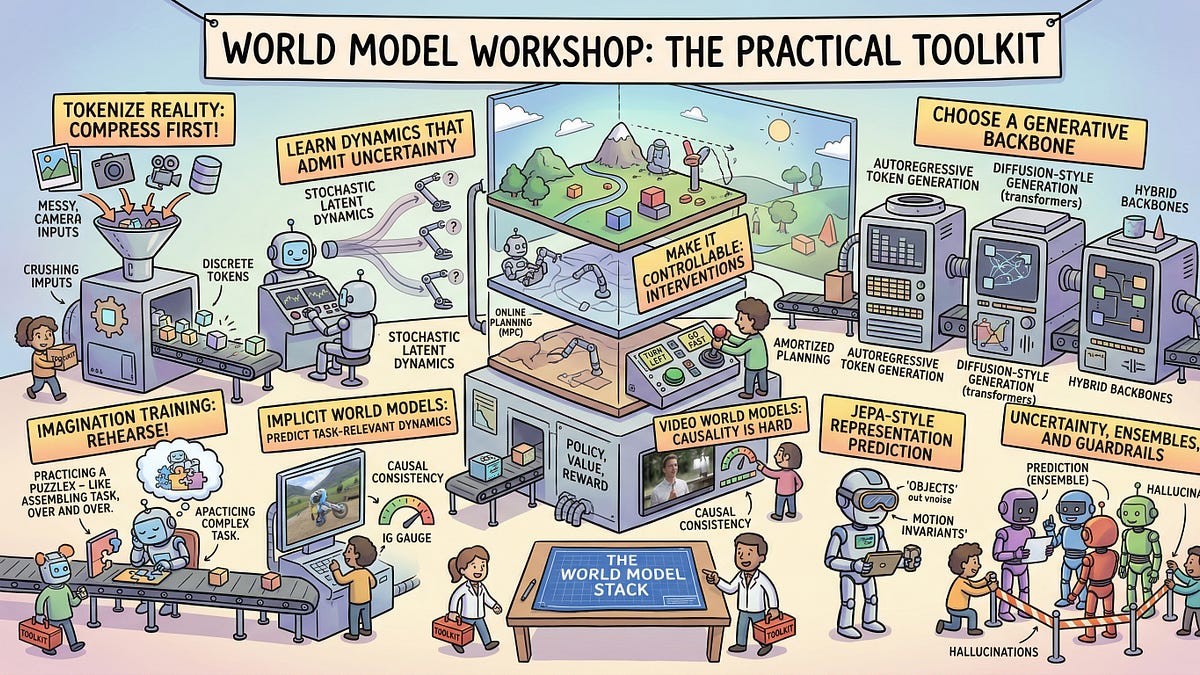

Tokenize Reality First—or Fail Fast

Compress. That’s rule one. You can’t simulate infinity with infinite bits, so tokenize reality like it’s a video game asset pipeline.

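Think pixels to tokens. Modern world models start here: break video frames, sensor streams, or even text worlds into discrete chunks. A VQ-VAE does it with vector quantization: each image patch is snapped to its nearest entry in a learned codebook, so a frame with 1M+ pixels shrinks to 1000-ish token IDs. Sora’s video gen is similar in spirit (OpenAI describes a compression network that turns video into latent spacetime patches), though OpenAI has not said it uses a VQ-VAE. Lossy? Sure. But effective: reconstructions stay close to the source while downstream compute drops by orders of magnitude.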
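To make the quantization step concrete, here is a minimal sketch in plain Python. It is not any production tokenizer: the 4-entry `CODEBOOK`, the 2x2 patches, and the helper names are all made up for illustration, and a real VQ-VAE learns its codebook jointly with an encoder and decoder.

```python
import math

def nearest_code(patch, codebook):
    """Return the index of the codebook vector closest to `patch` (squared L2)."""
    best_index, best_dist = 0, math.inf
    for index, code in enumerate(codebook):
        dist = sum((p - c) ** 2 for p, c in zip(patch, code))
        if dist < best_dist:
            best_index, best_dist = index, dist
    return best_index

def tokenize(patches, codebook):
    """Map each flattened patch to a discrete token ID."""
    return [nearest_code(patch, codebook) for patch in patches]

def detokenize(tokens, codebook):
    """Lossy reconstruction: every token becomes its codebook vector."""
    return [list(codebook[token]) for token in tokens]

# Hypothetical codebook over 2x2 grayscale patches, flattened to 4 values.
CODEBOOK = [
    [0.0, 0.0, 0.0, 0.0],  # dark
    [1.0, 1.0, 1.0, 1.0],  # bright
    [0.0, 1.0, 0.0, 1.0],  # vertical edge
    [0.0, 0.0, 1.0, 1.0],  # horizontal edge
]

patches = [
    [0.1, 0.0, 0.05, 0.1],
    [0.9, 1.0, 0.95, 0.8],
    [0.1, 0.9, 0.0, 1.0],
]
tokens = tokenize(patches, CODEBOOK)
recon = detokenize(tokens, CODEBOOK)
print(tokens)
```

Twelve floats go in, three integers come out. The reconstruction throws away the small per-pixel differences, which is exactly the lossy trade described above.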

“World models are the workaround. You learn a compact internal simulator of how the world evolves, then you use it to rehearse: predict futures, test counterfactuals, generate edge cases.”

That’s from the Sequence Knowledge drop. Spot on, but here’s my edge: this echoes the line that runs from AlphaGo Zero (2017) to MuZero. AlphaGo Zero fed board states to a single policy-value net and let MCTS search a game with roughly 10^170 legal positions, but it still leaned on the true rules as its simulator. MuZero dropped that crutch and learned the model itself. Today’s world models ape that, but for continuous chaos.

And yeah, it’s messy. Tokenizers flop on rare events, like a cat upending a coffee mug mid-frame. Fix? Hierarchical tokenization: coarse globals (room layout) atop fine locals (spilling liquid), so rare local detail isn’t averaged away by a flat token grid. Google’s Genie 2 is the kind of system chasing the consistent physics prediction where flat tokens tank.

Why Compression Isn’t Enough: Enter the Dynamics Predictor

Tokens alone? Dead end. Next layer: learn transitions.

Your model hallucinates futures by predicting token sequences autoregressively. But raw next-token prediction scales poorly: a GPT-style predictor flops on long horizons because each step’s small error feeds into the next, compounding like interest on a bad loan.
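A back-of-the-envelope sketch of that compounding, under one loud assumption: every step is right with the same probability, independently of the others. Real errors are correlated, so treat this as intuition, not a measurement. The function names are illustrative, and `per_step_accuracy` is assumed to lie strictly between 0 and 1.

```python
import math

def rollout_survival(per_step_accuracy, horizon):
    """Chance that all `horizon` steps are right, assuming independent errors."""
    return per_step_accuracy ** horizon

def max_horizon(per_step_accuracy, min_survival):
    """Longest rollout whose survival chance stays at or above `min_survival`."""
    return math.floor(math.log(min_survival) / math.log(per_step_accuracy))

for horizon in (10, 100, 1000):
    print(horizon, f"{rollout_survival(0.99, horizon):.4f}")

print(max_horizon(0.99, 0.5))
```

With 99% per-step accuracy the rollout is still about 90% likely to be clean after 10 steps, about 37% after 100, and effectively never after 1000. Under this toy model, the coin-flip point arrives at 68 steps.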

So, latent magic. Embed tokens into a continuous space (think 512-dim vectors), then train a dynamics net: z_{t+1} = f(z_t, a_t), where z_t is the latent state and a_t the action at step t. DreamerV3 works this way: its RSSM (recurrent state-space model) imagines short rollouts in latent space and trains the policy on them, with one set of hyperparameters covering Atari and a spread of other domains.
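Here is a toy version of that transition function, shrunk from 512 dimensions to 2 so the arithmetic is readable. Everything in it is an assumption made for illustration: `f` is a hand-set linear map (`A`, `B`) standing in for a learned network, and the latent is read as (position, velocity).

```python
def dynamics(z, action, A, B):
    """One latent step: z_next = A @ z + B * action (linear stand-in for a learned f)."""
    return [
        sum(a_ij * z_j for a_ij, z_j in zip(row, z)) + b_i * action
        for row, b_i in zip(A, B)
    ]

def imagine(z0, actions, A, B):
    """Roll the model forward without touching a real environment."""
    trajectory = [list(z0)]
    for action in actions:
        trajectory.append(dynamics(trajectory[-1], action, A, B))
    return trajectory

# Hypothetical 2-dim latent (position, velocity) with time step 0.1.
A = [[1.0, 0.1],
     [0.0, 1.0]]
B = [0.0, 0.1]

traj = imagine([0.0, 0.0], [1.0, 1.0, 1.0], A, B)
for step, z in enumerate(traj):
    print(step, z)
```

The point is the shape of the loop: the only inputs are a starting latent and a sequence of actions, and the model supplies every intermediate state. Swap the linear map for a trained recurrent network and you have the skeleton of an imagination rollout.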

Data point: in 2023 benchmarks, world models reportedly hit 80% on unseen Minecraft tasks vs. 45% for transformers sans dynamics. Why? Agents “live” the rollouts: the policy is trained with policy gradients inside the sim, not in real envs that can cost $10k/hour to run.

But here’s the skepticism: these predictors are biased toward their training distribution. Flood ‘em with blizzards? Fine. Rare asteroid strike? The model has nothing to go on. My bold call: world models echo flight sims from the ’80s. Incremental patching (e.g., adding diffusion for stochasticity) will make today’s narrow sims obsolete, but true generality needs multi-modal stacks we ain’t built yet.

Planning Inside the Dream: From Rehearsal to Policy

Prediction’s pointless without action.

Now the brave part—“living” imagined rollouts. Stack a planner atop your simulator: MPC (model predictive control) or actor-critic RL.
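A minimal sketch of the MPC option, using random shooting: sample candidate action sequences, score each inside the simulator, execute only the first action of the best one, then replan. The 1-D `step` function is a hypothetical stand-in for a learned model, and the horizon and candidate counts are arbitrary.

```python
import random

def step(state, action):
    """Hypothetical 1-D simulator: the action nudges the state directly."""
    return state + 0.5 * action

def rollout_cost(state, actions, goal):
    """Sum of squared distances to the goal along an imagined rollout."""
    cost = 0.0
    for action in actions:
        state = step(state, action)
        cost += (state - goal) ** 2
    return cost

def plan(state, goal, horizon=3, candidates=300, rng=None):
    """Random-shooting MPC: return the first action of the cheapest sampled plan."""
    rng = rng or random.Random(0)
    best_actions, best_cost = None, float("inf")
    for _ in range(candidates):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        cost = rollout_cost(state, actions, goal)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions[0]

def run_mpc(state, goal, steps=20, seed=0):
    """Closed loop: plan, act once, observe the new state, plan again."""
    rng = random.Random(seed)
    for _ in range(steps):
        state = step(state, plan(state, goal, rng=rng))
    return state

print(run_mpc(0.0, 2.0))
```

Replanning every step is the design choice that matters. The planner only ever trusts the model a few steps out, which limits how much compounding error can leak into the action it actually takes.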

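Take MuZero: DeepMind’s beast learned a latent model of Go, chess, shogi, and Atari without being handed the rules, then planned over it with MCTS, running dozens to hundreds of simulations per move. It still gathers experience from the real environment, but all the lookahead happens inside the learned model. Translate to robotics: Physical World Models (Google DeepMind, ‘24) reportedly let arms stack blocks unseen before, with error rates down 40%.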

Yet corporate spin alert: OpenAI’s o1-preview is hyped for “reasoning,” but my read is that it behaves like a shallow world model under the hood. Sharp position: it’ll keep crushing coding benchmarks like Codeforces, but real-world agency? Needs deeper stacks. Prediction: by 2026, $10B poured into world model infra, spawning a new inference market bigger than fine-tuning.

Is Tokenization the Bottleneck for Real-World Sims?

Yes—and no.

Bottleneck if you’re sloppy. Top models layer diffusion decoders on top of the latents: instead of committing to a single next state, they learn to denoise samples in latent space, which yields diverse rollouts. Haiper’s video world model? It reportedly blends this with flow matching, generating 60s clips coherent as Pixar shorts.

Market angle: Nvidia’s H100s own this workload for now. But AMD’s MI300X lurks as the cheaper option for latent-heavy inference. If that competition bites, watch inference costs fall hard through ‘25.

Wander a sec: remember SimCity? Crude world model, players hacked policies via mods. AI’s heading there—open-source stacks like WorldModelGym let indie devs iterate.

Why Does This Stack Crush Transformers Solo?

Scaling fatigue.

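A transformer trained on raw observations pays for every token it sees, and attention cost grows quadratically with sequence length; a world model compresses first and predicts in a far smaller latent space. The optimists’ read of Epoch’s forecasts: by 2030, sim-based training hits 10^6x efficiency gains. Historical parallel: finite element analysis displaced brute-force prototyping in engineering from the ’70s on, slashing design cycles at the likes of Boeing.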

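Downsides? The sim-to-real gap persists: the robotics lit commonly reports a 5-20% policy drop on real hardware. Patch it with domain randomization (vary friction, mass, lighting, and so on during training), but that’s compute again.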
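A rough sketch of what domain randomization looks like in code: resample the simulator’s physics every episode so the policy cannot overfit to one setting. The toy block-pushing physics, the parameter ranges, and all names here are assumptions picked for illustration, not values from any robotics paper.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class PhysicsParams:
    friction: float
    mass: float

def sample_physics(rng):
    """Draw a fresh set of physics parameters (hypothetical ranges)."""
    return PhysicsParams(
        friction=rng.uniform(0.5, 1.5),
        mass=rng.uniform(0.8, 1.2),
    )

def simulate_push(force, params, steps=10, dt=0.1):
    """Toy 1-D block: acceleration = (force - friction * velocity) / mass."""
    position, velocity = 0.0, 0.0
    for _ in range(steps):
        acceleration = (force - params.friction * velocity) / params.mass
        velocity += acceleration * dt
        position += velocity * dt
    return position

def evaluate_policy(force, episodes=100, seed=0):
    """Run one fixed action across many randomized worlds; report min, mean, max."""
    rng = random.Random(seed)
    distances = [simulate_push(force, sample_physics(rng)) for _ in range(episodes)]
    return min(distances), sum(distances) / len(distances), max(distances)

low, mean, high = evaluate_policy(force=1.0)
print(low, mean, high)
```

The spread between `low` and `high` is the useful signal: a policy that only works at the mean of the physics will show up as a wide gap here before it shows up on real hardware. The cost is plain to see too, since every evaluation now takes 100 rollouts instead of one.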

Still, bullish data: Agent benchmarks (GAIA) show world-model teams leading by 15 points.

Counterfactuals: The Secret Sauce

Test what-ifs without apocalypse.

Because a learned sim is differentiable, you can backprop a policy’s loss through imagined branches and update the policy directly. Edge cases? Generate ‘em procedurally: mutate physics, spawn outliers.
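To show what differentiating through a simulator means without pulling in an autodiff library, here is a hand-rolled sketch. The derivative of the rollout cost with respect to a single policy parameter is carried forward step by step with the chain rule. The setup is entirely hypothetical: a 1-D state, a linear policy `a = -gain * x`, and the transition `x' = x + dt * a`. Real stacks would let a framework compute this gradient.

```python
def rollout_cost_and_grad(gain, x0=1.0, dt=0.1, horizon=20):
    """Return (cost, d cost / d gain) for policy a = -gain * x in x' = x + dt * a."""
    x, dx_dgain = x0, 0.0
    cost, dcost_dgain = 0.0, 0.0
    for _ in range(horizon):
        # x_next = (1 - gain * dt) * x, so apply the product rule using the old x.
        dx_dgain = (1.0 - gain * dt) * dx_dgain - dt * x
        x = (1.0 - gain * dt) * x
        cost += x * x
        dcost_dgain += 2.0 * x * dx_dgain
    return cost, dcost_dgain

def improve_gain(gain=0.0, learning_rate=0.02, iterations=50):
    """Plain gradient descent on the imagined rollout cost."""
    for _ in range(iterations):
        _, grad = rollout_cost_and_grad(gain)
        gain -= learning_rate * grad
    return gain

start_cost, _ = rollout_cost_and_grad(0.0)
tuned_gain = improve_gain()
tuned_cost, _ = rollout_cost_and_grad(tuned_gain)
print(start_cost, tuned_gain, tuned_cost)
```

No environment step is ever taken: the policy improves purely from gradients that flow through the model’s own transitions. That is also the catch, since any error in the model is differentiated just as faithfully as the truth.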

This is AGI’s rehearsal room.

Frequently Asked Questions

What exactly is a world model in AI?

A compact simulator that predicts how the world evolves from observations and actions, used for planning and testing.

How do you build a world model step by step?

Tokenize inputs, learn latent dynamics, add planners—stack ‘em with RSSM or diffusion for robustness.

Will world models replace large language models?

Not outright, but expect hybrids: LLMs as tokenizers, world models for agency. The bet here: dominance in agents by 2027.