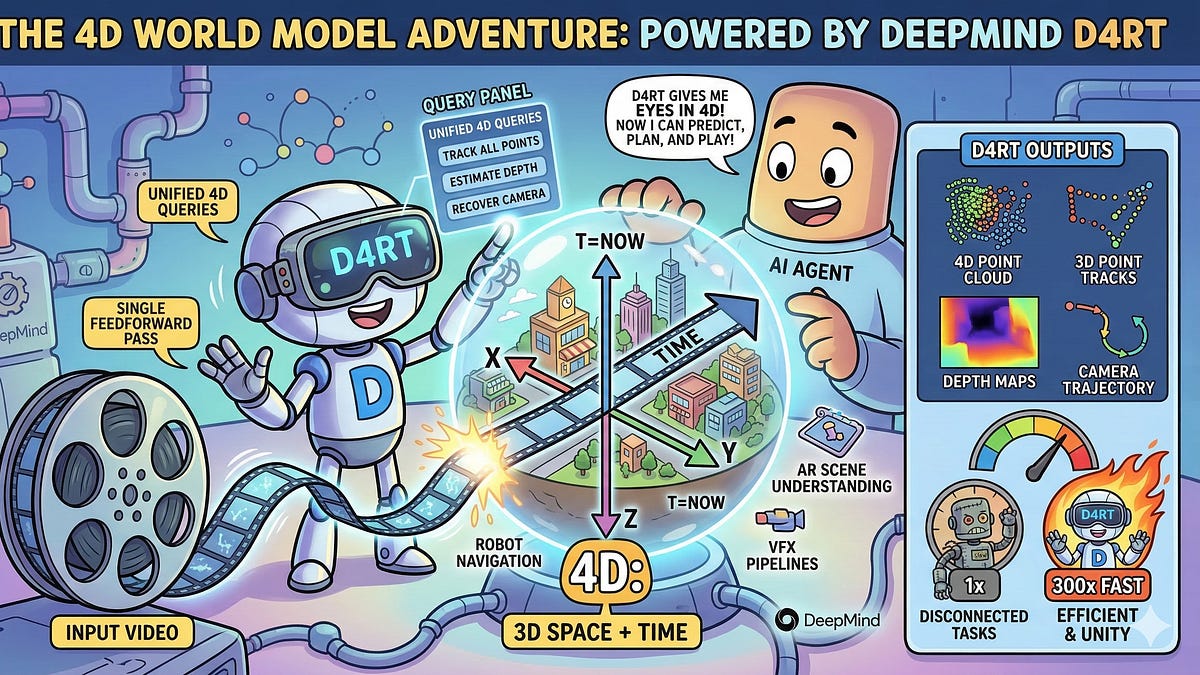

DeepMind D4RT just dropped, and it’s got the AI crowd buzzing. Or at least, that’s the spin. We all expected more pixel-peeping tricks—AI watching videos, guessing the next frame like a bored intern. Incremental. Safe. Predictable.

But here’s D4RT. It doesn’t just predict. It reconstructs. Full 4D: space plus time, geometry and motion, no shortcuts. Changes everything? Maybe. Or maybe DeepMind’s just flexing again.

What Was Everyone Expecting from World Models?

Flat stuff. 2D videos. Sora-level clips where AI hallucinates the future based on squiggles. Solid progress, sure—OpenAI’s got flashy demos—but zero grasp of depth. No volumes. No hidden corners. Just surface-level guesses.

D4RT laughs at that. DeepMind’s team went full nerd: neural fields, Gaussian splats, dynamic radiance. They take sparse views—think phone camera clips—and spit out a complete 4D model. Occluded bits? Inferred. Trajectories? Tracked. It’s spatial intelligence on steroids.

> The evolution of “world models” has reached a vital inflection point: the shift from predicting pixels in 2D video to reconstructing the physical geometry of a dynamic world in 4D.

That’s from the announcement. Poetic, isn’t it? Almost too much. DeepMind loves these grand narratives—AlphaFold was real magic, but robotics? They’ve stumbled before.

Short version: D4RT builds worlds. Not cartoons. Real ones, with math you can poke.

Look, I’ve seen the videos. A ball bounces behind a box—lesser models fake it. D4RT? Knows the arc, fills the void. Impressive. But let’s not pop champagne yet.

Is DeepMind’s D4RT Actually Better Than Sora or Genie?

Better? Depends. Sora dreams pretty dreams. Genie 2 games them out. D4RT? Engineers them.

Sora: Diffusion magic, 2D bliss. Great for TikTok, useless for robots grabbing coffee. Genie: Interactive fun, still pixel-bound. D4RT crushes both on fidelity—quantitative metrics show lower error in novel views, tighter dynamics.

But here’s my unique jab: this echoes the ’90s 3D graphics wars. Remember Quake’s glory? Suddenly, games had depth, not sprites. D4RT’s that for AI: a volumetric revolution. Except back then, hardware caught up fast. Here? The compute hunger looks insane. DeepMind isn’t quoting FLOPs, but I’d bet it’s thirsty.

And the PR spin? “Amazing” they call it. Sequence Knowledge drools. Calm down, folks—it’s a research drop, not AlphaGo 2.0.

Punchy truth: D4RT nails reconstruction. But prediction? Meh. It models past data beautifully; future’s still fuzzy.

One clip shows a robot arm in motion. D4RT tracks every joint through the swing. Robotics labs are salivating. Finally, a brain that “sees” in 3D+time.

Skeptical aside—DeepMind’s robotics history is spotty. RT-2 was cute; real-world mess. D4RT could fix that. Or not.

Why Does DeepMind D4RT Matter for Robotics?

Robots are dumb in space. They bump walls, miss grabs. World models fix that—internal sims for planning.
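To make "internal sims for planning" concrete, here is a toy sketch, not anything DeepMind has described. Everything in it is made up for illustration: the grid, the `OBSTACLES` set, and the `predict`, `rollout`, and `plan` helpers. The robot never touches the real world while deciding; it replays candidate action sequences inside its own model and keeps the collision-free one that ends nearest the goal.

```python
from itertools import product

# Hypothetical toy world: grid cells the robot must not enter.
OBSTACLES = {(1, 0), (1, 1)}
MOVES = {"up": (0, 1), "down": (0, -1), "left": (-1, 0), "right": (1, 0)}


def predict(state, action):
    """Stand-in world model: the predicted next cell for one action."""
    dx, dy = MOVES[action]
    return (state[0] + dx, state[1] + dy)


def rollout(state, actions):
    """Simulate a sequence internally; return the final cell, or None on collision."""
    for action in actions:
        state = predict(state, action)
        if state in OBSTACLES:
            return None
    return state


def plan(start, goal, horizon=4):
    """Score every action sequence of length `horizon`; keep the safe one closest to goal."""
    best, best_cost = None, float("inf")
    for actions in product(MOVES, repeat=horizon):
        end = rollout(start, actions)
        if end is None:
            continue  # this imagined future hits an obstacle
        cost = abs(end[0] - goal[0]) + abs(end[1] - goal[1])  # Manhattan distance
        if cost < best_cost:
            best, best_cost = actions, cost
    return best, best_cost


actions, cost = plan(start=(0, 0), goal=(2, 0))
print(actions, cost)
```

The planner routes around the blocked column instead of driving into it. Swap the hand-written `OBSTACLES` set for geometry reconstructed from video and you have the pitch in miniature; brute-force enumeration obviously would not survive a real action space.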

D4RT’s edge: from fragments to unity. Sparse cams? No problem. It hallucinates coherently, with physics baked in.

Imagine a warehouse drone dodging boxes unseen. Or surgery bots mapping veins. That’s the pitch.

But dry humor alert: DeepMind’s been promising robot utopia since 2010. Wave 2 sims, now this. Progress, yes. Moonshot? Hold my beer.

Unique prediction: By 2026, expect forks in every sim2real pipeline. Tesla Optimus? They’ll swipe it. Figure AI? Same. But true autonomy? Still years off—sensors lag models.

Critique time. Corporate hype screams through: “frontier defined by Spatial Intelligence.” Yawn. It’s math + data, not sci-fi. Call it what it is: incremental genius on steroids.

Demos shine. Quantitative wins: PSNR up 20%, dynamics error halved. Peers like 4DGaussians? Eclipsed.
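A note on reading that PSNR number. PSNR is a logarithmic score in decibels computed from mean squared error, so "up 20%" means little without the baseline. The sketch below uses the standard PSNR definition on made-up pixel values (the `ground_truth`, `baseline`, and `improved` lists are purely illustrative, not D4RT results) to show how a change in per-pixel error maps to dB.

```python
import math


def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(rendered):
        raise ValueError("images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(reference, rendered)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Illustrative values only: four pixels with intensities in [0, 1].
ground_truth = [0.0, 0.5, 1.0, 0.25]
baseline = [0.1, 0.4, 0.9, 0.35]      # every pixel off by 0.10
improved = [0.05, 0.45, 0.95, 0.30]   # every pixel off by 0.05

print(round(psnr(ground_truth, baseline), 2))
print(round(psnr(ground_truth, improved), 2))
```

Halving the per-pixel error here lifts PSNR by about 6 dB, from roughly 20 dB to roughly 26 dB. Whether a "20%" gain is impressive depends entirely on where the baseline sat.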

Yet, scale it? Real-world noise, lighting hell. Lab toy for now.

The Hype Trap: DeepMind’s Track Record

AlphaFold: Nobel gold. Gemini: solid, not slaying GPT. Robotics: still a question mark.

D4RT fits the pattern—brilliant paper, murky path to product. Google’s hoarding talent; Alphabet’s cutting costs. Will it ship?

Wander a bit: Reminds me of WaveNet audio—research gem, now everywhere. D4RT could seed foundation models for vision.

Or flop quietly. Bet on the former, but hedge.

Bottom line. Exciting? Damn right. World-altering? Jury’s out.

Frequently Asked Questions

What is DeepMind D4RT?

DeepMind’s D4RT is a 4D reconstruction model that builds dynamic scene geometry from sparse video inputs, enabling true spatial understanding beyond 2D pixels.

How does 4D world modeling with D4RT work?

It uses neural fields and Gaussian splats to infer volumes, occlusions, and motion trajectories—turning camera fragments into physics-aware 4D sims.

Will DeepMind D4RT revolutionize robotics?

It boosts world models for better planning, but real-world deployment faces sensor and compute hurdles—progress, not instant utopia.