Devs swarm the Moscone Center, fingers flying over keyboards, spawning chaotic multiplayer arenas where physics bends but never breaks. One guy’s rigging a tire-squealing rally sim; another’s tweaking alien planets with real-time causality. This isn’t some vaporware demo. It’s Moonlake AI in action—causal world models that bootstrap from game engines, delivering multimodal, interactive efficiency where others flail.

Zoom out. We’ve drowned in world model hype lately—Yi’s intro, World Labs’ Marble, Nvidia’s flexes, Google’s Genie 3, even Yann LeCun’s billion-dollar LeWorldModel swing. But glitches persist: floating solids, terrain clips, single-player silos capping at 60 seconds. Moonlake? Diametric opposite. Multiplayer from jump. Indefinite lifespans. Plans over horizons that’d choke diffusion models.

Why Game Engines Beat Blind Scaling

Here’s the thing—scale’s seduced everyone into pixel orgies, but Chris Manning and Fan-yun Sun aren’t buying it. Their paper, Towards Efficient World Models, guts the Bitter Lesson dogma: structure trumps raw compute.

> SOTA models still show physical or spatial understanding glitches, such as solid objects floating in mid-air or moving “inside” other solid objects.

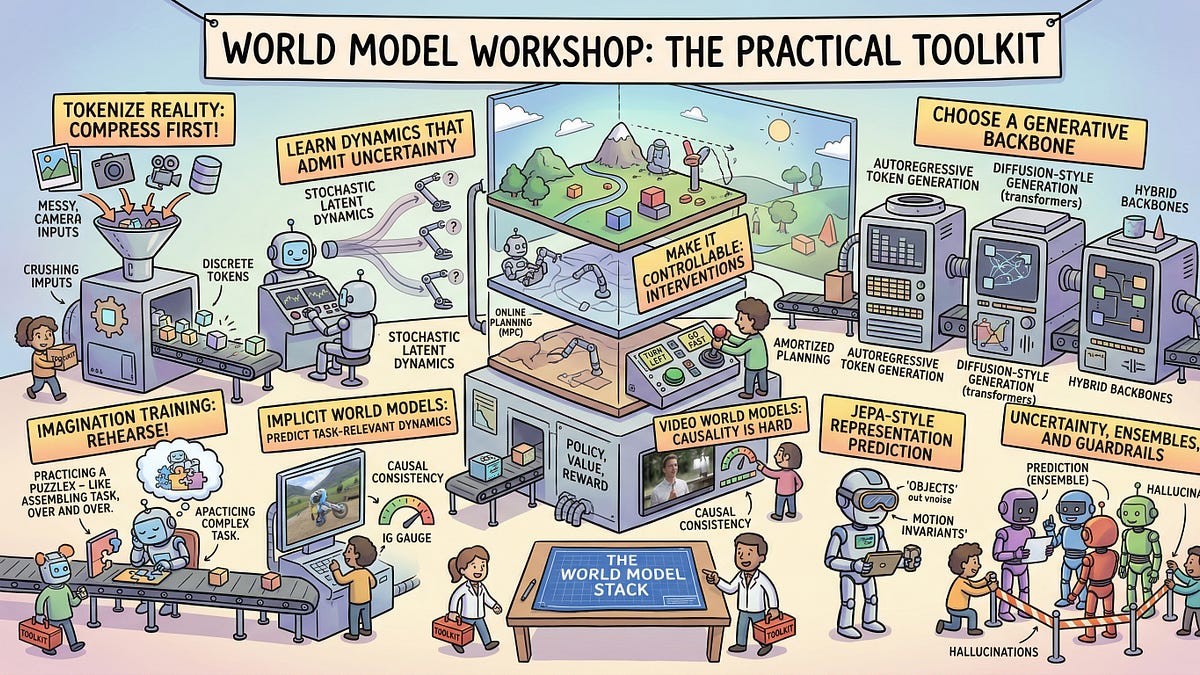

That quote? Straight fire. Humans don’t retina-scan every frame. We abstract, task-direct, lean on semantics. Why render hyper-res pixel soups for planning a corner? A squealing tire description suffices. Moonlake starts with game engines (think Unreal- and Unity-style abstractions) as causal scaffolds. Then it trains custom agents on action-to-observation flywheels: the agent acts, the engine says what happens next, and every step becomes training data. Boom: consistency over epochs, not epochs of inconsistency.
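To make the flywheel idea concrete, here is a minimal sketch, not Moonlake's code. Everything in it is an assumption for illustration: the `CarState` fields, the three actions, and the hand-written `engine_step` rule standing in for a real game engine. The point is only the shape of the loop: act, let the engine decide the outcome, log an abstract observation instead of a frame.

```python
from dataclasses import dataclass
import random


@dataclass(frozen=True)
class CarState:
    x: float      # position along the track, in metres (hypothetical toy state)
    speed: float  # metres per second


# Hypothetical action set: each action maps to an acceleration in m/s^2.
ACTIONS = {"throttle": 2.0, "coast": 0.0, "brake": -4.0}


def engine_step(state: CarState, action: str, dt: float = 0.1) -> CarState:
    """Hand-written rule standing in for the engine: it, not a learned model, decides outcomes."""
    speed = max(0.0, state.speed + ACTIONS[action] * dt)  # a car cannot go below zero speed here
    return CarState(x=state.x + speed * dt, speed=speed)


def observe(state: CarState) -> dict:
    """Abstract observation: a few semantic facts instead of a rendered frame."""
    return {"x": round(state.x, 3), "speed": round(state.speed, 3), "moving": state.speed > 0}


def collect(n_steps: int, seed: int = 0) -> list:
    """Roll a random policy through the engine and log (observation, action, next observation)."""
    rng = random.Random(seed)
    state = CarState(0.0, 0.0)
    data = []
    for _ in range(n_steps):
        action = rng.choice(sorted(ACTIONS))
        nxt = engine_step(state, action)
        data.append((observe(state), action, observe(nxt)))
        state = nxt
    return data
```

Calling `collect(50)` yields 50 transitions in which each step's "next" observation is exactly the following step's "current" one. That chain-level consistency comes for free because a rule, not a sampler, produces every outcome.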

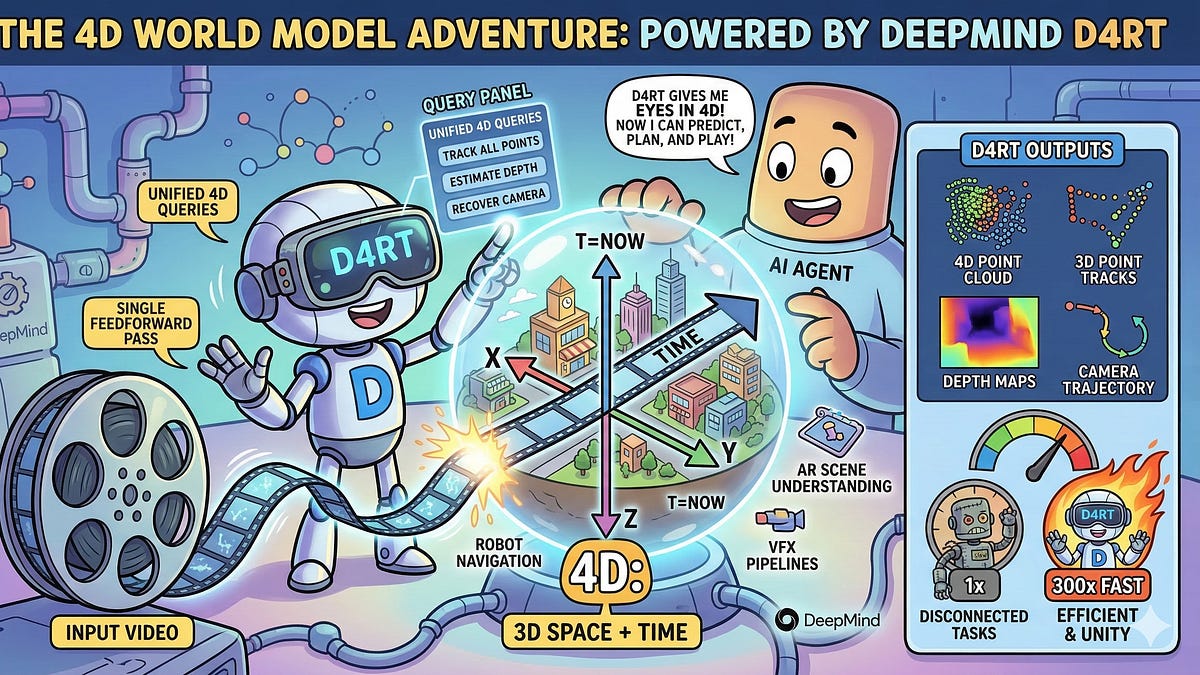

We caught their GDC sessions. Live vids showed worlds morphing under player tweaks—fiction rules hardcoded, no diffusion drift. One demo: tweak gravity mid-game, causality ripples flawlessly. No “learning priors” hallucinations.
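What does "tweak gravity mid-game" look like when the rules are hardcoded? A toy sketch, under loud assumptions: the `simulate` function, the `gravity_schedule` dictionary, and the falling-ball setup are all invented here and have nothing to do with the actual GDC demo. It just shows a rule change taking effect on the very next step, with the "solids stay solid" constraint enforced by code.

```python
def simulate(steps: int, gravity_schedule: dict, dt: float = 0.1, y0: float = 10.0) -> list:
    """Drop a ball from height y0; gravity_schedule maps step index -> new gravity (m/s^2, positive pulls down)."""
    y, vy, g = y0, 0.0, 9.8
    trace = []
    for i in range(steps):
        g = gravity_schedule.get(i, g)  # a player tweak applies from this step onward
        vy -= g * dt                    # gravity changes velocity...
        y = max(0.0, y + vy * dt)       # ...velocity changes height, clamped at the ground
        if y == 0.0:
            vy = 0.0                    # ground is solid: the ball stops instead of sinking through
        trace.append((round(y, 4), g))
    return trace
```

Run `simulate(30, {5: -9.8})` and the ball falls for five steps, slows, then rises once gravity flips. Run `simulate(100, {})` and it lands and stays put at height zero. No floating, no clipping, because the rule forbids it.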

But wait: efficiency’s the killer app. High-res visuals? Overkill for 90% of the value. Blind scaling hits walls, and generating every frame autoregressively bloats inference. Moonlake layers reasoning traces atop symbolic engines, rendering only what’s queried. The language-versus-JEPA debate? They sidestep it: coded engines enforce physics priors diffusion can’t dream up.
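"Rendering only what's queried" is easy to picture with a toy. The sketch below is an assumption-heavy illustration, not Moonlake's architecture: `SymbolicWorld`, its grid coordinates, and the ASCII `render` stand-in are all hypothetical. State lives as symbols, most questions get answered without pixels, and the expensive path runs only on request and only for a small window.

```python
from dataclasses import dataclass


@dataclass
class SymbolicWorld:
    objects: dict          # name -> (x, y) grid position (hypothetical toy state)
    render_calls: int = 0  # counts how many expensive renders were actually requested

    def move(self, name: str, dx: int, dy: int) -> None:
        """Update symbolic state only; nothing is drawn."""
        x, y = self.objects[name]
        self.objects[name] = (x + dx, y + dy)

    def query(self, name: str) -> tuple:
        """Cheap symbolic answer: no pixels involved."""
        return self.objects[name]

    def render(self, name: str, radius: int = 1) -> list:
        """Stand-in for the expensive path: draw only a small window around one object."""
        self.render_calls += 1
        cx, cy = self.objects[name]
        occupied = set(self.objects.values())
        return [
            "".join("#" if (x, y) in occupied else "." for x in range(cx - radius, cx + radius + 1))
            for y in range(cy - radius, cy + radius + 1)
        ]
```

A short usage example: build `SymbolicWorld({"cup": (0, 0), "box": (1, 0)})`, call `move("cup", -1, 0)` and `query("cup")` as often as you like, and `render_calls` stays at zero. Only an explicit `render("cup")` pays for a 3x3 window.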

Is Moonlake’s Approach the Antidote to Genie 3’s Flaws?

Genie 3 dazzles—until it doesn’t. Terrain clips. No interactivity beyond solo romps. Physics? Laughable. Moonlake founders roast it implicitly: true causality demands action-conditioned worlds. Predict outcomes, plan long-horizon. Their bet: abstraction wins.

Fan-yun Sun puts it sharp in the pod: humans process the visual world sparsely, in terms of objects. Why not AI? Boot from engines, layer multimodality: spatial audio latents, tactile proxies. It’s not anti-scale; it’s smart-scale. And multiplayer? That’s the secret sauce: community flywheels via $30K Creator Cups, with devs iterating worlds into dataset goldmines.

Skeptical? Fair. But GDC crowds weren’t. Flexibility stunned: sim cities to abstract puzzles, all causal-coherent.

My unique spin (and bear with the history nerd-out): Moonlake echoes id Software’s Quake engine in ’96. Back then, devs ditched sprite hacks for true 3D renderers with physics hooks. Result? Half-Life, built on a modified Quake engine, and rivals like Unreal Tournament on its own engine: worlds that felt alive. Moonlake’s doing that for AI: engines as priors, not afterthoughts. Bold prediction: by 2027, robotics firms poach this stack, ditching end-to-end black boxes for hybrid causal sims. Tesla’s FSD? Waymo’s bags? They’ll hybridize or lag.

Why Does This Matter for the Next AI Frontier?

Planning’s the holy grail—action selection in sparse reward hells. Moonlake nails it: simulate environments, forecast interventions. Beyond games? Physical robots grokking “push the cup left” via latents, not pixels. Virtual agents in enterprise sims—train sales bots on causal customer worlds.
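Here is what "simulate environments, forecast interventions" can look like at its absolute smallest. This is a sketch under stated assumptions, not a claim about Moonlake's planner: the one-dimensional table, the `MOVES` set, and the brute-force search in `plan` are all made up for illustration. The planner never sees a pixel. It rolls candidate action sequences through a symbolic transition rule and keeps the shortest one that reaches the goal.

```python
from itertools import product

# Hypothetical action set for a cup on a 1-D table.
MOVES = {"left": -1, "right": 1, "wait": 0}


def step(cup_x: int, action: str, table: tuple = (0, 4)) -> int:
    """Transition rule: the cup slides one cell but cannot leave the table."""
    lo, hi = table
    return min(hi, max(lo, cup_x + MOVES[action]))


def rollout(cup_x: int, actions) -> int:
    """Forecast where the cup ends up after a sequence of interventions."""
    for action in actions:
        cup_x = step(cup_x, action)
    return cup_x


def plan(cup_x: int, goal_x: int, horizon: int = 4):
    """Try every action sequence up to `horizon` long; return the shortest that reaches the goal, else None."""
    for length in range(horizon + 1):
        for candidate in product(sorted(MOVES), repeat=length):
            if rollout(cup_x, candidate) == goal_x:
                return list(candidate)
    return None
```

With the cup at cell 3 and the goal at cell 1, `plan(3, 1)` returns `['left', 'left']`. Exhaustive search blows up fast as horizons grow, so treat this as the idea in miniature; a real system would swap in a smarter search over the same kind of model.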

Corporate spin check: Nvidia/Tesla papers gloss over efficiency; Moonlake calls bullshit. Structure first. And the NLP roots? Manning’s Stanford cred, Sun’s vision: smart.

Pod gems abound. Timestamps tease: 03:12 on structure vs. scale; 37:00 gameplay over graphics. Full vid’s a must—raw, unfiltered.

Look, world models aren’t toys. They’re reality proxies for AGI-ish agency. Moonlake’s multiplayer pivot? Forces robustness—adversaries emerge naturally. No curated benches; real chaos benchmarks.

The Platform Play: Creators to Causality

$30K cups aren’t gimmicks. They’re data engines. Devs build, share, iterate—observations flood back, refining models. Flywheel spins: better sims, richer datasets, tighter causality.

Contrast LeCun’s AMI: objective-driven, but siloed. Moonlake’s open(ish)—tools for all. GDC proved it: worlds from rally sims to fiction realms, all tweakable.

One glitch-prone Genie clip versus Moonlake’s endless jam? No contest.

Frequently Asked Questions

What are Moonlake causal world models? Moonlake builds interactive, multimodal world models from game engines, prioritizing causality, efficiency, and long-horizon planning over high-res pixels.

How does Moonlake differ from Google Genie 3? Genie 3 is single-player, glitchy, 60-second max; Moonlake’s multiplayer, physics-faithful, indefinite—bootstrapped for real interactions.

Can Moonlake world models work beyond games? Absolutely—targeting robotics, planning, virtual agents via causal sims that predict action outcomes accurately.

This isn’t hype. It’s architecture shift. Watch game devs own AI’s future.