Picture this: a packed room at Strata Data Conference, 2023, where a Snowflake engineer upends a legacy ETL demo, loading raw terabytes into BigQuery and transforming them on the fly. The crowd goes wild.

ETL vs ELT. That’s the battle defining data engineering right now. Traditional ETL (extract, transform, load) has ruled since the 1970s, born in the era of rigid on-premises warehouses. But cloud platforms like Snowflake, Databricks, and BigQuery flipped the script with ELT (extract, load, transform): shove raw data into scalable storage first, then shape it later. Market data backs the shift: Gartner pegs ELT adoption at 65% among Fortune 500 data teams by 2024, up from 30% in 2020. Why? Cost, speed, flexibility.

ETL’s core pitch: Clean data before it hits the warehouse. Extract from databases, APIs, and files, with no changes yet. Then transform: filter junk, aggregate sales, conform records to the target schema. Finally, load into a target like Oracle or SQL Server. It’s meticulous. Reliable for compliance-heavy industries like finance.
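Here’s a minimal sketch of that extract, transform, load order. Everything in it is an assumption for illustration: the `SOURCE_ROWS` sample, the `sales_by_region` table, and an in-memory SQLite database standing in for a target like Oracle or SQL Server.

```python
import sqlite3

# Hypothetical source rows standing in for a database, API, or file extract.
SOURCE_ROWS = [
    {"order_id": 1, "region": "EU", "amount": "120.50"},
    {"order_id": 2, "region": "EU", "amount": "79.50"},
    {"order_id": 3, "region": "US", "amount": "200.00"},
    {"order_id": 4, "region": None, "amount": "15.00"},  # junk: no region
    {"order_id": 5, "region": "US", "amount": "oops"},   # junk: bad amount
]


def extract():
    """Extract: pull the source rows as-is, no changes yet."""
    return list(SOURCE_ROWS)


def transform(rows):
    """Transform: filter junk rows, then aggregate sales per region."""
    totals = {}
    for row in rows:
        if row["region"] is None:
            continue
        try:
            amount = float(row["amount"])
        except ValueError:
            continue
        totals[row["region"]] = totals.get(row["region"], 0.0) + amount
    # Sort so the rows reach the target in a deterministic order.
    return sorted(totals.items())


def load(conn, rows):
    """Load: only the cleaned, aggregated rows reach the target."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_by_region "
        "(region TEXT PRIMARY KEY, total REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO sales_by_region VALUES (?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for the real warehouse
load(conn, transform(extract()))
result = conn.execute(
    "SELECT region, total FROM sales_by_region ORDER BY region"
).fetchall()
```

Notice what the target never sees: the two junk rows. That’s the ETL trade in a nutshell. The warehouse stays clean, but the raw record of what actually arrived is gone unless you keep it somewhere else.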

But here’s the rub—staging areas balloon. Transformations hog CPU on mid-tier servers. And if schemas evolve? Rewrite the whole pipeline.

Why Does ETL Still Cling On in Enterprises?

Legacy lock-in. Banks with COBOL mainframes can’t just “cloud everything.” ETL tools like Informatica or Talend offer drag-and-drop for complex joins, perfect for regulated data.

Take this from the playbook:

> ETL (Extract, Transform, Load) is a data integration process that extracts raw data from a single or multiple sources, transforms this data into a usable form, then loads the clean data into a database where end-users can access it.

Spot on. It enforces governance upfront: data quality checks, audits. Full loads populate a warehouse the first time; incremental loads pick up only the deltas since the last run. Batch handles nightly reports; streaming via Spark handles fraud alerts.
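The full-versus-incremental split is easier to see in code. This is a rough sketch, assuming a hypothetical `orders` table with an `updated_at` column used as a high-water mark; real tools often rely on change data capture or log positions instead.

```python
import sqlite3


def make_target():
    """Create an in-memory stand-in for the warehouse table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
    )
    return conn


def full_load(target, source_rows):
    """Full load: wipe the target and reload everything."""
    target.execute("DELETE FROM orders")
    target.executemany("INSERT INTO orders VALUES (?, ?, ?)", source_rows)
    target.commit()
    return len(source_rows)


def incremental_load(target, source_rows):
    """Incremental load: upsert only rows newer than the target's watermark."""
    (watermark,) = target.execute(
        "SELECT COALESCE(MAX(updated_at), '') FROM orders"
    ).fetchone()
    # ISO dates compare correctly as strings. A strict '>' skips rows that
    # share the watermark timestamp, which a production job must handle.
    delta = [row for row in source_rows if row[2] > watermark]
    target.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", delta)
    target.commit()
    return len(delta)


target = make_target()
day_one = [(1, 10.0, "2024-01-01"), (2, 20.0, "2024-01-02")]
# On day two, order 2 was updated and order 3 is new.
day_two = [(1, 10.0, "2024-01-01"), (2, 25.0, "2024-01-03"), (3, 30.0, "2024-01-03")]

initial_count = full_load(target, day_one)
delta_count = incremental_load(target, day_two)
final_rows = target.execute(
    "SELECT order_id, amount, updated_at FROM orders ORDER BY order_id"
).fetchall()
```

The second run touches two rows instead of three. On a toy table that is nothing; on a billion-row fact table it is the difference between a nightly job that finishes and one that doesn’t.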

Still, costs mount. A mid-size firm running ETL on-prem? Expect $500K/year in hardware alone, per IDC. No wonder 40% of ETL users report pipeline bottlenecks.

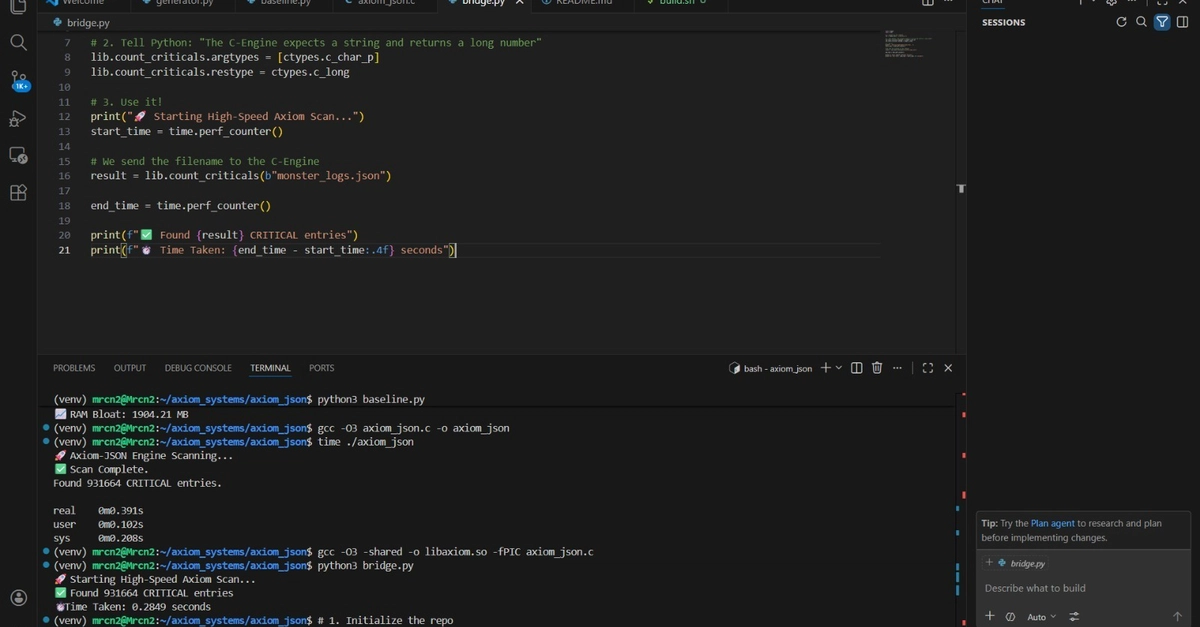

ELT flips it. Extract raw. Load to a data lake or lakehouse—think S3, Delta Lake. Transform inside, using SQL or dbt on warehouse horsepower.
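A minimal sketch of that flipped order, with in-memory SQLite standing in for the warehouse. The `raw_orders` and `sales_by_region` names and the sample rows are made up for illustration; in practice the SQL step would typically live in a dbt model or a warehouse job.

```python
import sqlite3

# Hypothetical raw extract: everything arrives as text, junk included.
RAW_ORDERS = [
    ("1", "EU", "120.50"),
    ("2", "EU", "79.50"),
    ("3", "US", "200.00"),
    ("4", None, "15.00"),  # missing region, loaded anyway
]

conn = sqlite3.connect(":memory:")  # stand-in for the cloud warehouse

# Load: land the raw rows untouched.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, region TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ORDERS)

# Transform: shape the data afterwards, in SQL, on the warehouse's own engine.
conn.execute(
    """
    CREATE TABLE sales_by_region AS
    SELECT region, SUM(CAST(amount AS REAL)) AS total
    FROM raw_orders
    WHERE region IS NOT NULL
    GROUP BY region
    """
)
conn.commit()

raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
modeled = conn.execute(
    "SELECT region, total FROM sales_by_region ORDER BY region"
).fetchall()
```

The raw table keeps all four rows, including the one the model filters out. Change your mind about the filter next quarter and you rerun a query, not a pipeline.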

Advantages scream cloud-native. Snowflake’s separation of storage/compute means you pay for queries, not idle transforms. BigQuery? Serverless scaling. Load petabytes, transform subsets on-demand.

Data lakes swallow JSON, logs, images, all without preprocessing. Tools like Fivetran or Airbyte handle ingestion; dbt or Matillion handle the transforms.
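What “no preprocessing” buys you is schema-on-read: the shape gets applied when someone queries, not when the data lands. A small sketch, assuming hypothetical JSON lines and a made-up `read_with_schema` helper:

```python
import json

# Hypothetical raw lines as they might sit in a lake: no shared schema.
RAW_LINES = [
    '{"user": "a", "amount": 10}',
    '{"user": "b", "amount": 5, "coupon": "SPRING"}',
    '{"user": "a", "amount": 7, "device": {"os": "ios"}}',
]


def read_with_schema(lines, fields):
    """Apply a schema at read time: keep only the requested fields,
    filling in the given default when a record lacks one."""
    for line in lines:
        record = json.loads(line)
        yield {name: record.get(name, default) for name, default in fields.items()}


# Reader 1 cares about spend per user.
spend = {}
for row in read_with_schema(RAW_LINES, {"user": None, "amount": 0}):
    spend[row["user"]] = spend.get(row["user"], 0) + row["amount"]

# Reader 2 cares about coupons, a field nobody planned for at load time.
coupons = [
    row["coupon"]
    for row in read_with_schema(RAW_LINES, {"coupon": None})
    if row["coupon"]
]
```

Two readers, two schemas, one copy of the data. Neither had to file a ticket to get a column added upstream.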

My take: ELT’s the iPhone moment for data pipelines. Remember mainframes to PCs? ETL’s the mainframe—powerful but centralized, expensive. ELT decentralizes, like apps on smartphones.

ETL vs ELT: The Hard Numbers

Batch ETL: Scheduled runs, great for BI dashboards. But latency kills ML use cases.

Real-time ETL? Possible, but Spark clusters cost a fortune.

ELT shines here. Streaming ingest via Kafka to lakehouse, transform with Spark SQL—fraud detection in seconds.

Adoption curve: Databricks reports 70% of new workloads are ELT. Why? Developer velocity. Data scientists query the raw data themselves, self-serve. No ETL priest(ess) gatekeeping.

Costs? ELT slashes them by 50-70%, per Snowflake benchmarks. Transform only what’s queried. Brutal efficiency.

Downsides? Raw data sprawl risks “data swamps.” Governance? Tools like Collibra or Monte Carlo step in, but it’s after-the-fact.

Is ELT Actually Better for Your Stack?

Depends. Monolith ERP? Stick with ETL. Microservices, IoT firehose? ELT.

Historical parallel: RDBMS vs NoSQL. ETL’s like Oracle—structured, safe. ELT’s MongoDB—flexible, schema-on-read.

Prediction: By 2027, 85% of pipelines will be hybrid. ETL for sensitive PII; ELT for analytics.

Corporate hype alert: vendors like Matillion pitch “ETL on steroids” for cloud. Cute, but it’s lipstick on a pig. True ELT uses warehouse-native transforms.

Teams migrating report 3x faster iterations. One fintech swapped ETL for Fivetran+dbt: what took 6 weeks under ETL took 2 days under ELT.

Governance teams freak out initially. But policy-as-code in the form of dbt tests catches issues. Plus, audit trails in lakehouses match ETL’s.
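The idea behind policy-as-code is simple enough to sketch. The snippet below is not dbt’s API; it is a plain Python imitation of the pattern behind dbt’s built-in `not_null` and `unique` tests, where each test is a query that returns the offending rows and passes when it returns none. The `customers` table and helper names are hypothetical.

```python
import sqlite3


def not_null(table, column):
    """Query returning rows that violate a not-null rule."""
    return f"SELECT * FROM {table} WHERE {column} IS NULL"


def unique(table, column):
    """Query returning values that appear more than once."""
    return f"SELECT {column} FROM {table} GROUP BY {column} HAVING COUNT(*) > 1"


def run_checks(conn, checks):
    """Run each check; a check fails if its query returns any rows."""
    failures = {}
    for name, sql in checks.items():
        bad_rows = conn.execute(sql).fetchall()
        if bad_rows:
            failures[name] = len(bad_rows)
    return failures


conn = sqlite3.connect(":memory:")  # stand-in for the warehouse
conn.execute("CREATE TABLE customers (customer_id INTEGER, email TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "a@example.com"), (2, None), (2, "c@example.com")],
)

checks = {
    "customers.email not_null": not_null("customers", "email"),
    "customers.customer_id unique": unique("customers", "customer_id"),
}
failures = run_checks(conn, checks)
```

Because the rules are just code, they live in version control, run in CI, and fail the build before a bad model ships. That’s the answer to the governance team: the checks moved after the load, they didn’t disappear.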

Legacy? Hybrid tools like Stitch bridge the gap.

Future-proof: AI/ML thrives on raw lakes. Vector search in Pinecone? ELT feeds it unfiltered.

When to Pick ETL Over ELT

Strict schemas. Real-time needs at low volume. Regulators who demand that data be cleaned before it lands.

But market dynamics scream ELT. AWS spends billions on lake formation. Google Cloud’s BigQuery ML eats ELT.

ETL market? Stagnant at $10B; the ELT segment is exploding to $20B by 2028, Forrester says.

Don’t sleep—your competitor’s already loading raw.

Frequently Asked Questions

What is the difference between ETL and ELT?

ETL transforms data before loading; ELT loads raw first, transforms in the warehouse—ideal for cloud scale.

When should I use ETL vs ELT?

Use ETL for legacy, compliance-heavy setups; ELT for big data, cloud-native teams chasing speed and cost savings.

Is ELT replacing ETL entirely?

Not yet—hybrids rule, but ELT’s market share surges as warehouses commoditize.