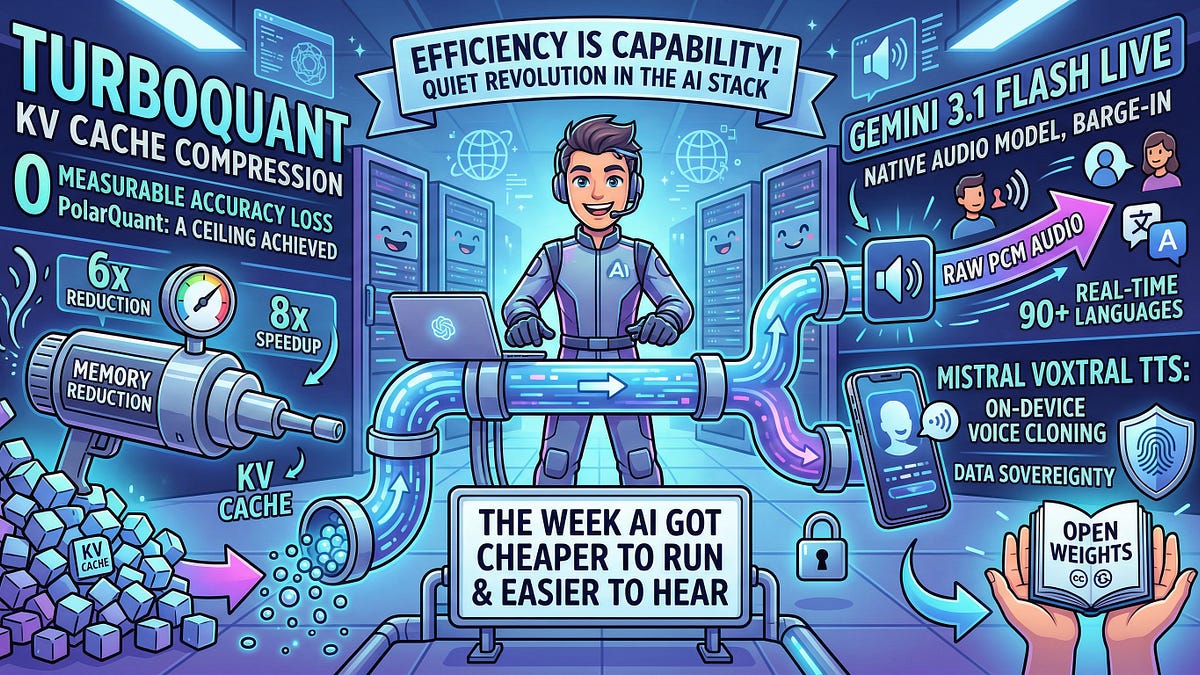

Google Research just dropped a bomb: TurboQuant achieves 3-bit KV cache compression with zero measurable accuracy loss, delivering a 6x memory cut and up to 8x speedup on H100s.

Training-free. Drop-in ready. And its error approaches the Shannon lower bound, meaning we’re staring at something close to the theoretical ceiling of what’s possible through compression alone.

Look, in the brutal economics of LLM serving, memory is king. Context windows balloon to 1M+ tokens, and KV caches grow linearly with them, gobbling GPU HBM like it’s free. Inference costs skyrocket. TurboQuant flips that script.
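To put rough numbers on that, here is a back-of-the-envelope sketch in Python. The model shape (32 layers, 8 KV heads, head dimension 128) is hypothetical and chosen only for illustration, and the helper name `kv_cache_bytes` is ours. It counts raw bits per stored value and ignores any metadata a real quantizer would keep.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bits_per_value):
    """Bytes needed to hold keys and values for one sequence."""
    # Factor of 2: one tensor for keys, one for values, per layer.
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return values * bits_per_value / 8


# Hypothetical model shape (an assumption, not a specific released model).
cfg = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (8_192, 131_072, 1_048_576):
    fp16 = kv_cache_bytes(seq_len=seq_len, bits_per_value=16, **cfg)
    q3 = kv_cache_bytes(seq_len=seq_len, bits_per_value=3, **cfg)
    print(
        f"{seq_len:>9} tokens: fp16 {fp16 / 2**30:7.2f} GiB, "
        f"3-bit {q3 / 2**30:6.2f} GiB, ratio {fp16 / q3:.2f}x"
    )
```

On this assumed shape the fp16 cache costs 128 KiB per token, which is 128 GiB at 1M tokens. Bit width alone gives 16/3 ≈ 5.3x at 3 bits and 16/2.5 = 6.4x at 2.5 bits; the sketch does not reproduce how the quoted 6x figure is measured.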

How Does TurboQuant Actually Work?

It starts with PolarQuant: convert KV vectors from Cartesian to polar coordinates. Why? The angular distributions turn predictable, so there are no more per-block normalization constants, the overhead that undercuts traditional quantization. Then QJL kicks in: a Johnson-Lindenstrauss transform projects each vector and keeps only one sign bit per projected coordinate, balanced by an estimator that keeps attention scores accurate.
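A toy sketch of both ideas, in plain Python, might look like the following. It is not TurboQuant’s implementation. The polar step is simplified to a single level of coordinate pairs with uniformly quantized angles and full-precision radii. The sign-bit step uses the standard Gaussian-projection estimator, in which the query stays unquantized and the key keeps only its signs and norm. All function names are ours, and the projection count `m` is deliberately oversized so the estimate lands visibly close to the exact value.

```python
import math
import random


def polar_quantize_pairs(x, angle_bits):
    """Map consecutive (a, b) pairs to (radius, quantized angle index)."""
    levels = 1 << angle_bits
    out = []
    for a, b in zip(x[0::2], x[1::2]):
        r = math.hypot(a, b)
        theta = math.atan2(b, a)  # angle in [-pi, pi]
        idx = int((theta + math.pi) / (2 * math.pi) * levels) % levels
        out.append((r, idx))
    return out


def polar_dequantize_pairs(pairs, angle_bits):
    """Rebuild the vector using the center of each angle bin."""
    step = 2 * math.pi / (1 << angle_bits)
    x = []
    for r, idx in pairs:
        theta = -math.pi + (idx + 0.5) * step
        x.extend((r * math.cos(theta), r * math.sin(theta)))
    return x


def project(S, x):
    """Multiply the m x d matrix S by the vector x."""
    return [sum(s * v for s, v in zip(row, x)) for row in S]


def qjl_encode_key(S, k):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if p >= 0 else -1 for p in project(S, k)]
    return signs, math.sqrt(sum(v * v for v in k))


def qjl_inner_product(S, q, signs, norm):
    """Estimate <q, k> from the key's sign bits and norm."""
    scale = math.sqrt(math.pi / 2) / len(S)
    return scale * norm * sum(p * s for p, s in zip(project(S, q), signs))


rng = random.Random(0)
d, m = 64, 4096  # m is oversized on purpose; illustrative values only
q = [rng.gauss(0.0, 1.0) for _ in range(d)]
k = [rng.gauss(0.0, 1.0) for _ in range(d)]
S = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]

signs, norm = qjl_encode_key(S, k)
exact = sum(a * b for a, b in zip(q, k))
approx = qjl_inner_product(S, q, signs, norm)
print(f"inner product: exact {exact:.3f}, sign-bit estimate {approx:.3f}")

k_hat = polar_dequantize_pairs(polar_quantize_pairs(k, 4), 4)
max_err = max(abs(a - b) for a, b in zip(k, k_hat))
print(f"polar round-trip, 4-bit angles: max coordinate error {max_err:.3f}")
```

The estimator works because, for a Gaussian row s, the expectation of ⟨s, q⟩·sign(⟨s, k⟩) equals √(2/π)·⟨q, k⟩/‖k‖, so rescaling by √(π/2)·‖k‖ and averaging over rows recovers the inner product on average. Fewer projections save bits at the cost of more variance.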

The numbers? Striking. 2.5 bits per channel with marginal MSE degradation. Superior recall in nearest-neighbor searches. This isn’t hype; it’s information theory flexing.

“The result: 3-bit KV cache compression with zero measurable accuracy loss, 6x memory reduction, and up to 8x speedup on H100s. Training-free. Drop-in.”

That’s straight from the release notes — and it lands like a mic drop.

But here’s my take, the one you won’t find in the original chatter: this echoes the JPEG compression wars of the ’90s. Back then, DCT transforms squeezed images 20:1 without visible artifacts, unleashing web media. TurboQuant? It’s JPEG for AI attention. Expect long-context RAG, agentic workflows, everything to flood consumer apps as costs crater.

Voice Models: End of the Clunky Pipeline?

Shifting gears — voice got two wild bets this week.

Google’s Gemini 3.1 Flash Live ditches the old VAD → STT → LLM → TTS daisy chain. One native audio model. Raw PCM bidirectional. Barge-in mid-sentence. 90+ languages. Scored 36.1% on Scale AI’s Audio MultiChallenge — coherence under interruption, the real killer for voice bots.

Search Live’s deploying it in 200+ countries. Quietly. Globally.

Mistral? Voxtral TTS, 4B params on Ministral 3B backbone. Smartphone-friendly. Clones voice from 5 seconds of audio. 90ms time-to-first-audio. Open weights, Creative Commons. Pitch: data sovereignty for enterprises — your voice, your hardware, no cloud leaks.

Regulated sectors (healthcare, finance) will eat this up.

Why Efficiency Beats Capability Jumps Right Now

No reasoning benchmarks. No AGI teases. Just plumbing.

But plumbing wins wars. Inference cost is the binding constraint on AI’s spread: a cheaper stack means deployment everywhere. TurboQuant solves KV bloat. Native-audio voice collapses the latency stack. Efficiency is capability when scaling’s the game.

Market dynamics scream it: H100s are scarce and run $40k a pop. OpenAI’s burning cash on inference. Anthropic too. These drops from Google and Mistral erode that moat. Hyperscalers serve more users per dollar. Indies run monsters on laptops.

Bold call: by Q4 ’25, 1M-token contexts hit sub-cent inference. Agents roam free. Voice UIs dominate: think Siri 2.0, but uncensored, on-device.

And the PR spin? None here. These are engineer wins, not keynote fluff. Refreshing.

Is This the Floor for AI Costs?

Short answer: damn close.

TurboQuant flirts with theoretical limits. End-to-end voice slashes hops (and errors). Next? Speculative decoding, PagedAttention tweaks, MoE sparsity. But gains halve each year, like Moore’s Law fatigue.

The field’s mature. Plumbing’s optimized. Real leaps demand new architectures — world models, test-time training. Or bust.

Still, bullish. Costs plummet, AI ubiquity follows.

Frequently Asked Questions

What is TurboQuant and how does it compress KV cache? TurboQuant combines PolarQuant (a Cartesian-to-polar transform) with QJL (a Johnson-Lindenstrauss projection reduced to sign bits) for 3-bit compression, hitting 6x memory savings with no measurable accuracy drop.

Gemini 3.1 Flash Live vs traditional voice pipelines? It processes raw audio end-to-end, supports interruptions, 90+ languages — killing the latency and error pile-up of VAD-STT-LLM-TTS chains.

Can Voxtral run on my phone? Yes, 4B params, clones voices fast, 90ms latency — open weights for on-device, private use.