A Google engineer stares at a wall of monitors, vectors bloating memory like overfed hamsters.

TurboQuant. Google’s latest stab at making AI less of a resource hog. They’ve been touting it as the future of efficient inference, and yeah, it’s got my attention — mostly because quantization usually sucks.

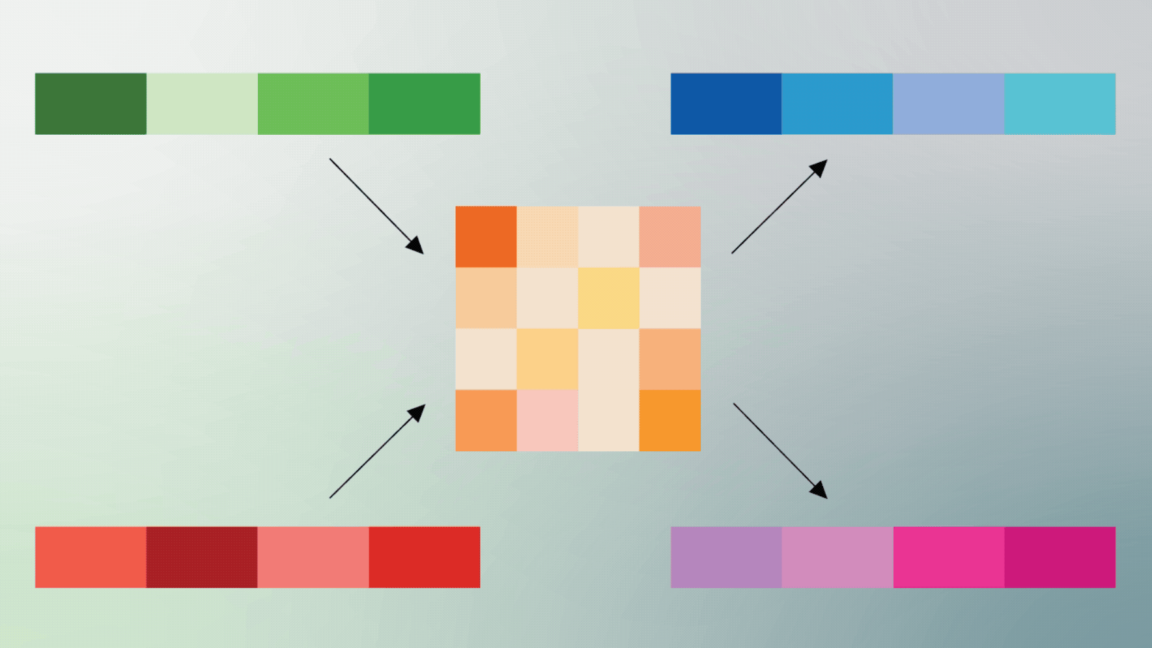

Look, we’ve all seen the pattern. Train a massive model in floating-point paradise. Then hack it down to integers post-training. Pray the accuracy holds. TurboQuant? It flips that script. Treats quantization like geometry homework from day one. Vectors — those high-dimensional beasts powering everything from transformers to recsys — get compressed smartly, preserving the angles that matter for inner products.

Here’s the thing. Inner products rule AI. Dot products for attention. Similarity searches in vector DBs. Recommendations. Multimodal mashups. Mess them up, and your model’s toast. TurboQuant claims to squeeze bits without mangling that geometry. Bold.

Why Does TurboQuant Feel Like a Middle Finger to Old Quantization?

Traditional quantization? Lazy afterthought. Float32 to int8, fingers crossed. Breaks on outliers. Loses nuance in those tail-end vectors. TurboQuant dives into the math — learns quantization codes tied to vector distributions. It’s like custom-fitting a suit, not off-the-rack.
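To see why outliers hurt, here's a minimal sketch in plain Python. It is not TurboQuant and not any library's API: `quantize_absmax`, `dequantize`, and `mean_abs_error` are hypothetical helpers, and the 256-dimension Gaussian vector with one planted outlier is made-up data. It shows the classic absmax int8 recipe, where a single scale per vector is set by the largest coordinate.

```python
import random

def quantize_absmax(vec, bits=8):
    """Symmetric absmax quantization: one scale for the whole vector."""
    qmax = 2 ** (bits - 1) - 1              # 127 for int8
    scale = max(abs(x) for x in vec) / qmax
    if scale == 0.0:                        # all-zero vector
        return [0] * len(vec), 1.0
    codes = [max(-qmax, min(qmax, round(x / scale))) for x in vec]
    return codes, scale

def dequantize(codes, scale):
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]

def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

rng = random.Random(0)
clean = [rng.gauss(0.0, 1.0) for _ in range(256)]   # assumed toy data
spiky = list(clean)
spiky[0] = 100.0                                    # one planted outlier

rec_clean = dequantize(*quantize_absmax(clean))
rec_spiky = dequantize(*quantize_absmax(spiky))

err_clean = mean_abs_error(clean, rec_clean)
# Score only the ordinary coordinates, skipping the outlier itself.
err_spiky = mean_abs_error(spiky[1:], rec_spiky[1:])

print(f"mean abs error, clean vector:   {err_clean:.4f}")
print(f"mean abs error, with outlier:   {err_spiky:.4f}")
```

One fat coordinate stretches the scale, and every ordinary coordinate gets rounded onto a much coarser grid. That is the failure mode the off-the-rack approach keeps hitting.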

And get this: they tie it to the hardware reality. Memory bandwidth chokes inference. Not compute. Moving vectors around? That’s the killer. Compress ’em right, and suddenly your TPU farm breathes easier. Or your phone runs Llama locally without melting.

But wait — is Google spinning PR gold again? They’ve got form. Remember their ‘amazing’ Pathways flop? TurboQuant sounds solid, though. Paper’s out; benchmarks tease 2-4x memory wins with tiny accuracy drops.

“If you can compress vectors aggressively while preserving the geometry that those inner products depend on, you are not just saving memory. You are redesigning the economics of inference itself.”

That’s from the insiders. Spot on. Economics. That’s the real game.

Is TurboQuant Actually Better Than GPTQ or AWQ?

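Short answer: maybe. GPTQ and AWQ are post-training wizards, but they target model weights: they quantize layer by layer, using a small calibration set to limit the damage. TurboQuant? Pitched as end-to-end and geometry-aware, aimed at the vectors themselves. Early numbers reportedly show it edging them on retrieval tasks. Vector search accuracy holds at 4 bits per dim. Nuts.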

Picture a vector DB like Pinecone or Weaviate. Billions of embeddings. Storage costs skyrocket. TurboQuant slashes that: say, 75% less space, which is what 4 bits per dimension buys you against 16-bit floats. Queries fly faster, with little cosine similarity drift. Devs drool.
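The 75% figure is easy to sanity-check. A back-of-envelope sketch, assuming one billion 768-dimensional embeddings (both numbers are illustrative, not from Google or any vendor) and ignoring per-vector metadata and index overhead; `embedding_storage_gib` is a hypothetical helper.

```python
def embedding_storage_gib(num_vectors, dims, bits_per_dim):
    """Raw payload size in GiB, with no index or metadata overhead."""
    total_bits = num_vectors * dims * bits_per_dim
    return total_bits / 8 / 2**30

NUM_VECTORS = 1_000_000_000   # assumed corpus size
DIMS = 768                    # assumed embedding width

fp32_gib = embedding_storage_gib(NUM_VECTORS, DIMS, 32)
fp16_gib = embedding_storage_gib(NUM_VECTORS, DIMS, 16)
q4_gib = embedding_storage_gib(NUM_VECTORS, DIMS, 4)

saving_vs_fp16 = 1 - q4_gib / fp16_gib
saving_vs_fp32 = 1 - q4_gib / fp32_gib

print(f"float32: {fp32_gib:,.1f} GiB")
print(f"float16: {fp16_gib:,.1f} GiB")
print(f"4-bit:   {q4_gib:,.1f} GiB")
print(f"saving vs float16: {saving_vs_fp16:.1%}")
print(f"saving vs float32: {saving_vs_fp32:.1%}")
```

So the baseline matters. Against float16 you land on 75%; against float32 the same 4-bit codes save even more. Real systems also store scales or norms per vector, which eats a little of that back.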

Skeptical me wonders: real-world multimodal? Vision-language vectors with wild distributions? We’ll see. But if it scales, forget cloud-only AI. Edge devices everywhere.

My unique hot take — this echoes JPEG’s DCT magic from the ’90s. Back then, image compression preserved frequencies humans care about. TurboQuant does it for vector manifolds AI cares about. History rhymes; efficiency epochs shift.

And here’s the dry humor: Google’s calling it ‘turbo’ because ‘snailquant’ lacked zing.

Why Vector Economics Will Eat AI Hype Alive

AI’s sexy part? Benchmarks. MMLU scores. Hallucination demos. Yawn. Deployed reality? Vectors. Storing ’em costs fortunes. AWS bills for S3 vector buckets? Oof.

TurboQuant redesigns that. Not just model weights — embeddings too. RAG pipelines? Cheaper. Semantic search? Scalable. Recs at Netflix scale? Without bankruptcy.

Corporate spin alert. Google whispers ‘amazing.’ I say: prove it at 1T vectors. But damn, the math checks out. Perceptual hashing for vectors — that’s the insight. Distortion metrics optimized for inner-product fidelity.
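What does "optimized for inner-product fidelity" look like in practice? Here's a rough sketch, with loud caveats. It measures how far a 4-bit quantized dot product lands from the exact one, with and without a random orthogonal rotation (sign flips plus a Walsh-Hadamard transform) applied first. The rotation is a generic trick for smearing outliers across coordinates; treat it as an illustration, not a description of TurboQuant's internals. Every name here (`fwht`, `rotate`, `quantize_roundtrip`, `mean_ip_error`) is hypothetical, and the data is synthetic.

```python
import math
import random

def fwht(vec):
    """Orthonormal fast Walsh-Hadamard transform (length must be a power of two)."""
    out = list(vec)
    n = len(out)
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = out[j], out[j + h]
                out[j], out[j + h] = a + b, a - b
        h *= 2
    norm = math.sqrt(n)
    return [x / norm for x in out]

def rotate(vec, signs):
    """Random sign flips, then Hadamard: an orthogonal map, so dot products survive."""
    return fwht([s * x for s, x in zip(signs, vec)])

def quantize_roundtrip(vec, bits=4):
    """Absmax-quantize to `bits` and immediately dequantize."""
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(x) for x in vec) / qmax or 1.0
    return [max(-qmax, min(qmax, round(x / scale))) * scale for x in vec]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_ip_error(pairs, transform):
    """Mean absolute inner-product error when only the key side is quantized."""
    total = 0.0
    for q, k in pairs:
        tq, tk = transform(q), transform(k)
        total += abs(dot(tq, quantize_roundtrip(tk)) - dot(q, k))
    return total / len(pairs)

rng = random.Random(0)
d = 128                                    # assumed dimension, power of two
signs = [rng.choice((-1.0, 1.0)) for _ in range(d)]

pairs = []
for _ in range(200):
    q = [rng.gauss(0.0, 1.0) for _ in range(d)]
    k = [rng.gauss(0.0, 1.0) for _ in range(d)]
    k[3] = 50.0                            # one planted outlier per key
    pairs.append((q, k))

plain_err = mean_ip_error(pairs, lambda v: v)
rotated_err = mean_ip_error(pairs, lambda v: rotate(v, signs))

print(f"4-bit, no rotation:   {plain_err:.3f}")
print(f"4-bit, with rotation: {rotated_err:.3f}")
```

The point of the metric: nobody cares whether each coordinate is reconstructed perfectly. What matters is whether the dot product, the thing attention and search actually consume, comes out close. Score the quantizer on that and the design choices change.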

Wander a bit: think recommenders. Spotify’s playlist magic? Vector sims. TurboQuant lets ’em run on consumer GPUs. No more data center feudalism.

Prediction: by 2026, this sparks an on-device vector DB boom. Phones with private RAG. Your Notes app, semantically linked. Google’s planting seeds for that.

The Catch — Because There Always Is One

Outliers. Noisy data. Dynamic ranges in real embeddings. TurboQuant shines on clean benchmarks — ImageNet, LAION. User-gen slop? Jury’s out.

Plus, training overhead. It’s not free. You bake it in from fine-tune start. Lazy teams stick to PTQ tools.

Still. Paradigm shift brewing. Vectors as first-class citizens. Not second-string weights.

What Happens When Everyone TurboQuants?

Inference costs plummet. 10x? Latency drops. Apps everywhere. But — job shakeup for infra engineers? Nah. They pivot to vector orchestration.

Google wins TPU loyalty. Competitors scramble: expect NVIDIA’s next TensorRT drop to copy it.

Hype check: not revolutionary. Evolutionary brilliance. But in AI’s gold rush, that’s gold.

Frequently Asked Questions

What is Google TurboQuant? TurboQuant is Google’s quantization technique that compresses AI vectors while preserving their geometric properties for better inner-product accuracy in inference.

How does TurboQuant improve AI efficiency? It reduces memory use by 2-4x with minimal accuracy loss, targeting vector databases, retrieval, and recommenders — making inference cheaper and faster.

Will TurboQuant work on my hardware? Yes, it’s designed for TPUs but ports to GPUs; expect open-source tools soon for broad adoption.
