Picture this: you’re mid-prompt, LLM humming along, then bam, out of memory. Laptop fans scream. Google Research just dropped TurboQuant, their shiny new AI-compression algorithm promising to shrink the memory large language models burn on their key-value cache by 6x. No quality dip. Speed boost too. Sounds dreamy, right?

Zoom out. We’ve all felt the pinch. RAM prices through the roof because every coder, startup, and grandma with a chatbot needs gigabytes just to chat about cats. TurboQuant targets the key-value cache, that bloated “digital cheat sheet,” as Google calls it, which stores each earlier token’s key and value vectors so the model doesn’t recompute them for every new token.

Cute metaphor. But let’s cut the fluff. LLMs represent meaning as high-dimensional vectors: hundreds or thousands of dimensions encoding text semantics (or image features, in multimodal models). Similar vectors? Conceptual cousins. Problem: a model caches keys and values for every layer and every token, so those vectors guzzle memory, balloon the cache, and throttle everything.

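Standard fix? Quantization. Cram those floats into lower precision. Cheaper, sure. But push the bits too low and outputs turn to mush: token predictions wobble, coherence crumbles. Most schemes also store a full-precision scale for every small block of values, which eats into the savings.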
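To see why low-bit outputs can turn to mush, here is a minimal sketch of plain absmax (symmetric, uniform) quantization. This is a common baseline, not TurboQuant. The function names are hypothetical. A single large outlier inflates the block’s scale, the small values collapse to zero, and every block still has to carry its own float scale.

```python
def quantize_absmax(values, bits):
    """Symmetric uniform quantization of one block: small ints plus one float scale."""
    levels = 2 ** (bits - 1) - 1               # e.g. 7 for signed 4-bit codes
    scale = max(abs(v) for v in values) / levels
    if scale == 0.0:                           # all-zero block: any scale works
        return [0] * len(values), 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale

def dequantize_absmax(codes, scale):
    """Map integer codes back to floats."""
    return [c * scale for c in codes]

# One block with a single large outlier.
block = [0.02, -0.01, 0.03, 0.015, -0.025, 4.0]
codes, scale = quantize_absmax(block, bits=4)
restored = dequantize_absmax(codes, scale)
print(codes)     # [0, 0, 0, 0, 0, 7]: the small values vanish
```

The outlier survives almost exactly, while everything else is rounded to zero. The stored `scale` is the per-block overhead.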

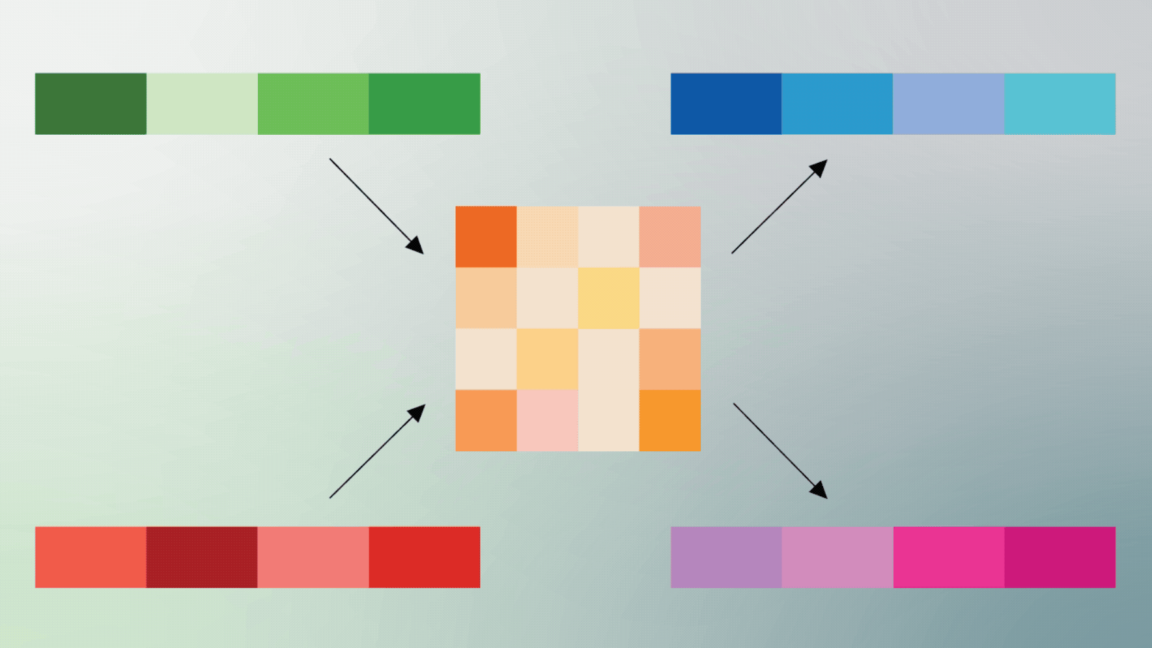

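TurboQuant flips the script. Two steps. First, PolarQuant. Ditch Cartesian XYZ for polar coordinates. Vectors become a radius (how strong the signal is) plus a direction (what it means). Circular grid magic, supposedly. Second, a 1-bit cleanup pass Google calls QJL (Quantized Johnson-Lindenstrauss), which mops up the error PolarQuant leaves behind.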
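Here is a toy two-dimensional version of the polar idea. It converts an (x, y) pair to a radius and an angle, then snaps the angle to one of 2^bits evenly spaced points on the circle. The uniform angle grid and the helper names are assumptions for illustration. Google’s PolarQuant works on much higher-dimensional vectors, and its details may differ.

```python
import math

def quantize_angle(theta, bits):
    """Snap an angle (radians) to one of 2**bits evenly spaced points on the circle."""
    n = 2 ** bits
    step = 2 * math.pi / n
    return round(theta / step) % n            # integer code in [0, n)

def dequantize_angle(code, bits):
    return code * 2 * math.pi / 2 ** bits

def polar_roundtrip(x, y, bits=4):
    """Cartesian -> (radius, quantized angle) -> Cartesian. The radius is kept exact here."""
    r = math.hypot(x, y)                      # magnitude
    theta = math.atan2(y, x)                  # direction, in (-pi, pi]
    t = dequantize_angle(quantize_angle(theta, bits), bits)
    return r * math.cos(t), r * math.sin(t)

x, y = polar_roundtrip(3.0, 4.0)
print(round(math.hypot(x, y), 6))             # 5.0: length survives, only direction is rounded
```

With 4 bits, the worst-case angle error is half a grid step (π/16 radians), and the radius comes through untouched.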

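Here’s the thing: it’s clever. Separating direction from magnitude puts the angles in a known, tightly bounded range. That means they can be quantized on a fixed grid without storing a scale for every block, while the radius carries the magnitude. Google boasts up to 8x faster attention computation (4-bit TurboQuant versus 32-bit keys on H100 GPUs) and 6x smaller KV caches in tests. Llama models, mind you. No quality loss per their charts.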

Does TurboQuant Actually Deliver 6x Savings?

Skeptical snort. Early results dazzle: apply it to decoder-only LLMs and watch the KV cache shrink. But the tests? Controlled. Long contexts, sure, where caches explode anyway. Real world? Deployed inference servers juggling thousands of users. Mixed workloads. Edge devices with wonky hardware.

And PolarQuant? Converting to polar isn’t free: extra trig on every vector that goes into the cache. Google glosses over that. Their benchmarks scream wins, but the baselines? Possibly cherry-picked.

Look, I’ve seen this rodeo. Remember the low-bit quantization rush a few years back? Everyone piled in, and the most aggressive settings hallucinated worse than a drunk uncle. TurboQuant sidesteps that with its polar twist, which evens out quantization noise across magnitudes. Unique angle: it’s like logarithmic scales for vectors, echoing old audio companding tricks such as the μ-law coding of digital telephony. History whispers: good in labs, meh at scale.
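The “logarithmic scale” analogy can be made concrete with μ-law companding, a classic audio trick. You squash values onto a log-like curve, quantize uniformly, then expand. This sketch uses μ = 255 with hypothetical helper names, and it only illustrates the analogy; it is not TurboQuant. Companding keeps the relative error of tiny values small, where plain uniform quantization rounds them to zero.

```python
import math

MU = 255.0  # the mu parameter of G.711 mu-law telephony

def compress(x):
    """Map x in [-1, 1] onto a log-like curve, also in [-1, 1]."""
    return math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)

def expand(y):
    """Exact inverse of compress()."""
    return math.copysign(((1.0 + MU) ** abs(y) - 1.0) / MU, y)

def uniform_q(v, bits):
    """Round v in [-1, 1] to a signed uniform grid."""
    levels = 2 ** (bits - 1) - 1
    return round(v * levels) / levels

def relative_error(x, bits, companded):
    xq = expand(uniform_q(compress(x), bits)) if companded else uniform_q(x, bits)
    return abs(xq - x) / abs(x)

for x in (0.001, 0.9):
    print(x, relative_error(x, 8, False), relative_error(x, 8, True))
```

At 8 bits, x = 0.001 rounds to zero without companding (100% relative error) but comes back within a few percent with it. Large values stay accurate either way.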

But credit where due. If it holds, Grok-level models on phones get a lot closer, at least where the cache is concerned. No cloud crutch.

Short para. Bold claim.

Why the Key-Value Cache Matters (And Why It Sucks)

LLMs chug autoregressively. Each new token attends to all prior keys and values. The cache grows linearly with sequence length. 128k contexts? Tens of gigabytes per sequence on a 70B-class model, multiplied across every user in the fleet. No joke.
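Here is back-of-envelope arithmetic for the cache size. It assumes a Llama-3-70B-like shape (80 layers, 8 grouped-query KV heads, head dimension 128) stored in fp16. Every token stores one key vector and one value vector per layer per KV head.

```python
GIB = 2 ** 30

def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_elem=2, batch=1):
    """Bytes needed to cache keys and values for `batch` sequences of `seq_len` tokens."""
    # Factor 2: one key vector and one value vector per layer, per KV head, per token.
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_elem * batch

# Assumed Llama-3-70B-like shape, fp16 (2 bytes per element), one 128k-token sequence.
full = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, seq_len=128 * 1024)
print(full / GIB)        # 40.0 GiB
print(full / 6 / GIB)    # roughly 6.67 GiB if the cache really shrinks 6x
```

Multiply by the batch for concurrent users: 100 such sequences need about 4,000 GiB before compression.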

TurboQuant shrinks that beast without the recompute pain. PolarQuant shines here: directional similarity compresses better than brute force. A d-dimensional vector becomes one radius plus d − 1 angles, so nearly the whole bit budget goes to the angles.
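One way to do the d-dimensional version is a recursive polar transform. You pair up coordinates into (radius, angle), then pair up the resulting radii, and repeat until one radius is left. It is our assumption that this captures the flavor of PolarQuant. It is not Google’s code, and the real method may include steps this skips. Note that every angle above the first level is computed from nonnegative radii, so it always falls in [0, π/2]. That bounded, predictable range is the kind of structure a fixed quantization grid can exploit.

```python
import math

def recursive_polar(v):
    """Map a length-2**k vector to one radius and len(v) - 1 angles.

    Level 1 pairs up raw coordinates; every later level pairs up the radii from
    the level before, until a single radius (the vector's norm) remains.
    """
    assert len(v) & (len(v) - 1) == 0, "length must be a power of two"
    angles, level = [], list(v)
    while len(level) > 1:
        radii = []
        for a, b in zip(level[0::2], level[1::2]):
            angles.append(math.atan2(b, a))   # later levels: a, b >= 0, so angle in [0, pi/2]
            radii.append(math.hypot(a, b))
        level = radii
    return level[0], angles

def inverse_polar(radius, angles):
    """Undo recursive_polar exactly (up to floating-point rounding)."""
    sizes, n = [], len(angles) + 1
    while n > 1:                 # angle counts per level: n/2, n/4, ..., 1
        n //= 2
        sizes.append(n)
    levels, start = [], 0
    for s in sizes:
        levels.append(angles[start:start + s])
        start += s
    current = [radius]
    for level_angles in reversed(levels):   # rebuild from the top radius down
        nxt = []
        for r, t in zip(current, level_angles):
            nxt.extend([r * math.cos(t), r * math.sin(t)])
        current = nxt
    return current

v = [1.0, -2.0, 0.5, 3.0, -1.5, 0.25, 2.0, -0.75]
r, angles = recursive_polar(v)
print(len(angles))   # 7 angles for an 8-dimensional vector, plus one radius
```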

Google’s paper (yeah, they bothered publishing) shows perplexity flatlines. The speed comes from the smaller cache: fewer bytes to haul out of memory on every decode step. Win-win?
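Why does a smaller cache mean faster decoding? Generating a token is usually memory-bound, since every cached key and value is read once per step. This sketch computes that lower bound under assumed numbers: a 40 GiB fp16 cache and about 3 TB/s of memory bandwidth. It ignores weight reads and dequantization cost, so real timings are higher.

```python
GIB = 2 ** 30

def decode_step_floor_ms(cache_bytes, bandwidth_bytes_per_s):
    """Lower bound for one decode step if every cached K/V byte is read once."""
    return cache_bytes / bandwidth_bytes_per_s * 1e3

CACHE = 40 * GIB   # assumed fp16 KV cache for one long-context sequence
BW = 3e12          # assumed ~3 TB/s of GPU memory bandwidth

print(decode_step_floor_ms(CACHE, BW))       # about 14.3 ms
print(decode_step_floor_ms(CACHE / 6, BW))   # about 2.4 ms with a 6x smaller cache
```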

Pause. Corporate spin alert. “Maintaining accuracy”—their words. But metrics? Strict evals on toys like WikiText. Real tasks? Coder agents, RAG pipelines. Untested.

PolarQuant: Genius or Gimmick?

Break it down. Take a vector v = (x, y, z). In spherical (3D polar) coordinates: r = √(x² + y² + z²), θ = arccos(z / r), φ = atan2(y, x). Quantize r logarithmically, so a handful of bits covers spans like 1e-5 to 1e3. Angles? Binned smart on a fixed grid.
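Here is the recipe in that paragraph, sketched in Python under its own assumptions: log-spaced radius bins over 1e-5 to 1e3 and uniform bins for both angles, 8 bits each. It is a toy 3D illustration of the description above, not PolarQuant itself. All names are hypothetical.

```python
import math

R_MIN, R_MAX = 1e-5, 1e3   # radius span from the text; values outside get clamped

def to_spherical(x, y, z):
    r = math.sqrt(x * x + y * y + z * z)
    theta = math.acos(max(-1.0, min(1.0, z / r))) if r > 0 else 0.0  # polar angle, [0, pi]
    phi = math.atan2(y, x)                                           # azimuth, (-pi, pi]
    return r, theta, phi

def from_spherical(r, theta, phi):
    return (r * math.sin(theta) * math.cos(phi),
            r * math.sin(theta) * math.sin(phi),
            r * math.cos(theta))

def q_uniform(v, lo, hi, bits):
    """Integer code for v on a uniform grid of 2**bits points over [lo, hi]."""
    return round((min(max(v, lo), hi) - lo) / (hi - lo) * (2 ** bits - 1))

def dq_uniform(code, lo, hi, bits):
    return lo + code / (2 ** bits - 1) * (hi - lo)

def quantize(x, y, z, bits=8):
    r, theta, phi = to_spherical(x, y, z)
    lo, hi = math.log(R_MIN), math.log(R_MAX)
    # log(r) on a uniform grid = log-spaced radius bins: same relative error everywhere
    return (q_uniform(math.log(max(r, R_MIN)), lo, hi, bits),
            q_uniform(theta, 0.0, math.pi, bits),
            q_uniform(phi, -math.pi, math.pi, bits))

def dequantize(codes, bits=8):
    cr, ct, cp = codes
    r = math.exp(dq_uniform(cr, math.log(R_MIN), math.log(R_MAX), bits))
    return from_spherical(r, dq_uniform(ct, 0.0, math.pi, bits),
                          dq_uniform(cp, -math.pi, math.pi, bits))

codes = quantize(1.0, 2.0, 2.0)
print(codes, dequantize(codes))
```

With 8 bits spread over eight decades, each radius bin spans a ratio of about 1.075. The worst-case relative error on r therefore stays under 4%, whether r is 1e-4 or 700.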

Dequantize on the fly. Cache entries plummet. Google reports that a few bits per coordinate (roughly 3 to 4), not per vector, are enough to hold quality.
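How does a per-coordinate bit budget turn into a compression ratio? Here is a quick accounting sketch. It assumes a head dimension of 128, an fp16 baseline, and one 16-bit radius stored alongside d − 1 quantized angles per vector. The function name and this bit layout are hypothetical; real schemes may budget bits differently.

```python
def compression_ratio(dim, angle_bits, radius_bits=16, baseline_bits=16):
    """fp16 baseline vs. a polar code: (dim - 1) quantized angles plus one radius.

    Hypothetical accounting; real schemes may budget bits differently.
    """
    original = dim * baseline_bits
    compressed = (dim - 1) * angle_bits + radius_bits
    return original / compressed

for b in (2, 3, 4):
    print(b, round(compression_ratio(128, b), 2))
```

At head dimension 128 this prints ratios of about 7.59, 5.16 and 3.91 for 2, 3 and 4 bits per angle. The single radius is almost free, so the per-coordinate bit count sets the ratio.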

Dry laugh. Reminds me of JPEG’s DCT pipeline: transform, quantize, Huffman-code. AI’s borrowing image tricks now. Prediction: this sparks a compression arms race, with OpenAI and Anthropic scrambling. But Google’s gatekeeping the code: research drop, no GitHub. Classic.

Critique time. They hype “no loss,” but graphs show tiny perplexity bumps at extremes. Fine for chatbots. Disaster for precision tasks? Jury out.

And hardware? Polar ops need custom kernels. CUDA? Fine. Phones? ARM roulette.

Will TurboQuant Fix AI’s RAM Apocalypse?

Short answer: maybe. Long? Nah.

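RAM gouge persists. Data centers still thirsting. But edge inference? TurboQuant could democratize it: long contexts on an M1 Mac without the cache eating all your RAM. The weights still have to fit, though. Cache compression doesn’t touch Llama-70B’s roughly 140 GB of fp16 weights.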

Unique insight: this echoes FlashAttention’s rethink of attention around memory traffic rather than raw math. TurboQuant pushes further with a geometric rethink. Bold call: by 2026, 90% of deployments quantize polar-style. Or it flops like the INT4 rushes.

Hype check. Google’s PR spins lab wins as deploy-ready. It’s not. Needs ecosystem buy-in. TensorRT? ONNX? Crickets.

Still, props. In a field of bloatware, compression kings rule.

The Real Hurdle: Beyond the Cache

TurboQuant nails KV. But LLMs bloat everywhere: weights, activations. Full stack? Pair it with weight quantizers like AWQ or GPTQ. Synergies?
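Here is how the two kinds of compression stack, as rough arithmetic. The assumptions are an 8B-parameter model with a Llama-3-8B-like cache shape, 4-bit weights of the sort AWQ or GPTQ produce, and a cache that shrinks 6x. Activations and quantization metadata are ignored, and the helper name is hypothetical.

```python
GIB = 2 ** 30

def footprint_gib(params, weight_bits, kv_bytes, kv_ratio=1.0):
    """Weights plus KV cache, ignoring activations and quantization metadata."""
    return (params * weight_bits / 8 + kv_bytes / kv_ratio) / GIB

# Assumed 8B-parameter model with a Llama-3-8B-like KV shape:
# 32 layers x 8 KV heads x 128 dims x 2 (K and V) x 2 bytes (fp16) per token.
kv_32k = 2 * 32 * 8 * 128 * 2 * 32 * 1024    # one 32k-token sequence = 4 GiB

print(footprint_gib(8e9, 16, kv_32k))               # everything fp16
print(footprint_gib(8e9, 4, kv_32k))                # 4-bit weights only
print(footprint_gib(8e9, 4, kv_32k, kv_ratio=6))    # 4-bit weights + 6x smaller cache
```

That works out to roughly 18.9, 7.7 and 4.4 GiB. Once the weights are squeezed, the cache becomes a large share of what remains.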

Tests hint yes. But Google’s solo. Open-source it, folks.

Wander a sec. Remember the H100 shortages? This eases the pressure. Cheaper runs = more experiments = faster progress. Double-edged: more AI slop floods the web.

Frequently Asked Questions

What is Google’s TurboQuant?

TurboQuant is a compression algorithm for LLM key-value caches. Its PolarQuant stage converts vectors to polar coordinates, and Google reports 6x smaller caches plus faster attention.

Does TurboQuant reduce LLM memory by 6x without losing quality?

Lab tests say yes: a 6x smaller cache, up to 8x faster attention computation, and perplexity that holds. Real-world use is unproven.

Can I use TurboQuant on my own models today?

Not yet. The research paper is out, but there’s no official code. Watch for open-source reimplementations.