AI Research

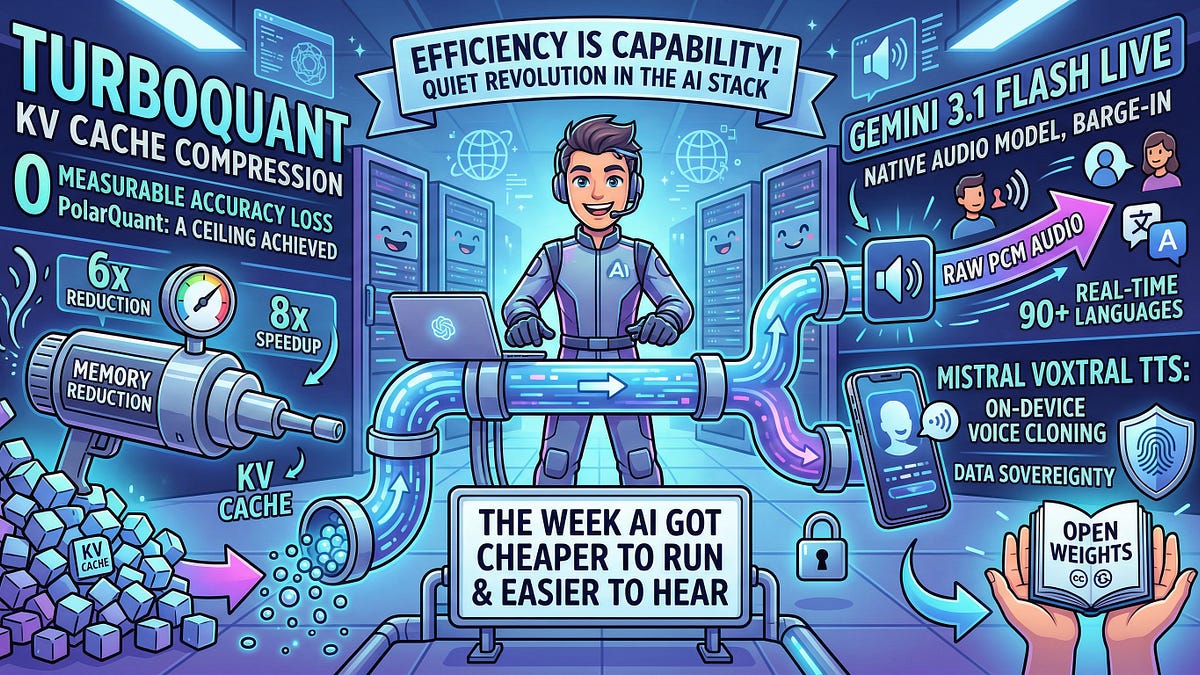

TurboQuant's 6x KV Cache Slash: The Inference Efficiency Leap No One Saw Coming

A 6x reduction in KV cache memory for LLM inference on H100 GPUs. That's TurboQuant, and it's just the start of AI's quiet efficiency revolution.