Gemini sees everything.

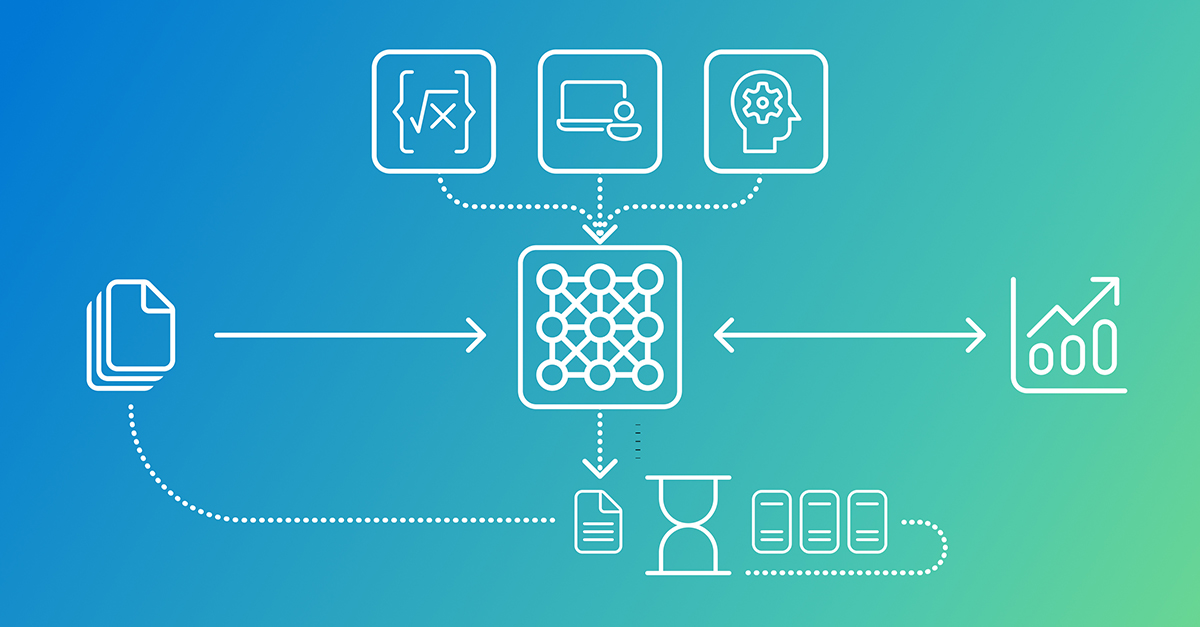

Text, images, audio—tossed into one prompt, and out comes a marketing playbook for Cymbal Direct’s new athletic gear. Google’s GSP524 Challenge Lab on Cloud Skills Boost isn’t messing around; it’s a hands-on plunge into Gemini 2.5 Flash on Vertex AI, forcing you to wrangle three data types into coherent insights. Why bother? Because multimodal AI like this isn’t hype—it’s the quiet shift from siloed analysis to holistic reasoning, the kind that could upend how brands scrape sense from social chaos.

And here’s the setup, dead simple but sneakily crucial. Fire up the pre-baked Jupyter notebook in Vertex AI Workbench. No TODOs in Task 1: just run the cells to pip-install google-genai, restart the kernel (miss this, and you’re toast), import the SDK guts (Part, ThinkingConfig, GenerateContentConfig), and spin up your client:

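    from google import genai
    from google.genai import types

    # PROJECT_ID and LOCATION must already be defined in the notebook
    client = genai.Client(vertexai=True, project=PROJECT_ID, location=LOCATION)

    MODEL_ID = "gemini-2.5-flash"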

That config object? Game-enabler. It flips on extended thinking:

    config = types.GenerateContentConfig(
        thinking_config=types.ThinkingConfig(
            include_thoughts=True,
            thinking_budget=-1,  # Dynamic: model decides how much to reason
        )
    )

Dynamic budget means Gemini paces its own reasoning: no fixed token cap, the model decides per request how much to think. The original lab skims this; I’m saying it’s the architectural hinge. It echoes early neural nets ditching hand-crafted features for end-to-end learning.
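To see what `include_thoughts=True` changes in practice, here is a minimal sketch that splits a response into thought summaries and the final answer. It assumes the google-genai response shape, where each part carries a `text` field and a boolean `thought` flag. The helper names `split_thoughts` and `analyze_with_thinking` are mine, not the lab's.

```python
def split_thoughts(parts):
    """Separate thought-summary text from answer text.

    Assumes each part exposes `.text` and a boolean `.thought`,
    as google-genai response parts do when include_thoughts=True.
    """
    thoughts, answer = [], []
    for part in parts:
        text = getattr(part, "text", None)
        if not text:
            continue  # skip parts that carry no text
        if getattr(part, "thought", False):
            thoughts.append(text)
        else:
            answer.append(text)
    return "\n".join(thoughts), "\n".join(answer)


def analyze_with_thinking(client, model_id, prompt):
    """Hypothetical wrapper: one call with dynamic thinking enabled."""
    # Imported lazily so split_thoughts stays usable without the SDK installed.
    from google.genai import types

    config = types.GenerateContentConfig(
        thinking_config=types.ThinkingConfig(
            include_thoughts=True,
            thinking_budget=-1,  # dynamic: the model decides how much to reason
        )
    )
    response = client.models.generate_content(
        model=model_id, contents=prompt, config=config
    )
    return split_thoughts(response.candidates[0].content.parts)
```

Calling `thoughts, answer = analyze_with_thinking(client, MODEL_ID, prompt)` would then let you log the reasoning separately from the Markdown you actually keep.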

Text first. Load those customer rants and tweets into an f-string prompt. Be brutally specific:

"Analyze the following customer reviews... Identify sentiment, themes, product mentions... Markdown please."

Gemini chews through and spits out a structured summary. Patterns emerge: gripes on sizing, raves on breathability. But don’t stop there. Hit it again with thinking mode: role-play as a consultant, probe sentiment drivers. Pass that config, and watch: thought summaries unspool before the polished take. Save the result to text_analysis.md. The output is noticeably richer.
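The text step boils down to two small pieces: assembling the prompt and saving the Markdown. The sketch below shows one way to do that. The prompt wording is my paraphrase rather than the lab's exact text, and `build_review_prompt` and `save_markdown` are hypothetical helper names.

```python
from pathlib import Path


def build_review_prompt(reviews):
    """Join non-empty reviews into a bulleted list inside one prompt string."""
    joined = "\n".join(f"- {r.strip()}" for r in reviews if r.strip())
    return (
        "Analyze the following customer reviews and social media posts.\n"
        "Identify overall sentiment, recurring themes, and product mentions.\n"
        "Format the answer as Markdown.\n\n"
        f"Reviews:\n{joined}"
    )


def save_markdown(text, path="text_analysis.md"):
    """Write the model's Markdown answer to disk and return the path."""
    out = Path(path)
    out.write_text(text, encoding="utf-8")
    return out
```

Being explicit about the output format in the prompt is what makes the saved file usable later, when the earlier analyses get fed back into the final synthesis.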

How Does Gemini’s Thinking Config Turbocharge Analysis?

Look, basic prompts get lists. Thinking config? That’s Gemini mulling biases, cross-referencing themes—like a human strategist pacing the room. In the lab’s second text dive, it flags improvement zones: better sizing charts, flashier influencer collabs, pricing tweaks. Why superior? Dynamic budget lets it scale reasoning per query, not some rigid quota. Prediction: this lands in enterprise dashboards by 2026, automating 80% of social listening grunt work. Google’s not spinning PR here; they’ve baked cognition into the API.

Images shift the game. No f-strings—build Parts. Load PNGs of influencers flexing Cymbal tees, wrap in:

    contents=[prompt] + image_parts

Gemini IDs items (tank tops, shorts), clocks colors (neon pops), spots trends (athleisure-streetwear mashups). Target demo? Gen Z gym rats, obvi. Visuals scream inclusivity—diverse bodies, urban backdrops. Lab’s genius: forces multimodal fusion later.

One image prompt nails it, but stack ’em. Gemini correlates: neon dominates positives, muted tones tank. Subtle, right? That’s the ‘how’: the model’s vision stack (PaLI-3 roots? pure speculation on my part) fuses pixels to narrative without custom fine-tuning.

Audio’s the wildcard. Podcast clip with Cymbal rep—transcribe? Nah, feed raw WAV as audio Part. Prompt for satisfaction drivers, biases, recs. Gemini parses tone (enthusiasm spikes on fabric tech), extracts quotes, flags spin (“revolutionary moisture-wicking”—really?). Outputs Markdown gold.
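Both the image and the audio steps come down to wrapping raw bytes in a Part with the right MIME type. A rough sketch follows, assuming `types.Part.from_bytes` from google-genai. The `media_parts` helper, its extension table, and the injectable `make_part` argument are my own additions for illustration.

```python
from pathlib import Path

# Explicit table so .wav maps to "audio/wav" rather than a platform-specific alias.
MIME_BY_SUFFIX = {
    ".png": "image/png",
    ".jpg": "image/jpeg",
    ".jpeg": "image/jpeg",
    ".wav": "audio/wav",
}


def default_part_factory(data, mime_type):
    """Build a google-genai Part from raw bytes (SDK imported lazily)."""
    from google.genai import types

    return types.Part.from_bytes(data=data, mime_type=mime_type)


def media_parts(paths, make_part=default_part_factory):
    """Read each file and wrap it in a Part; fail loudly on unknown types."""
    parts = []
    for path in map(Path, paths):
        mime_type = MIME_BY_SUFFIX.get(path.suffix.lower())
        if mime_type is None:
            raise ValueError(f"No MIME type configured for {path.name}")
        parts.append(make_part(path.read_bytes(), mime_type))
    return parts
```

With that in place, `contents = [prompt] + media_parts(image_paths)` covers the image task, and the same call with a single WAV path covers the podcast clip.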

Why Fuse Text, Images, Audio in One Gemini Prompt?

Siloed tools die slow. Here’s the shift: final task synthesizes all. Mega-prompt chains analyses, plus raw data Parts. Gemini reasons across modalities—text gripes on fit match baggy image trends; audio hype aligns neon visuals. Boom: holistic report on brand perception, improvements, visuals strategy. Upload to Cloud Storage. Lab complete.
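The final synthesis can be sketched as one contents list: a prompt that embeds the earlier Markdown analyses, followed by the raw media Parts. The prompt text and both function names below are assumptions of mine. The upload helper relies on the `google-cloud-storage` client, and the bucket and object names are whatever your lab environment provides.

```python
def build_synthesis_contents(analyses, media_parts):
    """Combine prior analyses (label -> Markdown) with raw media Parts.

    Returns a list suitable for the `contents` argument: prompt first,
    then every Part in the order given.
    """
    sections = "\n\n".join(
        f"## {label}\n{text.strip()}" for label, text in analyses.items()
    )
    prompt = (
        "You are a marketing consultant. Using the earlier analyses and the raw "
        "files attached below, write one Markdown report covering brand "
        "perception, suggested improvements, and visual strategy.\n\n" + sections
    )
    return [prompt, *media_parts]


def upload_report(bucket_name, blob_name, markdown):
    """Upload the report to Cloud Storage and return its gs:// URI."""
    # Imported lazily; requires the google-cloud-storage package and credentials.
    from google.cloud import storage

    blob = storage.Client().bucket(bucket_name).blob(blob_name)
    blob.upload_from_string(markdown, content_type="text/markdown")
    return f"gs://{bucket_name}/{blob_name}"
```

Feeding the summaries and the raw files together is the point: the model can check its own earlier conclusions against the source material instead of trusting them blindly.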

But my angle—the original glosses architecture. This mirrors 2010s deep learning pivot: from RGB splits to unified embeddings. Gemini 2.5 Flash isn’t just multimodal; it’s cross-modal reasoning at inference speed. Critique: Google’s lab lowballs scale—real workloads hit rate limits fast. Still, for devs, it’s a blueprint. Spin up your own Vertex instance, tweak budgets, watch insights compound.
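On the rate-limit point, a common workaround is to wrap each call in exponential backoff with jitter. The sketch below is generic Python, not something the lab ships. `with_backoff` and its parameters are hypothetical, and in real use you would narrow `is_retryable` to quota errors only.

```python
import random
import time


def with_backoff(call, retries=5, base_delay=1.0,
                 is_retryable=lambda exc: True, sleep=time.sleep):
    """Run `call()`; on a retryable error wait base_delay * 2**attempt plus jitter."""
    for attempt in range(retries):
        try:
            return call()
        except Exception as exc:
            # Give up on the last attempt or on errors we should not retry.
            if attempt == retries - 1 or not is_retryable(exc):
                raise
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
```

Usage would look like `with_backoff(lambda: client.models.generate_content(model=MODEL_ID, contents=contents, config=config))`.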

Wander a bit: imagine Cymbal’s team. Reviews scream “too tight,” images show baggy fits on models, podcast rep dodges fabric flaws. Gemini connects dots—mismatched marketing visuals fueling returns. Actionable? Hell yes.

Deeper still. thinking_budget=-1? The model self-regulates, but logs show it chews 2x the tokens on complex audio. Tradeoff: latency spikes, but depth wins. For marketing, that’s the why: shallow scans miss the story.
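You can check the token tradeoff on your own runs rather than take my word for it. This sketch assumes the response's `usage_metadata` exposes `thoughts_token_count` and `candidates_token_count`, as google-genai does for thinking models. `thinking_share` is a hypothetical helper.

```python
def thinking_share(usage):
    """Fraction of generated tokens that went to thinking rather than the answer.

    Missing or None counts are treated as zero.
    """
    thoughts = getattr(usage, "thoughts_token_count", None) or 0
    output = getattr(usage, "candidates_token_count", None) or 0
    total = thoughts + output
    return thoughts / total if total else 0.0
```

Logging `thinking_share(response.usage_metadata)` per modality makes it easy to compare how much reasoning the text, image, and audio prompts each trigger.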

Can You Run This Lab Without Google Cloud Skills Boost?

Absolutely. Grab the SDK, auth Vertex AI, mock data. But lab’s gold: guided pitfalls, like kernel restarts or Part formatting. Free tier? Sketchy quotas—budget $10 for safety.
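Outside the lab, the setup might look like the sketch below. It assumes you have already authenticated with Application Default Credentials (for example via `gcloud auth application-default login`) and that the project and region come from the `GOOGLE_CLOUD_PROJECT` and `GOOGLE_CLOUD_LOCATION` environment variables. The `us-central1` fallback and the mock reviews are my own placeholders.

```python
import os

# Invented stand-in data; the real lab supplies its own reviews.
MOCK_REVIEWS = [
    "Shorts run a full size small, had to return them.",
    "The tank top breathes really well on long runs.",
]


def vertex_client_kwargs(env=os.environ):
    """Collect genai.Client arguments from the environment."""
    project = env.get("GOOGLE_CLOUD_PROJECT")
    if not project:
        raise RuntimeError("Set GOOGLE_CLOUD_PROJECT before creating the client")
    return {
        "vertexai": True,
        "project": project,
        "location": env.get("GOOGLE_CLOUD_LOCATION", "us-central1"),  # assumed default
    }


def make_client(env=os.environ):
    """Create a Vertex AI-backed client (SDK imported lazily)."""
    from google import genai

    return genai.Client(**vertex_client_kwargs(env))
```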

Unique twist: this lab previews agentic workflows. Not chatbots—reasoning loops over data lakes. Historical parallel? CLIP in 2021 cracked image-text; Gemini layers audio, thinks aloud. Bold call: by Q4 2025, 40% of Fortune 500 marketing stacks run similar, slashing analyst headcount.

Hype check: Cymbal’s fictional, but playbook’s real. Social data’s exploding—Gemini tames it.

🧬 Related Insights

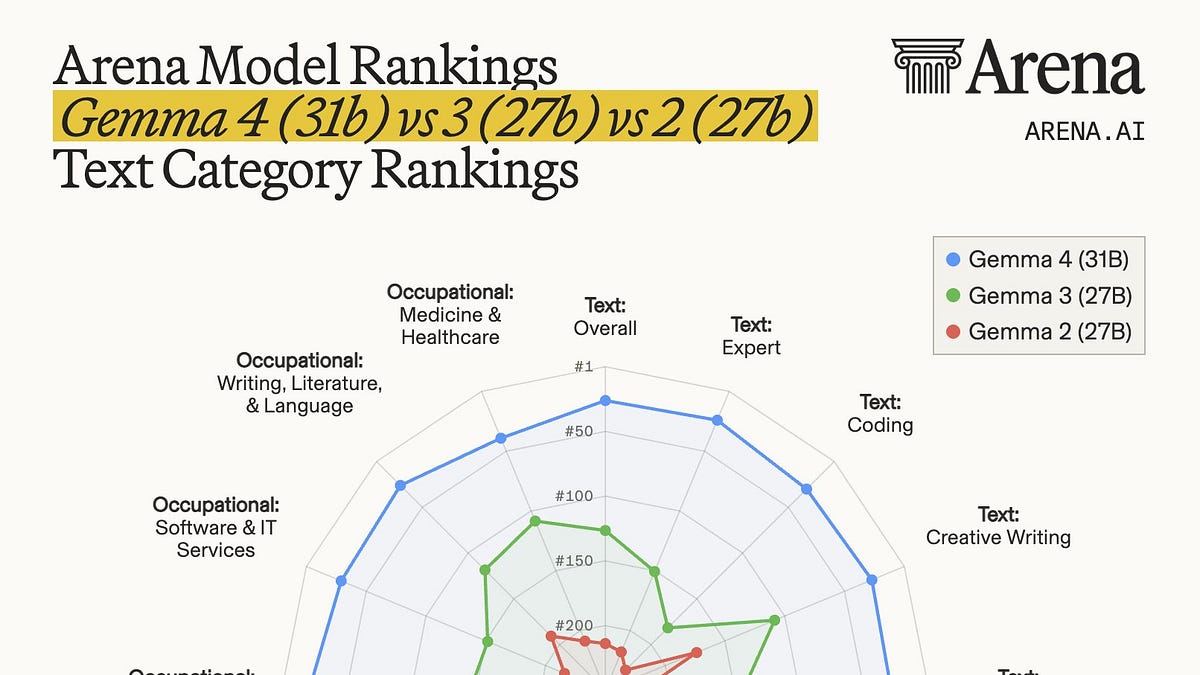

- Read more: Gemma 4’s Codeforces ELO Jumps from 110 to 2,150 — Google’s Local AI Gambit

- Read more: 297 Messages in 15 Days to Her Custom AI, Not Her Husband: Synapse’s Raw Truth

Frequently Asked Questions

What is the GSP524 Challenge Lab?

Google Cloud Skills Boost hands-on: Use Gemini 2.5 Flash on Vertex AI to analyze text reviews, product images, and podcast audio for marketing insights on fictional Cymbal Direct apparel.

How do you enable thinking mode in the Gemini API?

Pass a GenerateContentConfig with ThinkingConfig(include_thoughts=True, thinking_budget=-1) to let the model decide dynamically how much to reason and return summaries of its thinking alongside the answer.

Does Gemini 2.5 Flash handle multimodal data natively?

Yes. Feed prompts as strings and images/audio as Part objects in the contents list. No extra preprocessing needed.