Snap a photo of that gnarly physics diagram on your kid’s homework — Phi-4-reasoning-vision doesn’t just label the vectors, it reasons through the forces, crunches the numbers, and spits out the answer with a step-by-step breakdown. Or pull up a messy receipt during tax season; this little beast scans every scribble, tallies totals, flags discrepancies — all offline on your laptop, no cloud crutch needed.

Real people win here. Not some lab fantasy.

Can Phi-4-reasoning-vision Actually Run on Your Laptop?

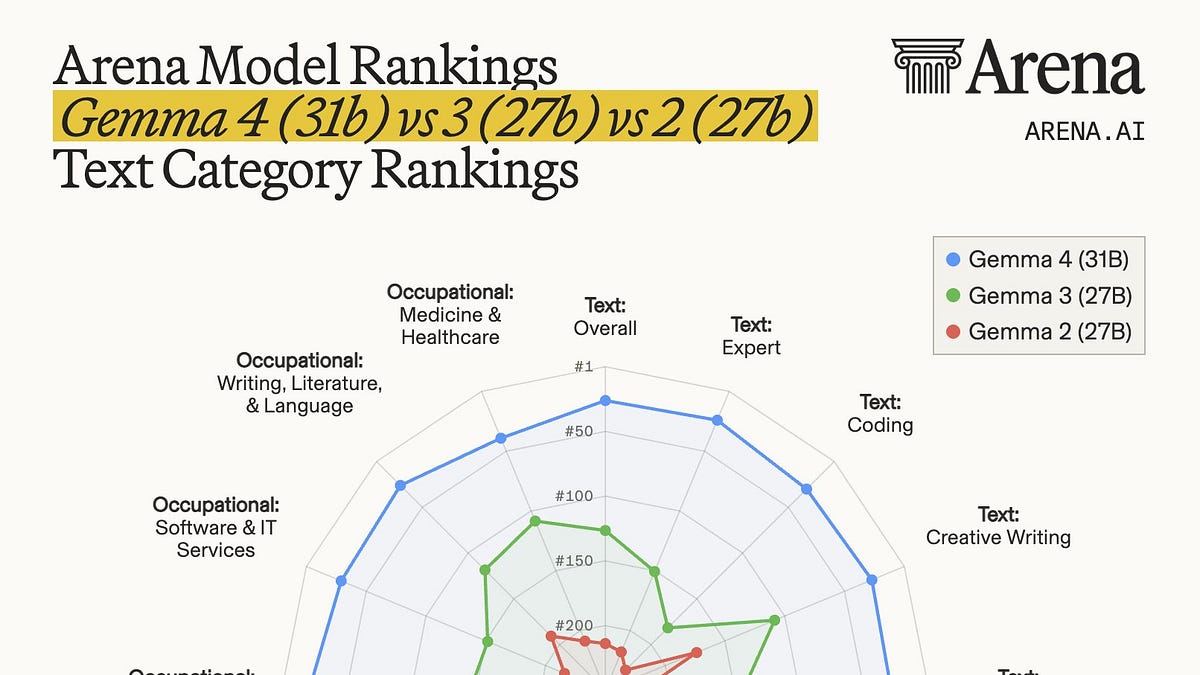

Yes — and that’s the electric thrill. Microsoft’s dropped this 15-billion-parameter multimodal reasoning model, open-weight and screaming efficiency. Trained on a measly 200 billion tokens (that’s right, billion, not trillion), it dances circles around hulking VLMs like Qwen 2.5 VL or Gemma3 that guzzle over a trillion.

Think of it like the Wright brothers’ plane versus a 747: both fly, but one lets you tinker in your garage. Phi-4-reasoning-vision fuses vision and language mid-stream — visual tokens from a SigLIP-2 encoder projected into a Phi-4-reasoning LLM backbone. No early-fusion bloat that demands server farms. Result? It handles image Q&A, document reading, UI navigation, even sequence changes in photos, all while excelling at math and science puzzles.

And the benchmarks? Competitive with models ten times slower. On math reasoning, it laps similarly speedy rivals.

We used just 200 billion tokens of multimodal data leveraging Phi-4-reasoning (trained with 16 billion tokens) based on a core model Phi-4 (400 billion unique tokens), compared to more than 1 trillion tokens used for training multimodal models like Qwen 2.5 VL and 3 VL, Kimi-VL, and Gemma3.

That’s Microsoft flexing their Phi playbook — quality data curation over data dumps. They mixed reasoning-heavy tasks with perception basics, proving you don’t need endless tokens to spark smarts.

But here’s my hot take, the one you won’t find in their blog: this echoes the 1980s personal computer boom. Back then, mainframes ruled; micros like the Apple II put computing — and creation — on desks worldwide. Phi-4-reasoning-vision? It’s the multimodal microchip. Predicts a flood of on-device AI apps: AR tutors overlaying explanations on real lab gear, instant code debuggers eyeing your screen, or doctors glancing at scans with reasoning overlays. No more waiting for API calls — AI as ambient as your phone’s camera.

Energy surges through this shift. Imagine devs shipping apps that “see” user interfaces, automating tests or guiding noobs through software mazes.

Why Does Phi-4-reasoning-vision Beat the Bloat?

Bloat’s the villain. VLMs ballooned — parameters, tokens, latency — turning them into inference hogs unfit for phones or edge devices. Phi flips the script.

They picked mid-fusion wisely: pretrained vision encoder feeds into an LLM that’s already a reasoning ninja from Phi-4-mini roots. Image patches? Processed smartly, not gulped-whole. Data? Ruthlessly curated — no synthetic slop flooding the tank. A blend of reasoning drills (math proofs from diagrams) and vanilla vision (caption that sunset).

It shines on screens too — grounding buttons, predicting clicks. Your virtual assistant, but with eyes.

One paragraph wonder: Efficiency isn’t luck; it’s engineered obsession.

Now, peel back the hood. From Phi-4’s language prowess (that tiny terror trained lean), they layered vision without retraining from scratch. Lessons? Architecture matters more than sheer scale. Early fusion? Tempting for “richer” reps, but it’s a compute vampire — skip it for mortal hardware.

And the UI magic — inferring app states from screenshots — that’s gold for agentic AI. Bots that don’t just chat; they navigate your world.

Skeptics might scoff: 15B ain’t GPT-4o. Fair. But pareto frontier? They’re shoving it forward. Faster inference, lower costs, open weights on Hugging Face, GitHub, Foundry. Run it yourself; tweak it.

For creators — oh boy. Build vision agents that reason over charts, debug UIs visually, tutor via camera. It’s platform shift fuel: AI not as service, but as toolkit.

Wander a sec: Remember when smartphones killed point-and-shoots? This kills cloud VLMs for interactive stuff.

How to Get Started with Phi-4-reasoning-vision Today

Grab it free. Hugging Face loaders are plug-and-play. Modest GPU? 16GB VRAM handles inference. Quantize to 4-bit for laptops.

Prompt it like: “Solve this equation from the image, explain steps.” Or “What’s changed between these two screenshots?”

Excels at homework helpers, receipt parsers, science viz. Weak spots? Ultra-fine art critique, maybe — but who cares when it nails reasoning?

Microsoft’s sharing the playbook: curate data fiercely, mix tasks, fuse mid. Community gold.

This model’s a beacon. Signals the end of VLM obesity. Welcome to lean, mean, reasoning machines — your phone’s about to get superpowers.

🧬 Related Insights

- Read more: Gemma 4: Google’s Tiny AI Titans That Might Actually Fit Your Laptop

- Read more: Cursor’s $2B ARR Blitz: From Code Editor to Enterprise AI Juggernaut

Frequently Asked Questions

What is Phi-4-reasoning-vision?

A 15B open-weight multimodal model from Microsoft that reasons over images — math, science, UIs — trained efficiently on 200B tokens.

How does Phi-4-reasoning-vision compare to other VLMs?

Beats similar-speed models on math/science; matches big ones’ accuracy but runs 10x faster, uses way less training data.

Can I run Phi-4-reasoning-vision on consumer hardware?

Yep — laptops with 16GB VRAM or quantized versions on less; perfect for edge deployment.