For most of AI history, models were specialists. Language models processed text. Computer vision models processed images. Speech models processed audio. Each modality had its own architectures, training procedures, and research communities working largely in isolation.

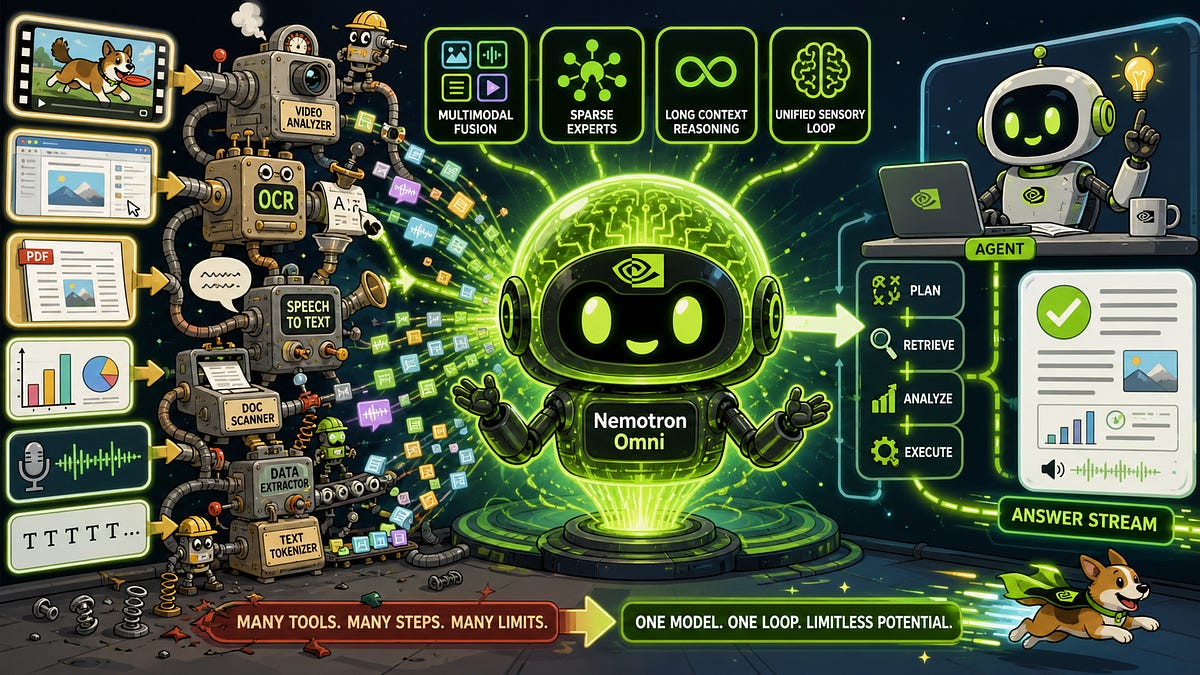

Multimodal AI breaks these boundaries. Modern multimodal models can process and reason across multiple types of data simultaneously: analyzing an image while discussing it in text, transcribing audio while answering questions about its content, or generating images from written descriptions. This convergence is producing AI systems that interact with the world in ways that more closely resemble human perception.

What Makes AI Multimodal?

A multimodal AI system is one that can accept, process, and reason about input from two or more modalities. The key capabilities include:

- Cross-modal understanding: The ability to connect information across modalities, such as understanding that a photograph of a sunset and the text description "orange and purple sky over the ocean at dusk" refer to the same scene.

- Cross-modal reasoning: The ability to answer questions that require integrating information from different modalities, such as reading a chart in an image and answering numerical questions about it.

- Cross-modal generation: The ability to produce output in one modality based on input in another, such as generating images from text descriptions or narrating video content.

How Multimodal Models Work

Multimodal models typically employ one of several architectural approaches to handle diverse input types.

Encoder Fusion Architecture

The most common approach uses separate encoders for each modality that project inputs into a shared representation space:

- A vision encoder (often a Vision Transformer, such as a ViT pretrained with a CLIP or SigLIP objective) processes images into visual tokens or feature vectors.

- A text encoder/decoder (typically a large language model) handles text processing and generation.

- An audio encoder (such as the encoder half of Whisper) converts speech or sound into audio embeddings.

- A projection layer or adapter aligns the representations from different encoders into a shared space that the language model can process.

GPT-4V and Claude 3 are widely understood to follow variations of this pattern, although their exact architectures have not been published: a vision encoder converts each image into a sequence of tokens that the language model processes alongside text tokens.
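The encoder-plus-projection pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any particular model's implementation: the dimensions, the random stand-ins for encoder outputs, and the `linear_project` helper are all hypothetical, and real adapters are learned and are often more elaborate than a single linear layer.

```python
import random

def linear_project(vectors, weight, bias):
    """Apply y = W x + b to each vector; weight has shape [out_dim][in_dim]."""
    return [
        [sum(w * x for w, x in zip(row, vec)) + b for row, b in zip(weight, bias)]
        for vec in vectors
    ]

random.seed(0)
VISION_DIM, LLM_DIM = 4, 6  # toy sizes chosen for illustration only

# Stand-in for vision encoder output: 3 image patches, each VISION_DIM wide.
patch_features = [[random.gauss(0, 1) for _ in range(VISION_DIM)] for _ in range(3)]

# Stand-in for the language model's embeddings of 2 text tokens.
text_embeddings = [[random.gauss(0, 1) for _ in range(LLM_DIM)] for _ in range(2)]

# Projection parameters: learned in a real system, random here.
W = [[random.gauss(0, 0.1) for _ in range(VISION_DIM)] for _ in range(LLM_DIM)]
b = [0.0] * LLM_DIM

# Map patch features into the language model's embedding width.
visual_tokens = linear_project(patch_features, W, b)

# The language model then sees one combined sequence of same-width vectors.
llm_input = visual_tokens + text_embeddings
```

The point of the projection is only that every element of `llm_input` has the same width, so the language model can attend over image and text positions uniformly.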

Unified Token Architecture

An emerging approach treats all modalities as sequences of tokens in a single vocabulary. Images are converted to discrete visual tokens through a VQ-VAE or similar tokenizer, audio is tokenized through speech codecs, and all modalities are processed by a single transformer backbone.

This approach, associated with natively multimodal models like Gemini and with research experiments from Meta and DeepMind, offers architectural simplicity and can enable more natural cross-modal interactions, because all information passes through the same processing pipeline.
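The tokenization step can be illustrated with a toy vector quantizer. The sketch below assumes a tiny hand-written codebook and a hypothetical text vocabulary size; a real VQ-VAE learns its codebook and operates on encoder features rather than raw two-dimensional vectors.

```python
def quantize(vector, codebook):
    """Return the index of the nearest codebook entry (squared Euclidean distance)."""
    return min(
        range(len(codebook)),
        key=lambda i: sum((v - c) ** 2 for v, c in zip(vector, codebook[i])),
    )

TEXT_VOCAB_SIZE = 1000  # hypothetical size of the text vocabulary

# Toy codebook with 4 entries; learned during training in a real tokenizer.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

def image_to_tokens(patch_vectors):
    """Map each patch to a discrete ID placed after the text IDs in a shared vocabulary."""
    return [TEXT_VOCAB_SIZE + quantize(p, codebook) for p in patch_vectors]

text_tokens = [17, 42, 7]                          # made-up text token IDs
patches = [[0.1, -0.2], [0.9, 0.8], [0.2, 1.1]]    # made-up patch features

image_tokens = image_to_tokens(patches)
sequence = text_tokens + image_tokens
print(sequence)  # [17, 42, 7, 1000, 1003, 1002]
```

Offsetting the image IDs by `TEXT_VOCAB_SIZE` is one simple way to keep the two ID ranges from colliding, so a single transformer can model the mixed sequence.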

Contrastive Learning

CLIP (Contrastive Language-Image Pre-training) by OpenAI pioneered an influential approach: training paired vision and text encoders so that matching image-text pairs produce similar embeddings while non-matching pairs produce dissimilar ones. This creates a shared embedding space where text and image representations are directly comparable.
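The training objective can be sketched as a symmetric cross-entropy over a similarity matrix. This is a simplified illustration, not OpenAI's code: the function names are hypothetical, the temperature is fixed here although it is a learned parameter in CLIP, and the embeddings are toy vectors.

```python
import math

def normalize(v):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_z = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_z - row[i]
    return total / len(logits)

def clip_style_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss over a batch of paired embeddings."""
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    # logits[i][j] = scaled similarity between image i and text j
    logits = [[dot(i, t) / temperature for t in txts] for i in imgs]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-image direction
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))

# Toy batch: pair k of images matches pair k of texts.
images = [[1.0, 0.0], [0.0, 1.0]]
matched_texts = [[1.0, 0.0], [0.0, 1.0]]
swapped_texts = [[0.0, 1.0], [1.0, 0.0]]

loss_matched = clip_style_loss(images, matched_texts)
loss_swapped = clip_style_loss(images, swapped_texts)
```

With these toy inputs, `loss_matched` is close to zero and `loss_swapped` is large, which is the behavior the objective rewards: matching pairs sit on the diagonal of the similarity matrix and dominate their row and column.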

CLIP does not generate content but enables powerful applications: zero-shot image classification (assigning images to categories that are specified only as text descriptions, without training on labeled examples of those categories), image search using natural language queries, and use as the vision encoder for larger multimodal systems.
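Zero-shot classification in a shared embedding space reduces to a nearest-neighbor lookup. The sketch below assumes the embeddings have already been computed by some pair of encoders; the three-dimensional vectors, the label prompts, and the `zero_shot_classify` helper are invented for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return num / den

def zero_shot_classify(image_emb, label_embs):
    """Pick the label whose text embedding is most similar to the image embedding."""
    scores = {label: cosine(image_emb, emb) for label, emb in label_embs.items()}
    return max(scores, key=scores.get), scores

# Hypothetical text embeddings for candidate labels written as prompts.
label_embs = {
    "a photo of a dog": [0.9, 0.1, 0.0],
    "a photo of a cat": [0.1, 0.9, 0.0],
    "a photo of a car": [0.0, 0.1, 0.9],
}

# Hypothetical embedding of the image to classify.
image_emb = [0.2, 0.8, 0.1]

best, scores = zero_shot_classify(image_emb, label_embs)
print(best)  # a photo of a cat
```

Adding a new category only requires embedding a new text prompt, which is why no retraining is needed. Image search works the same way with the roles reversed: one text query is compared against many image embeddings.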

Key Multimodal Capabilities

Visual Question Answering

Given an image and a natural language question, multimodal models can analyze the image and provide accurate answers. This ranges from simple identification ("What color is the car?") to complex reasoning ("Which item on this grocery receipt has the highest price per unit?"). Modern models can read text in images, interpret charts and diagrams, understand spatial relationships, and reason about visual content at a level that was considered far beyond AI capabilities just a few years ago.

Document Understanding

Multimodal models can process documents as images, understanding both the textual content and the visual layout. This enables extraction of information from complex documents like invoices, forms, and scientific papers without requiring separate OCR (optical character recognition) and layout analysis pipelines. The model understands headers, tables, footnotes, and figure captions as integrated elements of the document.

Image Generation

Text-to-image models like DALL-E 3, Stable Diffusion, and Midjourney generate images from natural language descriptions. These models are typically built on diffusion architectures, which learn to progressively refine random noise into a coherent image that matches the text description, or on transformer-based approaches that generate an image as a sequence of tokens.
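The noise-refinement idea behind diffusion models can be made concrete with the standard forward noising equation, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε. The sketch below is a toy under stated assumptions: it uses a linear noise schedule with commonly cited default values, a four-number list in place of an image, and the true noise in place of a trained network's prediction. It omits text conditioning and the step-by-step sampling loop entirely.

```python
import math
import random

def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule."""
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, noise):
    """Forward process: blend the clean signal with Gaussian noise."""
    a, s = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + s * e for x, e in zip(x0, noise)]

def recover_x0(x_t, alpha_bar, predicted_noise):
    """Invert the forward equation given an estimate of the noise."""
    a, s = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(x - s * e) / a for x, e in zip(x_t, predicted_noise)]

random.seed(0)
alpha_bars = make_alpha_bars(1000)

x0 = [0.5, -0.25, 1.0, 0.0]                     # toy "image"
noise = [random.gauss(0, 1) for _ in x0]

x_mid = add_noise(x0, alpha_bars[100], noise)   # partially noised
x_end = add_noise(x0, alpha_bars[-1], noise)    # almost pure noise

# A trained network would predict the noise; here the true noise is used
# as an oracle to show what a perfect prediction would recover.
x0_hat = recover_x0(x_end, alpha_bars[-1], noise)
```

In a real model the noise estimate is imperfect, so sampling starts from pure noise and removes a little of it at each of many steps instead of jumping to the answer at once.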

The quality and controllability of generated images have improved dramatically, to the point where generated images are frequently indistinguishable from photographs to a casual observer.

Video Understanding

Extending beyond static images, multimodal models are increasingly capable of understanding video content: recognizing actions, tracking objects across frames, understanding temporal sequences, and generating descriptions or answering questions about video events. Models like GPT-4o and Gemini can process video input, opening applications in surveillance, content moderation, and automated video analysis.

Audio and Speech Integration

Models like GPT-4o process speech natively, enabling natural voice conversations with real-time speech understanding and generation. Unlike traditional voice assistants that pipeline speech-to-text, reasoning, and text-to-speech as separate stages, end-to-end multimodal models process and respond to speech directly, capturing nuances like tone, emphasis, and emotion.

Real-World Applications

Healthcare

Multimodal models can analyze medical images (X-rays, MRIs, pathology slides) alongside patient records, clinical notes, and laboratory results. This integrated analysis can support diagnostic decisions that require synthesizing information across multiple data sources, mirroring how human clinicians work.

Accessibility

Vision-language models power image description services for visually impaired users, providing detailed natural language descriptions of visual content in real time. Audio-visual models can generate captions and sign language interpretation. These applications directly improve access to information for people with disabilities.

Education

Multimodal tutoring systems can analyze student work (handwritten equations, diagrams, code), understand the visual and textual content, identify errors, and provide targeted explanations. The ability to process homework as a photo rather than requiring typed input makes AI tutoring more natural and accessible.

Creative Industries

From generating marketing visuals to creating storyboards, composing music, and editing video, multimodal AI tools are reshaping creative workflows. Designers use text-to-image models for rapid concept exploration, and video producers use AI for automated editing, captioning, and content repurposing.

Challenges and Limitations

Despite impressive capabilities, multimodal AI faces significant challenges:

- Hallucination across modalities: Models can describe objects that are not present in images, misread text in photographs, or generate factually incorrect descriptions of visual content. Cross-modal hallucination is often harder to detect and mitigate than text-only hallucination, because checking an output means comparing it against the image, audio, or video itself rather than against other text.

- Bias amplification: Training data biases can compound across modalities. A model trained on captioned images may learn and reinforce stereotypical associations between visual characteristics and text descriptions.

- Compute requirements: Processing multiple modalities simultaneously demands substantially more compute than single-modality models, increasing costs and environmental impact.

- Evaluation difficulty: Measuring multimodal model quality is harder than evaluating single-modality outputs. Cross-modal understanding, spatial reasoning, and visual grounding lack standardized, comprehensive benchmarks.

The Trajectory Ahead

Multimodal AI is converging toward increasingly general systems that perceive and interact with the world through all available channels. Research directions include longer video understanding, real-time multimodal interaction, improved spatial and physical reasoning, and expansion to additional modalities like tactile feedback and 3D scene understanding.

The ultimate trajectory points toward AI systems that, like humans, seamlessly integrate information from all senses to understand and reason about the world. While current systems are far from that goal, the pace of progress in multimodal AI suggests that increasingly capable cross-modal reasoning will be a defining feature of the next generation of AI systems.