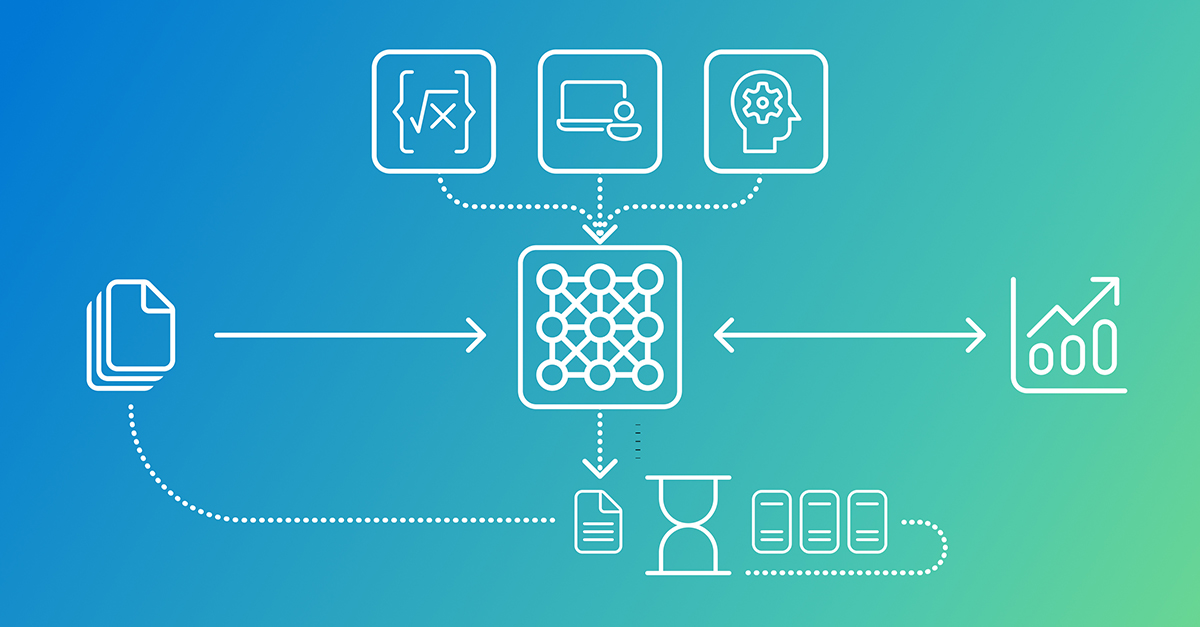

Picture this: a multimodal AI system, crunching images, text, and audio in one frenzied pipeline, suddenly sees load spike as users flood in. Servers spin up, costs balloon, and your engineering lead is on the phone at 3 a.m. explaining why the quarterly budget is toast.

That’s not hyperbole. It’s the raw reality hitting teams building multimodal AI systems right now, where scalability clashes head-on with cost optimization.

Zoom out. These beasts aren’t your grandpa’s chatbots. They’re data hogs, fusing vision, language, sound into unified models that demand elastic resources. Get the architecture wrong, and you’re not just slow — you’re broke.

Why Do Multimodal AI Systems Break the Bank?

Workloads here aren’t predictable. Spiky demand from user uploads, real-time inference on mixed media — it laughs at fixed servers.

Here’s the thing: latency can’t budge (users bail at 200ms delays), throughput must surge, and availability has to hit 99.99%. Yet resource utilization? Capacity often sits 30% idle. Waste.
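
To put rough numbers on those targets, here is a minimal Python sketch. It assumes a non-leap year for the availability math, and the $10,000 monthly bill is a made-up figure for illustration, not data from any real system. The function names are hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # assumes a non-leap year


def downtime_budget_minutes(availability: float) -> float:
    """Minutes of downtime per year that an availability target still allows."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    return (1.0 - availability) * MINUTES_PER_YEAR


def idle_spend(monthly_cost: float, idle_fraction: float) -> float:
    """Portion of the monthly bill that pays for capacity doing nothing."""
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    return monthly_cost * idle_fraction


if __name__ == "__main__":
    # 99.99% availability leaves under an hour of downtime per year.
    print(f"downtime budget: {downtime_budget_minutes(0.9999):.2f} min/year")
    # Hypothetical bill: $10,000/month with 30% of capacity idle.
    print(f"idle spend: ${idle_spend(10_000, 0.30):,.0f}/month")
```

The point of the arithmetic: a tight availability target and a fat idle margin pull in opposite directions, so both numbers belong on the same dashboard.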

Organizations chase ROI — scale for revenue features, sure, but ditch the ‘just in case’ over-provisioning. Tie every autoscaling rule to metrics: retention lifts, revenue jumps.

And look, the original playbook nails it:

“Balancing scalability and cost optimization is a fundamental challenge in designing modern systems, especially those involving data-intensive or AI-driven workloads.”

Spot on. But here’s my twist: a historical parallel to Netflix’s move off its monolith, which kicked off after a major database outage in 2008. The monolith choked growth; microservices (and, later, Chaos Monkey) freed it. Multimodal AI? Same trap, bigger stakes. Without granular scaling, it’ll stay a lab curiosity, not enterprise muscle.

Predictability kills costs.

Now, deep dive. Factors stack up: workload type (steady vs. bursty), perf SLAs, efficiency monitoring, long-haul planning. Miss one, pay forever.

Can Cloud-Native Services Tame Multimodal Chaos?

Cloud’s elasticity is the hook. Serverless compute (Azure Functions), managed containers — pay-per-use magic.

Storage tiers for petabytes of images/audio. AI/ML services offload model hosting, dodging GPU farms.

But watch the gotchas. Inefficient queries or chatty functions? Bills spike quietly. Monitor like a hawk.

In Azure’s playground, multimodal shines: AKS for containerized models (the vision pod scales independently of the NLP pod), Functions for event triggers (new video? Process it, route it).
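
The "process, route" step can be sketched in plain Python. This is not the Azure Functions API; it only shows the routing decision that an event-triggered function might make when a new file lands. The extension-to-service table and the `dead-letter` fallback are assumptions made up for the example.

```python
from pathlib import PurePosixPath

# Hypothetical mapping from file extension to the service that handles it.
ROUTES = {
    ".jpg": "vision",
    ".png": "vision",
    ".mp4": "video",
    ".wav": "audio",
    ".mp3": "audio",
    ".txt": "nlp",
}


def route_upload(blob_name: str) -> str:
    """Pick a downstream service for an uploaded blob based on its extension."""
    suffix = PurePosixPath(blob_name).suffix.lower()
    # Unknown types go to a holding queue instead of burning model time.
    return ROUTES.get(suffix, "dead-letter")


if __name__ == "__main__":
    for name in ("uploads/clip.MP4", "uploads/voice.wav", "uploads/notes"):
        print(name, "->", route_upload(name))
```

Keeping the routing table this dumb is deliberate: the cheap function decides where work goes, and only the expensive model pods do the work.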

API Management gates it all, throttling spend.
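
Gateways usually expose throttling as configuration, not code, but the idea underneath is often a token bucket. Here is a minimal Python sketch of that idea. It is not how API Management is implemented; the class name, parameters, and injectable clock are assumptions for illustration.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock             # injectable so the bucket is easy to test
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend tokens if the request fits, else False."""
        now = self.clock()
        # Refill for the time elapsed since the last call, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

One use of the `cost` parameter: charge a video inference call more tokens than a text call, so the throttle tracks spend and not just request count.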

Corporate hype says this all runs ‘smoothly.’ Nah. It’s a tightrope. One bad pattern, and elasticity flips to extravagance.

Teams report 40-60% savings post-optimization. How? Auto-scaling groups tuned to CPU/memory, spot instances for non-critical inference.
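
What does "tuned to CPU/memory" look like in practice? A minimal Python sketch of the proportional rule that the Kubernetes Horizontal Pod Autoscaler documents: desired replicas = ceil(current replicas × current metric / target metric), skipped when the ratio is within a tolerance. The function name, the default bounds, and the example numbers are assumptions for illustration.

```python
import math


def desired_replicas(
    current: int,
    current_util: float,
    target_util: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Proportional scaling: grow or shrink replicas by the utilization ratio."""
    if target_util <= 0:
        raise ValueError("target_util must be positive")
    ratio = current_util / target_util
    # Inside the tolerance band, hold steady to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        wanted = current
    else:
        wanted = math.ceil(current * ratio)
    # Never go below the floor or above the budget ceiling.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 4 pods at 90% CPU against a 60% target.
    print(desired_replicas(4, 90, 60))
```

The `max_replicas` ceiling is the cost lever here: it is the line where you decide slower responses are cheaper than more pods.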

Microservices: Granular Scaling for Multimodal AI?

Monolith? Dead weight. Break it into microservices, with each modality in its own pod, scaling independently.

The high-load recommender scales out; low-traffic auth sips resources. Efficiency soars.
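
A toy cost model makes the gap concrete. This Python sketch assumes a monolith replica bundles every component, so the hottest component sets the replica count for all of them. The services, loads, and hourly prices are invented for illustration, and the model ignores orchestration overhead entirely.

```python
import math
from dataclasses import dataclass


@dataclass
class Service:
    name: str
    load_rps: float               # incoming requests per second
    rps_per_replica: float        # what one replica can handle
    cost_per_replica_hour: float  # hypothetical hourly price

    def replicas_needed(self) -> int:
        return max(1, math.ceil(self.load_rps / self.rps_per_replica))


def microservices_cost(services: list[Service]) -> float:
    """Each service pays only for the replicas it needs."""
    return sum(s.replicas_needed() * s.cost_per_replica_hour for s in services)


def monolith_cost(services: list[Service]) -> float:
    """Every replica carries every component; the hottest one sets the count."""
    replicas = max(s.replicas_needed() for s in services)
    per_replica = sum(s.cost_per_replica_hour for s in services)
    return replicas * per_replica


if __name__ == "__main__":
    fleet = [
        Service("vision", 900, 100, 3.0),
        Service("nlp", 200, 100, 1.0),
        Service("auth", 20, 200, 0.1),
    ]
    print(f"microservices: ${microservices_cost(fleet):.2f}/hour")
    print(f"monolith:      ${monolith_cost(fleet):.2f}/hour")
```

The gap shrinks when loads are evenly matched across components and grows when one modality dominates, which is the usual multimodal case.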

Tradeoff? Orchestration hell — Kubernetes sprawl, network latency, observability nightmares. Worth it? For multimodal, yes. Duplication avoided, costs sliced.

Azure example: AKS clusters per service (image recognition, NLP, audio), Functions for glue, API Management as the facade.

Remember the early microservices buzz? Everyone jumped, few mastered it. Same here: PR spin says ‘easy,’ reality demands DevOps ninjas.

My bold prediction: By 2026, 80% of production multimodal AI will run on microservices, or face extinction in the cost wars. It’s an architectural shift echoing Web 2.0’s service explosion.

Tying It All Together — Real-World Wins

Combine ‘em: Cloud-native base, microservices layer, relentless monitoring (Prometheus, Grafana dashboards pegged to budgets).

Spot anomalies — spiky audio processing? Right-size pods.

Cost visibility? FinOps teams love tagged resources, anomaly alerts.
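
An anomaly alert on tagged spend can be as simple as a z-score against recent history. A minimal Python sketch, assuming you already export daily cost per tag from your billing data; the function name, the threshold of 3, and the sample figures are assumptions for illustration.

```python
from statistics import mean, pstdev


def cost_anomalies(daily_cost_by_tag: dict[str, list[float]], threshold: float = 3.0) -> list[str]:
    """Flag tags whose latest daily cost spikes well above their own history.

    Each list holds daily costs in time order; the last entry is "today".
    """
    flagged = []
    for tag, costs in daily_cost_by_tag.items():
        if len(costs) < 3:
            continue  # not enough history to judge
        *history, today = costs
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # Flat history: any increase at all is worth a look.
            if today > mu:
                flagged.append(tag)
        elif (today - mu) / sigma > threshold:
            flagged.append(tag)
    return flagged


if __name__ == "__main__":
    spend = {
        "audio": [100, 102, 98, 101, 99, 180],    # sudden jump on the last day
        "vision": [200, 205, 195, 198, 202, 204],  # normal wobble
    }
    print(cost_anomalies(spend))
```

Only upward spikes are flagged here; a sudden drop may be a bug too, but it is not the one that wakes the FinOps team.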

Results? Scalability without tears.

Critique time. Vendor docs (Azure’s included) gloss over ops overhead. Truth: Start small, iterate. Don’t buy the ‘managed everything’ dream wholesale.

Frequently Asked Questions

How do you scale multimodal AI systems cost-effectively?

Mix microservices on AKS with serverless Functions: scale hot paths independently and monitor utilization religiously.

What’s the biggest cost trap in multimodal AI?

Idle resources during off-peak hours and inefficient queries; auto-scaling and query optimization can cut that spend by 50% or more.

Does Azure beat AWS for multimodal scaling?

Tied. Both excel, but Azure’s integrated AI offerings (Azure AI services, formerly Cognitive Services) have an edge for multimodal pipelines.