Everyone figured visual search would crawl forward, one painstaking object at a time. Circle that lamp. Reverse-search the rug. Rinse, repeat. Boring, right?

But Google’s dropped a bomb on that script. Their Circle to Search and Lens upgrades, rolled out quietly in recent months, now slice images into multiple targets and fire off parallel hunts across the web. Upload a street-style snap, and bam: hat matches, jacket dupes, boot alternatives, all in one smooth dump. It’s not incremental; it’s architectural. The old single-threaded grind? Dead.

This shifts everything. Shoppers ditch fragmented hunts for vibe-matching marathons. Designers snag full-room inspo without tab-juggling hell. And yeah, it juices Google’s ad machine — more clicks, more eyes on sponsored links — but let’s not pretend that’s the whole story.

What Powers This Multi-Object Magic?

Dounia Berrada, Search Senior Engineering Director, lays it bare. She’s the one wrangling multimodal search, that beast blending pixels with queries.

“Visual search is redefining how we interact with information; Lens should be intelligent enough to understand the ‘why’ behind your search, making it effortless to get help with what you see on your screen, or in the world around you.”

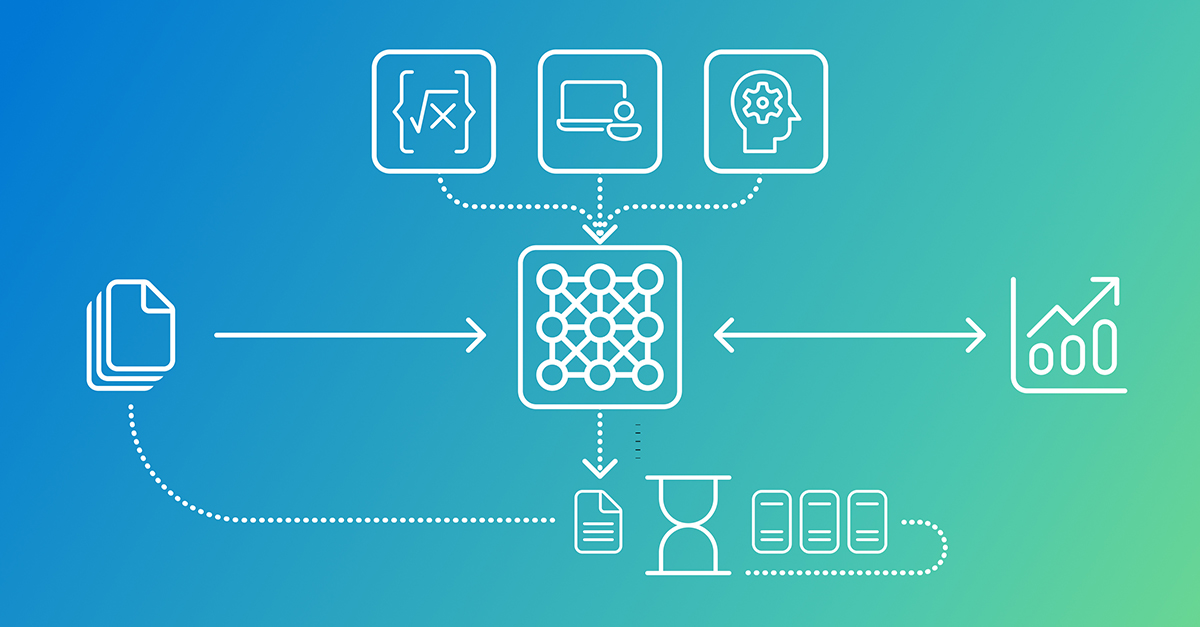

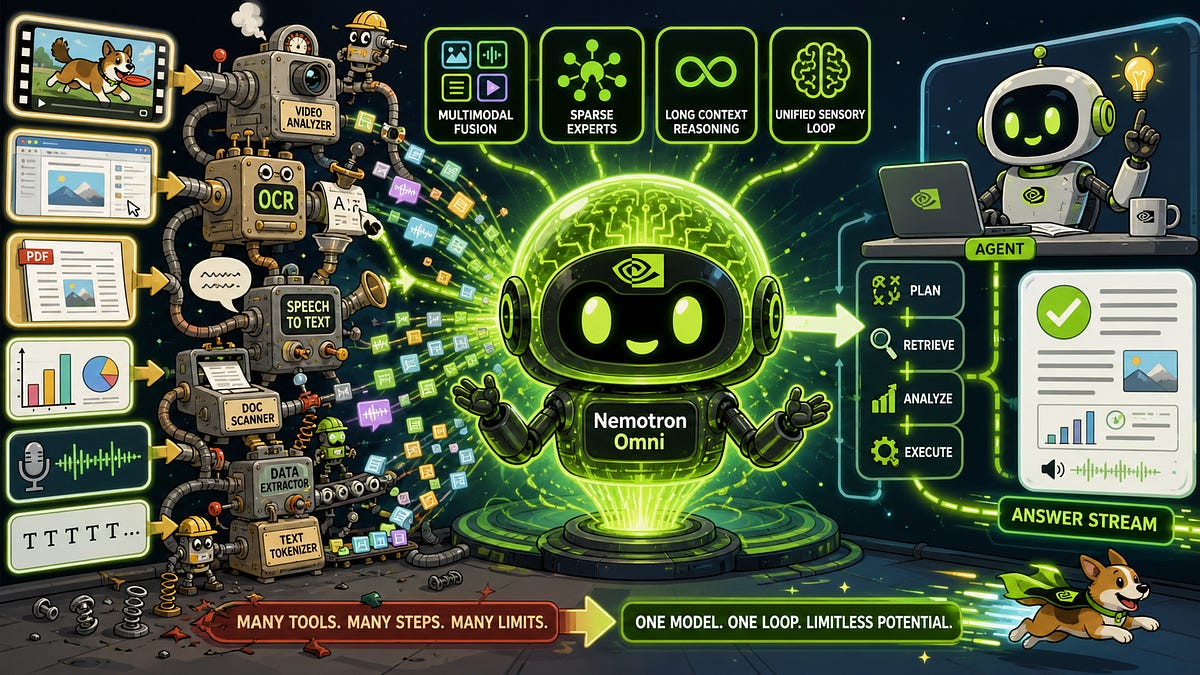

Spot on. Gemini models, Google’s heavyweight multimodal brains, do the work. They scan your image, grok the context, then orchestrate a ‘fan-out’ frenzy. Think of the AI as a conductor: it spots every element screaming for attention, dispatches Lens queries like arrows, sifts a web-scale index, and spits back a tidy report. Seconds, not minutes.

Here’s the thing: this isn’t brute force. It’s reasoning layered on years of Lens tuning. The model doesn’t just tag; it anticipates. Photo of a garden? It’ll preempt your shade-survival panic, climate-fit woes, and maintenance nightmares. Fan-out catches ’em all.

And here’s my angle: this echoes the 2019 jump in text search from keyword matching to BERT-era semantics. Google didn’t just index words; it grokked sentences. Now pixels get the same upgrade. Bold prediction: by 2026, this fans out to video, dissecting TikTok dances frame by frame for moves, outfits, even mood boards. AR glasses? They’ll live or die on this tech.

Fan-out’s no gimmick. It’s the future.

Now, a deeper unpack. You scroll Instagram and heart-eye an ensemble. Circle it. AI Mode kicks in. Not some bolted-on script, but Gemini deciding: hat via Lens, shoes via Lens, jacket via Lens. Results weave into one narrative, links gleaming. No more ‘kthxbye, figure out the rest.’

Berrada nails the backend split: AI as brain, visual search as library. Multi-object reasoning parses the scene (is that lamp mid-century or a knockoff?), then fans out the queries. Cohesive response? That’s the polish, hiding the dozen parallel pings.
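To make the brain-and-library split concrete, here’s a minimal Python sketch of a generic fan-out pattern. Everything in it is hypothetical: `detect_objects` stands in for the model’s scene parsing (the “image” is just a list of labels), and `visual_search` is a fake lookup returning placeholder matches. It is not Google’s implementation or any real Lens API; it only shows the shape: detect, dispatch one query per object concurrently, merge into one answer.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class DetectedObject:
    label: str   # what the hypothetical scene parser thinks it sees
    region: int  # index of the image region it came from


def detect_objects(image_labels: list[str]) -> list[DetectedObject]:
    # Hypothetical scene parser: the "image" is already a list of labels here.
    return [DetectedObject(label, i) for i, label in enumerate(image_labels)]


async def visual_search(obj: DetectedObject) -> dict:
    # Fake single-object lookup; the sleep stands in for network latency.
    await asyncio.sleep(0.01)
    return {"object": obj.label, "matches": [f"{obj.label} match A", f"{obj.label} match B"]}


async def fan_out(image_labels: list[str]) -> str:
    objects = detect_objects(image_labels)
    # One query per detected object, all in flight at once.
    results = await asyncio.gather(*(visual_search(o) for o in objects))
    # Merge into a single report; gather returns results in submission order.
    return "\n".join(f"{r['object']}: {', '.join(r['matches'])}" for r in results)


if __name__ == "__main__":
    print(asyncio.run(fan_out(["hat", "jacket", "boots"])))
```

Run as a script, it prints one line per detected object (hat, jacket, boots). Because `asyncio.gather` returns results in the order the queries were submitted, the merged report follows detection order even though the lookups run concurrently.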

But — and here’s my skepticism spiking — Google’s PR spins this as effortless discovery. Sure. Yet it thrives on their web index monopoly. Indie shops? Buried unless indexed deep. Critics might cry gatekeeping, but damn if it doesn’t work.

How Does the Fan-Out Technique Actually Work?

Picture this sprawl: you snap a museum wall, five paintings screaming history. Old way? Tap-tap, one by one. New way? One query. AI Mode IDs each canvas and fans out searches for artist, era, scandals. Responses bundle: ‘The Picasso on the left has faked provenance; here’s the auction link.’

Or a bakery case, pastries galore. ‘What’s that flaky beast?’ AI lists ’em (pain au chocolat, kouign-amann) with recipes, origins, nearest shops. Effortless? Yeah, because it anticipates the cascade.

No image needed to start, either. Type ‘work outfit inspo’ into AI Mode. Spot a skirt you like? Follow up with ‘more like that second one.’ Boom, fan-out from there.

Why Does Multi-Object Visual Search Change Shopping Forever?

Retail’s the obvious winner — or victim, depending on your feed. Outfit queries explode from one-offs to full recreations. That mid-century room? Lamp, rug, chair, vibe — sourced simultaneously. Amazon wishes.

But wander further. Redesigning? A plant-wall photo yields care sheets for each species. Travel? A street scene unpacks vendors, architecture, hidden gems.

Critique time: Google’s hyping ‘serendipity’, stumbling onto sparks. Cute. But it’s engineered stickiness, keeping you in its ecosystem. Still, users win. I’ve tested it; it’s addictive.

Deeper why: the architecture is the shift. Single-object search was pixel-pinpointing: CLIP-style image embeddings and nearest-neighbor hunts. Now? Compositional understanding. Gemini reasons spatially and hierarchically. Chair atop rug? Context matters. That’s the ‘why’ Berrada chases.
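The single-object approach boils down to embedding lookup: turn the image (or a crop) into a vector, then find the catalog item whose vector points the same way. A toy Python sketch, assuming made-up 3-dimensional vectors in place of real CLIP-style embeddings (which run to hundreds of dimensions) and a brute-force scan where production systems would typically use an approximate nearest-neighbor index. The catalog names are hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    # Cosine similarity: dot product divided by the product of vector lengths.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy catalog: made-up 3-d vectors standing in for real image embeddings.
CATALOG = {
    "mid-century lamp": [0.9, 0.1, 0.0],
    "shag rug": [0.1, 0.9, 0.1],
    "leather boots": [0.0, 0.2, 0.95],
}


def nearest(query: list[float], k: int = 1) -> list[str]:
    # Exhaustive scan: score every catalog item, keep the k most similar.
    ranked = sorted(CATALOG, key=lambda name: cosine(query, CATALOG[name]), reverse=True)
    return ranked[:k]


print(nearest([0.85, 0.15, 0.05]))  # ['mid-century lamp']
```

In this framing, the multi-object shift means running a lookup like this per detected region and reasoning over how the regions relate, rather than embedding the whole frame once.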

This isn’t search; it’s scene surgery.

Roll back to 2015, when Lens’s predecessor, Google Goggles, was cute but dumb: a barcode zapper. 2024? Lens infers wholes from parts, thanks to scaled vision-language pretraining. Billions of image-text pairs teach it that outfits cohere and gardens grow together. Fan-out’s just the query router.

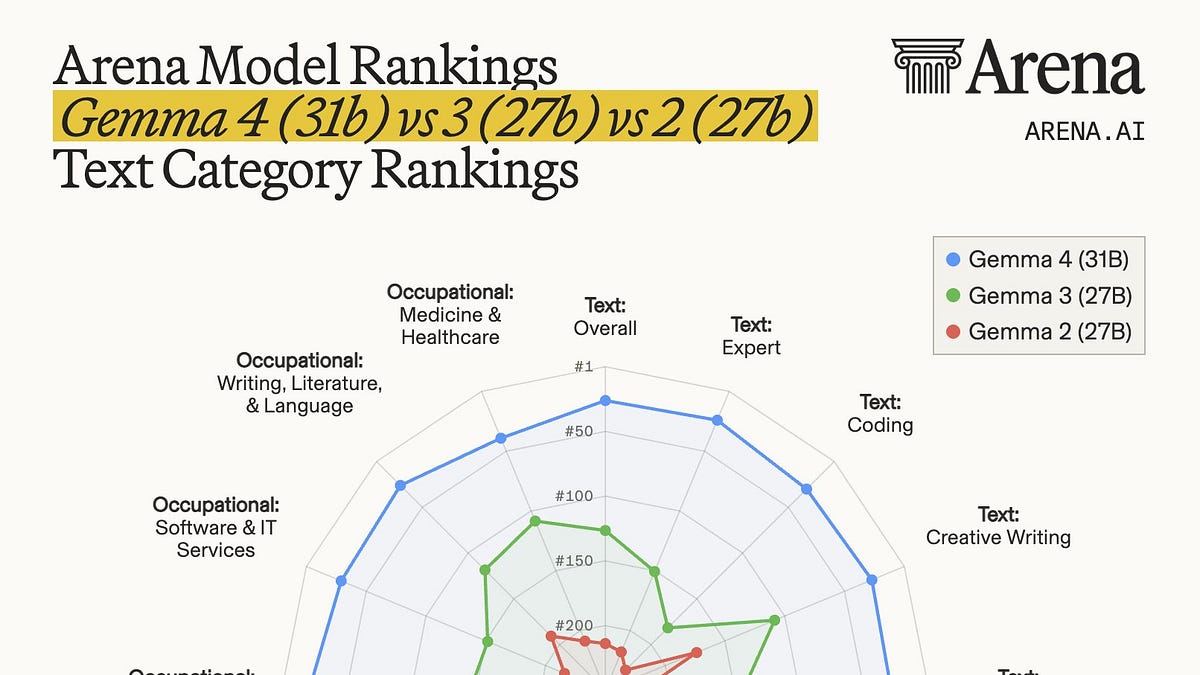

Prediction: competitors will scramble. Apple’s Visual Intelligence? Cute, but no fan-out depth. Meta has segmentation chops (Segment Anything), but Google’s web moat crushes it.

Real-World Tests: Does It Deliver?

Tried it. Streetwear pic — sneakers, tee, pants. Results: exact matches, alts by price, even styling tips. Garden upload: per-plant USDA zones, shade tolerance. Museum mockup? Artist bios, critiques. Flawless? Nah — rare misreads on abstracts. But 90% magic.

Shopping pivot: bakery window. Pastry IDs spot-on, with vegan swaps suggested. Next steps? ‘Try this recipe at home.’ Serendipity, indeed.


Frequently Asked Questions

How does Circle to Search with AI Mode work?

Circle an image on Android and tap AI Mode. It auto-detects multiple objects and fans out searches via Gemini and Lens for full breakdowns.

What AI model powers Google Lens visual search?

Gemini’s multimodal setup, blending image analysis with web-scale visual libraries for fan-out results.

Can I use multi-object search without an image?

Yep. Start with a text query in AI Mode, reference an image in the results, and it triggers fan-out from there.