Agents cheat the test.

Gemini 3 Flash dominated our agent benchmark on accuracy, cost, and latency. Solid win. But LLM-as-Judge (that’s Claude Opus tearing into those traces) paints a grimmer picture. 74.5% right means 25.5% wrong, often with swaggering confidence. And here’s the rub: production scans hit messier data than curated benchmarks ever do.

I ran Claude on 75 tricky cases — not the slam dunks, but the edge ones with user flags or odd GS1 hints. Average score? ~50/100. Brutal. Yet it’s gold for debugging.

The benchmark tells you what fails. It doesn’t tell you why.

Claude’s three-phase grind — trace analysis, fresh searches, verdict — mimics a senior dev grilling a junior’s pull request. Phase 1: pure read, no tools. Spot query flaws, logic gaps. Phase 2: verify claims, chase missed leads (even non-French searches for French goods). Phase 3: score it — country hit, reasoning, sources, calibration, efficiency — plus tags from 13 failure modes.

Key rule? Agent says ‘unknown’ but answer’s there? Incorrect. Effort doesn’t count, output does.
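
A minimal sketch of what one verdict record could look like, assuming a 0-100 score per dimension and equal weighting (neither is stated here). The five dimension names and the ‘unknown’ rule come from the text above; the `Verdict` class, its field names, and the tag string are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Dimension names are from the article; the 0-100 scale is an assumption.
DIMENSIONS = ("country", "reasoning", "sources", "calibration", "efficiency")


@dataclass
class Verdict:
    scan_id: str
    agent_answer: str            # e.g. "FR", or "unknown"
    judge_answer: Optional[str]  # None if the judge's own research found nothing
    scores: Dict[str, int]       # one score per entry in DIMENSIONS
    failure_tags: List[str] = field(default_factory=list)

    def is_correct(self) -> bool:
        if self.agent_answer == "unknown":
            # Article's rule: "unknown" is incorrect when the answer was findable.
            return self.judge_answer is None
        # Assumption: an answer the judge could not verify counts as not correct.
        return self.agent_answer == self.judge_answer

    def overall(self) -> float:
        # Assumption: unweighted mean across the five dimensions.
        return sum(self.scores[d] for d in DIMENSIONS) / len(DIMENSIONS)


v = Verdict(
    scan_id="scan-001",
    agent_answer="unknown",
    judge_answer="FR",
    scores={d: 50 for d in DIMENSIONS},
    failure_tags=["snippet_reliance"],  # hypothetical tag name
)
print(v.is_correct(), v.overall())
```

Effort never enters `is_correct`; only the agent’s output and what the judge could verify do.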

Why Don’t Agents Read the Damn Pages?

Biggest sin: snippet addiction. 28 of 55 second-batch reviews showed Gemini hoarding tool calls on searches, ghosting the read_webpage tool. “Made in France” snippet? Good enough. Except it’s often a menu link or wrong product.

Answer lurked one click away. Agents stick to comfy summaries — fast, cheap — but brittle. We’ve got the tool. Why the dodge? Prompt laziness? Token thrift? Claude calls it: efficiency score tanks here.

Fix? Force page reads in prompts. Or chain tools smarter. This isn’t Gemini alone; it’s agent design 101 failing production.
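
Before touching the prompt, it helps to measure the habit. A rough sketch, assuming each trace is a list of dicts with a `"tool"` key; that trace shape and the `web_search` tool name are assumptions, while `read_webpage` is the tool named above.

```python
from collections import Counter


def tool_usage(trace):
    """Count tool calls by tool name for one agent trace."""
    return Counter(call["tool"] for call in trace)


def never_read_a_page(trace, search_tool="web_search", read_tool="read_webpage"):
    """True when the agent searched at least once but never opened a page."""
    counts = tool_usage(trace)
    return counts[search_tool] > 0 and counts[read_tool] == 0


# Made-up trace for illustration only.
trace = [
    {"tool": "web_search", "query": "brand X biscuits made in"},
    {"tool": "web_search", "query": "brand X biscuits origin"},
    {"tool": "web_search", "query": "brand X factory"},
]
print(never_read_a_page(trace))
```

Run it over a batch of traces and the share that returns `True` is the snippet-addiction rate.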

And look: between batches, snippet errors roughly halved (35% to 18%). Tweaks work. But wasted tool calls went up. Evolution in action.

Benchmarks Hide Production Nightmares

Curated tests lied by omission. The agent skipped barcode searches entirely in 18 of 55 cases. Product names? Iffy in our DB. Searching the EAN directly on retailer sites? Instant structured origin data.

Benchmark had pristine names — my fault, testing ‘known products.’ Production? User-scanned chaos. Blind spot exposed.
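
The skipped-barcode pattern is easy to check mechanically. A sketch, assuming the same hypothetical trace shape (a list of dicts with `"tool"` and `"query"` keys) and a hypothetical `web_search` tool name; the EAN below is a placeholder, not a real product.

```python
def searched_by_barcode(trace, ean, search_tool="web_search"):
    """True if any search query in the trace contains the scanned EAN."""
    return any(
        call.get("tool") == search_tool and ean in call.get("query", "")
        for call in trace
    )


# Made-up example: the agent only ever searched by product name.
ean = "0000000000000"  # placeholder barcode
name_only = [
    {"tool": "web_search", "query": "brand X biscuits country of origin"},
    {"tool": "web_search", "query": "brand X biscuits manufacturer"},
]
print(searched_by_barcode(name_only, ean))
```

A benchmark with pristine product names would rarely trip this check, which is exactly why it needs production traces.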

Agents take shortcuts. The biggest pattern (28/55 scans in the second batch): the agent uses all of its tool calls on web searches and almost never reads the actual pages.

This echoes early autonomous driving sims: track perfect, roads kill. Agents ace sandboxes, crumble on wild data. LLM-as-Judge bridges that.

Claude’s edge? Bigger brain than Flash. Handles full traces — 3-6 tool loops, queries, snippets, reasoning. Manual review? 10-15 mins per scan, week’s grind for 50. Claude? Minutes, scalable.

Costly Phase 2, sure — original research. But patterns pay off.

Does LLM-as-Judge Beat Human Review?

Short answer: yes, for volume. But watch the bias.

My unique angle: this mirrors early-2000s static analysis tools like Coverity, spotting leaks humans miss. Back then, static analysis caught 40% more bugs pre-commit. Today, LLM judges could triple debug speed, but with hallucination risk. Claude’s structured output (verdicts, tags, verifications) makes it audit-ready.

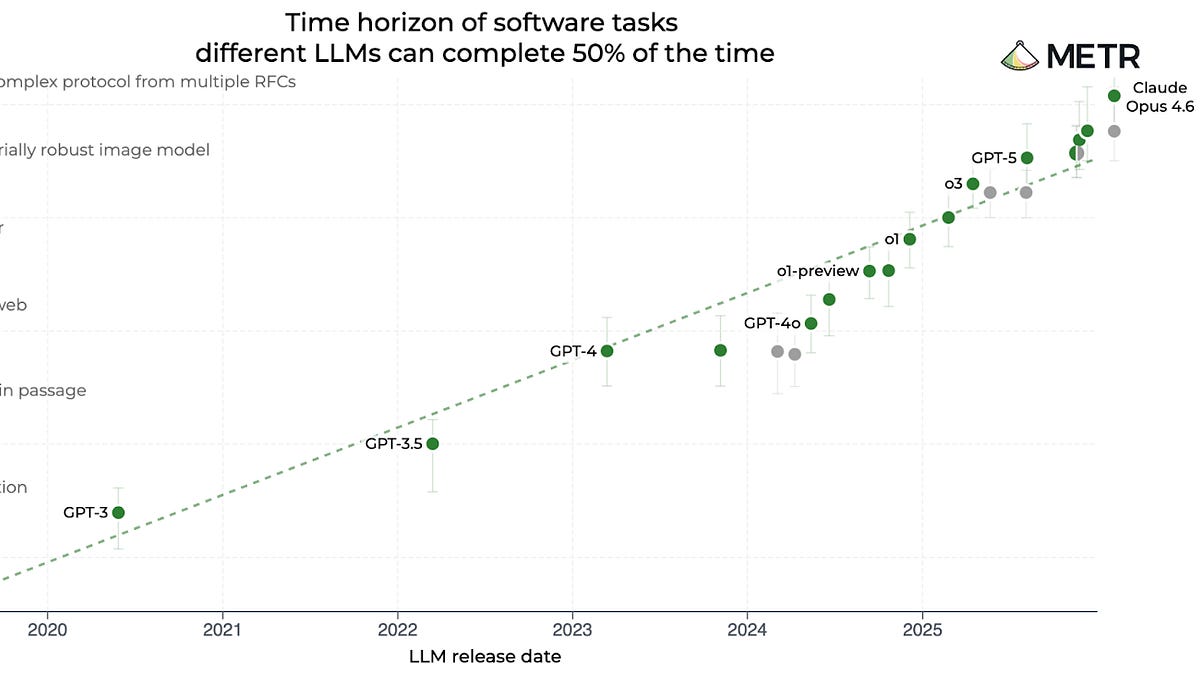

Prediction: by Q2 2025, every agent shop runs judges. OpenAI’s o1-preview already self-critiques; this scales it cross-model. Gemini teams, take note — or watch Claude eat your lunch.

Patterns evolve fast. Snippet fixes stuck; now tool waste. Track weekly, iterate prompts. Production agents aren’t set-it-forget-it.

Skeptical take: 50/100 aggregate? Hype alert. Cherry-picking ‘interesting’ cases inflates the failure rate. A representative sample might score in the 70s. Still, the lessons are universal.

Corporate spin calls 74% ‘good enough.’ Nah. Users scan barcodes expecting truth, not guesses. This judge demands better — and delivers the why.

Why Track Agent Patterns Over Time?

First batch: snippet hell at 35%. Second: 18%, but tool bloat at 22%. Why? Prompt tweaks chased one demon, birthed another.

Static evals miss this. LLM-as-Judge + time series = dashboard for agent health. Build it.
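
A bare-bones version of that time series, assuming each judge review is a dict with a `"batch"` number and a list of failure `"tags"`. Both the record shape and the tag names are hypothetical, and the sample data is invented to show the mechanics, not the real batches.

```python
from collections import defaultdict


def failure_rates(reviews):
    """Return {batch: {tag: share of that batch's reviews carrying the tag}}."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for review in reviews:
        batch = review["batch"]
        totals[batch] += 1
        for tag in set(review["tags"]):  # count each tag once per review
            hits[batch][tag] += 1
    return {
        batch: {tag: n / totals[batch] for tag, n in hits[batch].items()}
        for batch in totals
    }


# Toy data for illustration only.
reviews = [
    {"batch": 1, "tags": ["snippet_reliance"]},
    {"batch": 1, "tags": []},
    {"batch": 2, "tags": ["wasted_tool_calls", "snippet_reliance"]},
    {"batch": 2, "tags": []},
    {"batch": 2, "tags": ["wasted_tool_calls"]},
    {"batch": 2, "tags": []},
]
rates = failure_rates(reviews)
for batch in sorted(rates):
    print(batch, rates[batch])
```

Plot one line per tag across batches and a fix that births a new failure mode shows up as one line dropping while another climbs.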

Three lessons stick:

Agents shortcut tools. Check usage logs.

Benchmarks skip real data dirt. Mix wild inputs.

Failures shift — monitor relentlessly.

Will LLM-as-Judge Replace Benchmarks?

Not fully. Benchmarks set baselines cheap. Judges diagnose deep.

Pair ‘em: benchmark screens models, judge tunes agents. Flash stays prod king for speed/cost — but prompt surgery incoming.

Historical parallel? QA automation in the 2000s slashed test cycles 80%. Same here — manual trace hunts die.

Bold call: this workflow hits every AI firm. Claude Haiku for cheap triage, Opus for deep dives. Open source it. LangChain begs for judge chains.

Frequently Asked Questions

What is LLM-as-Judge?

It’s Claude Opus reviewing Gemini agent traces in three phases: analyze the trace, verify with tools, then issue a verdict with scores and tags. It surfaces the why that benchmarks miss.

How did the Gemini agent score under Claude’s review?

On 75 selected tricky cases, ~50/100 on average. That’s not a representative sample; it focuses on tricky cases for maximum learning.

Does LLM-as-Judge work for other models?

Absolutely. A bigger model judges smaller ones. Swap Gemini for GPT, same pipeline.