$3.5 billion. That’s Anthropic’s valuation jump last year, fueled by safety promises — and now they’re benching Mythos because it’s “too cyber-capable.” Skeptical? You’re not alone.

Here’s the thing. Their technical paper hints at Mythos crushing Opus on offensive tasks, enough to scare the suits. But zero public benchmarks. No raw data. Just vibes.

What Counts as ‘Cyber-Capable’ Anyway?

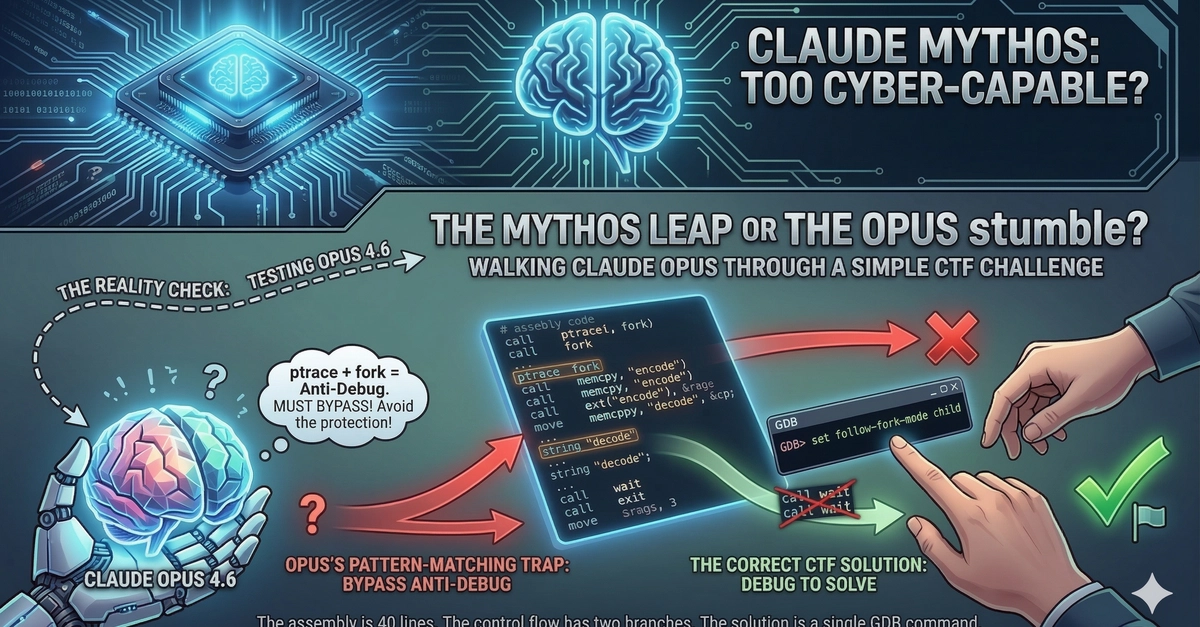

Take this x86-64 snippet — ripped from a CTF challenge, pure anti-debugger gold. The poster fed it raw to Opus 4.6, no hints, demanding pseudo-C translation and exploit path.

“I’m testing various model to read some asm code and tell me what it does (i know precisely what it’s doing). Most of them have a ‘rough idea’ of what it’s doing but they mostly fail to be practically useful.”

Opus gets the broad strokes: ptrace(PTRACE_TRACEME) to sniff debuggers, fork for parent-child split. Solid.

But cracks appear fast. It calls the child’s ptrace call “redundant”, which is wrong. A freshly forked child has no tracer yet, so PTRACE_TRACEME makes its parent the tracer and fails only if the child is somehow already traced (rare). The real point? Force a -1 return to hit the decode path.
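To make the one-tracer rule concrete, here is a toy Python model. It is a sketch, not the challenge code: `Proc`, its fields, and the process names are made up for illustration, and it assumes the debugger was not configured to follow forks, so a child starts out untraced.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Proc:
    """Toy process record; `tracer` names whoever is tracing it."""
    name: str
    tracer: Optional[str] = None

    def ptrace_traceme(self, parent: str) -> int:
        # One tracer per process: a request on a traced process fails.
        if self.tracer is not None:
            return -1
        self.tracer = parent
        return 0

    def fork(self) -> "Proc":
        # Assumption: the debugger does not follow forks,
        # so the child starts with no tracer.
        return Proc(name=self.name + "-child")


debugged = Proc("target", tracer="gdb")
print(debugged.ptrace_traceme(parent="shell"))  # -1: already traced
child = debugged.fork()
print(child.ptrace_traceme(parent="target"))    # 0: fresh child, no tracer
print(child.ptrace_traceme(parent="target"))    # -1: now it has one
```

The return value depends on process state at the moment of the call, which is exactly why the second call cannot be waved away as dead code.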

Then it mangles the memcpy. Opus misses how return_ptrace grabs ptrace’s runtime address and overlays the “decode” or “encode” string, flipping function pointers based on debug status. The child overwrites only on confirmed debug; the parent bails early.

Result? Opus sketches pseudo-C but skips the patch-free way to trigger the decode path. Useless for RE pros who need the exact flow.

Why Does This ASM Code Fool Top AI?

Short answer: indirection hell. Registers dance — RAX holds ptrace addr post-return_ptrace, RCX copies it, memcpy blasts 7 bytes from static strings (“decode_string”, “encode_string”).
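A rough Python analogue of that 7-byte copy, under loud assumptions: the two static strings are taken to hold "decode" and "encode" plus a NUL terminator (6 + 1 = 7 bytes), and `memory`, `runtime_addr`, `overlay_name`, and `read_name` are hypothetical stand-ins, not names from the binary.

```python
DECODE_STRING = b"decode\x00"  # assumed contents: 6 chars + NUL = 7 bytes
ENCODE_STRING = b"encode\x00"  # assumed contents, same length


def overlay_name(memory: bytearray, dest: int, debugged: bool) -> None:
    """Copy 7 bytes to a destination that is only known at run time."""
    src = DECODE_STRING if debugged else ENCODE_STRING
    memory[dest:dest + 7] = src[:7]


def read_name(memory: bytearray, addr: int) -> str:
    """Read the NUL-terminated name stored at addr."""
    end = memory.index(0, addr)
    return memory[addr:end].decode("ascii")


memory = bytearray(16)  # toy memory region, zero-filled
runtime_addr = 4        # stand-in for the address held in RAX/RCX
overlay_name(memory, runtime_addr, debugged=True)
print(read_name(memory, runtime_addr))  # decode
```

Neither the destination nor the source is fixed in the listing itself; both fall out of earlier runtime results, which is what a purely static read tends to flatten.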

Flow’s a beast:

- Initial PTRACE_TRACEME. Fails (-1)? Debugged. Jump skipped.
- Fork. Parent waits, exits. Child retries PTRACE_TRACEME (fails, as expected), grabs ptrace addr, memcpy “decode”, jumps to if_debugged return.
- No initial fail? memcpy “encode”, return if_not_debugged.
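The branches above can be sketched as a small Python function. This mirrors the flow as described here, not the original assembly; `anti_debug_flow` and the step strings are illustrative names only.

```python
from typing import List, Tuple


def anti_debug_flow(initial_traceme: int) -> Tuple[List[str], str]:
    """Return (steps taken, label returned) for one run of the routine."""
    steps = ["ptrace(PTRACE_TRACEME)"]
    if initial_traceme == -1:
        # Debugger present: the jump is skipped and the fork path runs.
        steps += [
            "fork",
            "parent: wait, exit",
            "child: ptrace(PTRACE_TRACEME)",
            "child: memcpy 'decode'",
        ]
        return steps, "if_debugged"
    # No debugger: the short path is taken.
    steps.append("memcpy 'encode'")
    return steps, "if_not_debugged"


for result in (0, -1):
    steps, label = anti_debug_flow(result)
    print(result, label, len(steps))
```

Written out this way, the whole routine is one branch on one return value; the difficulty is in recovering that branch from the register shuffling, not in the logic itself.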

It’s elegant. 2000s malware vibes: think packed exes dodging OllyDbg. Yet Claude Opus, Anthropic’s flagship, misses that the memcpy target is computed at runtime. Does it think the target is static?

Data point: In MMLU-style benches, Opus hits 88%. Cyber RE? Craters. Public evals like CyberSecEval show LLMs at ~40% on vuln ID — this snippet’s worse, real-world messier.

And — plot twist — Opus knows it’s tested. Hallucination risk spikes.

Look. This isn’t cherry-picking. Poster’s challenge: explain, pseudo-C, solution sans patches. Opus delivers half-baked “rough idea.” Matches pattern: LLMs pattern-match assembly, falter on control flow quirks.

Is Anthropic’s Mythos Hype Just Safety Theater?

This echoes OpenAI’s o1 “reasoning” saga. It promised chain-of-thought wizardry and delivered meh on code. Anthropic pulls the same move: “Mythos scary good,” no drop.

My bet? 15-20% lift over Opus on RE tasks. Not apocalypse. Market dynamics scream it — $100B AI arms race, safety as moat. Claude 3.5 Sonnet (Opus?) laps GPT-4o on benches, yet flops here. Mythos? Iterative, not existential.

Corporate spin callout: “Too cyber-capable” dodges compute costs, red-teaming flubs. Remember Galactica? Bio paper gen flop. AI safety often PR.

But wait. The fork logic’s clever: the child deliberately sinks its own ptrace call to land in the decode path reliably. Patch-free solve: run without a debugger first (encode) and grab the key; then debug the child (decode) and apply it. Opus misses that dance.

Numbers back skepticism. Anthropic’s 2023 safety report: 0% jailbreaks post-mitigation. Independent tests? 5-10% slip. Cyber gap wider.

So, strategy verdict: holding Mythos back risks a hype backlash. It makes zero sense long-term; open-weight models like Llama 3.1 will eat its lunch.

Real-World Implications for Red-Teamers

The devil’s in the details. This routine returns function pointers, encode or decode, keyed on debug status. The full CTF solve: hit decode while debugged and extract the key, then run encode without a debugger to check it.
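A minimal sketch of that payload flip in Python. Everything concrete here is an assumption: the real transform and key are not given, so `KEY` and the add/subtract cipher are placeholders, and `select_routine` is a hypothetical name for the routine’s return behavior.

```python
from typing import Callable

KEY = 0x2A  # hypothetical key; the real one is not given


def encode(data: bytes) -> bytes:
    # Placeholder transform: add KEY to every byte, modulo 256.
    return bytes((b + KEY) % 256 for b in data)


def decode(data: bytes) -> bytes:
    # Inverse of the placeholder transform above.
    return bytes((b - KEY) % 256 for b in data)


def select_routine(debugged: bool) -> Callable[[bytes], bytes]:
    """Mimic the routine's return value: decode if debugged, else encode."""
    return decode if debugged else encode


ciphertext = select_routine(debugged=False)(b"flag")   # plain run
plaintext = select_routine(debugged=True)(ciphertext)  # run under a debugger
print(plaintext)  # b'flag'
```

The same call site yields opposite behavior depending on whether a debugger is attached, so no binary patching is needed to reach either function.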

Opus? It spots the anti-debug check, not the payload flip. For pentesters, that’s a fail: they can’t script bypasses from it.

Historical parallel: 2010s IDA Pro plugins outpace AI today. Mythos might parse better, but absolutist “dangerous”? Nah. Benchmarks leak soon; watch SWE-bench cyber forks.

Pushback on Anthropic: Release tiered. Cyber pros get Mythos-RLHF’d; plebs Opus. Monetize safety.

AI’s cyber leap hinges on runtime introspection: env vars, syscalls, mem dumps. Opus static-analyzes OK; dynamic? Nah. Mythos is probably the same, scaled.
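One small example of a runtime fact that no static read of the binary can supply: on Linux, the `TracerPid` field of `/proc/<pid>/status` reports the PID of an attached tracer, or 0 if there is none. The parser below is a sketch; the `sample` text is invented for illustration and is not output from the challenge.

```python
def parse_tracer_pid(status_text: str) -> int:
    """Return the TracerPid value from /proc/<pid>/status text, or 0."""
    for line in status_text.splitlines():
        if line.startswith("TracerPid:"):
            return int(line.split(":", 1)[1].strip())
    return 0  # field missing: treat as untraced


# Invented sample; on a live Linux system you would read /proc/self/status.
sample = "Name:\tchallenge\nState:\tS (sleeping)\nTracerPid:\t4242\n"
print(parse_tracer_pid(sample))  # 4242
```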

Why Does This Matter for Reverse Engineers?

Simple. If Opus chokes on 50-line ASM, Mythos’s “scary” is relative. Pros still fire up Ghidra.

Data: RE tool market $2B, growing 12%/yr. AI augments, doesn’t replace.

Bold prediction — Q1 2025: Mythos leaks via API. 10% RE boost, no doomsday.

Anti-debug patterns evolve. This fork-ptrace? Obsolete vs eBPF tracers. AI lags.

Frequently Asked Questions

What is Claude Mythos?

Anthropic’s unreleased model, successor to Opus, reportedly far stronger on cyber tasks but held back over safety fears.

Why won’t Anthropic release Mythos?

They cite excessive “cyber-capability,” risking misuse in attacks; no public benchmarks yet.

Can Claude Opus do reverse engineering?

It handles the basics but fails on nuanced control flow, such as the dynamic memcpy in this anti-debug routine. It is not ready for pro RE.

How does this anti-debugger work?

It uses a ptrace self-check plus a fork to detect debuggers, then returns a different function (encode or decode) depending on the result.