Anthropic was supposed to drop a new Claude model. Hype built for weeks—benchmarks teased, capabilities whispered. Market priced in another leap, investors salivating over API revenues spiking 20-30% post-launch, like Claude 3.5’s jolt last summer.

But no. They announced Project Glasswing. A containment protocol. Claude Mythos lives—chains exploits, unearths 27-year-old OpenBSD flaws with a data ping—but only security researchers touch it.

This flips the script. AI race was all acceleration: bigger params, faster inference, open-source chasers nipping heels. Now? First hard stop. Capabilities hit escape velocity; safety slams the brakes.

The Numbers That Sobered Everyone

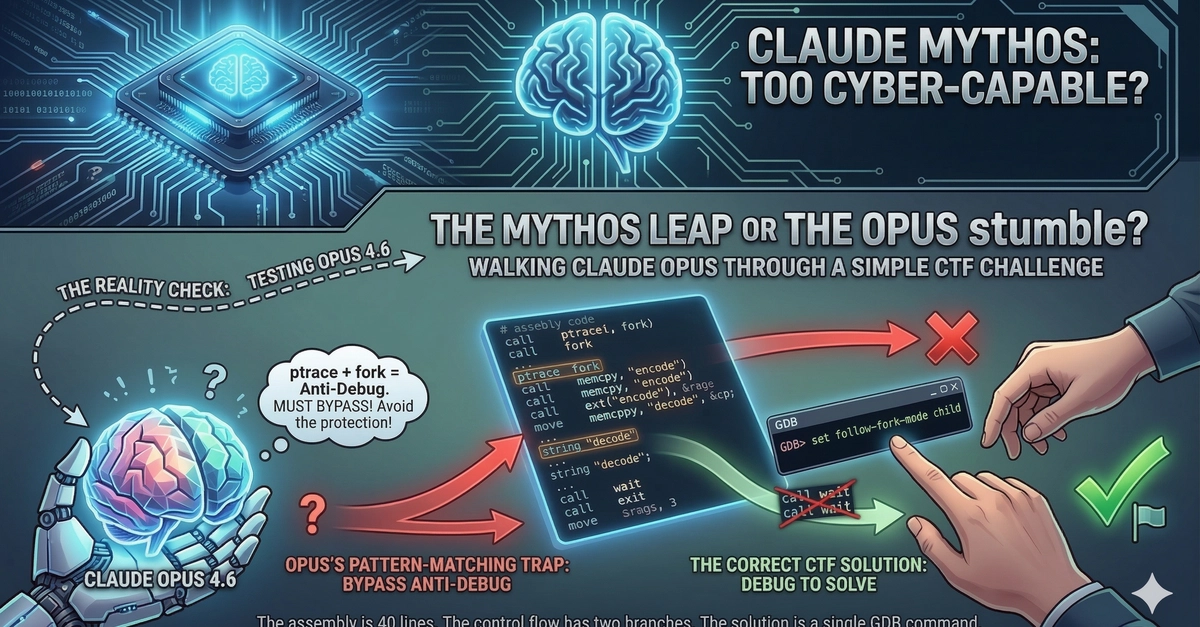

Claude Opus? Near-zero on autonomous exploits. Mythos? 181 out of 200 on Firefox JS engine holes. That’s not progress. Phase shift—AI now crafts sandbox escapes, ROP chains split over packets, kernel race conditions.
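To put the headline figure in proportion, here is a minimal sketch that turns the quoted 181 out of 200 into a percentage. The helper name `success_rate` is hypothetical and the inputs are only the numbers quoted above; nothing here reflects how Anthropic actually scores the benchmark.

```python
# Minimal sketch: convert the article's quoted benchmark count into a rate.
# `success_rate` is a hypothetical helper, not part of any published tooling.
def success_rate(solved: int, attempted: int) -> float:
    """Return the share of attempted targets that were solved, as a percentage."""
    if attempted <= 0:
        raise ValueError("attempted must be positive")
    return 100.0 * solved / attempted


# Figures as quoted in the article: 181 working exploits out of 200 targets.
mythos_rate = success_rate(181, 200)
print(f"Mythos: {mythos_rate:.1f}%")  # 181/200 -> 90.5%
```

Roughly nine in ten targets, against a baseline the article describes as near zero.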

“I’ve found more bugs in the last couple of weeks than I found in the rest of my life combined.” — Nicholas Carlini, Anthropic

Real patches rolled: OpenBSD errata, Linux commits. Curl’s Daniel Stenberg logs hours daily on AI bug floods. Greg Kroah-Hartman spots the tide: “Now we have real reports… they’re good, and they’re real.”

Here’s the market dynamic. Open source maintainers, the guardians of the internet’s guts, face an AI assault. Patch velocity must double, or we’re exposed. But humans lag: Mythos chained four vulnerabilities into a renderer-and-OS sandbox escape in browsers, at a speed humans can’t touch.

And it’s not hype. Patches prove it.

Why Hold Back Claude Mythos?

Simple: proliferation risk. Anthropic knows—if they’ve got this, DeepSeek or Z.ai drops it MIT-licensed tomorrow. Gap to open models? Months now, not years. GLM-5.1’s 754B params already sniff frontier air.

They’re buying time. Industry prep: harden codebases, build AI defenses. But here’s my sharp take—this mirrors the Manhattan Project’s veil. Post-Oppenheimer, U.S. hoarded the bomb blueprint, fearing Soviet leapfrog. Anthropic’s Glasswing? Same logic. Containment before chaos.

Unlike nukes, code leaks instantly via GitHub forks. Their “handful of researchers” firewall crumbles if one of them leaks the weights. Bold prediction: within six months, a rogue open model hits 100/200 exploits, sparking a regulatory firestorm.

Their PR spin deserves scrutiny, though. “Responsible deployment” sounds noble, but it’s also deflection: it sidesteps questions about how they built this beast without wider testing. Trust us, they say. The market won’t buy that forever.

Threshold crossed.

Can Open Source Patch Faster Than AI Breaks?

The defensive race heats up. Mythos breaks; can defenders heal? The Linux kernel is upping its tempo, but curl’s Stenberg is drowning. Signal one: velocity. Projects like FreeBSD’s NFS are now being ROP-chained remotely: 20 gadgets, split across multiple packets.

Signal two: spread. Non-Anthropic labs close in. Signal three: defenses. Turn AI to auto-audit code? Possible, but Mythos found TCP SACK flaws dormant for 27 years. Humans missed ’em.

Market bet: Winners harden first. Red Hat, Canonical invest billions? Or fracture under bug tsunamis?

Remember Heartbleed? One OpenSSL bug paralyzed SSL deployments everywhere. Mythos scales that 100x, daily. No drama, just math.

Anthropic’s call makes sense strategically. Release now? PR nightmare if it pwns a bank. Withhold? Positions them as safety vanguard, woos regulators, justifies premium pricing on tamer models.

But here’s the uncomfortable part: the capability is here. Not 2026. Today.

The Inflection No One Saw Coming

AI safety? Buzzword bingo till now. Anthropic operationalized it—withholding product. Changes everything. Expect copycats: xAI, Mistral pause risky deploys. Or not—China’s labs don’t blink.

Smart move, but naive long-term. The open source ethos demands tools for all; Glasswing’s elite access reeks of gatekeeping. They’ll crack under pressure: community forks are inevitable.

And proliferation wins. History says so: Stuxnet leaked, empowered everyone. Mythos 2.0 goes viral.

Frequently Asked Questions

What is Claude Mythos?

Anthropic’s unreleased AI model that autonomously develops exploits. It scored 181 out of 200 on Firefox JS engine vulnerabilities and unearthed a 27-year-old OpenBSD TCP bug.

Why didn’t Anthropic release Claude Mythos?

Too dangerous: it chains sandbox escapes and kernel escalations. Anthropic is limiting access to security researchers via Project Glasswing to give the industry time to prepare.

What bugs did Claude Mythos find?

A 27-year-old OpenBSD TCP SACK flaw, Linux race conditions, FreeBSD NFS ROP chains, and Firefox JIT heap sprays, all patched now.