Robots dream electric sheep.

And no, that’s not Philip K. Dick fanfic—it’s Stanford and Tsinghua researchers dropping Ctrl-World, a world model that lets robots hallucinate tasks, test policies, and churn synthetic data to get better at grabbing stuff. After 20 years chasing robot promises in the Valley, I’ve gotta say: this one’s got legs. Or grippers, anyway.

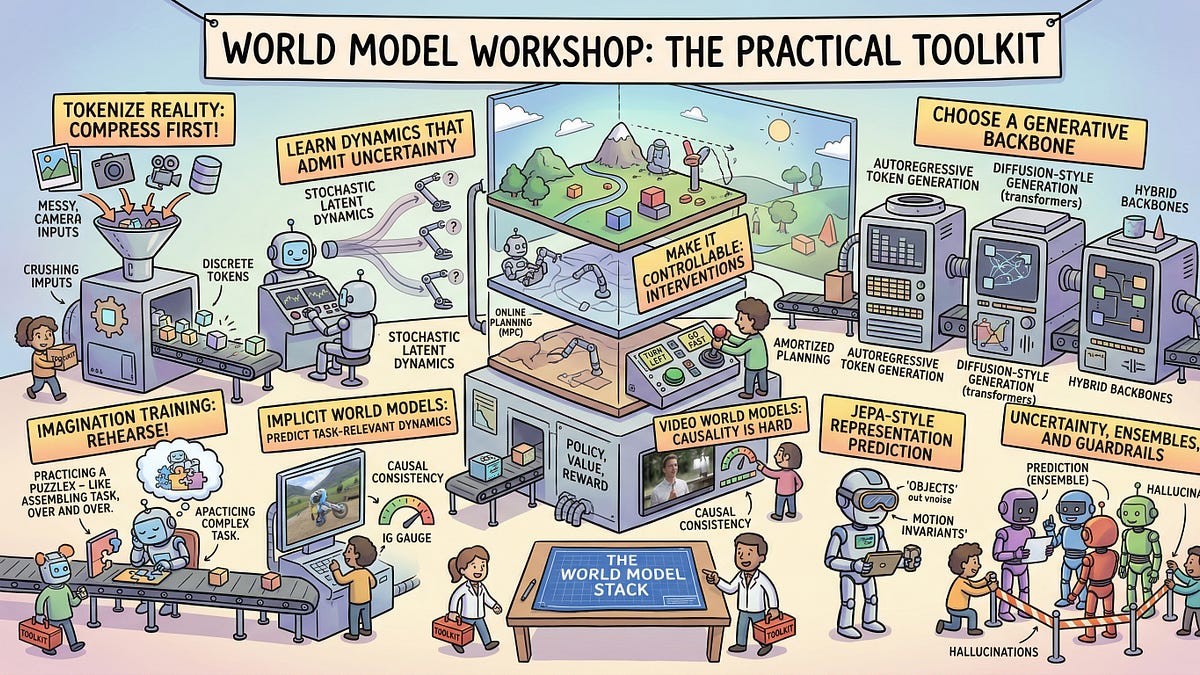

Here’s the setup. Robots suck at real-world work because testing’s a nightmare: slow, expensive, breakable hardware. Ctrl-World flips that. Built on a 1.5B-parameter Stable Video Diffusion model, it dreams up controllable videos of robot arms manipulating objects. Multi-view cameras, memory retrieval for past frames, action conditioning: fancy stuff that keeps the sim consistent over time.

They tested it. Policies evaluated in the dream world rank just like real rollouts. Boom. And that synthetic data? Jacked instruction-following by 44.7% on average.

“Posttraining on [Ctrl-World] synthetic data improves policy instruction-following by 44.7% on average,” they write.
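What does “rank just like real rollouts” mean in practice? One common way to check is a rank correlation between simulated and real success rates. Here’s a minimal sketch, assuming Spearman correlation as the yardstick (the paper may use a different measure); the success rates below are made up for illustration and are not from the paper.

```python
def ranks(values):
    """Return 1-based ranks (highest value gets rank 1), averaging ties."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    result = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the group while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            result[order[k]] = avg_rank
        pos = end + 1
    return result


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation computed on ranks."""
    rx, ry = ranks(xs), ranks(ys)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical success rates for four policies (NOT from the paper).
sim_success = [0.42, 0.61, 0.55, 0.30]
real_success = [0.38, 0.66, 0.50, 0.25]

rho = spearman(sim_success, real_success)
print(f"Spearman rho: {rho:.2f}")
```

A value near 1 means the dream world orders the policies the same way the real table does, even if the absolute success rates differ. That ordering is what you need to pick a winner without touching hardware.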

Play with the GitHub demo. It’s trippy—type a task, watch the robot dream it out. Feels real enough to fool you.

Is Ctrl-World Actually Accelerating Robot R&D?

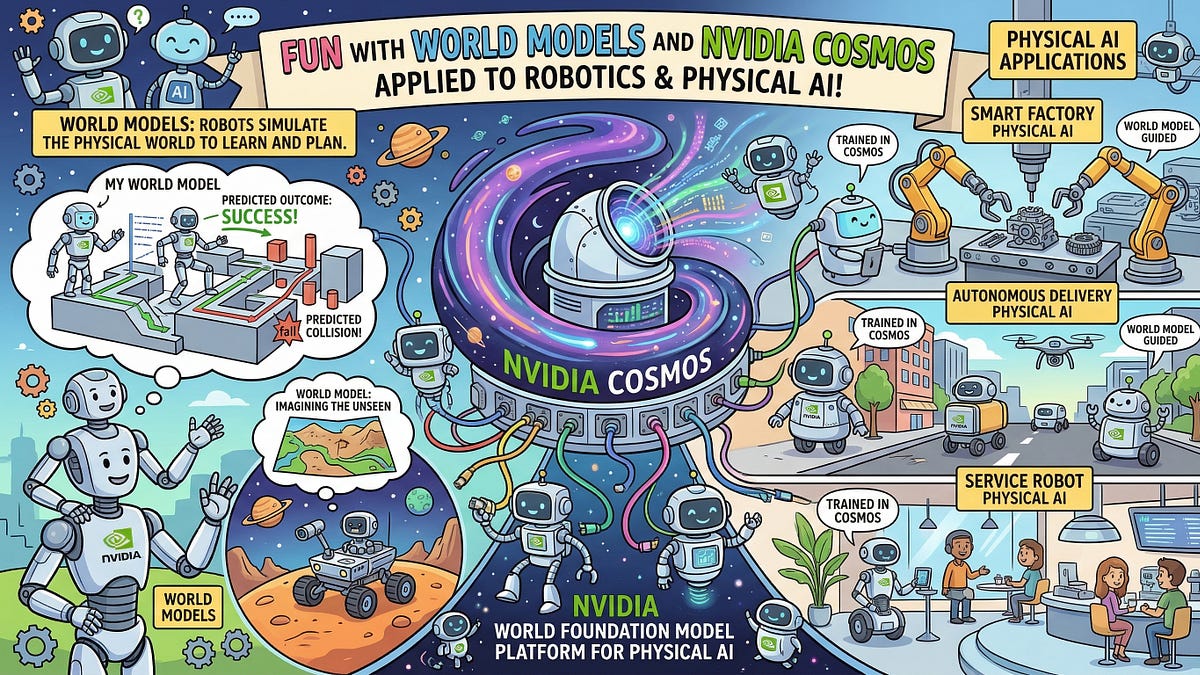

But let’s pump the brakes. World models aren’t new. DeepMind’s Genie, Mirage: we’ve heard the infinite game pitch. Robotics? That’s where it gets gritty. Back in the early 2000s, simulators like Gazebo promised the same: train in sim, deploy real. Most bombed because the sim-to-real gap ate the gains alive. Ctrl-World claims better alignment: a high correlation between dream success and table-top reality.

My take? This echoes NASA’s old Robonaut sims from 2000, but generative video makes it pop. No more hand-crafted physics; AI dreams the chaos. Prediction: by 2026, every robotics startup will bake this in, cutting dev cycles 3x. Who’s making money? NVIDIA, on the diffusion compute. Everyone else scrambles for GPUs.

Skeptical? Me too.

The workflow’s killer: rank policies in sim, fine-tune on targeted dreams, deploy. No more 100 failed grasps per tweak. They show it boosting success rates. Real-world proxy that works—finally.
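The rank-then-target part of that workflow can be sketched in a few lines. This is a toy illustration, not the authors’ pipeline: the policy names, task strings, success rates, and the 0.5 threshold are all hypothetical stand-ins for numbers you would get from rollouts inside the world model.

```python
from statistics import mean

# Hypothetical per-task success rates measured in simulated rollouts.
sim_results = {
    "policy_a": {"pick up the cup": 0.80, "fold the towel": 0.20, "open the drawer": 0.60},
    "policy_b": {"pick up the cup": 0.70, "fold the towel": 0.65, "open the drawer": 0.75},
}


def rank_policies(results):
    """Sort policy names by mean simulated success rate, best first."""
    return sorted(results, key=lambda name: mean(results[name].values()), reverse=True)


def weak_tasks(task_rates, threshold=0.5):
    """Return tasks whose simulated success falls below the threshold."""
    return [task for task, rate in task_rates.items() if rate < threshold]


ranking = rank_policies(sim_results)
best = ranking[0]

# Tasks where each policy struggles are the candidates for targeted synthetic data.
targets = {name: weak_tasks(rates) for name, rates in sim_results.items()}

print("ranking:", ranking)
print("fine-tune targets:", targets)
```

The point of the design is that both decisions, which policy to ship and which instructions to generate more dreams for, come out of cheap simulated rollouts. Real hardware only enters at the final deploy step.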

Yet it’s gripper-only, table-top tasks. Scale to warehouses? Dream on. (Pun intended.) And the data: it’s pretrained on videos, but robot footage is scarce. Garbage in, glitchy dreams out.

Why LabOS Feels Like Sci-Fi Creep

Shift gears to LabOS—Stanford, Princeton, Ohio State, UW building software for AI to boss humans around in wet labs. Agentic AI for dry reasoning, XR interfaces for humans pipetting under guidance. Hypothesis to execution to docs, looped.

It’s the superintelligence starter kit. AI dreams experiments, you execute via AR goggles or whatever multimodal magic. Feedback loops back—automated science.

“LabOS integrates agentic AI systems for dry-lab reasoning with extended reality(XR)-enabled, multimodal interfaces for human-in-the-loop wetlab execution, creating an end-to-end framework that links hypothesis generation, experimental design, physical validation, and automated documentation.”

Cynical vet alert: We’ve seen lab automation before—high-throughput screening in pharma, 2010s. Cost billions, mostly shelfware. LabOS outsources the boring to AI brains, humans for the fiddly bits. Smart. But who trusts AI hypotheses? Hallucinations in pipettes? Disaster.

The stack includes AI agents for planning (LLM?), coding protocols, and evaluating results. XR handles real-time guidance: overlay instructions on your petri dish. Physical sensors feed data back, closing the loop. Imagine: AI spots an anomaly in gel electrophoresis and tweaks the next run on the fly. Sci-fi? Sure, but executable.
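To make the feedback half of that loop concrete, here’s a minimal sketch. It assumes nothing about LabOS’s actual code: the `Step` class, the function names, the protocol text, and the sensor readings are all hypothetical, invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    instruction: str  # text an XR headset might overlay for the human
    low: float        # lowest acceptable sensor reading
    high: float       # highest acceptable sensor reading


def run_protocol(steps, readings):
    """Pair each step with its reading and log whether it was in range."""
    log = []
    for step, reading in zip(steps, readings):
        in_range = step.low <= reading <= step.high
        log.append({"instruction": step.instruction, "reading": reading, "ok": in_range})
    return log


def steps_to_repeat(log):
    """Return instructions whose readings fell outside the expected range."""
    return [entry["instruction"] for entry in log if not entry["ok"]]


# Hypothetical protocol and sensor readings, invented for illustration.
protocol = [
    Step("Pipette 10 uL of sample into well 1", 9.5, 10.5),
    Step("Run the gel at 100 V", 95.0, 105.0),
    Step("Incubate at 37 C", 36.5, 37.5),
]
readings = [10.1, 112.0, 37.0]

log = run_protocol(protocol, readings)
flagged = steps_to_repeat(log)
print("steps to revisit:", flagged)
```

In a real system the flagged steps would go back to a planning agent that revises the next run, and the log doubles as the automated documentation. Here the “planner” is just a list of out-of-range steps.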

Superintelligence wouldn’t need humans forever.

Here’s the rub: it’s human-in-the-loop for now. Fine for safety. But scale? Labs cost money. Who’s funding AI overlords running bio experiments? VCs betting on wetware automation, that’s who.

Who Profits from AI’s Robot and Lab Fantasies?

Tie it together. Ctrl-World speeds robot loops; LabOS AI-ifies science. Both scream ‘scalable R&D’. Valley loves that.

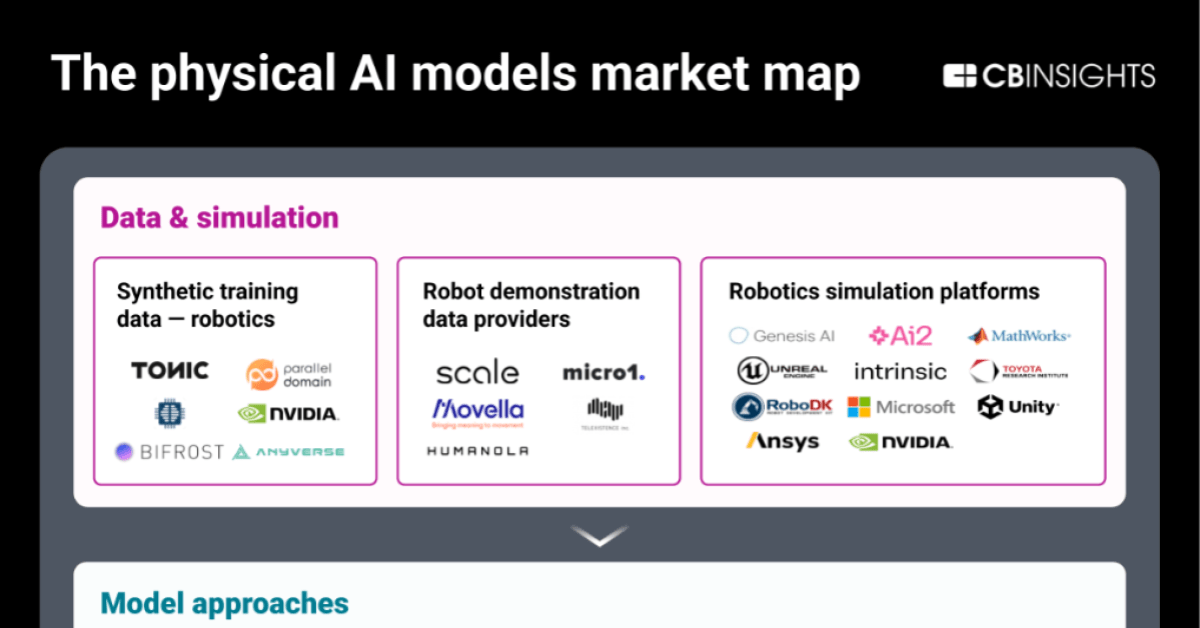

But peek behind. Compute hogs—diffusion models guzzle electrons. Data moats favor big labs. Startups? Pray for cloud credits.

A historical parallel: this mirrors the 1980s CAD/CAM revolution in manufacturing. Sims promised factories without factories. They delivered… eventually, after a few crashes along the way. Robots and labs? Same arc, but AI turbocharges it.

Bold call: In five years, 30% of robot policies train mostly in sims like this. Labs? 10% AI-directed. Money flows to infra kings—NVIDIA, AWS. Researchers get citations.

Remember when self-driving was ‘solved in sim’ circa 2015? Still waiting. Temper the hype.

And the auditors bit from Import AI? Jack Clark’s nodding to AI safety checks, but that’s tomorrow’s worry. Today, dreams rule.

Exciting times. Just don’t bet the farm.

Will Robot Dreams Replace Real Labs?

No. Not yet. Sims are great for iteration but suck at edge cases: spills, wear, Murphy’s law. Ctrl-World’s 44.7% boost? Impressive, but from a low baseline. The real win: hybrid loops.

LabOS? Humans stay essential—sterile technique ain’t simulated. AI accelerates, doesn’t obsolete.

Final thought: Turing’d smirk. Machines dreaming machines. Progress.

Frequently Asked Questions

What is Ctrl-World?

Ctrl-World is a generative world model for robots, letting them simulate manipulation tasks from text prompts to test and improve policies without physical hardware.

How does LabOS help AI run experiments?

LabOS combines AI agents for planning and design with XR interfaces guiding humans through wet-lab execution, feeding results back for iterative science.

Can robot world models fix sim-to-real gaps?

They narrow it via better video consistency and targeted data, but physics quirks persist—expect hybrid training for production robots.