It’s unraveling a maze. Not some kid’s puzzle — a brutal, grid-twisting nightmare from the ARC-AGI benchmark that leaves GPT-4 and Claude gasping. But this solver? A mere 7-million-parameter speck called the Tiny Recursion Model (TRM). Looping. Refining. Winning.

Zoom out. We’ve been chasing AI intelligence by stacking parameters like Jenga towers, billions high. Bigger models, right? Smarter brains. Except they’re not — not for real reasoning. Enter TRM, a radical rethink: trade space for time. Let a tiny network iterate, self-correct, think deeper. And bam — it outsmarts giants 10,000 times its size.

Here’s the electric part. This isn’t just another benchmark flex. It’s evidence that intelligence might look more like a human mind than a data-hoarding behemoth: recursive loops over rote prediction.

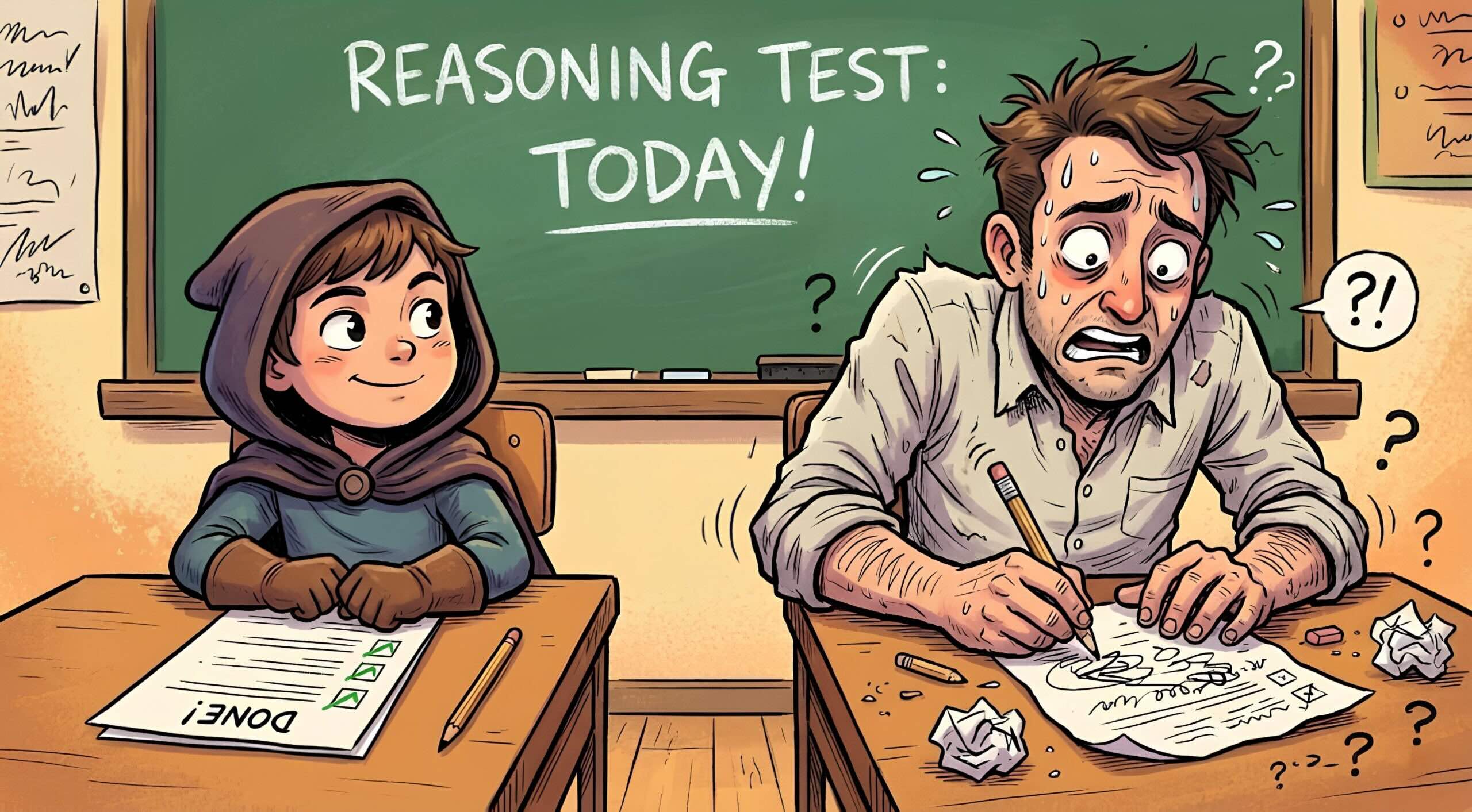

Why Do Reasoning Giants Like ChatGPT Keep Failing?

Brittle. That’s the word. Picture GPT-4 as a downhill skier, barreling forward token-by-token. One slip early? Crash. No backtrack, no mull-it-over. It’s all next-token prediction, even in fancy Chain-of-Thought setups.

These models are primarily trained on the Next-Token-Prediction (NTP) objective. They process the prompt through their billion-parameter layers to predict the next token in a sequence. Even when they use “Chain-of-Thought” (CoT)… they are again just predicting a word, which, unfortunately, is not thinking.
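To make the downhill-skier picture concrete, here is a toy sketch of greedy next-token decoding. The `BIGRAMS` table, its tokens, and its probabilities are all made up for illustration; no real model is this small. What it shows is the shape of the loop: each step commits to the likeliest next token and never revisits an earlier choice.

```python
# Hypothetical toy "model": maps the last token to candidate next tokens
# with invented probabilities. Not taken from any real LLM.
BIGRAMS = {
    "<s>": {"turn": 0.6, "go": 0.4},
    "turn": {"left": 0.55, "right": 0.45},
    "go": {"straight": 1.0},
    "left": {"<dead-end>": 1.0},
    "right": {"<exit>": 1.0},
    "straight": {"<exit>": 1.0},
}


def greedy_decode(start="<s>", max_steps=5):
    """Pick the most likely next token at every step; never revise a choice."""
    tokens = [start]
    for _ in range(max_steps):
        options = BIGRAMS.get(tokens[-1])
        if not options:  # no known continuation: stop generating
            break
        tokens.append(max(options, key=options.get))
    return tokens


path = greedy_decode()
print(path)
```

In this toy table the slightly likelier "left" leads to a dead end, and the loop has no mechanism to go back and try "right". That is the brittleness in miniature.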

Spot on. And the second killer flaw? Memorization masquerading as logic. Throw a novel puzzle their way — poof. Billions of params turn to mush. ARC-AGI exposes it: these behemoths adapt known tricks, not invent from scratch.

But TRM? It reasons. For real.

Think evolution here. This is my hot take, absent from the original buzz. Brains aren’t parameter monsters; they’re efficient loops, neurons firing recursively, building insights step-by-step. TRM echoes that primal efficiency, potentially kickstarting AGI without melting data centers. Bold? You bet. But history nods: RNNs fell out of fashion once, and now recursion’s roaring back.

How Does a Tiny Model Loop Its Way to Victory?

Simple setup, genius execution. TRM juggles a “trinity” of states: the unchangeable question (x, like your maze grid), the current hypothesis (y, its best guess so far), and the latent reasoning (z, the brain’s scratchpad).

No towering stack of transformer layers. Instead, one wee network, two layers deep, runs in cycles.

First, pure thinking: n sub-steps that update z. Each one scans x, the current y, and the old z. Spots gaps, contradictions. Refines thoughts without touching the answer yet. Pure abstraction.

Then, the answer tweak: use the refreshed z to upgrade y. Feed that back. Repeat the whole cycle T times.
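The two-phase cycle above can be sketched in a few lines of plain Python. Treat this as a minimal illustration under stated assumptions, not the paper's implementation: the weights are random and untrained, the three states are combined by element-wise addition before entering the network, and `TinyNet`, `recursive_refine`, the vector size, and the defaults `n=6`, `T=3` are all names and values chosen for this example.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Random weight matrix with n_out rows of n_in weights (no bias, for brevity)."""
    scale = 1.0 / math.sqrt(n_in)
    return [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]


def matvec(w, v):
    """Multiply matrix w (list of rows) by vector v."""
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def add(*vecs):
    """Element-wise sum of equally sized vectors."""
    return [sum(vals) for vals in zip(*vecs)]


class TinyNet:
    """Two-layer network: tanh hidden layer followed by a linear layer."""

    def __init__(self, dim, hidden, rng):
        self.w1 = make_layer(dim, hidden, rng)
        self.w2 = make_layer(hidden, dim, rng)

    def __call__(self, v):
        return matvec(self.w2, [math.tanh(h) for h in matvec(self.w1, v)])


def recursive_refine(x, y, z, net, n=6, T=3):
    """Run T cycles: n scratchpad updates, then one answer update per cycle."""
    history = []
    for _ in range(T):
        for _ in range(n):
            # Thinking: refresh z from the question, the current guess, and old z.
            z = net(add(x, y, z))
        # Answer tweak: revise y from the old guess and the refreshed scratchpad.
        y = net(add(y, z))
        history.append(list(y))
    return y, z, history


rng = random.Random(0)
dim = 4
net = TinyNet(dim, hidden=8, rng=rng)  # one small network reused for both phases
x = [1.0, 0.0, 1.0, 0.0]               # the fixed question
y, z, history = recursive_refine(x, [0.0] * dim, [0.0] * dim, net)
print(len(history), y)
```

With untrained weights the numbers mean nothing; the point is the control flow. The question `x` is never modified, `z` is rewritten `n` times per cycle, and `y` changes only once per cycle.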

It’s a feedback frenzy. Tiny network, massive depth through iteration. 7M params, but reasoning chains longer than any one-shot giant.
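A quick back-of-the-envelope calculation shows how iteration buys depth. The helper below is hypothetical, and the values plugged in (2 layers, n = 6, T = 3) are illustrative settings assumed for this example: each of the T cycles runs the network n times for thinking plus once for the answer update.

```python
def effective_depth(layers, n, T):
    """Layers applied in sequence: T cycles of (n thinking passes + 1 answer pass)."""
    return layers * (n + 1) * T


depth = effective_depth(layers=2, n=6, T=3)
print(depth)  # 42
```

So a two-layer network behaves, in terms of sequential computation, like a much deeper one, while the parameter count stays the same.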

And efficiency? Laughable compute footprint. While GPT-4 guzzles energy for a single pass, TRM ponders cheaply, scaling smarts with time, not size.

Can This 10,000x Smaller Brain Really Outsmart ChatGPT?

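Numbers don’t lie. On ARC-AGI, the ultimate novel-reasoning test, the TRM paper reports 45% test accuracy on ARC-AGI-1 and 8% on ARC-AGI-2, ahead of most of the mainstream LLMs it is compared against. DeepSeek R1? o3-mini? Gemini 2.5 Pro? All humbled.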

Why? No snowball errors. It self-corrects mid-loop. No memorization crutch; pure deduction from primitives.

Skeptics whine: “Benchmarks! Real-world?” Fair. But imagine devs deploying this: edge devices solving logistics puzzles, robots navigating unseen terrains — all offline, sipping battery.

Corporate spin check — papers hype architectures, but overlook training quirks. TRM’s initialized randomly, learns via iteration. Yet, is it general? Early days. Still, this feels like the PC vs. mainframe pivot: small, iterative machines democratizing power.

Picture apps exploding. Sudoku solvers in your watch. Custom theorem-provers for scientists. Hell, personalized tutors iterating on your weak spots.

The wonder hits: AI as a platform shift, yes — but lean, recursive platforms. No more parameter arms race. We’re entering the era of thinking machines that ponder, not parrot.

And the pace? Accelerating. Jolicoeur-Martineau’s 2025 preprint is sparking a wave. Open-source it, and watch bedrooms birth AGI rivals.

But wait — pitfalls. Fixed loop counts (n, T) might choke on ultra-complexity. Scaling laws? Unclear if 100M-param TRM variants crush everything. Training data still matters; garbage in, loops out.
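One way to picture an alternative to a fixed loop count is to keep cycling until the answer stops moving. The sketch below is a hypothetical illustration, not something the article attributes to TRM: `refine_until_stable`, its tolerance, and the stand-in `halfway` update (which just moves the guess halfway toward a fixed target) are all assumptions made for this example.

```python
def refine_until_stable(y, step, max_cycles=16, tol=1e-3):
    """Apply `step` until the answer moves less than `tol` or the budget runs out."""
    for cycle in range(1, max_cycles + 1):
        new_y = step(y)
        change = max(abs(a - b) for a, b in zip(new_y, y))
        y = new_y
        if change < tol:
            break
    return y, cycle


# Stand-in for one full think-then-answer cycle (purely illustrative).
target = [1.0, -2.0, 0.5]


def halfway(y):
    return [a + 0.5 * (t - a) for a, t in zip(y, target)]


y, cycles = refine_until_stable([0.0, 0.0, 0.0], halfway)
print(cycles, y)
```

Easy inputs would exit early and hard ones would use the full budget, but `max_cycles` still caps the work, so the pitfall is softened rather than removed.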

Thrilling uncertainty.

What Happens When Recursion Rules AI?

Bold prediction: by 2027, hybrid TRM-LLM agents dominate. Giants for knowledge, tinies for logic. Data centers shrink; intelligence explodes.

Developers, rejoice — code this in PyTorch tomorrow. It’s not black-box wizardry; transparent loops you tweak.

The future? Electric. AI, untethered from size, thinking free.

Frequently Asked Questions

What is the Tiny Recursion Model?

A 7M-parameter network that solves reasoning puzzles by iteratively updating thoughts and hypotheses in loops, beating giants like GPT-4.

How does TRM outperform larger models like ChatGPT?

By trading parameter count for recursive self-correction — no brittle token prediction, just deepening logic over steps.

Will tiny recursive models replace giant LLMs?

Not fully, but they’ll hybridize: LLMs for breadth, TRMs for depth — ushering efficient, edge-ready AI.