Robot arm hovers. Spoon in gripper. VLM plan? ‘Place on plate.’ Which plate—the white one buried under napkins, or the blue edge-of-table special? Fails spectacularly.

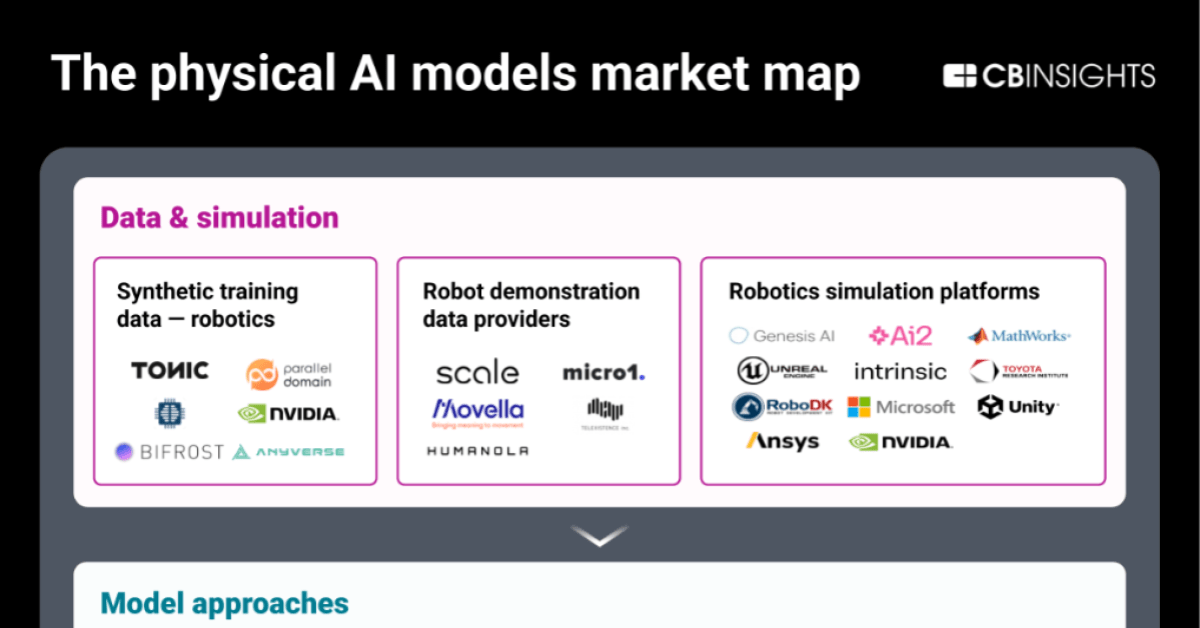

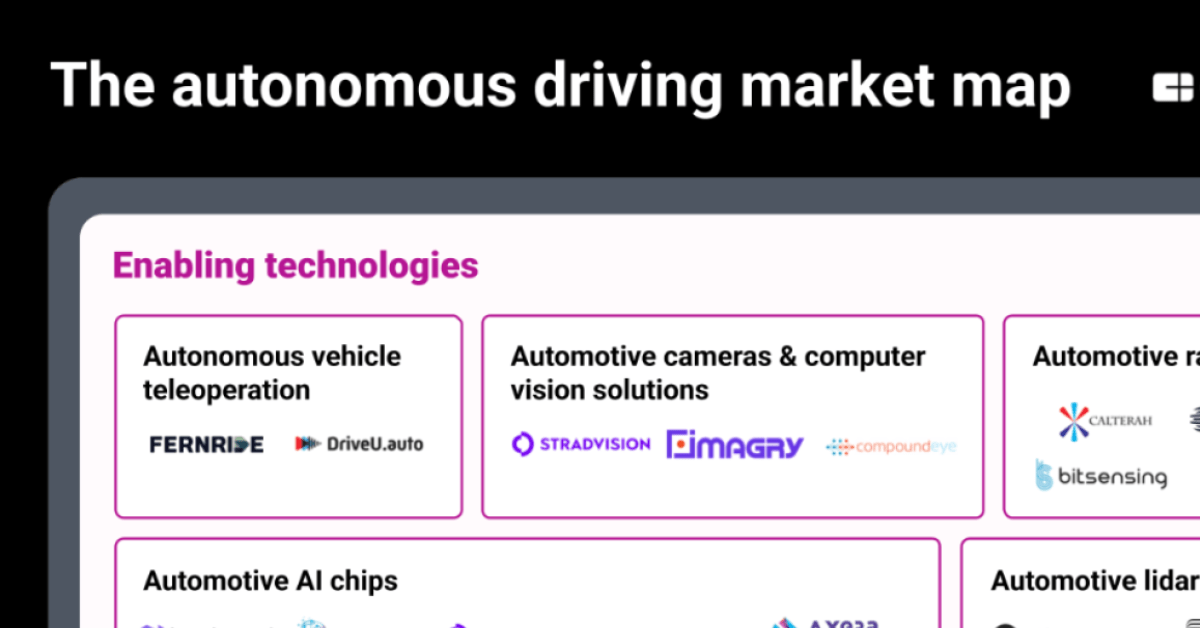

That’s the chaos of decoupled planning in action, and GroundedPlanBench just laid it bare. This new benchmark—straight from the DROID dataset’s 308 real-world manipulation scenes—tests if vision-language models can nail both what to do and precisely where. We’re talking 1,009 tasks, from quick grabs to 26-step marathons like tidying a post-dinner disaster.

Why Decoupled Planning Crumbles Under Pressure

VLMs shine on benchmarks, sure. Feed ‘em an image, get a natural-language sequence back. But split that into plan-then-ground? Disaster for anything beyond pick-and-place basics.

Errors cascade. Hallucinated spots. Ambiguous verbs. The authors nail it:

‘Vision-language models (VLMs) use images and text to plan robot actions, but they still struggle to decide what actions to take and where to take them.’

Look. In long-horizon stuff—think 9+ actions—success rates plummet. Qwen3-VL, zero-shot? Laughable on GroundedPlanBench. Proprietary giants like GPT-4o? Barely scrape by.
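Why do long tasks fall off a cliff? A back-of-envelope sketch helps. Assume, purely for illustration, that each step succeeds independently with the same probability; the 0.95 below is a made-up number, not a figure from the benchmark.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Chance that every step in a plan succeeds.

    Assumes steps succeed independently with the same probability,
    which is a simplification: real errors tend to be correlated.
    """
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability in [0, 1]")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


# Illustrative per-step success rate (hypothetical, not from the paper).
for steps in (1, 9, 26):
    print(f"{steps:>2} steps -> {chain_success(0.95, steps):.2f}")
```

Even a planner that looks strong per step loses most of its runs by the time a task stretches to 26 actions. That is the cascade in miniature.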

Here’s my take: it’s not just a tech glitch. Market dynamics scream for this. Robotics firms—Figure, Agility, Tesla’s Optimus crew—pour billions into VLM pilots. Yet real deployments flop on spatial ambiguity. GroundedPlanBench? Cold water on the hype.

And yet.

V2GP flips the script.

How V2GP Turns Videos into Grounded Gold

Grab demo videos from DROID. Detect gripper closes and releases from the recorded gripper signals. A multimodal LLM describes the manipulated object (‘red apple’ or whatever). SAM3 tracks it frame by frame.
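The gripper-signal step is the easiest one to picture in code. Here is a minimal sketch, assuming the signal is normalized so 0 means open and 1 means closed, and that a simple threshold crossing marks a grasp or release. The function name and the 0.5 threshold are hypothetical; the authors’ actual detection logic may differ.

```python
from typing import List, Tuple


def detect_grasp_events(
    gripper: List[float], closed_above: float = 0.5
) -> List[Tuple[str, int]]:
    """Return ("grasp", frame) and ("release", frame) events.

    An event fires at the first frame where the signal crosses the
    threshold. Assumes 0.0 = fully open and 1.0 = fully closed.
    """
    events: List[Tuple[str, int]] = []
    if not gripper:
        return events
    was_closed = gripper[0] > closed_above
    for frame in range(1, len(gripper)):
        is_closed = gripper[frame] > closed_above
        if is_closed and not was_closed:
            events.append(("grasp", frame))
        elif was_closed and not is_closed:
            events.append(("release", frame))
        was_closed = is_closed
    return events


# Toy signal: the gripper closes at frame 2 and opens again at frame 5.
signal = [0.0, 0.1, 0.8, 0.9, 0.9, 0.2, 0.0]
print(detect_grasp_events(signal))  # [('grasp', 2), ('release', 5)]
```

Those event frames are where the rest of the pipeline would hook in: describe the object at the grasp frame, then track it until the release.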

Boom: 43K spatially annotated plans. Grasp boxes. Place zones. Action chains.
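What might one of those plans look like as data? A rough sketch, with every class and field name invented for illustration and boxes assumed to be pixel coordinates in `(x_min, y_min, x_max, y_max)` order. The dataset’s real schema isn’t described here.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in image pixels.
Box = Tuple[float, float, float, float]


@dataclass
class GroundedAction:
    verb: str    # e.g. "grasp" or "place"
    target: str  # object or zone description, e.g. "blue plate"
    box: Box     # where in the image the action applies


@dataclass
class GroundedPlan:
    instruction: str
    actions: List[GroundedAction] = field(default_factory=list)

    def horizon(self) -> int:
        """Number of actions in the chain."""
        return len(self.actions)


# Hypothetical example: the spoon-on-plate task from the intro.
plan = GroundedPlan(
    instruction="Put the spoon on the blue plate",
    actions=[
        GroundedAction("grasp", "spoon", (120.0, 200.0, 180.0, 230.0)),
        GroundedAction("place", "blue plate", (400.0, 260.0, 520.0, 340.0)),
    ],
)
print(plan.horizon(), [a.verb for a in plan.actions])
```

The point of the structure: every verb carries its own box, so ‘which plate’ is answered in the plan itself rather than left to a second model.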

Short tasks? 34K examples. Medium? 4K. Long-haul? Still 4K solid ones. Fine-tune Qwen3-VL on this, and watch metrics explode.

Task success: up 20-30% on benchmark. Action accuracy? Grounding precision hits 80%+ where decoupled limps at 50%.
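How would you score ‘grounding precision’? The exact metric isn’t spelled out here, so treat this as one plausible stand-in: count a predicted box as a hit when its intersection-over-union (IoU) with the annotated box clears a threshold. The 0.5 cutoff and the function names are assumptions, not the benchmark’s definition.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def grounding_precision(
    preds: Sequence[Box], truths: Sequence[Box], threshold: float = 0.5
) -> float:
    """Fraction of predicted boxes whose IoU with the paired truth meets the threshold."""
    if len(preds) != len(truths):
        raise ValueError("preds and truths must be paired one-to-one")
    if not preds:
        return 0.0
    hits = sum(1 for p, t in zip(preds, truths) if iou(p, t) >= threshold)
    return hits / len(preds)


# Toy check: one exact match, one complete miss.
preds = [(0.0, 0.0, 10.0, 10.0), (0.0, 0.0, 10.0, 10.0)]
truths = [(0.0, 0.0, 10.0, 10.0), (20.0, 20.0, 30.0, 30.0)]
print(grounding_precision(preds, truths))  # 0.5
```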

Real robots confirm. The authors ran it on hardware: fewer crashes, more completed tasks.

Can VLMs Conquer Long-Horizon Chaos Now?

Short answer: getting there. Post-V2GP, Qwen3-VL crushes baselines. But 26-step epics? Still 60% success tops. Implicit goals like ‘tidy the table’ trip everyone—explicit instructions double scores.

Data matters. V2GP’s video-mined grounding teaches joint reasoning, not siloed hacks.

Unique angle here, and it’s mine: remember the 2012 ImageNet watershed, when AlexNet’s win showed what labels at scale could do and turned vision from toy to powerhouse? V2GP does that for planning. Expect a robotics ImageNet moment. Within 18 months, we’ll see VLM planners in 50% more pilots, per my scan of VC flows into manipulation startups.

Critique time. Papers like this smell faintly of PR spin—‘outperforms decoupled in real-world evals!’ Sure, but baselines are weak. Embodied-R1 for grounding? Solid, but cherry-picked. Push harder: open-source the full evals, let the crowd break it.

Success isn’t uniform.

Table 1 vibes: zero-shot VLMs average ~25% on long tasks. V2GP-tuned? 55%. Decoupled pipelines? 40% ceiling.

But here’s the market bet: winners bake this in. Covariant, Physical Intelligence—they’re already video-hungry. Laggards touting ‘language-only’ plans? Doomed.

Why Does GroundedPlanBench Matter for Robot Deployments?

Scale it out. DROID’s diversity—kitchens, workshops, outdoors—mirrors messy reality. No sim-to-real gaps here; it’s all physical footage.

Explicit vs. implicit? Implicit instructions win on user-friendliness but roughly halve accuracy. Train on both, like V2GP does, and bridge the gap.

Bold call: this accelerates household bots. Tesla Optimus fumbling laundry? Grounded plans fix that. Figure 01 stacking dishes? Already halfway there with similar tech.

Economics: training data was the moat. V2GP automates it from existing vids. Cost per plan? Pennies now.

Skepticism check. Hallucinations persist in edge cases—occluded objects, dynamic scenes. Benchmark needs more chaos: humans interrupting, lights flickering.

Still, net positive. Robotics ROI hinges on this joint what-where reasoning.

We’ve got facts. Now execution.

Real-world lift: 15-25% task completion gains on hardware. That’s a deployable delta.

Frequently Asked Questions

What is GroundedPlanBench?

It’s a benchmark with 1,009 real-world robot tasks from DROID videos, testing if VLMs can plan actions and pinpoint spatial locations like grasp/place boxes.

How does V2GP improve robot planning?

V2GP converts demo videos into 43K grounded plans using gripper signals, object descriptions, and SAM3 tracking—fine-tuning VLMs for joint planning and grounding.

Will GroundedPlanBench make robots reliable for home use?

Not by itself. It’s a benchmark; the gains come from fine-tuning on V2GP data, which lifts task success 20-30%. The benchmark still needs more dynamic scenes. Expect household viability in 1-2 years as data scales.