Token burn is broken.

Meta’s engineers and OpenAI’s hotshots aren’t racing on code shipped or bugs squashed. Nope. They’re leaderboard warriors for tokens torched: 210 billion in one week, per reports, or 33 Wikipedias gulped and spat out. Tokenmaxxing, they call it. Sounds badass, right? Wrong. It’s a trap, structurally baking waste into agent design at the worst possible moment.

Why Are Companies Obsessed with Token Burn?

Look, tokens are cheap now—frontier models sling them like confetti. But here’s the data punch: Tyler Folkman’s experiment nails it. Simple query: three biggest cities in Utah. Raw API? 77 tokens. Fancy agent framework like LangChain’s Deep Agents? 5,983 tokens. That’s 78 times more, across seven LLM pings.

Scale to real work—a bug fix with file reads. Agent scarfs 151,120 tokens; raw API does it in 4,492. 34x bloat. Why? Scaffolding hell: 400-token system prompts, todo middleware, tool schemas bloating to 3,000+. Every tool call, sub-agent spin-up—pure token tax.
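Those multipliers are plain ratios of the reported token counts. A quick sanity check, using only the figures quoted above (the helper name `overhead_multiplier` is mine, not something from Folkman's experiment):

```python
def overhead_multiplier(agent_tokens: int, raw_tokens: int) -> float:
    """How many times more tokens the agent used than the raw API call."""
    if raw_tokens <= 0:
        raise ValueError("raw_tokens must be positive")
    return agent_tokens / raw_tokens


# Token counts as reported in this article.
utah_query = overhead_multiplier(5_983, 77)
bug_fix = overhead_multiplier(151_120, 4_492)

print(f"Utah query: {utah_query:.1f}x")  # 77.7x
print(f"Bug fix:    {bug_fix:.1f}x")     # 33.6x
```

Rounded, that is the 78x and 34x quoted above.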

Against a beefy million-token context? Meh, noise. But cram that overhead into a 14B local model with a 32K window? Boom: roughly 19% of the context is gone before your task even loads. And if execs grade on token burn? Engineers build more scaffolding. Reward the disease.
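The text doesn't say how the 19% was derived. One plausible reading, and it is only my assumption, is the roughly 6K-token agent total from the Utah query measured against a 32K window. A rough sketch of that arithmetic:

```python
def context_share(overhead_tokens: int, window_tokens: int) -> float:
    """Fraction of the context window consumed by scaffolding overhead."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    return overhead_tokens / window_tokens


# Assumption: reuse the 5,983-token agent total from the Utah query as the overhead.
OVERHEAD = 5_983

for label, window in [("32,000", 32_000), ("32,768", 32_768), ("1,000,000", 1_000_000)]:
    print(f"{label:>9}-token window: {context_share(OVERHEAD, window):.1%}")
```

Under that assumption the share lands at 18.7% for a 32,000-token window (18.3% if 32K means 32,768) and about 0.6% for a million-token window, which is why the same scaffold is noise on a frontier model and a real bite on a local one.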

One OpenAI engineer reportedly burned through 210 billion tokens in a single week — the equivalent of reading 33 Wikipedias, processed and discarded.

Gizmodo’s bullet analogy? Spot-on. But dig deeper: this echoes the 1970s software debacle. Back then, managers measured coders by lines of code. Result? Bloated spaghetti that crashed spectacularly (think IBM’s early mainframes). Token burn? Same sin, 2026 edition. My call: firms chasing this will torch $10B+ in needless compute by 2028, while efficiency rebels like Dan Woods run Qwen3.5-397B on a MacBook at 5.5 tokens/sec (20 on high-end silicon), zero API bleed.

Apples-to-apples efficiency wins.

Is Token Burn Killing Agent Adoption?

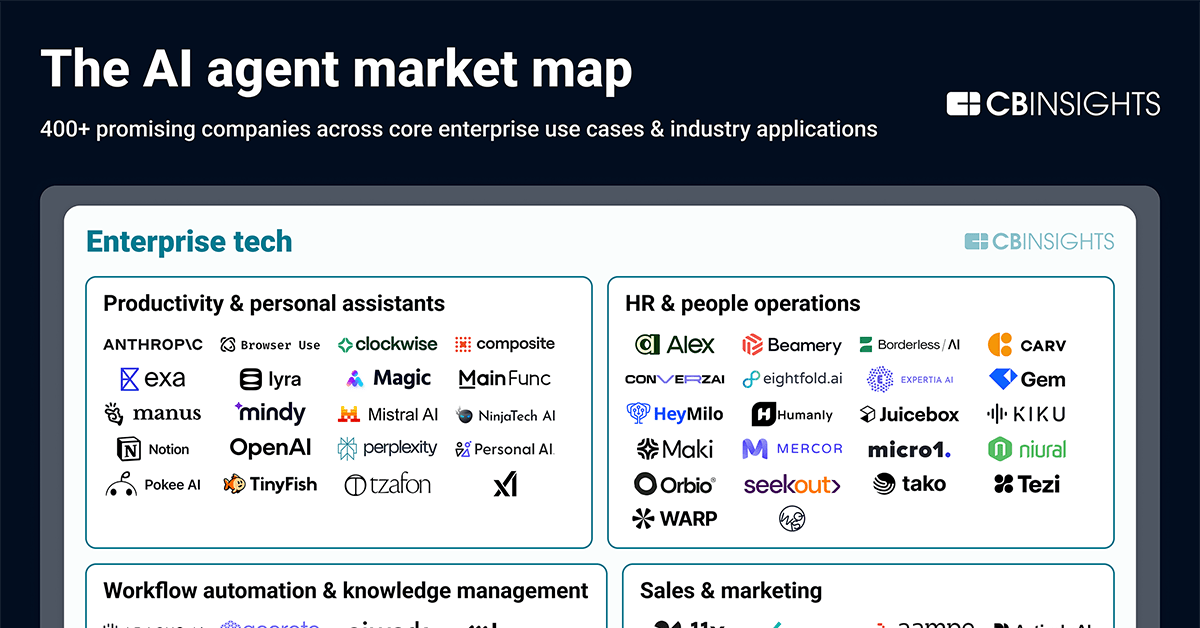

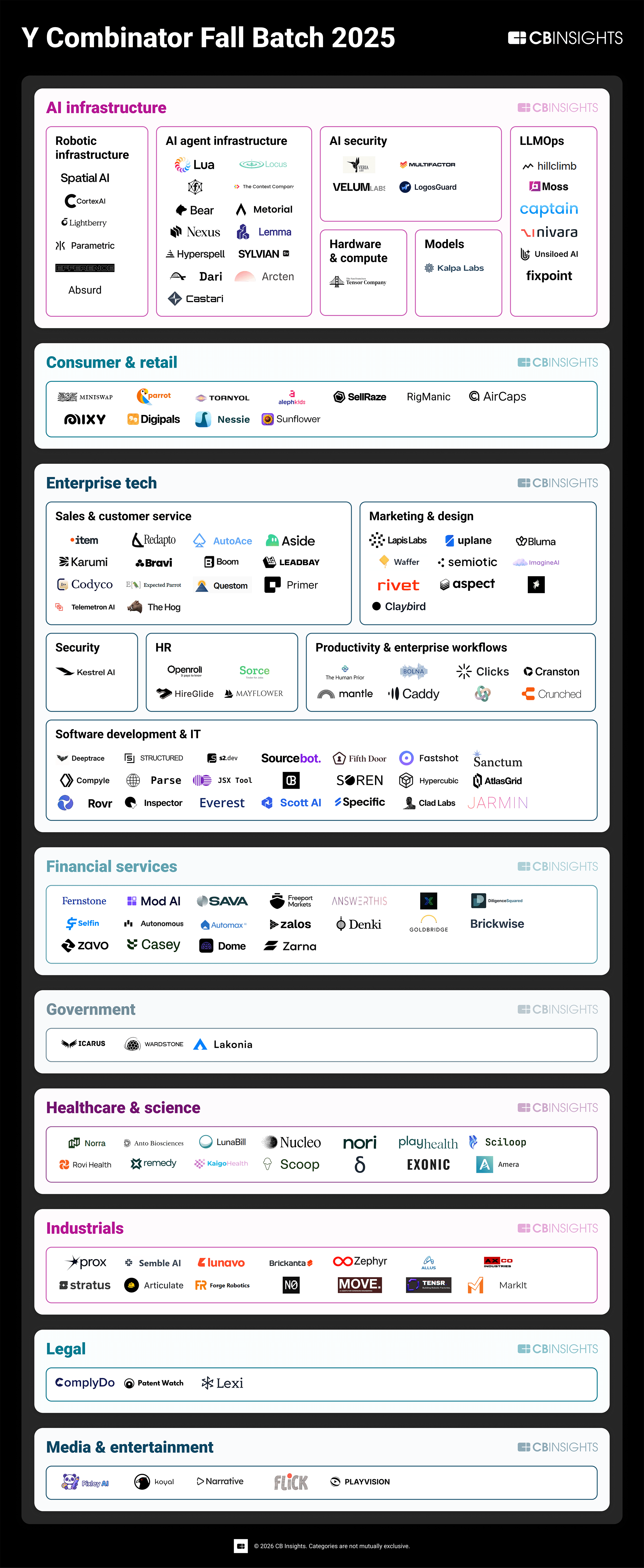

Enterprises are all-in—Karpathy hasn’t coded since December, just herds agents. OpenCode? 120K GitHub stars, 5M users monthly. Not fringe. Yet eval tools lag hard. Tokens? Easy log. Actual wins? Crickets.

What should we track? Agent Efficiency Ratio: Tasks Done / (Tokens × Revisions). Or basics:

| Metric | What it measures |
|---|---|
| Task Completion Rate | Did it finish? |
| First-Shot Success | Corrections needed? |
| Tokens per Task | Cost per win |
| Revision Ratio | Rework waste |

Burn 10K tokens for a shippable PR? Gold. 2K tokens needing three fixes? Trash—hidden failure tax murders ROI.
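Here is a minimal sketch of how those metrics could be computed from a task log. The `TaskRun` fields and the metric definitions are my own working assumptions, not any eval tool's API. Two further assumptions are flagged in the comments: revisions are floored at 1 so a clean first-shot run doesn't divide by zero, and each fix on the 2K-token run is assumed to cost another 2K tokens.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    """One agent task. Field names are hypothetical."""
    completed: bool  # ended in a shippable result
    tokens: int      # total tokens burned, rework included
    revisions: int   # corrections needed after the first attempt


def basic_metrics(runs: list[TaskRun]) -> dict[str, float]:
    """The four table metrics, under assumed definitions."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    done = sum(r.completed for r in runs)
    return {
        "task_completion_rate": done / n,
        "first_shot_success": sum(r.completed and r.revisions == 0 for r in runs) / n,
        "tokens_per_task": sum(r.tokens for r in runs) / done if done else float("inf"),
        "revision_ratio": sum(r.revisions for r in runs) / n,
    }


def efficiency_ratio(tasks_done: int, tokens: int, revisions: int) -> float:
    """Tasks Done / (Tokens x Revisions).

    Assumption: revisions is floored at 1 so a zero-revision run
    doesn't divide by zero; the article doesn't cover that case.
    """
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return tasks_done / (tokens * max(revisions, 1))


runs = [
    TaskRun(completed=True, tokens=10_000, revisions=0),
    # Hypothetical: 2K first attempt plus three fixes at 2K tokens each.
    TaskRun(completed=True, tokens=2_000 + 3 * 2_000, revisions=3),
]
print(basic_metrics(runs))

clean_pr = efficiency_ratio(1, 10_000, 0)
reworked_pr = efficiency_ratio(1, 2_000 + 3 * 2_000, 3)
print(clean_pr > reworked_pr)  # True
```

One caveat the sketch exposes: if the rework tokens never get logged, the 2K run scores `1 / (2_000 * 3)` and beats the clean PR. The ratio only punishes rework when Tokens means total tokens, fixes included.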

Dan Woods proves the fix: Apple’s LLM-in-a-Flash technique on Qwen3.5-397B. It streams experts from SSD lazily, with only 17B params active per token. MacBook with 48GB? 5.5 tokens/sec. High-end Apple Silicon? 20 tokens/sec. Free, local, frontier-grade. The architecture is sparse: not all weights fire per token. Agents need this mindset: trim the scaffold, don’t pad it.
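To make the lazy-loading idea concrete, here is a toy sketch of on-demand expert loading with a small in-memory cache. It is not Woods's code or Apple's implementation: the expert count, the top-k value, the router, and the fake weights are all hypothetical stand-ins.

```python
from functools import lru_cache

NUM_EXPERTS = 8      # hypothetical; real MoE models have far more
TOP_K = 2            # experts activated per token (hypothetical)
loads_from_disk = 0  # counts simulated SSD reads


@lru_cache(maxsize=4)  # only a few experts stay resident in memory
def load_expert(expert_id: int) -> tuple[float, ...]:
    """Stand-in for reading one expert's weights from SSD."""
    global loads_from_disk
    loads_from_disk += 1
    return tuple(float(expert_id) for _ in range(4))  # fake weights


def route(token_id: int) -> list[int]:
    """Toy deterministic router: picks TOP_K experts for a token."""
    return [(token_id + i) % NUM_EXPERTS for i in range(TOP_K)]


def forward(token_id: int) -> float:
    # Only the routed experts get loaded; the rest never leave disk.
    return sum(sum(load_expert(e)) for e in route(token_id))


for tok in [0, 0, 1, 0]:
    forward(tok)

print(f"disk loads: {loads_from_disk}")    # 3, not 8
print(f"active share: {17 / 397:.1%}")     # 4.3% of params per token
```

Four tokens trigger three disk reads here because repeated experts hit the cache. The same logic is what makes 17B active out of 397B total workable on a laptop: pay only for what the current token touches.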

But hype machines spin token volume as “scale.” Meta’s PR glosses this as innovation; it’s misaligned incentives, plain and simple. Companies nailing outcome metrics first? They’ll architect lean agents and justify budgets when bosses grill them with “what’d it do?”

The irony stings.

Those leaderboard chasers burn for show. Eval builders—task rates, dollar-per-outcome—will expose the farce. Battlefield truth: tally territory, not shells.

Scale hits now. No metrics? Proxies rule, waste reigns. Flip it: measure value, build winners. Or watch rebels like Woods eat your lunch, one efficient token at a time.

Håkon Åmdal’s AgentLair? Claude agent runs code, outreach, ops autonomously. Bet they’re not tokenmaxxing.

Why Does Better Measurement Matter for Enterprises?

Market dynamics scream urgency. Agent tooling is exploding (LangChain, etc.), but overhead multipliers (78x!) kill margins at scale. Enterprises deploying thousands of agents? Token bills balloon while output crawls.

Prediction: By mid-2026, outcome-metric leaders snag 40% agent market share. They’ll prune bloat, hit 10x efficiency vs. token junkies. Laggards? Compute Armageddon.

Fixes exist. Stream weights. Sparse scaffolds. Eval suites tracking revisions, not raw throughput.

Don’t count bullets. Claim ground.

Frequently Asked Questions

What is token burn in AI agents? Token burn measures tokens (input/output units) an AI agent consumes during tasks. It’s easy to track but ignores output quality—leading to wasteful designs.

Why is token burn a bad productivity metric? It incentivizes bloated scaffolding over efficient task completion. Agents using 78x more tokens for simple queries highlight the flaw; real wins like shipped code matter more.

How to measure AI agent productivity better? Use Task Completion Rate, First-Shot Success, Tokens per Task, and Revision Ratio. Agent Efficiency Ratio = Tasks Done / (Tokens × Revisions) captures value over volume.